Introduction

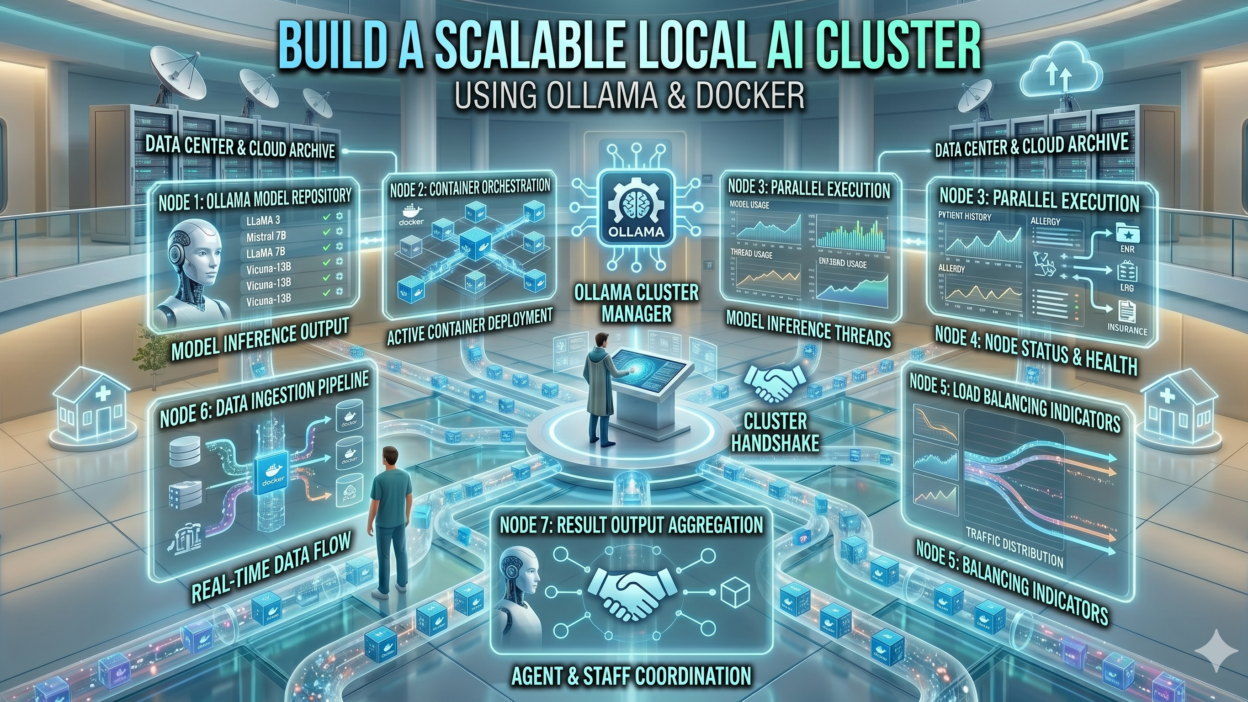

TL;DR: Running large language models locally has become a serious priority for developers, researchers, and enterprise teams. Data privacy concerns push many organizations away from cloud-based AI APIs. Infrastructure costs motivate others to explore self-hosted solutions. Ollama and Docker together address both of these needs.

Ollama simplifies local LLM deployment dramatically. It wraps complex model setup into a clean command-line interface. Docker adds the portability and scalability that production environments demand. Together, Ollama and Docker create a foundation for a local AI cluster that scales without sacrificing control or performance.

This guide walks you through building that cluster from scratch. You will understand why this combination works so well. You will learn the architecture behind a scalable local AI setup. You will get hands-on configuration steps that take you from a single machine to a multi-node cluster. By the end, you will have a production-ready local AI infrastructure built on Ollama and Docker.

Why Ollama and Docker Make a Powerful AI Infrastructure Pair

Ollama handles model management with remarkable elegance. It pulls models from a central registry, similar to how Docker pulls container images. A single command downloads and runs a model like Llama 3, Mistral, or Gemma. No manual weight file management is needed. No Python environment conflicts arise. Ollama handles all of that internally.

Docker contributes the infrastructure layer that Ollama lacks on its own. It wraps Ollama inside a container that runs identically on any machine. A developer laptop, a bare-metal server, and a cloud VM all run the same containerized Ollama instance without configuration drift. This reproducibility is essential when building a cluster.

Ollama and Docker complement each other because each solves a different problem. Ollama solves model management. Docker solves environment consistency and orchestration. Combining them produces a system greater than either tool alone. Many teams adopt this stack because it removes much of the friction that used to make local LLM deployment painful.

Key Advantages of Running Ollama Inside Docker

Running Ollama inside a Docker container offers several concrete benefits. Resource allocation becomes precise. Docker lets you assign specific CPU cores, RAM limits, and GPU access to each Ollama container through docker run options such as --cpuset-cpus, --memory, and --gpus. Multiple model instances run on the same host without competing for the same cores or memory. Each container gets exactly the resources you allocate to it.

Container isolation also improves security. Each Ollama instance runs in its own sandboxed environment. A crash in one container does not affect others. Model files stay inside the container’s volume. Host system files remain untouched. This clean separation matters deeply in production environments where stability is non-negotiable.

Version management gets easier with Docker too. Rolling back to a previous Ollama version means pulling the previous Docker image. Testing a new Ollama release happens in a separate container without disturbing the production environment. Teams iterate confidently because the risk of breaking things drops significantly.

Understanding the Architecture of a Local AI Cluster

A local AI cluster built on Ollama and Docker follows a straightforward architecture. At the base sits one or more host machines. Each machine runs a Docker engine. Docker containers on each machine host individual Ollama instances. These instances communicate through a shared network that Docker manages automatically.

A load balancer sits in front of the Ollama instances and distributes incoming inference requests across available nodes. With a least-connections policy, when one Ollama instance is busy processing a large prompt, new requests go to a less busy instance instead. Each Ollama instance also queues excess requests itself, up to the limit set by OLLAMA_MAX_QUEUE, and rejects requests beyond that limit rather than overloading the node.
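Routing new work to the least busy instance is usually called least-connections balancing. The Python sketch below shows only the selection rule, under the assumption of a single in-process client tracking its own in-flight requests; the class name LeastBusyRouter and the node URLs are hypothetical, and this is not how Nginx or Traefik implement the policy internally.

```python
import threading
from contextlib import contextmanager


class LeastBusyRouter:
    """Hypothetical helper: pick the node with the fewest in-flight requests."""

    def __init__(self, nodes):
        if not nodes:
            raise ValueError("need at least one node")
        # Node URL -> number of requests currently being served by that node.
        self._in_flight = {node: 0 for node in nodes}
        self._lock = threading.Lock()

    def acquire(self):
        # min() returns the first minimal key, so ties go to the earliest node.
        with self._lock:
            node = min(self._in_flight, key=self._in_flight.get)
            self._in_flight[node] += 1
            return node

    def release(self, node):
        with self._lock:
            self._in_flight[node] -= 1

    def snapshot(self):
        # Copy of the current per-node load, for logging or tests.
        with self._lock:
            return dict(self._in_flight)

    @contextmanager
    def route(self):
        # Usage: `with router.route() as node:` then send the request to `node`.
        node = self.acquire()
        try:
            yield node
        finally:
            self.release(node)
```

In Nginx, the least_conn directive inside an upstream block applies the same rule at the proxy layer.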

Single-Node vs Multi-Node Cluster Design

A single-node setup runs multiple Ollama containers on one powerful machine. This works well for teams with a single high-memory GPU workstation. Multiple models run simultaneously in separate containers. The load balancer distributes requests across these local containers. A single-node cluster handles moderate concurrent request volumes without complexity.

A multi-node setup spans Ollama instances across several machines. Each machine contributes its CPU, RAM, and GPU capacity to the cluster pool. Docker Swarm or Kubernetes manages container scheduling across nodes. This horizontal scaling handles high concurrent request volumes. Enterprise teams and research labs with serious throughput requirements need multi-node designs.

Network Topology for Ollama Cluster Nodes

Every Ollama container in the cluster listens on port 11434 inside the container. When several containers share a host, each one is published on a different host port (11434, 11435, and so on). A load balancer running on the same Docker network can skip the host ports entirely and reach each container by its service name on port 11434. Nginx and Traefik both work well as load balancers for Ollama and Docker clusters.

Container networking uses Docker’s overlay network driver for multi-node setups. Overlay networks span physical machine boundaries. Containers on different hosts communicate as if they share the same local network. This abstraction simplifies cluster configuration significantly. Developers write one network configuration that works across all nodes.

Prerequisites and System Requirements for Your Ollama and Docker Cluster

Hardware Requirements

Hardware selection directly determines cluster performance. Plan on at least 16 GB of RAM per node for 7-billion parameter models and 32 GB for 13-billion parameter models; Ollama's README lists 8 GB and 16 GB respectively as the bare minimum. Larger models like Llama 3 70B require 64 GB or more depending on quantization level. Plan your node hardware around the models you intend to serve.

GPU acceleration transforms inference speed dramatically. NVIDIA GPUs with CUDA support work natively with Ollama. An RTX 3090 or RTX 4090 handles most 7B and 13B models at fast inference speeds. A cluster of machines each with a mid-range NVIDIA GPU outperforms a single machine with a CPU-only setup by a wide margin.

Storage capacity matters for model files. A 4-bit quantized 7B model takes about 4 GB, a 4-bit 70B model about 40 GB, and a full-precision (FP16) 70B model roughly 140 GB. A cluster serving multiple models simultaneously needs several hundred gigabytes of dedicated model storage, especially when each Ollama container keeps its own copy in its own volume. Fast NVMe SSDs reduce model load times significantly.
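The sizes above follow from parameter count times bits stored per weight. The sketch below assumes GGUF's Q4_0 and Q8_0 formats, which store one 16-bit scale per block of 32 weights (4.5 and 8.5 bits per weight on average), and ignores file metadata, the KV cache, and runtime overhead, so treat its output as a lower bound rather than a sizing guarantee. The function name weights_gb is hypothetical.

```python
# Average bits stored per weight. FP16 is exact; the Q4_0/Q8_0 figures assume
# GGUF block quantization with one 16-bit scale per block of 32 weights.
BITS_PER_WEIGHT = {
    "fp16": 16.0,
    "q8_0": 8.5,  # (32 * 8 + 16) / 32
    "q4_0": 4.5,  # (32 * 4 + 16) / 32
}


def weights_gb(params_billion: float, quant: str) -> float:
    """Approximate size of the model weights alone, in GB (10**9 bytes)."""
    bits = BITS_PER_WEIGHT[quant]
    total_bytes = params_billion * 1e9 * bits / 8
    return total_bytes / 1e9


# Print a small sizing table for common model sizes.
for params in (7, 13, 70):
    for quant in ("q4_0", "q8_0", "fp16"):
        print(f"{params:>3}B {quant:>5}: {weights_gb(params, quant):6.1f} GB")
```

Real downloads differ somewhat, for example because some tensors are kept at higher precision, and the KV cache grows with context length on top of these figures.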

Software Prerequisites

Install Docker Engine version 24 or higher on each cluster node. Docker Compose version 2.20 or higher handles multi-container orchestration on single nodes. For multi-node clusters, Docker Swarm or Kubernetes handles cross-node scheduling. Verify Docker installation with the docker version command before proceeding.

On NVIDIA hardware, the NVIDIA Container Toolkit is mandatory for GPU-accelerated Ollama containers. This toolkit lets Docker containers access the host machine’s NVIDIA GPU hardware. Install it using the official NVIDIA documentation for your operating system. Test GPU access inside a container, for example by running nvidia-smi in a throwaway container started with --gpus all, before building the full cluster stack.

Nginx or Traefik serves as the cluster load balancer. Nginx is configured through a static config file and is simpler to set up for basic load balancing. Traefik can discover containers and read routing rules from Docker labels, which suits clusters that add and remove nodes frequently.

Step-by-Step Guide to Setting Up Ollama with Docker

Pull the Official Ollama Docker Image

Start by pulling the official Ollama Docker image from Docker Hub. Open a terminal on your cluster node and run the pull command. The image contains everything Ollama needs to run, including the model management system and the REST API server.

docker pull ollama/ollama:latest

Verify the image pulled successfully by listing local Docker images. The ollama/ollama image should appear in the list with its size and creation date. This image serves as the base for every Ollama container in your cluster.

Run a Single Ollama Container

Run your first Ollama container to verify the basic setup works correctly. The command below starts an Ollama instance with GPU access enabled and the model directory mounted as a persistent volume.

docker run -d --gpus all -v ollama_data:/root/.ollama -p 11434:11434 --name ollama_node1 ollama/ollama

The -d flag runs the container in detached mode. The --gpus all flag grants access to all available NVIDIA GPUs. The -v flag mounts a named volume at /root/.ollama so model files survive container restarts. The -p flag publishes port 11434 on the host for external API access, and --name gives the container a fixed name that later commands refer to.

Pull a Model Inside the Container

With the container running, pull your first model using the Ollama CLI inside the container. The exec command runs a command inside an existing container.

docker exec -it ollama_node1 ollama pull llama3

Ollama downloads the model file and stores it in the mounted volume, so the model persists across container restarts. Pull additional models the same way. All pulled models sit side by side in the volume; how many stay loaded in memory at once depends on available RAM or VRAM and on the OLLAMA_MAX_LOADED_MODELS setting.

Test the Ollama API Endpoint

Verify the Ollama container responds correctly by sending a test inference request. Use curl to hit the generate endpoint directly from the host machine.

curl http://localhost:11434/api/generate -d '{"model": "llama3", "prompt": "Hello, how are you?", "stream": false}'

A successful response returns a JSON object whose response field contains the generated text. If that text arrives, the single-node Ollama and Docker setup works. Now scale this to multiple containers for the full cluster.
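The same smoke test can be scripted with Python's standard library. This is a minimal sketch assuming the default port mapping from above and a non-streaming request; the helper names are hypothetical, and Ollama's reply carries the generated text in its response field.

```python
import json
import urllib.request

# Assumed default: a single node published on host port 11434.
OLLAMA_URL = "http://localhost:11434"


def build_generate_request(prompt: str, model: str = "llama3",
                           base_url: str = OLLAMA_URL) -> urllib.request.Request:
    """Build a non-streaming POST to Ollama's /api/generate endpoint."""
    body = json.dumps({"model": model, "prompt": prompt, "stream": False})
    return urllib.request.Request(
        f"{base_url}/api/generate",
        data=body.encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def generate(prompt: str, model: str = "llama3", base_url: str = OLLAMA_URL,
             timeout: float = 300.0) -> str:
    """Send the request and return the text from the reply's 'response' field."""
    req = build_generate_request(prompt, model, base_url)
    with urllib.request.urlopen(req, timeout=timeout) as resp:
        return json.loads(resp.read())["response"]
```

For example, generate("Hello, how are you?") returns the generated text as a string. Pointing base_url at the load balancer later exercises the whole cluster instead of one node.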

Building the Multi-Container Cluster with Docker Compose

Writing the Docker Compose Configuration

Docker Compose defines multi-container applications in a single YAML file. Create a docker-compose.yml file that defines multiple Ollama service instances. Each service runs a separate container with its own port mapping and volume.

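# The top-level version key ('3.8') is obsolete in Compose v2 and is omitted here.
services:
  ollama_node1:
    image: ollama/ollama:latest
    ports:
      - "11434:11434"        # host port 11434 -> container port 11434
    volumes:
      - ollama_data1:/root/.ollama
    deploy:
      resources:
        reservations:
          devices:
            - driver: nvidia
              count: 1        # reserve one NVIDIA GPU for this node
              capabilities: [gpu]
  ollama_node2:
    image: ollama/ollama:latest
    ports:
      - "11435:11434"        # second node published on host port 11435
    volumes:
      - ollama_data2:/root/.ollama
    # No GPU reservation here, so this node runs on CPU unless you add one.

volumes:
  ollama_data1:
  ollama_data2: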

This configuration creates two Ollama containers on the same host. Node 1 uses port 11434. Node 2 uses port 11435. Each node gets its own persistent volume for model storage. Scaling to three or four nodes means adding additional service blocks following the same pattern.

Adding Nginx as the Load Balancer

Add an Nginx service to the Docker Compose file to distribute requests across Ollama nodes. Create an nginx.conf file that defines the upstream pool and proxy rules.

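upstream ollama_cluster {
    server ollama_node1:11434;
    server ollama_node2:11434;
}

server {
    listen 80;

    location / {
        proxy_pass http://ollama_cluster;
        # Long generations can exceed nginx's default 60 s read timeout.
        proxy_read_timeout 300s;
        # Forward streamed tokens as they arrive instead of buffering them.
        proxy_buffering off;
    }
}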

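Add an Nginx service to docker-compose.yml that uses the official nginx image, publishes port 80, and mounts this file as /etc/nginx/conf.d/default.conf. The image's main nginx.conf includes that directory inside its http block, which is where upstream and server blocks belong. Compose attaches the Nginx container to the same default network as the Ollama containers, so the service names ollama_node1 and ollama_node2 resolve directly. Requests arriving at port 80 on the host are then forwarded to the Ollama nodes. Nginx uses round-robin distribution by default, which works well when requests are similar in size; for very uneven prompt lengths, the least_conn directive sends each new request to the node with the fewest active connections.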
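Each new service block also needs a matching server line in the upstream pool. A small generator keeps the two in sync; this sketch assumes the ollama_nodeN service naming used in the Compose file above and output destined for /etc/nginx/conf.d/default.conf, and the proxy_read_timeout value is an assumption to tune for your longest generations. The function name is hypothetical.

```python
def render_nginx_conf(node_count: int, port: int = 11434,
                      prefix: str = "ollama_node") -> str:
    """Render a conf.d-style Nginx config with one upstream entry per Ollama service."""
    if node_count < 1:
        raise ValueError("node_count must be >= 1")
    # One "server <service>:<port>;" line per Compose service.
    servers = "\n".join(
        f"    server {prefix}{i}:{port};" for i in range(1, node_count + 1)
    )
    return (
        "upstream ollama_cluster {\n"
        f"{servers}\n"
        "}\n"
        "\n"
        "server {\n"
        "    listen 80;\n"
        "    location / {\n"
        "        proxy_pass http://ollama_cluster;\n"
        "        proxy_read_timeout 300s;  # assumed value; default is 60s\n"
        "        proxy_buffering off;      # pass streamed tokens through\n"
        "    }\n"
        "}\n"
    )
```

Writing render_nginx_conf(4) to default.conf and restarting the Nginx container picks up four nodes.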

Starting the Full Cluster

Start the entire cluster with a single Docker Compose command. Run this from the directory containing your docker-compose.yml file.

docker compose up -d

Docker pulls any missing images, creates the defined volumes, and starts all containers. Verify all services run correctly by checking container status. Every container should show a running status within thirty seconds of the command completing.

docker compose ps
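Beyond container status, you can confirm that each Ollama API actually answers. A running Ollama server replies to GET / with the text "Ollama is running", which this minimal Python sketch checks; the node list assumes the host ports from the Compose example, and the function names are hypothetical.

```python
import urllib.request

# Assumed host ports from the Compose example; adjust to your own mapping.
NODES = ["http://localhost:11434", "http://localhost:11435"]


def is_healthy(base_url: str, timeout: float = 5.0) -> bool:
    """Return True if the node answers GET / with Ollama's banner text."""
    try:
        with urllib.request.urlopen(f"{base_url}/", timeout=timeout) as resp:
            return resp.status == 200 and b"Ollama is running" in resp.read()
    except OSError:
        # URLError, HTTPError, refused connections and timeouts are all OSErrors.
        return False


def check_cluster(nodes=NODES) -> dict:
    """Map each node URL to its health status."""
    return {url: is_healthy(url) for url in nodes}
```

Calling check_cluster() returns a dictionary keyed by node URL with True or False values, which makes a stopped or crash-looping node easy to spot.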

Scaling the Cluster with Docker Swarm

Initializing Docker Swarm Mode

Docker Swarm extends Docker Compose orchestration across multiple physical machines. Initialize Swarm mode on your primary node with one command. This machine becomes the Swarm manager.

docker swarm init --advertise-addr <manager-ip-address>

The command prints a docker swarm join command that includes a join token. Run that command on each additional machine to add it to the Swarm as a worker node. Worker nodes accept and run container tasks that the manager schedules. The Ollama and Docker cluster now spans multiple physical machines.

Deploying the Ollama Stack Across Swarm Nodes

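Convert your Docker Compose file into a Swarm stack. Instead of one service block per node, define a single ollama service and either set deploy.replicas to the number of cluster nodes or use deploy.mode: global to run exactly one replica on every node; deploying the stack as ollama_cluster then names the service ollama_cluster_ollama. Swarm stacks support replica counts, placement constraints, and resource reservations across nodes, and Swarm's routing mesh spreads requests to a published port across the replicas. Note that docker stack deploy does not accept the devices GPU reservation used in the Compose example; GPU scheduling under Swarm typically relies on generic resources advertised by each node's Docker daemon.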

docker stack deploy -c docker-compose.yml ollama_cluster

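Swarm places Ollama container replicas across the available nodes automatically. With deploy.mode: global, a newly joined machine receives its own replica as soon as it joins; in replicated mode, raise the replica count to use the new capacity. In replicated mode, when a node fails, Swarm reschedules its tasks on healthy nodes, although a rescheduled replica may start with an empty local volume and have to pull its models again. This self-healing behavior makes Ollama and Docker clusters resilient to hardware failures.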

Monitoring Cluster Health

Monitor the Swarm cluster using built-in Docker commands. Check service status and replica counts with the service list command. Inspect individual service logs when debugging inference errors or performance issues.

docker service ls
docker service logs ollama_cluster_ollama

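Prometheus and Grafana can provide visual monitoring for a Swarm cluster. Ollama does not ship a built-in Prometheus /metrics endpoint (confirm against the release notes of the version you deploy), so metrics usually come from exporters: cAdvisor for per-container CPU and memory, and NVIDIA's DCGM exporter for GPU utilization. Request rates and latency can come from the load balancer or from the timing fields Ollama includes in each response. Scrape these metrics with Prometheus and visualize them in Grafana to track requests per second, inference latency, and GPU utilization across all cluster nodes from a single dashboard.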
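Ollama's non-streaming /api/generate replies (and the final chunk of a streamed reply) include timing fields such as eval_count, eval_duration, load_duration, and total_duration, with durations in nanoseconds. The sketch below turns one reply into throughput and latency figures; the function name is hypothetical, and exporting the values to Prometheus would need a small exporter of your own, which is not shown.

```python
NS_PER_S = 1_000_000_000  # Ollama reports durations in nanoseconds


def generation_stats(resp: dict) -> dict:
    """Derive throughput and latency from one completed /api/generate reply."""
    eval_count = resp.get("eval_count", 0)   # tokens generated
    eval_ns = resp.get("eval_duration", 0)   # time spent generating them
    return {
        # Multiply before dividing to keep integer inputs exact.
        "tokens_per_s": eval_count * NS_PER_S / eval_ns if eval_ns else 0.0,
        "total_s": resp.get("total_duration", 0) / NS_PER_S,
        "load_s": resp.get("load_duration", 0) / NS_PER_S,
    }
```

Running this against a fixed test prompt on each node at regular intervals gives a rough per-node throughput series to compare across the cluster.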

Performance Optimization Tips for Your Ollama and Docker Cluster

Model Quantization for Faster Inference

Quantized models run faster and consume less memory than full-precision versions. Ollama's library offers several quantized variants, such as q4_0, q4_K_M, and q8_0, for most popular models, and the default tags are typically already 4-bit. Pull a specific variant by giving its full tag after the model name.

docker exec -it ollama_node1 ollama pull llama3:8b-instruct-q4_0

Q4 quantization reduces the memory footprint of the weights by roughly 70 to 75 percent compared with 16-bit (FP16) weights. Inference speed increases significantly on GPU hardware. Quality degradation is usually small for conversational and coding tasks, but test it against your own workload. Use quantized models in production Ollama and Docker clusters to maximize throughput per GPU.
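A quick check of the savings claimed above, assuming the baseline is 16-bit (FP16) weights and that Q4_0 averages 4.5 bits per weight (4-bit values plus one 16-bit scale per block of 32 weights), using an 8-billion parameter model as the example:

```latex
\begin{aligned}
\text{saving} &= 1 - \frac{b_{\text{Q4\_0}}}{b_{\text{FP16}}} = 1 - \frac{4.5}{16} \approx 0.72 \\
M_{\text{Q4\_0}} &= 8 \times 10^{9} \times \tfrac{4.5}{8}\ \text{bytes} = 4.5\ \text{GB} \\
M_{\text{FP16}} &= 8 \times 10^{9} \times 2\ \text{bytes} = 16\ \text{GB}
\end{aligned}
```

Measured against FP32 weights the saving would be about 86 percent (1 - 4.5/32), which is one reason published figures vary.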

GPU Resource Partitioning Across Containers

Assign specific GPUs to specific Ollama containers when a node has multiple GPUs. The NVIDIA_VISIBLE_DEVICES environment variable (with the NVIDIA runtime), the --gpus device=N option of docker run, or device_ids in a Compose GPU reservation controls which GPU a container sees. Container 1 gets GPU 0. Container 2 gets GPU 1. This partitioning prevents GPU memory contention between concurrent Ollama instances.

Persistent Volume Optimization

Ollama loads a model from disk into memory when the first request for it arrives, and unloads it after an idle period (five minutes by default, configurable with OLLAMA_KEEP_ALIVE). Slow storage lengthens every one of those loads. Mount model volumes from NVMe SSDs rather than spinning hard drives. Docker volume drivers support high-performance storage backends for production deployments. Fast storage makes model switching between requests much more responsive in high-traffic Ollama and Docker clusters.

Real-World Use Cases for Local AI Clusters Built with Ollama and Docker

Private Enterprise AI Assistants

Enterprises with strict data governance requirements need AI assistants that never send data to external servers. An Ollama and Docker cluster runs entirely on company infrastructure. Legal documents, financial reports, and customer data stay within the corporate network. Employees get powerful AI assistance without compliance risks.

Developer AI Tooling and Code Completion

Development teams use local AI clusters for code completion, code review, and documentation generation. An Ollama and Docker cluster serves coding-focused models like DeepSeek Coder or CodeLlama. IDE plugins connect to the cluster’s load balancer endpoint. Every developer on the team shares a high-performance AI backend without individual API costs.

Research and Experimentation Environments

Research teams need to experiment with different models quickly. An Ollama and Docker cluster makes this easy. Researchers pull different models into the cluster without disrupting production workloads. A/B testing different model versions happens on separate cluster nodes simultaneously. The containerized architecture keeps experiments isolated and reproducible.

Frequently Asked Questions About Ollama and Docker

Can Ollama run inside Docker without a GPU?

Yes. Ollama runs on CPU-only hardware inside Docker containers; start the container without the --gpus flag. Performance is significantly slower without GPU acceleration. Smaller models with aggressive quantization run acceptably on modern multi-core CPUs. For production workloads with latency requirements, GPU-equipped nodes are strongly recommended. CPU-only nodes work well for low-traffic or development environments.

How many Ollama containers can one machine run?

The number of Ollama containers per machine depends mainly on available GPU VRAM and system RAM. A machine with 64 GB of GPU VRAM might run four containers each loading a 7B model at Q4 quantization. A machine with 128 GB of system RAM can run CPU-only containers limited mainly by RAM capacity, although the CPU cores then bound throughput. Benchmark your specific hardware and model combination before setting production replica counts.

Does Docker Swarm work better than Kubernetes for Ollama clusters?

Docker Swarm is simpler to set up and manage for small to medium Ollama clusters. Kubernetes offers more granular control, better GPU scheduling support, and richer ecosystem tooling for larger deployments. Teams already using Kubernetes should use it for Ollama workloads. Teams new to container orchestration should start with Docker Swarm and migrate if requirements grow.

How do you update models across all cluster nodes?

Pull the updated model on each node individually using docker exec commands targeting each container. For large clusters, write a shell script that loops through all node containers and runs the pull command on each. Swarm's rolling updates (docker service update) replace replicas one at a time without cluster downtime, which helps when models are pulled at container start or baked into a custom image; models pulled into a running container's volume still need a per-node pull.
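The per-node loop can also go through Ollama's REST API instead of docker exec. This minimal Python sketch pulls a model on each node in turn, assuming every container is reachable at its own host port; the node list and function names are hypothetical. Recent API documentation names the request field model, while older releases read name, so the sketch sends both.

```python
import json
import urllib.request

# Hypothetical node list: one entry per Ollama container, by host port.
NODES = ["http://localhost:11434", "http://localhost:11435"]


def build_pull_request(base_url: str, model: str) -> urllib.request.Request:
    """Build a non-streaming POST to /api/pull for one node."""
    # Newer API docs call the field "model"; older releases used "name".
    body = {"model": model, "name": model, "stream": False}
    return urllib.request.Request(
        f"{base_url}/api/pull",
        data=json.dumps(body).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def pull_everywhere(model: str, nodes=NODES, timeout: float = 3600.0) -> dict:
    """Pull `model` on each node sequentially; map node URL -> status or error."""
    results = {}
    for url in nodes:
        try:
            req = build_pull_request(url, model)
            with urllib.request.urlopen(req, timeout=timeout) as resp:
                results[url] = json.loads(resp.read()).get("status", "unknown")
        except (OSError, ValueError) as exc:  # network/HTTP errors, bad JSON
            results[url] = f"error: {exc}"
    return results
```

Because the pulls run sequentially, at most one node is busy downloading at a time. In a Swarm deployment the routing mesh would send each request to an arbitrary replica, so this approach needs per-node addresses rather than the published service port.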

Conclusion

Building a scalable local AI cluster with Ollama and Docker puts powerful language model infrastructure directly under your control. The combination is genuinely practical. Ollama handles model complexity. Docker handles environment consistency and scaling. Together they create a production-ready foundation that grows with your needs.

Start small and build deliberately. A single Ollama container running inside Docker proves the concept in under an hour. A multi-container Docker Compose setup extends that to a basic cluster in an afternoon. Docker Swarm takes the same configuration across multiple machines with only modest changes to the Compose file. Each step builds on the previous one cleanly.

The architecture scales in every direction. More nodes add throughput. Better GPUs add speed. More model variants add capability. The Ollama and Docker foundation accommodates all of these expansions without architectural redesign. That flexibility makes this stack worth investing in seriously.

Data privacy, cost control, and infrastructure independence are not future goals. They are immediate realities when you run Ollama and Docker on your own hardware. Your models, your data, your cluster — completely under your control. The technical barrier to this level of AI infrastructure ownership has never been lower than it is right now.

Use this guide as your starting point. Adapt the configurations to your specific hardware and workload. Monitor performance, optimize aggressively, and add nodes as demand grows. A well-built Ollama and Docker cluster can deliver capable AI services at a lower long-run cost than API-based alternatives, especially under steady, high utilization. Build it once. Run it indefinitely.