Introduction

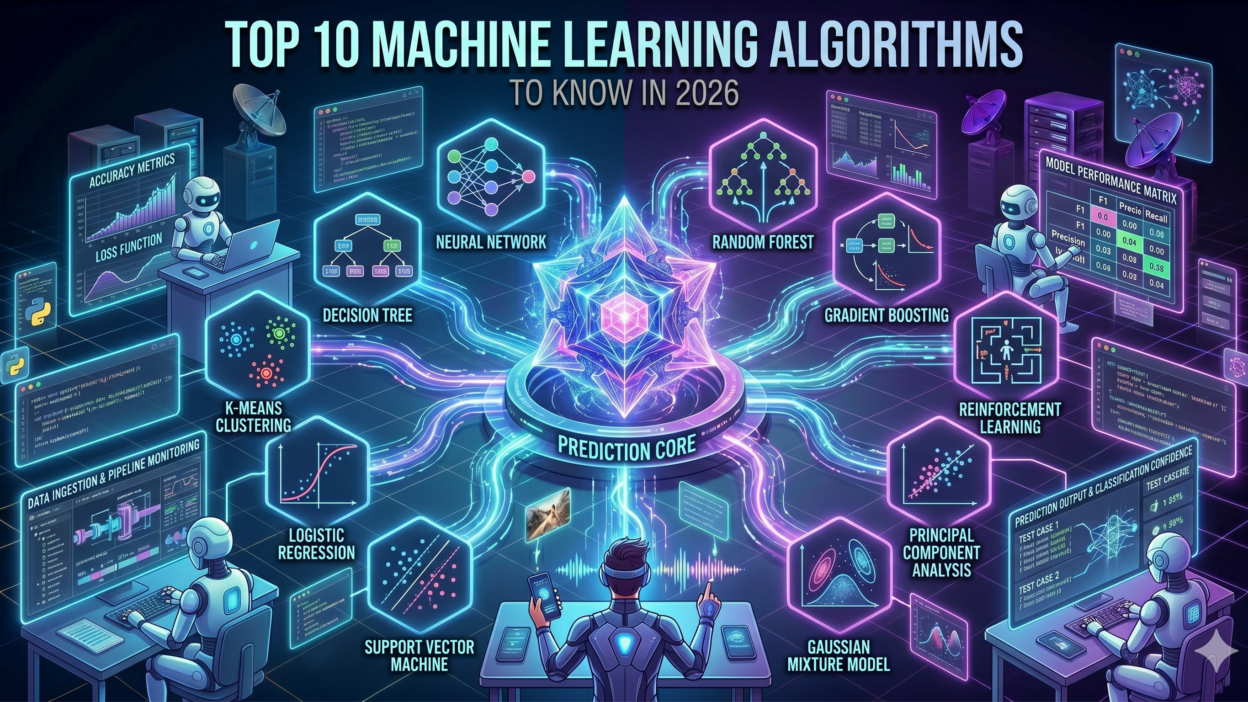

TL;DR: Machine learning algorithms now shape many of the digital experiences you use. From the recommendations on your favorite streaming app to fraud alerts from your bank, these systems run quietly in the background. The year 2026 marks a significant point in this journey.

Machine learning algorithms have become smarter, faster, and more accessible than ever before. Businesses of all sizes are adopting them. Developers are building with them daily. Understanding which machine learning algorithms matter most in 2026 is not optional anymore. It is essential.

This blog covers the top 10 machine learning algorithms dominating the industry right now. Each section explains what the algorithm does, where it works best, and why it matters. Whether you are a student, a developer, or a business owner, this guide gives you a clear picture.

Linear Regression — The Foundation That Never Gets Old

What Makes Linear Regression Still Relevant in 2026?

Linear regression is one of the oldest machine learning algorithms. It predicts a continuous output based on one or more input features. The model fits a straight line (or, with several features, a hyperplane) that minimizes the squared error between its predictions and the observed values.

Many people assume linear regression is too simple for modern problems. That assumption is wrong. It remains a top choice for financial forecasting, real estate price prediction, and supply chain optimization. Its interpretability gives it an edge over complex black-box models.

In 2026, explainable AI is a major priority. Regulators in healthcare and finance demand models that humans can understand. Linear regression fits that requirement perfectly. Companies use it as a baseline before testing more advanced machine learning algorithms.

The algorithm performs well when the relationship between variables is genuinely linear. It trains fast even on large datasets. It requires minimal computing power. These qualities make it a go-to choice for quick prototyping.
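To make the idea of a best-fit line concrete, here is a minimal sketch of ordinary least squares for a single feature, using only the Python standard library. The function name `fit_line` and the toy housing numbers are assumptions made up for illustration, not data from any real source.

```python
from statistics import mean

def fit_line(xs, ys):
    """Ordinary least squares for one feature: returns (slope, intercept)."""
    x_bar, y_bar = mean(xs), mean(ys)
    # slope = covariance(x, y) / variance(x)
    sxy = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    sxx = sum((x - x_bar) ** 2 for x in xs)
    slope = sxy / sxx
    intercept = y_bar - slope * x_bar
    return slope, intercept

# Hypothetical toy data: floor area (square meters) vs. price (thousands).
areas = [50, 60, 80, 100, 120]
prices = [150, 180, 240, 300, 360]

slope, intercept = fit_line(areas, prices)
predicted = slope * 90 + intercept  # estimated price for a 90 square meter home
print(f"price = {slope:.2f} * area + {intercept:.2f}; 90 m2 -> {predicted:.1f}")
```

The two fitted numbers are the whole model, which is why linear regression is so easy to explain to a regulator or a stakeholder.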

Logistic Regression — Classification Done Right

Why Logistic Regression Remains a Business Favorite

Logistic regression handles classification tasks. Unlike linear regression, it predicts categories rather than numbers. It calculates the probability that an input belongs to a specific class.

Email spam detection uses logistic regression extensively. Credit risk scoring relies on it. Medical diagnosis systems apply it to separate positive cases from negative ones. The algorithm delivers reliable results with minimal tuning.

Despite its name containing the word regression, it solves classification problems. It passes a weighted sum of the inputs through the sigmoid function, so it outputs a value between 0 and 1. A threshold, commonly 0.5, then decides the final class label. This simplicity makes it easy to deploy in production environments.
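The probability-then-threshold behavior can be sketched in a few lines. The example below trains a one-feature logistic regression with plain batch gradient descent; the function names, learning rate, epoch count, and the tiny spam dataset are all illustrative assumptions rather than a recommended production setup.

```python
import math

def sigmoid(z):
    """Squash any real number into the range (0, 1)."""
    return 1.0 / (1.0 + math.exp(-z))

def train_logistic(xs, ys, lr=0.1, epochs=2000):
    """Fit one weight and one bias by batch gradient descent on the log loss."""
    w, b = 0.0, 0.0
    n = len(xs)
    for _ in range(epochs):
        errors = [sigmoid(w * x + b) - y for x, y in zip(xs, ys)]
        grad_w = sum(e * x for e, x in zip(errors, xs)) / n
        grad_b = sum(errors) / n
        w -= lr * grad_w
        b -= lr * grad_b
    return w, b

def predict_label(x, w, b, threshold=0.5):
    """Turn the predicted probability into a class label."""
    return 1 if sigmoid(w * x + b) >= threshold else 0

# Hypothetical data: count of suspicious words in an email -> spam (1) or not (0).
counts = [0, 1, 2, 3, 6, 7, 8, 9]
labels = [0, 0, 0, 0, 1, 1, 1, 1]

w, b = train_logistic(counts, labels)
for n_words in (1, 4, 8):
    p = sigmoid(w * n_words + b)
    print(n_words, round(p, 3), predict_label(n_words, w, b))
```

Raising the threshold above 0.5 makes the model more conservative about flagging spam, which is one way to trade recall for precision.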

In 2026, logistic regression is a key part of ensemble systems. Developers combine it with other machine learning algorithms to boost overall accuracy. It handles binary problems directly and extends to multi-class problems through multinomial (softmax) or one-vs-rest formulations.

Decision Trees — Visual and Powerful

How Decision Trees Solve Complex Problems Simply

Decision trees split data into branches based on feature values. Each split asks a yes-or-no question. The process continues until the model reaches a final prediction leaf. The visual structure is easy to explain to anyone.

This algorithm handles both classification and regression tasks. It works well with mixed data types, including numeric and categorical variables, although some libraries expect categorical variables to be encoded as numbers first. It does not require data scaling or normalization.

Retail companies use decision trees for customer segmentation. Insurance firms apply them for claim approval decisions. Healthcare providers rely on them for patient triage. The visual output lets non-technical stakeholders understand the logic instantly.

One drawback is overfitting. A deep tree memorizes training data rather than learning patterns. Pruning techniques reduce this risk. Combining decision trees into ensembles creates even more powerful machine learning algorithms like Random Forests.
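A full tree is just this one step repeated: pick the question that best separates the classes, then recurse on each branch. The sketch below finds a single best split using Gini impurity, one common splitting criterion. The function names and the insurance-style data are hypothetical.

```python
from collections import Counter

def gini(labels):
    """Gini impurity: 0.0 for a pure group, larger for mixed groups."""
    n = len(labels)
    if n == 0:
        return 0.0
    return 1.0 - sum((count / n) ** 2 for count in Counter(labels).values())

def best_split(values, labels):
    """Try each midpoint between sorted unique values.

    Returns (threshold, weighted_gini) for the split with the lowest impurity.
    """
    unique = sorted(set(values))
    best = (None, float("inf"))
    for lo, hi in zip(unique, unique[1:]):
        t = (lo + hi) / 2
        left = [y for x, y in zip(values, labels) if x <= t]
        right = [y for x, y in zip(values, labels) if x > t]
        score = (len(left) * gini(left) + len(right) * gini(right)) / len(labels)
        if score < best[1]:
            best = (t, score)
    return best

# Hypothetical claims data: claim amount -> decision.
amounts = [200, 450, 500, 900, 3000, 4200, 5100]
decisions = ["approve", "approve", "approve", "approve", "review", "review", "review"]

threshold, impurity = best_split(amounts, decisions)
print(f"Is amount <= {threshold}? (weighted Gini after split: {impurity})")
```

The resulting rule reads as a plain yes-or-no question, which is the property that makes trees easy to show to non-technical stakeholders.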

Random Forests — Strength in Numbers

Why Random Forests Lead in Accuracy and Reliability

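Random forests build many decision trees, often hundreds, and combine their outputs. Each tree trains on a bootstrap sample of the data and considers only a random subset of features at each split. The final prediction comes from a majority vote for classification or an average for regression. This approach greatly reduces the overfitting that a single deep tree is prone to.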

Random forests are among the most popular machine learning algorithms across industries. Some implementations handle missing values directly, while others need them imputed first. They work well even when feature engineering is minimal. They also report feature importance scores, which help developers understand the data.

E-commerce platforms use random forests for product recommendations. Cybersecurity teams apply them to detect network intrusions. Pharmaceutical companies rely on them for drug discovery research. The algorithm scales well and delivers consistent performance.

Training a random forest takes more time than a single decision tree. But the accuracy gains are significant. In 2026, random forests are a standard baseline in competitive machine learning.
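The core mechanics (bootstrap sampling, random feature choice, and voting) can be sketched with very small trees. This is a deliberately simplified illustration: each "tree" is a one-split stump on one randomly chosen feature, whereas a real random forest grows deeper trees and re-samples features at every split. All names and data here are hypothetical.

```python
import random
from collections import Counter

def majority(labels):
    return Counter(labels).most_common(1)[0][0]

def fit_stump(rows, labels, feature):
    """One-split tree on one feature: pick the midpoint threshold with fewest errors."""
    values = sorted({row[feature] for row in rows})
    best = None
    for lo, hi in zip(values, values[1:]):
        t = (lo + hi) / 2
        left = [y for row, y in zip(rows, labels) if row[feature] <= t]
        right = [y for row, y in zip(rows, labels) if row[feature] > t]
        l_lab, r_lab = majority(left), majority(right)
        errors = sum(y != l_lab for y in left) + sum(y != r_lab for y in right)
        if best is None or errors < best[0]:
            best = (errors, t, l_lab, r_lab)
    if best is None:  # only one distinct value in this sample: predict the majority
        m = majority(labels)
        return (feature, 0.0, m, m)
    return (feature, best[1], best[2], best[3])

def fit_forest(rows, labels, n_trees=25, seed=0):
    rng = random.Random(seed)
    n, n_features = len(rows), len(rows[0])
    forest = []
    for _ in range(n_trees):
        idx = [rng.randrange(n) for _ in range(n)]  # bootstrap: sample with replacement
        feature = rng.randrange(n_features)          # random feature for this tree
        forest.append(fit_stump([rows[i] for i in idx], [labels[i] for i in idx], feature))
    return forest

def predict(forest, row):
    """Each stump votes; the most common label wins."""
    votes = [l_lab if row[f] <= t else r_lab for f, t, l_lab, r_lab in forest]
    return majority(votes)

# Hypothetical data: (failed logins, requests per minute) -> 0 normal, 1 intrusion.
rows = [(1, 10), (2, 12), (3, 11), (2, 9), (8, 30), (9, 28), (7, 33), (8, 31)]
labels = [0, 0, 0, 0, 1, 1, 1, 1]

forest = fit_forest(rows, labels)
print(predict(forest, (2, 11)), predict(forest, (8, 30)))
```

Because every tree sees a slightly different sample, an unlucky draw affects only a few votes, and the ensemble stays stable.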

Support Vector Machines — Maximum Margin Classification

The Enduring Power of SVM in High-Dimensional Data

Support vector machines find the optimal boundary between classes. They maximize the margin between data points and the decision boundary. This margin-maximization approach gives SVM strong generalization capabilities.

SVM excels in high-dimensional spaces. Text classification, image recognition, and bioinformatics are its top domains. It performs well even when the number of features exceeds the number of samples.

The kernel trick allows SVM to handle non-linear data. Different kernel functions transform the input space to make separation easier. This flexibility makes SVM one of the most versatile machine learning algorithms available.

SVM can be slow to train on very large datasets. For kernel SVMs, training time grows at least quadratically with the number of samples, and memory usage grows with dataset size as well. For smaller, well-structured datasets, it can match or outperform more complex models. Researchers still cite SVM in cutting-edge scientific papers in 2026.
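For intuition about margin maximization, here is a rough sketch of a linear SVM trained by subgradient descent on the regularized hinge loss. It covers only the linear case in two dimensions, with no kernel trick, and the hyperparameters (`lam`, `lr`, `epochs`) and toy points are assumptions chosen for illustration. Production libraries use more sophisticated solvers.

```python
def train_linear_svm(points, labels, lam=0.01, lr=0.01, epochs=5000):
    """Batch subgradient descent on the L2-regularized hinge loss.

    Labels must be +1 or -1. Returns the weight vector and bias.
    """
    w = [0.0, 0.0]
    b = 0.0
    n = len(points)
    for _ in range(epochs):
        grad_w = [lam * w[0], lam * w[1]]  # gradient of the regularizer
        grad_b = 0.0
        for (x1, x2), y in zip(points, labels):
            margin = y * (w[0] * x1 + w[1] * x2 + b)
            if margin < 1:  # point is inside the margin or misclassified
                grad_w[0] -= y * x1 / n
                grad_w[1] -= y * x2 / n
                grad_b -= y / n
        w = [w[0] - lr * grad_w[0], w[1] - lr * grad_w[1]]
        b -= lr * grad_b
    return w, b

def classify(point, w, b):
    return 1 if w[0] * point[0] + w[1] * point[1] + b >= 0 else -1

# Hypothetical, linearly separable toy data.
points = [(1, 1), (2, 1), (1, 2), (4, 4), (5, 4), (4, 5)]
labels = [-1, -1, -1, 1, 1, 1]

w, b = train_linear_svm(points, labels)
print("weights:", [round(v, 2) for v in w], "bias:", round(b, 2))
print(classify((0, 0), w, b), classify((6, 6), w, b))
```

Only points with a margin below 1 contribute to the update, which mirrors the fact that the final boundary is determined by the support vectors rather than by every sample.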

K-Nearest Neighbors — Simplicity Meets Effectiveness

When and Why KNN Still Delivers Results

K-nearest neighbors is a non-parametric algorithm. It does not build a model during training. It stores the training data and compares new inputs to stored records at prediction time. The closest K neighbors vote on the final output.

KNN works well for recommendation systems, anomaly detection, and pattern recognition. It adapts instantly to new data without retraining. This makes it useful in dynamic environments where data changes frequently.

The main challenge is computational cost during prediction. Comparing each new input against every stored record becomes slow as the dataset grows. Approximate nearest neighbor techniques ease this problem by trading a small amount of accuracy for much faster lookups. Libraries like FAISS enable billion-scale similarity searches efficiently.

KNN is one of the machine learning algorithms that non-technical users find intuitive. The logic is straightforward: similar things belong together. This interpretability keeps it relevant even as more complex models emerge.
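The whole algorithm fits in one short function, which is a fair measure of how intuitive it is. The sketch below is a brute-force version using Euclidean distance; the function name, the choice of `k=3`, and the labeled points are hypothetical.

```python
import math
from collections import Counter

def knn_predict(train_points, train_labels, query, k=3):
    """Brute-force KNN: sort all stored points by distance, let the closest k vote."""
    by_distance = sorted(
        (math.dist(point, query), label)
        for point, label in zip(train_points, train_labels)
    )
    nearest_labels = [label for _, label in by_distance[:k]]
    return Counter(nearest_labels).most_common(1)[0][0]

# Hypothetical data: (hours watched per week, purchases per month) -> segment.
train_points = [(1, 1), (1, 2), (2, 1), (8, 8), (9, 8), (8, 9)]
train_labels = ["casual", "casual", "casual", "loyal", "loyal", "loyal"]

print(knn_predict(train_points, train_labels, (1.5, 1.5)))
print(knn_predict(train_points, train_labels, (8.5, 8.5)))
```

Adding a new labeled record means appending to the two lists; there is no training step to rerun. The cost shows up at prediction time, where every query scans all stored points.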

Naive Bayes — Speed and Scalability for Text

Why Naive Bayes Remains the King of Text Classification

Naive Bayes applies Bayes' theorem with a strong independence assumption. It assumes all features are independent of each other given the class label. This assumption greatly simplifies the computation, because each feature's probability can be estimated separately.

Text classification is where Naive Bayes shines. Spam detection, sentiment analysis, and document categorization benefit greatly from this algorithm. Training amounts to counting feature frequencies per class in a single pass, so it scales to very large document collections. Its speed makes it a favorite for real-time applications.

The independence assumption is rarely true in real data. Yet Naive Bayes still performs surprisingly well in practice. It often generalizes well even on small training datasets. It copes with high-dimensional feature spaces, such as large vocabularies, without a heavy computational cost.

In 2026, Naive Bayes powers many preprocessing pipelines. Developers use it as a fast first-pass filter before sending data to heavier machine learning algorithms. It is lightweight, interpretable, and remarkably effective for natural language tasks.
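As a rough illustration of why training is so cheap, here is a sketch of a multinomial Naive Bayes text classifier with Laplace (add-one) smoothing. Training is nothing more than counting words per class. The function names and the four example messages are hypothetical, and real pipelines would add proper tokenization.

```python
import math
from collections import Counter, defaultdict

def train_nb(docs):
    """docs: list of (text, label). Training is just counting."""
    class_counts = Counter()
    word_counts = defaultdict(Counter)
    vocab = set()
    for text, label in docs:
        words = text.lower().split()
        class_counts[label] += 1
        word_counts[label].update(words)
        vocab.update(words)
    return class_counts, word_counts, vocab

def predict_nb(text, class_counts, word_counts, vocab):
    """Pick the class with the highest log prior plus summed log word likelihoods."""
    total_docs = sum(class_counts.values())
    scores = {}
    for label, n_docs in class_counts.items():
        total_words = sum(word_counts[label].values())
        score = math.log(n_docs / total_docs)
        for word in text.lower().split():
            if word not in vocab:
                continue  # ignore words never seen in training
            # Laplace smoothing keeps unseen word/class pairs from zeroing the score.
            likelihood = (word_counts[label][word] + 1) / (total_words + len(vocab))
            score += math.log(likelihood)
        scores[label] = score
    return max(scores, key=scores.get)

# Hypothetical training messages.
docs = [
    ("win cash prize now", "spam"),
    ("free prize claim now", "spam"),
    ("meeting agenda for monday", "ham"),
    ("lunch with the team monday", "ham"),
]

model = train_nb(docs)
print(predict_nb("claim free cash", *model))
print(predict_nb("team meeting monday", *model))
```

Summing log probabilities word by word is exactly where the independence assumption enters: each word contributes on its own, regardless of its neighbors.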

Gradient Boosting — The Competitive Edge in Structured Data

How XGBoost and LightGBM Changed the ML Landscape

Gradient boosting builds models sequentially. Each new model corrects the errors of the previous one. The ensemble of weak learners becomes a strong predictor over many iterations.

XGBoost and LightGBM are the most popular gradient boosting implementations. They dominate Kaggle competitions and enterprise deployments alike. They handle tabular data better than neural networks in many real-world scenarios.

Financial institutions use gradient boosting for credit scoring and fraud detection. Retailers apply it for demand forecasting and inventory management. Healthcare companies rely on it for patient outcome prediction.

These machine learning algorithms support GPU acceleration. They include built-in regularization to prevent overfitting. They handle missing values natively. Hyperparameter tuning with tools like Optuna unlocks even higher performance in 2026.
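The sequential error-correcting idea can be sketched without any library. In the example below, each round fits a one-split regression stump to the current residuals and adds a shrunken copy of it to the ensemble. This is a bare-bones illustration for squared error on one feature, not how XGBoost or LightGBM are implemented; the names, the learning rate, and the demand figures are assumptions.

```python
def fit_stump(xs, residuals):
    """Regression stump: best threshold plus the mean residual on each side."""
    best = None
    unique = sorted(set(xs))
    for lo, hi in zip(unique, unique[1:]):
        t = (lo + hi) / 2
        left = [r for x, r in zip(xs, residuals) if x <= t]
        right = [r for x, r in zip(xs, residuals) if x > t]
        left_mean, right_mean = sum(left) / len(left), sum(right) / len(right)
        sse = sum((r - left_mean) ** 2 for r in left) + sum((r - right_mean) ** 2 for r in right)
        if best is None or sse < best[0]:
            best = (sse, t, left_mean, right_mean)
    return best[1], best[2], best[3]

def fit_boosting(xs, ys, n_rounds=50, learning_rate=0.3):
    base = sum(ys) / len(ys)  # start from the mean prediction
    preds = [base] * len(xs)
    stumps = []
    for _ in range(n_rounds):
        residuals = [y - p for y, p in zip(ys, preds)]  # what is still unexplained
        t, left_mean, right_mean = fit_stump(xs, residuals)
        stumps.append((t, left_mean, right_mean))
        preds = [p + learning_rate * (left_mean if x <= t else right_mean)
                 for x, p in zip(xs, preds)]
    return base, learning_rate, stumps

def predict(x, model):
    base, learning_rate, stumps = model
    return base + sum(learning_rate * (lm if x <= t else rm) for t, lm, rm in stumps)

def mse(ys, preds):
    return sum((y - p) ** 2 for y, p in zip(ys, preds)) / len(ys)

# Hypothetical data: week number -> units sold, with two jumps in demand.
weeks = [1, 2, 3, 4, 5, 6, 7, 8]
units = [5, 6, 7, 15, 16, 17, 30, 31]

model = fit_boosting(weeks, units)
mse_baseline = mse(units, [model[0]] * len(units))
mse_boosted = mse(units, [predict(x, model) for x in weeks])
print(f"baseline MSE: {mse_baseline:.2f}, boosted MSE: {mse_boosted:.4f}")
```

The learning rate controls how much of each stump is added. Smaller values need more rounds but tend to generalize better, which is one of the main knobs that tuning tools search over.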

Neural Networks — The Engine Behind Modern AI

Deep Learning Architectures Reshaping Every Industry

Neural networks are inspired by the human brain. They consist of layers of interconnected nodes. Each layer learns increasingly abstract representations of the input data. Deep neural networks contain many hidden layers.

Convolutional neural networks dominate image recognition tasks. Recurrent neural networks handle sequential data like speech and time series. Transformer-based architectures power large language models and multimodal systems.

In 2026, neural networks are no longer just for big tech companies. Cloud platforms make them accessible through APIs and managed services. Smaller businesses train custom models using pre-built frameworks like TensorFlow and PyTorch.

Neural networks are among the most powerful machine learning algorithms ever developed. They achieve superhuman performance in specific domains. The trade-off is their need for large amounts of data and computing power. Efficient architectures like MobileNet and DistilBERT reduce this barrier significantly.
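To see what a hidden layer buys you, consider XOR, a pattern no single straight line can separate. The sketch below runs a forward pass through a tiny network with one hidden layer of two ReLU units. The weights are hand-picked for illustration, which is an assumption of this example; in practice they would be learned by backpropagation in a framework such as TensorFlow or PyTorch.

```python
def relu(values):
    """ReLU activation: negative values become zero."""
    return [max(0.0, v) for v in values]

def dense(inputs, weights, biases):
    """Fully connected layer: each output is a weighted sum of all inputs plus a bias."""
    return [sum(w * x for w, x in zip(row, inputs)) + b
            for row, b in zip(weights, biases)]

# Hand-picked (not learned) weights that make the network compute XOR.
HIDDEN_W = [[1.0, 1.0], [1.0, 1.0]]
HIDDEN_B = [0.0, -1.0]
OUTPUT_W = [[1.0, -2.0]]
OUTPUT_B = [0.0]

def forward(x):
    hidden = relu(dense(x, HIDDEN_W, HIDDEN_B))   # intermediate representation
    return dense(hidden, OUTPUT_W, OUTPUT_B)[0]   # final prediction

for pair in ([0, 0], [0, 1], [1, 0], [1, 1]):
    print(pair, forward(pair))
```

The hidden layer re-expresses the two inputs as "how many are on" and "are both on", and the output layer combines those. Deeper networks stack many such re-expressions.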

K-Means Clustering — Discovering Hidden Patterns

The Role of Unsupervised Learning in 2026

K-means clustering groups unlabeled data into K distinct clusters. The algorithm assigns each data point to the nearest cluster center. It then recalculates centers based on the assigned points. This process repeats until the clusters stabilize.
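The assign-then-recompute loop described above can be sketched as follows. The initialization (taking the first K points as starting centers) is a simplifying assumption; libraries typically use smarter schemes such as k-means++. The function name and the customer data are hypothetical.

```python
import math

def kmeans(points, k, n_iter=100):
    """Plain k-means: assign each point to its nearest center, then move the centers."""
    centers = [points[i] for i in range(k)]  # naive init: first k points
    clusters = [[] for _ in range(k)]
    for _ in range(n_iter):
        clusters = [[] for _ in range(k)]
        for p in points:
            nearest = min(range(k), key=lambda i: math.dist(p, centers[i]))
            clusters[nearest].append(p)
        new_centers = [
            tuple(sum(coord) / len(cluster) for coord in zip(*cluster))
            if cluster else centers[i]  # keep the old center if a cluster is empty
            for i, cluster in enumerate(clusters)
        ]
        if new_centers == centers:  # assignments have stabilized
            break
        centers = new_centers
    return centers, clusters

# Hypothetical data: (visits per month, average basket size).
points = [(1, 1), (9, 9), (1, 2), (2, 1), (8, 9), (9, 8)]

centers, clusters = kmeans(points, k=2)
for center, members in zip(centers, clusters):
    print([round(c, 2) for c in center], members)
```

No labels appear anywhere in the input. The groups emerge purely from the distances between points.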

Market segmentation is one of the top use cases for K-means. Companies identify customer groups based on purchasing behavior. They then tailor marketing campaigns to each segment. The results improve conversion rates and customer retention.

Anomaly detection uses K-means to identify data points far from all cluster centers. Network security teams apply this technique to spot unusual traffic patterns. Manufacturing plants use it for quality control and defect detection.

K-means is one of the machine learning algorithms that works well without labeled data. Many real-world datasets lack labels. Unsupervised approaches extract value from raw data directly. In 2026, unsupervised learning is growing rapidly as data volumes expand beyond the capacity for manual labeling.

Supervised vs. Unsupervised vs. Reinforcement Learning

Machine learning splits into three main paradigms. Supervised learning trains on labeled examples. Algorithms like linear regression, logistic regression, decision trees, and neural networks fall into this category. Unsupervised learning finds structure in unlabeled data. K-means clustering is the classic example. Reinforcement learning trains agents through reward signals. It powers robotics, game AI, and autonomous systems.

Feature Engineering and Algorithm Performance

The quality of your features often affects performance more than the choice of algorithm. Good feature engineering means selecting, transforming, and creating variables that capture relevant patterns. All the machine learning algorithms covered in this blog perform better with well-prepared features. Data preprocessing, normalization, and dimensionality reduction are important steps before training many models, although tree-based methods such as decision trees and random forests do not need feature scaling.
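One of the most common preprocessing steps is standardization, which rescales a feature to zero mean and unit variance so that distance-based and gradient-based models treat all features on a comparable scale. Here is a minimal sketch; the function name and the income figures are made up for illustration.

```python
from statistics import mean, pstdev

def standardize(values):
    """Z-score scaling: subtract the mean, divide by the (population) standard deviation."""
    mu, sigma = mean(values), pstdev(values)
    if sigma == 0:
        return [0.0 for _ in values]  # a constant feature carries no information
    return [(v - mu) / sigma for v in values]

# Hypothetical feature on a large scale: annual income.
incomes = [32000, 45000, 51000, 78000, 120000]
scaled = standardize(incomes)
print([round(v, 2) for v in scaled])
```

In a real project the mean and standard deviation should be computed on the training split only and then reused on validation and test data, so that no information leaks from the held-out sets.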

Overfitting and Regularization

Overfitting occurs when a model fits the noise in its training data instead of the underlying pattern. It performs well in training but fails on new data. Regularization techniques like L1 and L2 penalties reduce this risk by discouraging large coefficients. Cross-validation helps detect overfitting early by scoring the model on data held out from training. Every serious machine learning practitioner masters these techniques before deploying any model.
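The effect of an L2 penalty is easiest to see in one dimension, where ridge regression has a simple closed form: the penalty strength is added to the denominator of the slope. The sketch below assumes centered data with no intercept, and the function name and numbers are hypothetical. It illustrates L2 only; L1 behaves differently and can push coefficients exactly to zero.

```python
def ridge_slope(xs, ys, lam):
    """Closed-form slope for 1-D ridge regression on centered data (no intercept).

    Minimizes sum((y - slope * x)^2) + lam * slope^2.
    """
    sxy = sum(x * y for x, y in zip(xs, ys))
    sxx = sum(x * x for x in xs)
    return sxy / (sxx + lam)

# Hypothetical centered data, roughly y = 2x with a little noise.
xs = [-2, -1, 0, 1, 2]
ys = [-4.1, -1.9, 0.0, 2.1, 3.9]

slopes = [ridge_slope(xs, ys, lam) for lam in (0, 10, 90)]
for lam, slope in zip((0, 10, 90), slopes):
    print(f"lam={lam:>2}: slope={slope:.3f}")
```

With `lam=0` this is ordinary least squares. As `lam` grows the slope shrinks toward zero, so the model reacts less to any single noisy observation.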

Model Evaluation Metrics

Accuracy alone does not tell the full story. On an imbalanced dataset, a model that always predicts the majority class can score high accuracy while missing every case that matters. Precision, recall, F1-score, and AUC-ROC give a more complete picture of classifier performance. For regression models, mean absolute error and root mean squared error measure prediction quality. Choosing the right metric depends on the business problem and the cost of different types of errors.
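These metrics are simple to compute by hand, which helps when checking what a library reports. The sketch below covers accuracy, precision, recall, and F1 for a binary classifier, plus MAE and RMSE for regression. AUC-ROC is left out because it needs predicted scores rather than labels. The function names and sample predictions are hypothetical.

```python
import math

def classification_metrics(y_true, y_pred):
    """Accuracy, precision, recall and F1 for binary labels (1 = positive)."""
    tp = sum(t == 1 and p == 1 for t, p in zip(y_true, y_pred))
    fp = sum(t == 0 and p == 1 for t, p in zip(y_true, y_pred))
    fn = sum(t == 1 and p == 0 for t, p in zip(y_true, y_pred))
    tn = sum(t == 0 and p == 0 for t, p in zip(y_true, y_pred))
    accuracy = (tp + tn) / len(y_true)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = (2 * precision * recall / (precision + recall)) if precision + recall else 0.0
    return {"accuracy": accuracy, "precision": precision, "recall": recall, "f1": f1}

def mae(y_true, y_pred):
    return sum(abs(t - p) for t, p in zip(y_true, y_pred)) / len(y_true)

def rmse(y_true, y_pred):
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

# Hypothetical fraud labels: 4 real fraud cases out of 10 transactions.
y_true = [1, 1, 1, 1, 0, 0, 0, 0, 0, 0]
y_pred = [1, 1, 0, 0, 1, 0, 0, 0, 0, 0]

metrics = classification_metrics(y_true, y_pred)
print({name: round(value, 3) for name, value in metrics.items()})
print(mae([3, 5, 7], [2, 5, 9]), round(rmse([3, 5, 7], [2, 5, 9]), 3))
```

Here accuracy looks respectable while recall shows that half of the fraud cases were missed, which is the kind of gap a single metric hides.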

Frequently Asked Questions About Machine Learning Algorithms

Which machine learning algorithm is best for beginners?

Linear regression and logistic regression are ideal starting points. They are easy to understand and interpret. They require minimal data preprocessing. They give beginners a solid foundation before moving to more complex machine learning algorithms.

Which machine learning algorithm is fastest to train?

Naive Bayes is among the fastest machine learning algorithms for training. It scales to millions of samples easily. For structured data, linear models also train very quickly. Deep neural networks are typically the slowest to train because they have many parameters and need many passes over the data.

Do I need a powerful computer to run these algorithms?

Not necessarily. Many machine learning algorithms run efficiently on standard laptops. Linear regression, logistic regression, decision trees, and Naive Bayes need minimal resources. Neural networks and gradient boosting benefit from GPU acceleration for large datasets. Cloud services like AWS, Google Cloud, and Azure reduce the hardware barrier significantly.

How do I choose the right machine learning algorithm for my project?

Consider the type of output you need first. Regression problems need different algorithms than classification problems. Check the size of your dataset. Look at interpretability requirements. Start simple and add complexity only when simpler models fail to meet your accuracy targets. Benchmark multiple machine learning algorithms before finalizing your choice.

Are machine learning algorithms replacing human jobs?

Machine learning algorithms automate repetitive and data-heavy tasks. They free human workers to focus on creative, strategic, and interpersonal work. The net effect in 2026 is job transformation rather than simple replacement. New roles like ML engineers, data scientists, and AI ethicists are growing rapidly.

What is the difference between machine learning and deep learning?

Deep learning is a subset of machine learning. It uses neural networks with many layers to learn complex representations. Traditional machine learning algorithms rely more heavily on feature engineering done by humans. Deep learning learns features automatically from raw data. Both approaches are valuable depending on the problem type and data availability.

Conclusion

Machine learning algorithms are the backbone of modern artificial intelligence. Each algorithm covered in this blog serves a specific purpose. Linear regression predicts continuous values. Logistic regression classifies categories. Decision trees turn data into readable if-then rules. Random forests combine many trees for accuracy. SVM finds optimal boundaries. KNN finds similar patterns. Naive Bayes handles text at speed. Gradient boosting wins structured data competitions. Neural networks achieve deep pattern recognition. K-means reveals hidden clusters.

Choosing the right machine learning algorithm requires understanding your data, your goal, and your constraints. There is no single best algorithm. The best practitioners experiment, evaluate, and iterate. They know when to use a simple model and when to deploy a complex one.

In 2026, the barrier to using machine learning algorithms has never been lower. Open-source libraries, cloud platforms, and pre-trained models give everyone access to powerful tools. The real competitive advantage lies in understanding which algorithm to use, how to prepare your data, and how to measure success.

Start with one algorithm. Build your understanding. Expand your toolkit gradually. The world of machine learning algorithms rewards consistent learning and hands-on practice. The ten algorithms in this blog are your best starting point for 2026 and beyond.