Introduction

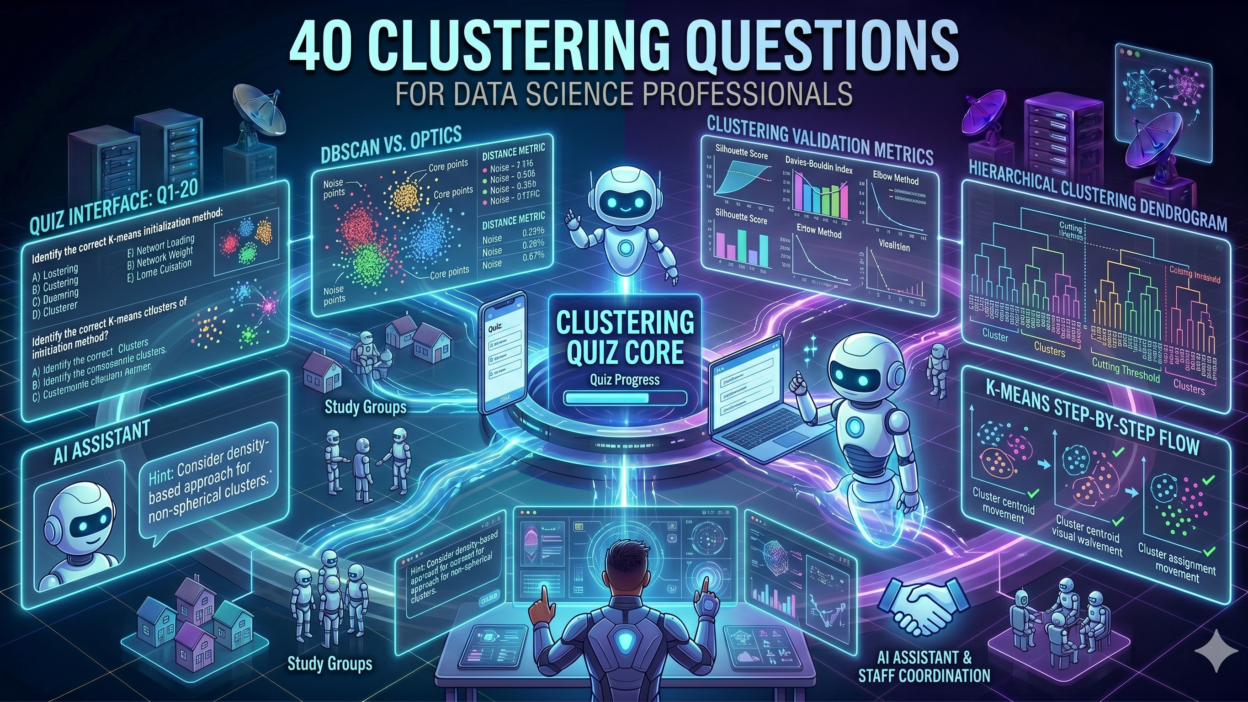

TL;DR: Questions on clustering techniques come up in most data science interviews. Hiring managers use them to separate candidates who understand unsupervised learning from those who have only memorized definitions. A strong grasp of clustering techniques signals analytical maturity, mathematical confidence, and practical experience.

Clustering techniques group unlabeled data based on similarity. No predefined labels guide the process. The algorithm discovers structure in raw data on its own. This unsupervised nature makes clustering one of the most intellectually demanding areas of machine learning to master.

This blog compiles 40 carefully chosen questions on clustering techniques. Each question reflects real interview scenarios across data science, machine learning engineering, and analytics roles. Every question includes a concise, accurate answer. Work through each one before your next technical interview.

The questions span foundational concepts, algorithmic mechanics, evaluation methods, and practical application. Whether you are preparing for a junior role or a principal data scientist position, these questions build genuine competence in clustering techniques.

Data science teams apply clustering techniques across industries every day. Retail companies segment customers. Healthcare providers group patient profiles. Financial institutions cluster transaction patterns for fraud detection. Marketers identify audience segments. Understanding clustering techniques deeply gives you a competitive advantage in every one of these domains.

Read each question. Write your own answer first. Compare it to the solution provided. Identify gaps. Fill them with hands-on experimentation on real datasets. That discipline transforms book knowledge into interview-ready expertise.

Foundations of Clustering Techniques

Core Concepts Every Data Scientist Must Master

The first ten questions test your understanding of what clustering techniques are, why they exist, and how they differ from other machine learning approaches. These fundamentals appear in nearly every data science screen.

Q1. What are clustering techniques in machine learning?

Clustering techniques are unsupervised machine learning methods that group data points based on similarity. No labeled output guides the process. The algorithm identifies natural groupings within the data. Each group, called a cluster, contains data points more similar to each other than to points in other clusters.

Q2. How do clustering techniques differ from classification?

Classification is a supervised task. It predicts a predefined label using labeled training data. Clustering techniques operate without labels. The algorithm discovers group structure on its own. Classification produces categories defined by a human. Clustering produces categories defined by the data itself.

Q3. What is intra-cluster distance and why does it matter?

Intra-cluster distance measures how similar data points are within the same cluster. Smaller intra-cluster distances indicate tighter, more cohesive clusters. Good clustering techniques minimize intra-cluster distance while maximizing inter-cluster distance. This balance defines what makes a clustering result high quality.

Q4. What is inter-cluster distance?

Inter-cluster distance measures how different clusters are from each other. Larger inter-cluster distances mean clusters are well separated. Well-separated clusters are easier to interpret and more useful for downstream tasks. Strong clustering techniques maximize this separation alongside minimizing within-cluster spread.

Q5. What is the curse of dimensionality in clustering?

The curse of dimensionality refers to the degradation of distance measures in high-dimensional spaces. As the number of dimensions grows, pairwise distances tend to concentrate, so the nearest and farthest neighbors of a point end up almost equally far away. Clustering techniques that rely on distance metrics like Euclidean distance then lose much of their ability to distinguish similar from dissimilar points. Dimensionality reduction techniques like PCA often precede clustering in high-dimensional datasets.
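
A quick simulation can make this effect visible. The sketch below is only an illustration under stated assumptions: it draws uniform random points in a unit hypercube and uses a hypothetical helper, `relative_contrast`, to compare the farthest and nearest distances from one query point.

```python
import math
import random

def relative_contrast(dim, n_points=200, seed=0):
    """(farthest - nearest) / nearest distance from a random query point.

    Values near zero mean all points look almost equally far away.
    """
    rng = random.Random(seed)
    query = [rng.random() for _ in range(dim)]
    dists = [
        math.dist(query, [rng.random() for _ in range(dim)])
        for _ in range(n_points)
    ]
    return (max(dists) - min(dists)) / min(dists)

# The contrast typically shrinks as the number of dimensions grows.
for dim in (2, 10, 100, 1000):
    print(dim, round(relative_contrast(dim), 3))
```

Real datasets are rarely uniform, so the effect is usually milder in practice, but the trend is the reason dimensionality reduction often comes first.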

Q6. What distance metrics do clustering techniques commonly use?

Euclidean distance is the most common metric. It measures straight-line distance between two points. Manhattan distance sums absolute differences across dimensions. Cosine similarity measures the angle between two vectors rather than their magnitude. This makes it ideal for text data where document length varies. The right distance metric depends on the data type and domain context.
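
To make the three metrics concrete, here is a minimal sketch in plain Python. The function names and the toy word-count vectors are illustrative assumptions, not part of any library.

```python
import math

def euclidean(a, b):
    """Straight-line distance between two equal-length vectors."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def manhattan(a, b):
    """Sum of absolute differences across dimensions."""
    return sum(abs(x - y) for x, y in zip(a, b))

def cosine_similarity(a, b):
    """Cosine of the angle between two non-zero vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hypothetical word counts: long_doc is short_doc repeated three times.
short_doc = [1, 2, 0]
long_doc = [3, 6, 0]

print(euclidean(short_doc, long_doc))          # grows with document length
print(manhattan(short_doc, long_doc))          # grows with document length
print(cosine_similarity(short_doc, long_doc))  # depends only on direction
```

The two documents point in the same direction, so cosine similarity treats them as essentially identical even though the Euclidean and Manhattan distances between them are clearly non-zero.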

Q7. What is a centroid in clustering?

A centroid is the central point of a cluster. It represents the average position of all data points within that cluster. Centroid-based clustering techniques like K-Means compute centroids iteratively. Each iteration reassigns data points to the nearest centroid and recalculates centroid positions based on the new assignments.

Q8. What is cluster cohesion?

Cluster cohesion measures how tightly grouped the points within a single cluster are. A high cohesion score means points within the cluster are very similar. Low cohesion means the cluster is spread out and loosely defined. Evaluating cohesion helps determine whether the chosen number of clusters and the algorithm itself fit the data well.

Q9. What is cluster separation?

Cluster separation measures how distinct different clusters are from one another. High separation means clusters occupy clearly different regions of the feature space. Low separation means clusters overlap. Overlapping clusters reduce interpretability and make downstream decisions less reliable. Good clustering techniques produce results with both high cohesion and high separation.

Q10. Can clustering techniques handle categorical data?

Most standard clustering techniques assume numerical data. Categorical data requires special handling. K-Modes is a variant of K-Means designed specifically for categorical variables. It uses modes instead of means. Gower distance handles mixed datasets containing both numerical and categorical features. Selecting the right approach for your data type is a key practical skill.

K-Means and Partitioning Methods

The Most Tested Clustering Algorithm Family

K-Means is the most commonly tested algorithm in clustering techniques interviews. These ten questions go deep into how it works, where it fails, and how practitioners fix its limitations. Master these answers and you cover the majority of what interviewers ask about clustering techniques.

Q11. How does the K-Means algorithm work?

K-Means initializes K cluster centroids randomly. It assigns each data point to the nearest centroid based on Euclidean distance. It then recalculates each centroid as the mean of all assigned points. The algorithm repeats assignment and recalculation until centroids stop moving significantly. The final result partitions the data into K non-overlapping clusters.
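
The loop described above can be sketched in a few lines of plain Python. This is a teaching sketch, not a production implementation: the `kmeans` function, its parameters, and the toy points are assumptions made for illustration, and it stops when assignments no longer change rather than tracking centroid movement.

```python
import random

def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def kmeans(points, k, max_iter=100, seed=0):
    """Plain Lloyd-style K-Means. Returns (centroids, labels)."""
    rng = random.Random(seed)
    # Random initialization: pick k distinct data points as starting centroids.
    centroids = [list(p) for p in rng.sample(points, k)]
    labels = None
    for _ in range(max_iter):
        # Assignment step: each point goes to its nearest centroid.
        new_labels = [
            min(range(k), key=lambda c: squared_distance(p, centroids[c]))
            for p in points
        ]
        if new_labels == labels:  # nothing moved, so the centroids are stable
            break
        labels = new_labels
        # Update step: each centroid becomes the mean of its assigned points.
        for c in range(k):
            members = [p for p, lab in zip(points, labels) if lab == c]
            if members:  # keep the old centroid if a cluster ends up empty
                centroids[c] = [sum(dim) / len(members) for dim in zip(*members)]
    return centroids, labels

# Toy data: two visually obvious groups.
points = [(1.0, 1.0), (1.5, 2.0), (1.0, 0.5), (8.0, 8.0), (9.0, 9.0), (8.5, 9.5)]
centroids, labels = kmeans(points, k=2)
print(labels)
print(centroids)
```

In an interview it helps to name the two alternating steps explicitly: assignment and update.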

Q12. How do you choose the value of K in K-Means?

The elbow method plots inertia, which is the sum of squared distances from each point to its cluster centroid, against different values of K. The plot curves sharply at one point, forming an elbow. That K value balances compactness with simplicity. The silhouette score provides an alternative by measuring how similar each point is to its own cluster compared to neighboring clusters. Higher silhouette scores indicate better clustering.

Q13. What is the K-Means++ initialization method?

K-Means++ selects initial centroids strategically rather than randomly. The first centroid is chosen randomly from the data. Each subsequent centroid is selected with probability proportional to its squared distance from the nearest already-chosen centroid. This spread-out initialization leads to faster convergence and better final results. Most modern implementations of clustering techniques use K-Means++ by default.
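
The seeding rule can be sketched as follows. The function name `kmeans_pp_init` and the toy data are illustrative assumptions, and the sketch assumes the data contains at least K distinct points.

```python
import random

def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))

def kmeans_pp_init(points, k, seed=0):
    """Pick k initial centroids with the K-Means++ seeding rule."""
    rng = random.Random(seed)
    centroids = [rng.choice(points)]  # first centroid: uniform random pick
    while len(centroids) < k:
        # Squared distance from each point to its nearest chosen centroid.
        weights = [
            min(squared_distance(p, c) for c in centroids) for p in points
        ]
        # Far-away points get proportionally more chance of being picked;
        # already-chosen points have weight 0 and cannot be picked again.
        centroids.append(rng.choices(points, weights=weights, k=1)[0])
    return centroids

points = [(1.0, 1.0), (1.5, 2.0), (1.0, 0.5), (8.0, 8.0), (9.0, 9.0), (8.5, 9.5)]
print(kmeans_pp_init(points, k=2))
```

The second centroid is not guaranteed to land in the other group, only much more likely to, which is why the method is described in terms of probabilities.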

Q14. What are the main limitations of K-Means?

K-Means requires you to specify K in advance. It assumes clusters are spherical and similarly sized. It is sensitive to outliers, which distort centroid positions. It performs poorly on clusters with irregular shapes or varying densities. The algorithm also finds local optima rather than a guaranteed global optimum. Multiple restarts with different initializations help mitigate this.

Q15. What is inertia in K-Means clustering?

Inertia is the sum of squared distances between each data point and its assigned cluster centroid. Lower inertia indicates tighter, more compact clusters. Inertia keeps falling as K increases and reaches zero when every point is its own cluster, so a lower value alone does not mean a better grouping. The elbow method uses this property: it looks for the K beyond which extra clusters yield only small reductions in inertia.
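
A small worked example shows how inertia behaves as clusters are added. The `inertia` helper and the four toy points are assumptions made for illustration; each centroid is simply the mean of the points sharing a label.

```python
def inertia(points, labels):
    """Sum of squared distances from each point to the mean of its cluster."""
    clusters = {}
    for p, lab in zip(points, labels):
        clusters.setdefault(lab, []).append(p)
    total = 0.0
    for members in clusters.values():
        centroid = [sum(dim) / len(members) for dim in zip(*members)]
        total += sum(
            sum((x - c) ** 2 for x, c in zip(p, centroid)) for p in members
        )
    return total

points = [(0.0, 0.0), (0.0, 2.0), (10.0, 0.0), (10.0, 2.0)]
print(inertia(points, [0, 0, 0, 0]))  # one cluster: 104.0
print(inertia(points, [0, 0, 1, 1]))  # two clusters: 4.0
print(inertia(points, [0, 1, 2, 3]))  # one cluster per point: 0.0
```

The drop from one to two clusters is large, while the drop from two to four is small. That bend is the elbow.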

Q16. How does K-Medoids differ from K-Means?

K-Medoids uses actual data points as cluster centers instead of computed means. The medoid is the data point within a cluster with the smallest average distance to all other points in that cluster. K-Medoids is more robust to outliers than K-Means because the center must be a real data point. It is computationally more expensive but produces more interpretable cluster centers.
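
The difference between a mean and a medoid is easiest to see on a cluster with one extreme point. The helpers below are a minimal sketch with made-up data, not a full K-Medoids implementation.

```python
import math

def mean_point(points):
    """Coordinate-wise mean, as used by K-Means."""
    return tuple(sum(dim) / len(points) for dim in zip(*points))

def medoid(points):
    """Member with the smallest total distance to all other members."""
    return min(points, key=lambda c: sum(math.dist(c, p) for p in points))

# Four tightly grouped points plus one outlier.
cluster = [(1.0, 1.0), (2.0, 1.0), (1.0, 2.0), (2.0, 2.0), (50.0, 50.0)]

print(mean_point(cluster))  # dragged toward the outlier
print(medoid(cluster))      # stays inside the dense group
```

Minimizing the total distance gives the same medoid as minimizing the average distance, since the cluster size is fixed.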

Q17. Why does K-Means fail on non-spherical clusters?

K-Means minimizes the squared Euclidean distance from each point to its centroid. This optimization naturally favors spherical, compact cluster shapes. Data with elongated, crescent, or ring-shaped clusters does not fit this assumption. K-Means tends to split non-spherical clusters into multiple pieces or merge parts of different clusters. Density-based clustering techniques like DBSCAN handle arbitrary shapes more effectively.

Q18. What is the time complexity of K-Means?

The time complexity of K-Means is O(n * K * I * d) where n is the number of data points, K is the number of clusters, I is the number of iterations, and d is the number of dimensions. For large datasets, mini-batch K-Means processes random subsets in each iteration, reducing computational cost significantly while maintaining similar clustering quality.

Q19. How do you handle outliers before applying K-Means?

Outliers distort K-Means centroids and degrade cluster quality. Common approaches include removing statistical outliers using z-score or IQR filtering before clustering. Isolation Forest identifies and removes anomalous points. Using K-Medoids instead of K-Means provides inherent outlier resistance. Applying DBSCAN first to label noise points and then running K-Means on the clean data is another practical strategy.
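
As one example of the filtering step, here is a sketch of IQR-based outlier removal on a single numeric feature. The `iqr_filter` name, the conventional 1.5 multiplier, and the sample values are assumptions. With several features you would typically apply a rule like this per feature, or use a multivariate method instead.

```python
import statistics

def iqr_filter(values, k=1.5):
    """Keep values inside [Q1 - k*IQR, Q3 + k*IQR]."""
    q1, _, q3 = statistics.quantiles(values, n=4)  # quartile cut points
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if low <= v <= high]

# Hypothetical feature values with one obvious outlier.
order_values = [10, 12, 11, 13, 12, 11, 95]
print(iqr_filter(order_values))
```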

Q20. What is the difference between hard and soft clustering?

Hard clustering assigns each data point to exactly one cluster. K-Means is a hard clustering technique. Soft clustering, also called fuzzy clustering, assigns each point a degree of membership (or a probability) for every cluster. Fuzzy C-Means is the classic fuzzy algorithm, and Gaussian Mixture Models give probabilistic soft assignments. Soft clustering suits scenarios where data points genuinely belong to multiple groups, such as customers who fit multiple segments.

Hierarchical and Density-Based Clustering Techniques

Beyond K-Means: Advanced Algorithm Knowledge

Senior data science roles require deeper knowledge of clustering techniques beyond K-Means. This section covers hierarchical methods and density-based approaches. These questions separate candidates with surface-level familiarity from those with genuine algorithmic depth.

Q21. What is hierarchical clustering?

Hierarchical clustering builds a tree of clusters called a dendrogram. Agglomerative hierarchical clustering starts with each data point as its own cluster. It merges the two most similar clusters at each step. The process continues until all points belong to a single cluster. The dendrogram reveals the nested structure of clusters at every level of granularity.

Q22. What is a dendrogram and how do you read it?

A dendrogram is a tree diagram that shows the merging history of hierarchical clustering. The vertical axis represents the distance at which clusters merged. Horizontal cuts through the dendrogram at different heights produce different numbers of clusters. Cutting at a large distance gap produces fewer, more distinct clusters. Cutting lower produces more granular groupings.

Q23. What linkage criteria exist in hierarchical clustering?

Single linkage defines the distance between clusters as the minimum distance between any two points across the clusters. Complete linkage uses the maximum distance. Average linkage uses the mean distance between all pairs. Ward’s linkage minimizes the increase in total within-cluster variance at each merge step. Ward’s linkage tends to produce compact, similarly sized clusters and is a common default, although single linkage copes better with elongated, chain-like shapes.
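
The four criteria can be compared on two tiny clusters. The function names and data below are illustrative assumptions. The Ward cost uses the standard identity that the increase in within-cluster sum of squares equals |A||B| / (|A| + |B|) times the squared distance between the two centroids.

```python
import math
from itertools import product

def pairwise(a, b):
    """All distances between a point in cluster a and a point in cluster b."""
    return [math.dist(p, q) for p, q in product(a, b)]

def single_linkage(a, b):
    return min(pairwise(a, b))

def complete_linkage(a, b):
    return max(pairwise(a, b))

def average_linkage(a, b):
    d = pairwise(a, b)
    return sum(d) / len(d)

def ward_cost(a, b):
    """Increase in within-cluster sum of squares if a and b are merged."""
    ca = [sum(dim) / len(a) for dim in zip(*a)]
    cb = [sum(dim) / len(b) for dim in zip(*b)]
    return len(a) * len(b) / (len(a) + len(b)) * math.dist(ca, cb) ** 2

cluster_a = [(0.0, 0.0), (1.0, 0.0)]
cluster_b = [(3.0, 0.0), (5.0, 0.0)]

print(single_linkage(cluster_a, cluster_b))    # 2.0
print(complete_linkage(cluster_a, cluster_b))  # 5.0
print(average_linkage(cluster_a, cluster_b))   # 3.5
print(ward_cost(cluster_a, cluster_b))         # 12.25
```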

Q24. What is DBSCAN and how does it work?

DBSCAN stands for Density-Based Spatial Clustering of Applications with Noise. It defines clusters as dense regions of data separated by sparse regions. Two parameters control the algorithm: epsilon, the radius of the neighborhood around each point, and MinPts, the minimum number of points required within that radius to form a dense region. Points in dense regions become core points. Points reachable from core points join the cluster. Points in sparse regions become noise.
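
The core, border, and noise logic can be sketched in plain Python. This is a simplified O(n²) illustration, not a library implementation. The `dbscan` function and the toy points are assumptions, and the neighborhood count includes the point itself, which is one common convention.

```python
import math

NOISE = -1

def dbscan(points, eps, min_pts):
    """Return one label per point; -1 marks noise."""
    def neighbors(i):
        return [j for j, q in enumerate(points) if math.dist(points[i], q) <= eps]

    labels = [None] * len(points)  # None means "not visited yet"
    cluster_id = -1
    for i in range(len(points)):
        if labels[i] is not None:
            continue
        seeds = neighbors(i)
        if len(seeds) < min_pts:   # not a core point (may become a border point later)
            labels[i] = NOISE
            continue
        cluster_id += 1            # i is a core point: start a new cluster
        labels[i] = cluster_id
        queue = [j for j in seeds if j != i]
        while queue:
            j = queue.pop()
            if labels[j] == NOISE:     # reachable from a core point: border point
                labels[j] = cluster_id
            if labels[j] is not None:  # already assigned, do not expand again
                continue
            labels[j] = cluster_id
            j_neighbors = neighbors(j)
            if len(j_neighbors) >= min_pts:  # j is also core: keep expanding
                queue.extend(j_neighbors)
    return labels

points = [
    (0.0, 0.0), (0.0, 1.0), (1.0, 0.0), (1.0, 1.0),          # dense group
    (10.0, 10.0), (10.0, 11.0), (11.0, 10.0), (11.0, 11.0),  # dense group
    (5.0, 5.0),                                              # isolated point
]
print(dbscan(points, eps=1.5, min_pts=3))
# [0, 0, 0, 0, 1, 1, 1, 1, -1]
```

Notice that the number of clusters was never passed in. It falls out of epsilon and MinPts.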

Q25. What advantages does DBSCAN have over K-Means?

DBSCAN does not require specifying the number of clusters in advance. It discovers the number of clusters from the data structure itself. It handles clusters of arbitrary shapes, not just spherical ones. It identifies and labels noise points explicitly rather than forcing every point into a cluster. These properties make DBSCAN one of the most practical clustering techniques for real-world messy data.

Q26. What are the limitations of DBSCAN?

DBSCAN struggles with datasets where cluster density varies significantly. High-density and low-density clusters cannot both be captured with a single epsilon value. Parameter selection for epsilon and MinPts requires domain knowledge or systematic search. DBSCAN also scales poorly to very high-dimensional data because distance measures lose effectiveness in many dimensions.

Q27. What is HDBSCAN and how does it improve on DBSCAN?

HDBSCAN stands for Hierarchical Density-Based Spatial Clustering of Applications with Noise. It builds a hierarchy of density-based clusters across a range of epsilon values rather than a single fixed value, then keeps the clusters that remain stable across that range. This allows it to detect clusters of varying densities within the same dataset. It is less sensitive to parameter selection than standard DBSCAN and produces more stable cluster assignments. HDBSCAN is a popular choice in modern data science workflows.

Q28. When would you choose hierarchical clustering over K-Means?

Choose hierarchical clustering when the number of clusters is unknown and you want to explore different granularities simultaneously. The dendrogram makes this exploration visual and intuitive. Choose hierarchical clustering also when cluster hierarchy itself has domain meaning, such as taxonomic classification or organizational structure analysis. K-Means scales better to large datasets. Hierarchical clustering suits smaller datasets where interpretability matters most.

Q29. What is OPTICS clustering?

OPTICS stands for Ordering Points To Identify the Clustering Structure. It is a generalization of DBSCAN that processes data points in a specific order based on their reachability distance. It produces a reachability plot rather than a direct cluster assignment. Analysts extract clusters of varying densities from this plot by identifying valleys. OPTICS handles variable density better than DBSCAN and provides a richer view of cluster structure.

Q30. What is mean shift clustering?

Mean shift clustering is a centroid-based algorithm that does not require specifying K in advance. It places a kernel window over each data point and shifts the window toward the region of highest data density iteratively. Each point converges to a local density peak. Points converging to the same peak belong to the same cluster. Mean shift works well for image segmentation and feature-space analysis.

Evaluation, Validation, and Advanced Topics

Clustering Techniques Evaluation and Expert-Level Questions

Evaluation is the most underappreciated skill in clustering techniques. Any algorithm can produce clusters. The real challenge is measuring whether those clusters are meaningful. These ten questions cover evaluation metrics, Gaussian Mixture Models, spectral clustering, and practical deployment considerations.

Q31. How do you evaluate clustering results without ground truth labels?

Internal validation metrics assess cluster quality using only the data itself. The silhouette score measures how similar each point is to its own cluster versus the nearest neighboring cluster. Davies-Bouldin index computes the average similarity between each cluster and its most similar cluster. Calinski-Harabasz index measures the ratio of between-cluster dispersion to within-cluster dispersion. Higher values are better for the silhouette score and the Calinski-Harabasz index, while lower values are better for the Davies-Bouldin index.

Q32. What is the silhouette score?

The silhouette score ranges from -1 to 1. A score near 1 means the point fits well within its cluster and poorly with neighboring clusters. A score near 0 means the point sits on the boundary between clusters. A negative score means the point may belong to the wrong cluster. Averaging silhouette scores across all points gives an overall measure of clustering techniques quality.
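
For each point, the score is (b - a) / max(a, b), where a is the mean distance to the other members of its own cluster and b is the mean distance to the members of the nearest other cluster. The sketch below computes it directly. The `silhouette_scores` name and the one-dimensional toy data are assumptions, and the code expects at least two clusters.

```python
import math

def silhouette_scores(points, labels):
    """Per-point silhouette values; requires at least two clusters."""
    clusters = {}
    for p, lab in zip(points, labels):
        clusters.setdefault(lab, []).append(p)
    scores = []
    for p, lab in zip(points, labels):
        own = clusters[lab]
        if len(own) == 1:
            scores.append(0.0)  # common convention for single-point clusters
            continue
        # a: mean distance to the other members of the same cluster
        a = sum(math.dist(p, q) for q in own) / (len(own) - 1)
        # b: mean distance to the members of the nearest other cluster
        b = min(
            sum(math.dist(p, q) for q in other) / len(other)
            for other_lab, other in clusters.items()
            if other_lab != lab
        )
        scores.append((b - a) / max(a, b))
    return scores

points = [(0.0,), (1.0,), (10.0,), (11.0,)]
good = silhouette_scores(points, [0, 0, 1, 1])  # sensible grouping
bad = silhouette_scores(points, [0, 1, 0, 1])   # deliberately wrong grouping
print(sum(good) / len(good))  # close to 1
print(sum(bad) / len(bad))    # negative
```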

Q33. What is the Davies-Bouldin index?

The Davies-Bouldin index pairs each cluster with its most similar neighbor, takes the ratio of their combined within-cluster scatter to the distance between their centroids, and averages that worst-case ratio over all clusters. Lower values indicate better-defined clusters. It penalizes clustering solutions where clusters are spread out and close together simultaneously. It is computationally inexpensive and easy to interpret, making it a practical choice for comparing clustering techniques on the same dataset.
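
A direct computation makes the definition easier to remember. In this sketch, scatter is the mean distance from a cluster's points to its centroid; the `davies_bouldin` name and the toy data are illustrative assumptions.

```python
import math

def davies_bouldin(points, labels):
    """Average, over clusters, of the worst scatter-to-separation ratio."""
    clusters = {}
    for p, lab in zip(points, labels):
        clusters.setdefault(lab, []).append(p)
    centroids = {
        lab: tuple(sum(dim) / len(members) for dim in zip(*members))
        for lab, members in clusters.items()
    }
    # Scatter: mean distance from each member to its cluster centroid.
    scatter = {
        lab: sum(math.dist(p, centroids[lab]) for p in members) / len(members)
        for lab, members in clusters.items()
    }
    worst_ratios = []
    for i in clusters:
        worst_ratios.append(max(
            (scatter[i] + scatter[j]) / math.dist(centroids[i], centroids[j])
            for j in clusters if j != i
        ))
    return sum(worst_ratios) / len(worst_ratios)

far_apart = [(0.0,), (1.0,), (10.0,), (11.0,)]
close_together = [(0.0,), (1.0,), (2.0,), (3.0,)]
print(davies_bouldin(far_apart, [0, 0, 1, 1]))       # 0.1
print(davies_bouldin(close_together, [0, 0, 1, 1]))  # 0.5
```

Both datasets have equally tight clusters, so the difference in the index comes entirely from how far apart the centroids are.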

Q34. How do external validation metrics work?

External validation metrics compare clustering results to known ground truth labels. The Adjusted Rand Index measures agreement between two cluster assignments while correcting for chance. Normalized Mutual Information measures shared information between cluster labels and true labels. The Fowlkes-Mallows Index measures the geometric mean of pairwise precision and recall. These metrics require labeled data, which is often unavailable in real clustering scenarios.
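
As an example of an external metric, the Adjusted Rand Index can be computed from pair counts in a contingency table. The function below is a minimal sketch with an assumed name, and it returns 1.0 for the degenerate case where the formula's denominator is zero.

```python
from collections import Counter
from math import comb

def adjusted_rand_index(labels_true, labels_pred):
    """Chance-corrected agreement between two labelings of the same points."""
    n = len(labels_true)
    # Pairs of points that share a cluster in both labelings.
    sum_cells = sum(comb(c, 2) for c in Counter(zip(labels_true, labels_pred)).values())
    # Pairs that share a cluster within each labeling separately.
    sum_true = sum(comb(c, 2) for c in Counter(labels_true).values())
    sum_pred = sum(comb(c, 2) for c in Counter(labels_pred).values())
    expected = sum_true * sum_pred / comb(n, 2)  # agreement expected by chance
    max_index = (sum_true + sum_pred) / 2
    if max_index == expected:  # degenerate case, e.g. one cluster in both labelings
        return 1.0
    return (sum_cells - expected) / (max_index - expected)

truth = [0, 0, 1, 1]
print(adjusted_rand_index(truth, [1, 1, 0, 0]))  # same grouping, different label names
print(adjusted_rand_index(truth, [0, 1, 0, 1]))  # grouping that contradicts the truth
```

The first call shows why the metric suits clustering: cluster IDs are arbitrary, so only the grouping matters, not the label names.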

Q35. What are Gaussian Mixture Models and how do they relate to clustering techniques?

Gaussian Mixture Models (GMMs) assume the data comes from a mixture of multiple Gaussian distributions. Each Gaussian component represents one cluster. The Expectation-Maximization algorithm fits the model by iteratively estimating the parameters of each Gaussian and the probability that each point belongs to each component. GMMs produce soft cluster assignments. They handle elliptical cluster shapes that K-Means cannot capture.

Q36. What is spectral clustering?

Spectral clustering uses the eigenvectors of a graph Laplacian built from a similarity matrix of the data. A small number of those eigenvectors give a lower-dimensional embedding in which traditional clustering techniques like K-Means can separate complex shapes. It handles non-convex clusters that confuse centroid-based methods. The approach requires computing pairwise similarities, which becomes expensive for very large datasets. It is highly effective on graph-structured data.

Q37. What is the Expectation-Maximization algorithm in the context of clustering?

The EM algorithm alternates between two steps. The Expectation step computes the probability that each data point belongs to each cluster given the current model parameters. The Maximization step updates the model parameters to maximize the likelihood of the data given these assignments. EM converges to a local maximum of the likelihood function. Multiple restarts help find better solutions for complex clustering techniques like GMMs.
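
The two steps can be sketched for a one-dimensional Gaussian mixture. This is a bare-bones illustration under stated assumptions: the `em_gmm_1d` function, the starting parameters, and the sample data are all made up, and there are no safeguards beyond a small variance floor.

```python
import math

def normal_pdf(x, mean, var):
    return math.exp(-((x - mean) ** 2) / (2 * var)) / math.sqrt(2 * math.pi * var)

def em_gmm_1d(data, means, variances, weights, n_iter=50):
    """Fit a 1-D Gaussian mixture with EM, starting from the given parameters."""
    means, variances, weights = list(means), list(variances), list(weights)
    k = len(means)
    for _ in range(n_iter):
        # E-step: probability that each component generated each point.
        resp = []
        for x in data:
            dens = [weights[j] * normal_pdf(x, means[j], variances[j]) for j in range(k)]
            total = sum(dens)
            resp.append([d / total for d in dens])
        # M-step: re-estimate each component from the responsibility-weighted data.
        for j in range(k):
            nj = sum(r[j] for r in resp)
            weights[j] = nj / len(data)
            means[j] = sum(r[j] * x for r, x in zip(resp, data)) / nj
            var = sum(r[j] * (x - means[j]) ** 2 for r, x in zip(resp, data)) / nj
            variances[j] = max(var, 1e-6)  # floor to avoid a collapsing component
    return means, variances, weights

data = [1.0, 1.2, 0.8, 1.1, 5.0, 5.2, 4.8, 5.1]
means, variances, weights = em_gmm_1d(
    data, means=[0.0, 6.0], variances=[1.0, 1.0], weights=[0.5, 0.5]
)
print(means)    # roughly one mean per group of values
print(weights)  # roughly equal mixing weights
```

A different starting point can lead to a different local maximum, which is why restarts matter.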

Q38. How do you scale clustering techniques to very large datasets?

Mini-batch K-Means processes random subsets of the data in each iteration, dramatically reducing memory and computation requirements. BIRCH builds a compact summary tree of the data before clustering, enabling hierarchical clustering on large datasets. Approximate nearest neighbor methods speed up density-based clustering techniques. Distributed computing frameworks like Apache Spark implement clustering algorithms across clusters of machines for truly massive scale.

Q39. What is the role of feature scaling in clustering techniques?

Distance-based clustering techniques treat all features as equally important by default. Features with large numerical ranges dominate distance calculations. A feature ranging from 0 to 10,000 overwhelms a feature ranging from 0 to 1. Standardization scales each feature to zero mean and unit variance. Min-max normalization scales features to a fixed range. Always apply feature scaling before running distance-based clustering techniques unless the raw scale carries genuine domain meaning.
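
Both scaling methods fit in a few lines. The function names and the income column below are illustrative assumptions, and each function assumes the column is not constant, since a constant column would cause division by zero.

```python
import statistics

def standardize(column):
    """Rescale to zero mean and unit variance (z-scores)."""
    mean = statistics.fmean(column)
    std = statistics.pstdev(column)
    return [(v - mean) / std for v in column]

def min_max(column):
    """Rescale linearly to the range [0, 1]."""
    low, high = min(column), max(column)
    return [(v - low) / (high - low) for v in column]

# Hypothetical feature on a large raw scale.
income = [20000, 40000, 60000, 80000]
print(standardize(income))
print(min_max(income))
```

After either transformation, this feature sits on a scale comparable to one that already ranges from 0 to 1, so it no longer dominates distance calculations.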

Q40. How do you interpret and communicate clustering results to non-technical stakeholders?

Start by naming each cluster based on its dominant characteristics. Use simple descriptive labels rather than technical terms. Visualize clusters using two-dimensional projections from PCA or UMAP. Show sample data points from each cluster to illustrate what each group looks like in practice. Quantify each cluster by size and key feature statistics. Connect the clustering results to a business outcome. Practical interpretation is where clustering techniques deliver real organizational value.

Unsupervised Learning Algorithms in Data Science

Clustering techniques fall under the broader category of unsupervised learning. Other unsupervised methods include dimensionality reduction techniques like PCA, t-SNE, and UMAP, as well as association rule mining and anomaly detection. Data science interview preparation benefits from understanding how clustering techniques fit within the full landscape of unsupervised methods and when each approach applies.

Customer Segmentation Using Clustering

Customer segmentation is the most commercially prominent application of clustering techniques. Retail and e-commerce companies group customers by purchase behavior, frequency, and monetary value using RFM analysis combined with K-Means or hierarchical clustering. Marketing teams use segments to personalize campaigns. Product teams use them to prioritize features. Understanding this application strengthens both technical interviews and business-facing discussions.

Dimensionality Reduction Before Clustering

High-dimensional data degrades the performance of most distance-based clustering techniques. PCA reduces dimensionality while preserving as much variance as possible. UMAP aims to preserve local structure along with some global structure and is often used to visualize clusters in two or three dimensions. t-SNE is popular for visualization, but it distorts distances and densities, so it is generally a poor preprocessing step for actual clustering. Consider dimensionality reduction before clustering when features number in the dozens or more, and check that cluster quality actually improves.

Clustering Techniques in Natural Language Processing

Text data requires specialized preprocessing before applying clustering techniques. Document embeddings from models like BERT, Sentence Transformers, or Word2Vec convert text to numerical vectors. K-Means and HDBSCAN then group similar documents. Topic modeling with Latent Dirichlet Allocation offers an alternative probabilistic approach. News categorization, customer feedback analysis, and content recommendation all rely on text clustering techniques at scale.

Frequently Asked Questions on Clustering Techniques

Which clustering technique is best for beginners?

K-Means is the best starting point for beginners learning clustering techniques. It is simple to understand, easy to implement, and available in every major data science library. The elbow method for choosing K is intuitive. Scikit-learn’s KMeans class lets beginners apply the algorithm with just a few lines of Python. Building proficiency with K-Means first makes learning advanced clustering techniques much faster.

Do clustering techniques require labeled data?

No. Clustering techniques work without any labels. This is their defining characteristic. The algorithm discovers groupings purely from the structure of the input features. Labeled data is only needed when you want to evaluate clustering quality using external validation metrics like Adjusted Rand Index. For all actual clustering work, labels are neither required nor used.

How many clusters should I use?

No universally correct answer exists. The elbow method, silhouette scores, and domain knowledge together guide the choice. The elbow method identifies a K where adding more clusters produces diminishing improvements in compactness. The silhouette score measures cluster cohesion and separation. Domain knowledge often provides the most practical guidance. A marketing team may know that three customer segments make business sense regardless of what the algorithm suggests.

What is the difference between DBSCAN and K-Means?

K-Means requires specifying the number of clusters in advance. DBSCAN discovers the number automatically from data density. K-Means assumes spherical clusters. DBSCAN handles arbitrary shapes. K-Means assigns every point to a cluster. DBSCAN labels sparse points as noise rather than forcing them into a group. For clean, well-separated spherical data, K-Means is faster. For messy, irregularly shaped data, DBSCAN is more effective.

Can clustering techniques be used for anomaly detection?

Yes. Clustering techniques identify outliers as points that do not belong to any dense cluster. DBSCAN labels these points as noise directly. K-Means identifies anomalies as points with very high distance to their nearest centroid. Isolation Forest and Local Outlier Factor are purpose-built anomaly detection algorithms, but clustering techniques offer an alternative approach that integrates naturally into exploratory data analysis workflows.

Conclusion

These 40 questions cover every important dimension of clustering techniques. The progression moves from foundational concepts to algorithm mechanics, from evaluation metrics to real-world application. Work through every question honestly. Your weakest answers reveal exactly where to invest your study time next.

Clustering techniques reward practitioners who go beyond memorization. Run K-Means on a real dataset. Visualize the results. Try DBSCAN on the same data and compare outputs. Apply hierarchical clustering and read the dendrogram. Hands-on experience transforms theoretical knowledge into confident interview performance.

The best data scientists view clustering techniques as a discovery tool, not just an algorithm. They ask what the clusters reveal about the business problem. They question whether the clusters generalize. They challenge their own assumptions about what makes a good grouping. That analytical mindset impresses senior interviewers more than perfect algorithm recall.

Data science in 2026 demands both breadth and depth. Clustering techniques sit at the intersection of statistics, machine learning, and domain expertise. Mastering them opens doors in every industry. Customer analytics, fraud detection, healthcare, genomics, and natural language processing all need professionals who understand data grouping at a deep level.

Use this blog as a study anchor. Return to it before every interview. Share it with colleagues preparing for data science roles. The 40 questions here represent the core of what the industry tests. Master every answer. Build on each concept with real projects. Clustering techniques will become one of your strongest professional assets.