Introduction

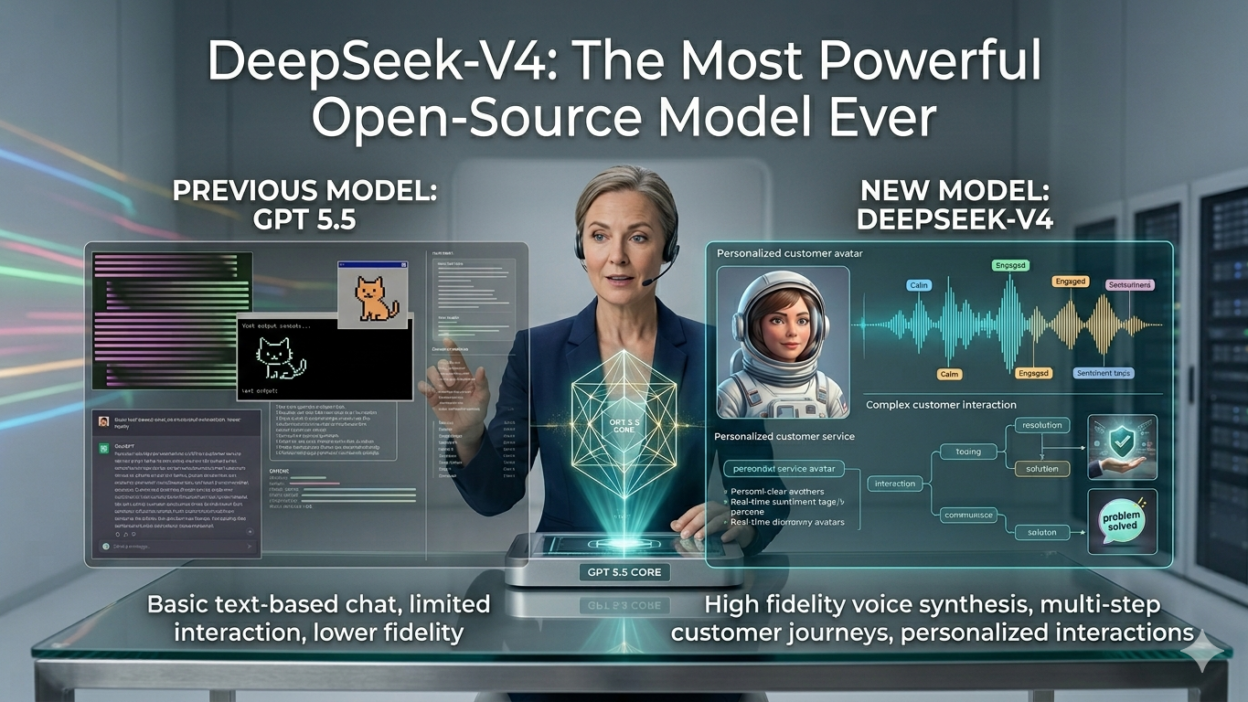

TL;DR: The open-source AI world just shifted. DeepSeek-V4 arrived and quietly rewrote what people believed free models could do. This is a full breakdown of what it is, how it works, and why it matters.

The Open-Source AI World Has a New Leader

Most AI breakthroughs arrive wrapped in hype. Companies hold launch events. Influencers post reaction videos. Benchmarks get cherry-picked. The actual product often disappoints. DeepSeek-V4 broke that pattern entirely.

No giant keynote accompanied its release. No celebrity endorsements filled the feed. A research paper dropped. A model checkpoint followed. Within days, developers, researchers, and engineers across the world started testing it. The verdict came back consistent: this model is genuinely different.

DeepSeek-V4 comes from DeepSeek AI, a Chinese research lab backed by the quantitative trading firm High-Flyer Capital. The lab operates with a serious research focus. Their previous releases showed promise. This one delivers at a level the open-source community had never seen before from any freely available model.

The model competes directly with frontier closed models. It handles reasoning, coding, mathematics, and multilingual tasks at levels that rival GPT-5 and Claude 3.7 Sonnet on key benchmarks. It does all of this while remaining open-source. That combination redefines what the open-source AI ecosystem can achieve.

This post covers everything you need to know about DeepSeek-V4. Architecture, benchmarks, practical use cases, pricing, limitations, and the bigger picture all get proper attention.

What Exactly Is DeepSeek-V4?

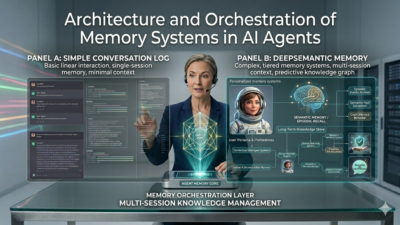

DeepSeek-V4 is a large language model built on a Mixture-of-Experts (MoE) architecture. The full model contains 671 billion parameters. Only 37 billion of them, roughly 5.5% of the total, activate for any given token. That design keeps computation efficient without sacrificing output quality.

MoE architecture is not new. What makes DeepSeek-V4 unique is how efficiently it implements this approach. DeepSeek AI developed a Multi-head Latent Attention mechanism. This mechanism reduces the memory required during inference significantly. Running the model at scale becomes more practical for organizations without massive GPU clusters.

Training used 14.8 trillion tokens. That volume exceeds most publicly known training runs for open-source models. The dataset spans code, mathematics, science, general web text, and multilingual content. That breadth gives the model strong generalization across domains.

The training process also introduced an innovative approach to reinforcement learning. DeepSeek AI refined the model’s reasoning capabilities through a multi-stage post-training pipeline. This pipeline improves instruction following, reduces harmful outputs, and sharpens accuracy on complex tasks.

Total parameters: 671B

Active per token: 37B

Training tokens: 14.8T

Context window: 128K

The context window reaches 128,000 tokens. That length supports long document processing, multi-file code analysis, and extended research conversations without losing coherence. The model handles all of this within a single session without truncation issues on most supported deployments.
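To make the 128K figure concrete, here is a minimal sketch that checks whether a batch of documents is likely to fit in the window. The four-characters-per-token ratio and the output reserve are assumptions for illustration, not properties of the model's tokenizer; a real deployment should count tokens with the tokenizer it actually uses.

```python
# Rough check of whether documents fit in a 128K-token context window.
# CHARS_PER_TOKEN is an assumed heuristic, not the model's real tokenizer.

CONTEXT_WINDOW_TOKENS = 128_000  # figure quoted in this article
CHARS_PER_TOKEN = 4              # assumption: rough average for English text


def estimate_tokens(text: str) -> int:
    """Estimate the token count from character length (ceiling division)."""
    return -(-len(text) // CHARS_PER_TOKEN)


def fits_in_context(documents: list[str], reserved_for_output: int = 4_000) -> bool:
    """Return True if all documents plus an output reserve fit in the window."""
    used = sum(estimate_tokens(doc) for doc in documents)
    return used + reserved_for_output <= CONTEXT_WINDOW_TOKENS


if __name__ == "__main__":
    papers = ["x" * 200_000, "y" * 250_000]  # two stand-in documents
    print(fits_in_context(papers))
```

Reserving part of the window for the response matters because the prompt and the generated output share the same token budget.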

All weights for DeepSeek-V4 are publicly available. You can download them. You can run them locally. You can fine-tune them on your own data. That openness represents a fundamental shift in how frontier-level AI capability can spread across the research and developer communities.

Benchmark Performance: How Good Is It Really?

Reasoning and mathematics

Mathematical reasoning benchmarks separate strong models from merely capable ones. DeepSeek-V4 scores 90.2 on MATH-500, a 500-problem subset of the MATH competition benchmark. That score places it above GPT-4o and within range of frontier-class reasoning models.

AIME 2024 results tell a similar story. This benchmark draws on the American Invitational Mathematics Examination, a competition for high school students who qualify through earlier contest rounds. DeepSeek-V4 solves these problems at a rate that matches or exceeds every other publicly available open-source model. Several closed commercial models also fall below its performance here.

Multi-step reasoning chains stay coherent. The model does not lose track of intermediate steps in complex derivations. That consistency across long reasoning chains is a significant achievement.

Coding tasks and software engineering

Developers care most about code. DeepSeek-V4 scores 65.2 on HumanEval, a standard benchmark for code generation accuracy. It also scores 49.6 on SWE-Bench Verified, which tests the model’s ability to resolve real GitHub issues in large software repositories.

Those numbers translate to real-world performance. The model generates correct, runnable code on moderately complex tasks at a high rate. It handles Python, JavaScript, TypeScript, Rust, C++, and SQL with strong competence. Multi-file reasoning and architecture-level code suggestions also work at a level that surprises experienced engineers.

Code review tasks perform equally well. Paste a function and ask for bugs. The model finds them. Ask for optimization suggestions. The suggestions reflect genuine software engineering knowledge, not surface-level pattern matching.

Language understanding and knowledge

MMLU measures broad knowledge across 57 academic subjects. DeepSeek-V4 scores 88.5. That places it in direct competition with top closed models. The breadth of subject coverage matters here. History, biology, law, economics, and engineering all get handled with the same reliability.

Multilingual performance is a particular strength. The model handles Chinese, English, French, German, Spanish, and several other languages with high accuracy. DeepSeek AI’s Chinese-language training data gives it an edge in Chinese-language tasks that many Western-developed models cannot match.

“On benchmark after benchmark, DeepSeek-V4 lands where no open-source model has landed before — right next to the best closed models in the world.”

Architecture Deep Dive: What Makes It Work

Mixture-of-Experts design

MoE architecture routes each token through a selected subset of expert networks. Not all parameters activate for every token. DeepSeek-V4 uses 256 routed experts with 8 experts active per token during inference. That routing strategy keeps inference costs manageable despite the massive total parameter count.
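The routing step described above can be sketched in a few lines. This is a generic softmax top-k router, assumed for illustration; the article does not specify DeepSeek's actual scoring function or load-balancing scheme, and the random logits stand in for the output of a learned router network.

```python
import math
import random

NUM_EXPERTS = 256  # routed experts, per this article
TOP_K = 8          # experts active per token, per this article


def softmax(scores):
    """Numerically stable softmax over a list of floats."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]


def route_token(router_logits, top_k=TOP_K):
    """Pick the top_k experts and renormalize their gate weights to sum to 1."""
    probs = softmax(router_logits)
    chosen = sorted(range(len(probs)), key=lambda i: probs[i], reverse=True)[:top_k]
    mass = sum(probs[i] for i in chosen)
    return {i: probs[i] / mass for i in chosen}


if __name__ == "__main__":
    rng = random.Random(0)
    # Stand-in logits; a real router computes these from the token's hidden state.
    logits = [rng.gauss(0.0, 1.0) for _ in range(NUM_EXPERTS)]
    gates = route_token(logits)
    print(sorted(gates))  # indices of the experts this token is sent to
    print(f"{TOP_K / NUM_EXPERTS:.1%} of routed experts active for this token")
```

The token's output would then be the gate-weighted sum of the chosen experts' outputs, so the other 248 experts cost nothing for that token.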

The router network learned to allocate expert capacity intelligently during training. Different experts specialize in different domains. Mathematical reasoning activates different experts than creative writing. Code generation draws on yet another expert cluster. This specialization improves output quality on domain-specific tasks.

Multi-head Latent Attention

Standard attention mechanisms in large models consume significant memory at inference time. DeepSeek AI developed Multi-head Latent Attention specifically to reduce this bottleneck. The mechanism compresses the key-value cache used during attention computation. Memory requirements drop without degrading the model’s ability to attend to long contexts.
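A back-of-the-envelope comparison shows why compressing the key-value cache matters at long context. Every dimension below (layer count, head count, head size, latent size) is a hypothetical value chosen for illustration, not a published DeepSeek-V4 figure, and the sketch ignores implementation details such as any extra positional components a real latent cache might store.

```python
# Compare KV-cache size for standard multi-head attention against a
# compressed per-token latent. All model dimensions here are hypothetical.


def mha_cache_bytes(seq_len, n_layers, n_heads, head_dim, bytes_per_value=2):
    """Standard cache: one key and one value vector per head, layer, and token."""
    return 2 * seq_len * n_layers * n_heads * head_dim * bytes_per_value


def latent_cache_bytes(seq_len, n_layers, latent_dim, bytes_per_value=2):
    """Compressed cache: one shared latent vector per layer and token."""
    return seq_len * n_layers * latent_dim * bytes_per_value


if __name__ == "__main__":
    seq_len = 128_000  # context length quoted in this article
    n_layers, n_heads, head_dim, latent_dim = 60, 128, 128, 512  # assumptions

    full = mha_cache_bytes(seq_len, n_layers, n_heads, head_dim)
    compact = latent_cache_bytes(seq_len, n_layers, latent_dim)
    print(f"standard cache: {full / 1e9:.1f} GB")
    print(f"latent cache:   {compact / 1e9:.1f} GB")
    print(f"reduction:      {full / compact:.0f}x")
```

Under these assumed dimensions the ratio depends only on `2 * n_heads * head_dim / latent_dim`, so the saving holds at any sequence length.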

This innovation matters practically. Running DeepSeek-V4 locally on high-end consumer hardware becomes more feasible. Organizations without access to expensive cloud GPU instances can still deploy the model at reasonable quality levels using quantized versions.

FP8 training and efficiency innovations

DeepSeek AI trained DeepSeek-V4 using FP8 mixed precision. This approach reduces the computational cost per training step significantly. The entire training run cost approximately $5.5 million USD. That figure is remarkable. Training runs for comparable closed models cost an estimated $50 to $100 million or more. That is a gap of roughly 9x to 18x.

This cost efficiency has implications beyond just one model. It demonstrates that frontier-level AI training is achievable at a fraction of previously assumed costs. That changes the competitive dynamics of AI development globally.

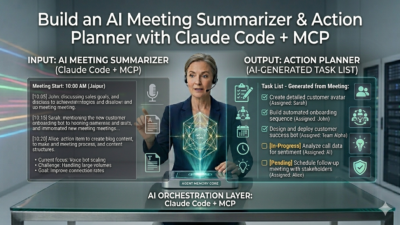

Real-World Use Cases for DeepSeek-V4

Software development and engineering

Engineering teams adopt DeepSeek-V4 for code generation, review, documentation, and debugging. The model handles large codebases with context-aware suggestions. It understands project structure when given sufficient context. Teams report fewer hallucinated APIs and more runnable first-attempt outputs compared to previous open-source alternatives.

Self-hosted deployment appeals to security-conscious organizations. Companies that cannot send source code to external APIs use DeepSeek-V4 on private infrastructure. The open weights enable full control over data handling. That privacy advantage drives adoption in finance, healthcare, and government contexts.

Research and academic work

Researchers use DeepSeek-V4 for literature review summarization, hypothesis generation, and data analysis support. The 128K context window allows processing entire research papers within a single session. Multi-paper synthesis tasks produce coherent cross-source summaries. That capability saves significant time on literature review tasks.

Scientific domain knowledge runs deep in the model. Physics, chemistry, biology, and computer science questions get handled with accuracy that competes with specialized domain models. Graduate-level questions receive graduate-level responses at a consistency that surprises most academic users on first encounter.

Enterprise content and knowledge work

Knowledge workers use DeepSeek-V4 for drafting reports, creating summaries, answering complex internal queries, and processing documents. Self-hosting allows enterprises to connect the model to internal databases and document stores without sending proprietary data to third-party servers.

Retrieval-augmented generation setups pair naturally with the model. Connect a document store. Add a retrieval layer. The model synthesizes retrieved information into coherent, accurate responses. Internal knowledge management applications built on this architecture perform strongly.
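The retrieve-then-synthesize flow can be sketched without any external services. The keyword-overlap scoring here is a deliberately simple stand-in for a real embedding-based retriever, and the function names and prompt wording are hypothetical; the resulting prompt would be sent to whichever DeepSeek-V4 deployment you run.

```python
# Minimal retrieval-augmented generation sketch: score documents by keyword
# overlap with the query, then assemble a grounded prompt for the model.


def tokenize(text):
    """Lowercase words with surrounding punctuation stripped."""
    return {word.strip(".,?!:;").lower() for word in text.split()} - {""}


def retrieve(query, documents, top_n=2):
    """Return the top_n documents sharing the most words with the query."""
    query_words = tokenize(query)
    ranked = sorted(documents, key=lambda d: len(query_words & tokenize(d)), reverse=True)
    return ranked[:top_n]


def build_prompt(query, passages):
    """Number the retrieved passages and place them ahead of the question."""
    context = "\n".join(f"[{i + 1}] {p}" for i, p in enumerate(passages))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {query}"
    )


if __name__ == "__main__":
    store = [
        "Expense reports are due on Friday.",
        "The VPN requires two-factor login.",
        "Friday lunch is catered.",
    ]
    question = "When are expense reports due?"
    print(build_prompt(question, retrieve(question, store, top_n=1)))
```

Numbering the passages makes it easy to ask the model to cite which passage supports each claim.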

Education and tutoring applications

Educators and EdTech companies explore DeepSeek-V4 for intelligent tutoring systems. The model explains concepts at adjustable complexity levels. It generates practice problems, provides step-by-step solutions, and adapts explanation depth to follow-up questions. Mathematics and science tutoring use cases benefit most from the model’s strong quantitative reasoning capabilities.

Accessing and Running DeepSeek-V4

DeepSeek’s own API and chat interface

DeepSeek AI provides direct API access to DeepSeek-V4 through their platform at api.deepseek.com. The pricing is aggressive. Input tokens cost $0.27 per million. Output tokens cost $1.10 per million. Those rates sit significantly below comparable closed-model APIs. Developers building applications find the cost economics very attractive.
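To see what those rates mean for a budget, here is a small estimator using the per-million-token prices quoted above. The workload numbers in the example are hypothetical, and real bills may differ if the provider applies caching discounts or changes its pricing.

```python
# Estimate API spend from the per-million-token rates quoted in this article.

INPUT_USD_PER_MILLION = 0.27
OUTPUT_USD_PER_MILLION = 1.10


def estimate_cost_usd(input_tokens, output_tokens):
    """Return the estimated cost in USD for the given token counts."""
    return (
        input_tokens * INPUT_USD_PER_MILLION
        + output_tokens * OUTPUT_USD_PER_MILLION
    ) / 1_000_000


if __name__ == "__main__":
    # Hypothetical workload: 10,000 requests, 2,000 input and 500 output tokens each.
    requests = 10_000
    cost = estimate_cost_usd(requests * 2_000, requests * 500)
    print(f"${cost:.2f}")
```

For that hypothetical workload the total comes to about $10.90, split almost evenly between input and output because output tokens cost roughly four times as much.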

A web chat interface at chat.deepseek.com allows non-technical users to interact with the model without any setup. Free access is available with rate limits. Paid API access removes limits and adds additional controls. The chat interface supports code rendering, mathematical notation, and document uploads.

Self-hosting on your own infrastructure

The full 671B parameter model requires significant GPU memory for deployment. Running FP16 weights demands approximately 1.3TB of GPU VRAM across a cluster. That scale suits large organizations with serious infrastructure. Smaller deployments use quantized versions. INT8 quantization roughly halves the weight footprint to about 670GB, which puts the model within reach of teams with 8 to 16 high-end GPUs, depending on per-GPU memory.
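The memory figures follow directly from parameter count times bytes per parameter. The sketch below reproduces that arithmetic for the weights only; the 80GB-per-GPU figure is an assumption, and a real deployment also needs headroom for the KV cache and activations.

```python
import math

# Weight-only memory estimate: parameters x bytes per parameter.
# Excludes KV cache, activations, and framework overhead.

TOTAL_PARAMS = 671e9  # parameter count quoted in this article
BYTES_PER_PARAM = {"FP16": 2.0, "INT8": 1.0}


def weight_memory_gb(params, bytes_per_param):
    """Memory for the weights alone, in decimal gigabytes."""
    return params * bytes_per_param / 1e9


def min_gpus(memory_gb, gpu_memory_gb=80.0):
    """Smallest GPU count whose combined memory holds the weights."""
    return math.ceil(memory_gb / gpu_memory_gb)


if __name__ == "__main__":
    for precision, width in BYTES_PER_PARAM.items():
        gb = weight_memory_gb(TOTAL_PARAMS, width)
        print(f"{precision}: {gb:.0f} GB, at least {min_gpus(gb)} GPUs at 80 GB each")
```

With the assumed 80GB cards, INT8 weights alone need nine GPUs, so an eight-GPU node only works with larger-memory accelerators or more aggressive quantization.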

Tools like vLLM, Ollama, and LM Studio support deployment of quantized DeepSeek-V4 variants. Smaller distilled versions of the model also exist. DeepSeek AI released distilled models down to 1.5B parameters. These smaller models sacrifice some capability but run on consumer hardware including high-end laptops and workstations.

Third-party API providers

Multiple third-party providers host DeepSeek-V4 on their infrastructure. Together AI, Fireworks AI, and Groq all offer API access. Groq’s LPU architecture delivers notably fast inference speeds. For latency-sensitive applications, Groq-hosted DeepSeek-V4 variants can produce responses significantly faster than GPU-based deployments.

Limitations Worth Knowing

Honest coverage of any model requires examining its limits. DeepSeek-V4 has clear strengths. It also has areas where caution applies.

Content moderation differs from Western models. The model reflects training choices made by a Chinese research lab. Certain political topics receive responses shaped by those choices. Users working in politically sensitive domains should evaluate outputs carefully and understand this context before deployment.

Real-time information is unavailable without external tooling. Like all language models, DeepSeek-V4 has a knowledge cutoff date. Access to current web content requires RAG setups or tool-use integrations. The base model cannot fetch current news or live data independently.

Full model deployment demands serious infrastructure. The compute requirements for running the 671B parameter full model exclude most individuals and small organizations. Quantized and distilled versions provide accessible entry points but represent meaningfully reduced capability compared to the full model.

Creative writing at the literary fiction level shows the same ceiling other large models hit. Technical tasks, reasoning, and factual content stay strong. Highly stylized creative prose does not consistently reach the level of top human writers. For commercial creative content, the model produces solid work. Literary experimentation may disappoint.

Why DeepSeek-V4 Changes the AI Landscape

The release of DeepSeek-V4 sent a clear signal to the entire AI industry. Frontier-level capability no longer requires a closed commercial model. Open weights, available to anyone, can now compete with the best proprietary systems in the world on most benchmarks that matter.

That shift carries enormous implications. Organizations previously locked into expensive API dependencies now have a credible alternative. Researchers who could not afford commercial model access now work with a tool of similar capability. Governments building sovereign AI infrastructure have a viable open foundation to build upon.

The training cost story deserves particular attention. Achieving this quality at $5.5 million total training cost challenges every assumption about the capital requirements for frontier AI development. Smaller well-funded research labs and universities now sit closer to the capability frontier than anyone expected two years ago.

Competition benefits everyone. Closed model providers face direct pressure. Pricing across commercial APIs has already moved lower in response to DeepSeek-V4's release. That competitive pressure accelerates improvement and reduces costs for every developer and organization building with AI tools.

Frequently Asked Questions

Is DeepSeek-V4 truly open-source?

The model weights are publicly available for download and use. The license permits research and commercial deployment with some restrictions. It is open-weight rather than fully open-source in the purest sense. Training code and full dataset details are not completely public. For most practical purposes, developers treat it as open-source and self-host it freely.

How does DeepSeek-V4 compare to GPT-5?

On many benchmark tasks including mathematics, coding, and general knowledge, DeepSeek-V4 performs at a comparable level to GPT-5. On certain reasoning-heavy tasks, GPT-5 still holds an edge. On cost and accessibility, DeepSeek-V4 wins clearly. The practical performance gap for most business and developer use cases is much smaller than the gap in accessibility and pricing.

Can I run DeepSeek-V4 on my personal computer?

The full 671B parameter model requires enterprise-grade GPU infrastructure. Smaller distilled versions are available. DeepSeek AI released distilled models starting from 1.5B parameters up through 70B parameters. The 7B and 14B distilled versions run on high-end consumer GPUs. The 1.5B version runs on most modern laptops using tools like Ollama or LM Studio.

How much does DeepSeek-V4 API access cost?

DeepSeek AI charges $0.27 per million input tokens and $1.10 per million output tokens through their official API. Those rates are significantly lower than comparable closed-model APIs. Third-party providers offer varying rates. Groq-hosted versions prioritize speed. Together AI and Fireworks AI offer competitive pricing with high availability. All options undercut major commercial providers on cost.

Is DeepSeek-V4 safe to use for enterprise applications?

For technical tasks like coding, data analysis, and document processing, DeepSeek-V4 performs reliably and safely. Content moderation differs from Western commercial models in politically sensitive areas. Organizations should evaluate outputs in their specific domain before wide deployment. Self-hosting eliminates data privacy concerns related to external API transmission. A staged rollout with human review at critical steps serves enterprise deployments well.

What programming languages does DeepSeek-V4 support?

DeepSeek-V4 handles Python, JavaScript, TypeScript, Java, C, C++, Rust, Go, Ruby, PHP, Swift, Kotlin, and SQL with strong competence. Python and JavaScript performance rates highest based on benchmark data and community reports. Less common languages also receive reasonable support. For production code generation in any major language, verification and testing remain essential steps regardless of model capability.

Does DeepSeek-V4 support multimodal inputs?

The base DeepSeek-V4 language model is text-focused. DeepSeek AI also released DeepSeek-VL2, a separate multimodal model that handles image inputs. Some deployment platforms combine these capabilities within a single interface. Check the specific deployment you use for multimodal support. The language model alone handles text, code, and document inputs at the highest capability level.

How frequently does DeepSeek update its models?

DeepSeek AI has maintained an active release cadence. Major model releases arrived in late 2023, 2024, and 2025. Each release brought substantial capability improvements over the prior version. The research team publishes detailed technical reports with each release. Following their official channels provides the earliest notice of new releases and capability updates.

Conclusion: The open-source era of frontier AI starts here

DeepSeek-V4 represents a genuine turning point. Not a marginal upgrade. Not a marketing story. A real capability leap that changes the practical options available to developers, researchers, and organizations worldwide.

The architecture choices are smart and efficient. MoE design with Multi-head Latent Attention keeps inference practical. FP8 training proved frontier capability does not require astronomical compute budgets. Those technical achievements make DeepSeek-V4 both impressive in output and accessible in deployment.

Benchmark results back the claims. Mathematics, coding, language understanding, and multi-step reasoning all land at levels no open-source model reached before. Direct comparisons with GPT-5 and Claude 3.7 Sonnet show DeepSeek-V4 operating at the same tier on most evaluated tasks.

Real-world use cases span software engineering, academic research, enterprise content work, and education. Self-hosting capability gives security-conscious organizations full data control. Aggressive API pricing gives developers a cost-efficient path to production deployment.

Limitations exist and deserve acknowledgment. Content moderation differences, infrastructure demands for the full model, and real-time data constraints all apply. Knowing these limits helps organizations deploy the model appropriately rather than expecting universal perfection.

The competitive impact is already visible. API pricing across the industry moved lower after DeepSeek-V4's release. Research labs worldwide recalibrated their expectations about training cost requirements. The entire AI development landscape shifted because one lab built something exceptional and shared it freely.

If you work with AI in any capacity, DeepSeek-V4 belongs on your radar. Test it on your actual tasks. The results will speak for themselves.