Introduction

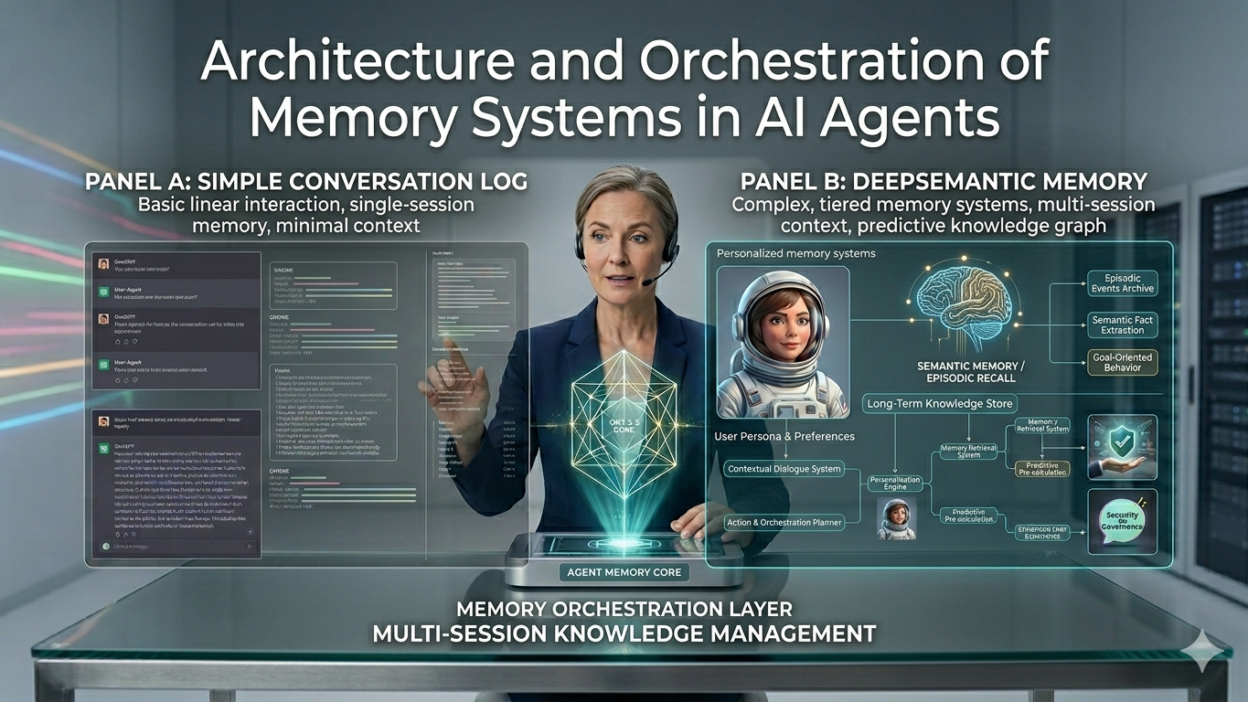

TL;DR: AI agents that forget are agents that fail. The difference between a useful agent and a powerful one comes down to how it stores, retrieves, and applies information. This post explains how memory works inside modern AI agents.

Why Memory Defines Agent Intelligence

Every intelligent system needs memory. Humans rely on memory to carry context across days, weeks, and years. AI agents face the same requirement. Without a well-designed memory system, every conversation starts from zero. Every task loses continuity. Every action happens without knowledge of what came before.

Early language models had no persistent memory at all. Each conversation existed as a completely isolated session. The model retained nothing once the session ended or the conversation outgrew the context window. That limitation made agents frustrating for multi-step tasks requiring continuity.

Modern agent architectures changed this. Engineers now build dedicated memory layers into agent systems. These layers store information beyond the immediate context window. They retrieve relevant knowledge at runtime. They update knowledge as new information arrives. The result is an agent that learns, adapts, and improves with use.

Understanding memory systems in AI agents matters enormously for anyone building production-grade agents. The architecture you choose determines how well your agent handles long conversations, repeated user interactions, multi-step workflows, and domain-specific knowledge. Get memory right and the agent becomes genuinely useful. Get it wrong and the agent stays a novelty.

This post covers the full picture. Memory types, architectural patterns, retrieval strategies, orchestration approaches, and practical tooling all receive proper attention. Technical depth meets practical clarity throughout.

The Four Core Memory Types in AI Agents

Researchers and engineers classify memory systems in AI agents into four distinct types. Each serves a different function. Each operates at a different timescale. Understanding all four is essential before designing any agent memory architecture.

Sensory memory

Sensory memory holds raw inputs for a very short period. In the context of AI agents, this maps to the immediate input buffer. It captures what the agent receives right now: the current user message, the current tool output, the current retrieved document chunk. Sensory memory does not persist. It feeds directly into the working memory layer for immediate processing.

Agent designers rarely build explicit sensory memory components. The context window itself handles this function in most implementations. Understanding it as a conceptual layer helps when reasoning about information flow through the full memory stack.

Working memory

Working memory holds the agent’s active processing state. The context window of the underlying language model represents this layer. Everything currently in context — the conversation history, active task description, tool outputs, and retrieved knowledge — exists in working memory. The agent reasons across all of it during each inference step.

Context window size directly limits working memory capacity. A 128K token context window gives an agent more working memory than a 32K window. That difference matters significantly for complex tasks requiring many documents, long conversation histories, and multiple active tool outputs within a single reasoning pass.

Engineers design prompt structures carefully to manage working memory. What goes into context at each step, how it gets formatted, and what gets compressed or summarized before inclusion all affect reasoning quality. Working memory management is one of the most critical engineering decisions in agent design.

Episodic memory

Episodic memory stores records of past experiences. For an AI agent, this means records of past conversations, task executions, decisions made, and outcomes observed. Episodic memory persists beyond individual sessions. The agent can retrieve and reference past interactions when handling new ones.

A customer service agent with episodic memory remembers that a specific user reported the same issue last month. A coding agent remembers what libraries a developer prefers. A research agent remembers what papers a researcher already reviewed. That contextual awareness across time makes agents dramatically more useful in repeated-use scenarios.

Production agents commonly implement episodic memory with a vector database. Each past interaction gets embedded as a vector. Retrieval pulls semantically similar past episodes based on the current context. The agent receives relevant history without loading the entire interaction log into context.

Semantic memory

Semantic memory stores general world knowledge and domain facts. For a language model, a large portion of semantic memory lives within the model weights themselves. Everything the model learned during training represents compressed semantic memory. This knowledge cannot be updated without retraining or fine-tuning.

External semantic memory extends this. Knowledge bases, structured databases, documentation repositories, and fact stores represent external semantic memory that agents access at runtime. Retrieval-augmented generation connects agents to this external knowledge layer. The agent queries the knowledge store, retrieves relevant facts, and incorporates them into its reasoning without those facts needing to exist in the model weights.

Architectural Patterns for Memory Orchestration

Knowing the memory types is the foundation. Designing how they work together is the real engineering challenge. Several architectural patterns emerge consistently across well-built memory systems in AI agents.

The retrieval-augmented generation pattern

RAG remains the most widely deployed memory architecture for AI agents. The pattern separates knowledge storage from model inference. Documents, facts, and knowledge chunks are stored in an external vector database. At inference time, the agent’s query retrieves the most semantically relevant chunks. Those chunks are injected into the context window before the language model generates its response.
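The retrieve-then-inject flow can be sketched in a few lines. This is a minimal illustration, not a production design: the `KNOWLEDGE` list, the `retrieve` and `build_prompt` helpers, and the word-overlap ranking are all assumptions made for the example. A real system would rank by embedding similarity in a vector database.

```python
import re

# Hypothetical in-memory knowledge store; a real system would use a vector database.
KNOWLEDGE = [
    "Refunds are processed within 5 business days.",
    "Premium plans include priority support.",
    "Passwords must contain at least 12 characters.",
]

def tokens(text: str) -> set[str]:
    """Lowercase word set; a crude stand-in for an embedding."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(query: str, k: int = 2) -> list[str]:
    """Rank chunks by word overlap with the query and return the top k."""
    q = tokens(query)
    ranked = sorted(KNOWLEDGE, key=lambda c: len(q & tokens(c)), reverse=True)
    return ranked[:k]

def build_prompt(query: str, k: int = 2) -> str:
    """Inject the retrieved chunks into the prompt ahead of the question."""
    context = "\n".join(f"- {c}" for c in retrieve(query, k))
    return f"Use the context to answer.\n\nContext:\n{context}\n\nQuestion: {query}"

prompt = build_prompt("How long do refunds take?", k=1)
```

Only the refund policy chunk reaches the prompt here; the other two stay in storage and cost no context tokens.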

RAG excels for large, mostly static knowledge bases. Legal document stores, medical knowledge repositories, company documentation systems, and technical reference libraries all suit RAG architectures well. The knowledge base can grow to millions of documents without enlarging the prompt, because only the top-ranked chunks enter the context. Retrieval latency and index cost still grow with corpus size.

Chunking strategy critically affects RAG quality. How you split documents into retrievable chunks determines retrieval precision. Fixed-size chunks are simple but miss semantic boundaries. Semantic chunking splits at natural content boundaries. Hierarchical chunking preserves parent-child relationships between document sections and individual passages. Each strategy suits different knowledge structures.
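As a rough illustration of the first two strategies, the sketch below splits text by fixed character windows (with optional overlap) and by blank-line paragraph breaks. The function names are made up for this example, and splitting on blank lines is only a simple proxy for semantic chunking.

```python
def fixed_size_chunks(text: str, size: int, overlap: int = 0) -> list[str]:
    """Split text into windows of `size` characters; consecutive windows share `overlap` characters."""
    if size <= 0 or not 0 <= overlap < size:
        raise ValueError("need size > 0 and 0 <= overlap < size")
    step = size - overlap
    return [text[i:i + size] for i in range(0, len(text), step)]

def paragraph_chunks(text: str) -> list[str]:
    """Split at blank lines, a simple proxy for semantic boundaries."""
    return [p.strip() for p in text.split("\n\n") if p.strip()]

doc = "Intro paragraph.\n\nSecond paragraph with details."
fixed = fixed_size_chunks("abcdefghij", size=4, overlap=1)
paras = paragraph_chunks(doc)
```

The fixed-size splitter cuts wherever the window ends, which is how it can slice through the middle of a sentence. The paragraph splitter keeps each unit whole at the cost of uneven chunk sizes.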

The hierarchical memory pattern

Hierarchical memory organizes information across multiple abstraction levels. Raw interaction logs sit at the lowest level. Summaries of those logs sit at a higher level. Distilled insights and learned preferences sit at the highest level. The agent queries different levels depending on the granularity of information it needs.

This pattern mirrors how human memory compresses experience over time. You do not remember every word of every conversation. You remember key facts, patterns, and preferences derived from many interactions. Hierarchical memory systems in AI agents apply the same compression logic computationally.

Automatic summarization runs on episodic memory logs at defined intervals. A conversation log from yesterday compresses into a summary by the next session. A week of summaries compresses into key user preference notes. The compression chain keeps retrieval efficient while preserving the most useful historical knowledge.
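The compression chain might look like the following sketch. Everything here is hypothetical: the `HierarchicalMemory` class, the three-level layout, and especially `summarize`, which just truncates text where a real system would call a language model.

```python
from dataclasses import dataclass, field

def summarize(texts: list[str], max_words: int = 8) -> str:
    """Placeholder summarizer: keeps the first few words of each text.
    A real system would call a language model here."""
    return " | ".join(" ".join(t.split()[:max_words]) for t in texts)

@dataclass
class HierarchicalMemory:
    raw_logs: list[str] = field(default_factory=list)   # level 0: full turns
    summaries: list[str] = field(default_factory=list)  # level 1: session summaries
    insights: list[str] = field(default_factory=list)   # level 2: distilled notes

    def end_session(self) -> None:
        """Compress the raw log of the finished session into one summary."""
        if self.raw_logs:
            self.summaries.append(summarize(self.raw_logs))
            self.raw_logs.clear()

    def consolidate(self, every: int = 3) -> None:
        """Once `every` summaries have accumulated, distill them into one insight."""
        if len(self.summaries) >= every:
            self.insights.append(summarize(self.summaries[:every], max_words=4))
            del self.summaries[:every]

memory = HierarchicalMemory()
for day in range(3):
    memory.raw_logs.append(f"day {day}: user asked for Python examples with type hints")
    memory.end_session()
memory.consolidate(every=3)
```

After three sessions the raw logs and summaries are empty and a single insight remains, so retrieval at the top level touches one short note instead of three full logs.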

The working memory management pattern

Context windows are finite. Every token of conversation history, retrieved document, tool output, and system instruction consumes context budget. Without active management, context fills with low-value information and crowds out high-value content.

Working memory management patterns address this explicitly. Rolling window approaches drop the oldest conversation turns as new ones arrive. Summarization approaches compress older turns into shorter summaries that preserve key information with fewer tokens. Selective retention approaches score each context element by relevance and drop low-scoring elements when context pressure rises.
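A rolling window is the simplest of these to sketch. The example below assumes a whitespace word count as a stand-in for a real tokenizer, and the `trim_history` helper is invented for illustration.

```python
def count_tokens(text: str) -> int:
    """Rough token estimate by whitespace split; real tokenizers count differently."""
    return len(text.split())

def trim_history(turns: list[str], budget: int) -> list[str]:
    """Keep the most recent turns that fit within `budget` tokens, dropping the oldest first."""
    kept: list[str] = []
    used = 0
    for turn in reversed(turns):
        cost = count_tokens(turn)
        if used + cost > budget:
            break
        kept.append(turn)
        used += cost
    return list(reversed(kept))

history = [
    "user: my order number is 1234",
    "agent: thanks, looking it up",
    "user: it arrived damaged",
    "agent: sorry to hear that",
]
window = trim_history(history, budget=10)
```

With a budget of 10 only the last two turns survive, and the order number from the first turn is gone. That loss is the reason summarization and selective retention exist alongside plain rolling windows.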

Production-quality memory systems in AI agents combine these approaches. Different content types receive different treatment. Tool outputs from completed subtasks compress. Recent conversation turns stay in full. Retrieved knowledge chunks rotate out after use. The agent maintains a rich, relevant context throughout long task execution.

The memory write pattern

Agents need to write new memories, not just read them. The write pattern determines what information persists, when it persists, and how it gets structured for future retrieval. Poor write patterns create noisy, redundant memory stores. Well-designed write patterns create clean, useful, efficiently retrievable knowledge.

Trigger-based writing stores memories at defined events: task completion, user confirmation, explicit “remember this” instructions, or detected preference signals. Background summarization processes run asynchronously to compress and store distilled knowledge without blocking the agent’s primary reasoning loop. Both approaches appear in robust memory systems in AI agents.
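A trigger-based writer with basic deduplication could be sketched as follows. The trigger phrases, the `MemoryWriter` class, and the normalization rule are assumptions chosen for the example; production systems typically detect preferences with a model rather than a regular expression.

```python
import re

# Hypothetical trigger phrases that mark a message as worth persisting.
TRIGGER = re.compile(r"\b(remember that|i prefer|always use)\b", re.IGNORECASE)

class MemoryWriter:
    def __init__(self) -> None:
        self.store: list[str] = []
        self._seen: set[str] = set()

    @staticmethod
    def _normalize(text: str) -> str:
        """Lowercase and collapse whitespace so near-identical messages compare equal."""
        return " ".join(text.lower().split())

    def maybe_write(self, message: str) -> bool:
        """Persist the message only if it contains a trigger phrase and is not a duplicate."""
        if not TRIGGER.search(message):
            return False
        key = self._normalize(message)
        if key in self._seen:
            return False
        self._seen.add(key)
        self.store.append(message)
        return True

writer = MemoryWriter()
results = [
    writer.maybe_write("Remember that I deploy on Fridays"),
    writer.maybe_write("What's the weather like?"),
    writer.maybe_write("remember that  I deploy on fridays"),
]
```

The first message is stored, the second has no trigger, and the third is rejected as a duplicate of the first, which keeps the store free of the redundant entries that poor write patterns produce.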

Vector Databases and Embedding Strategies

Vector databases power most long-term memory implementations in modern agent systems. Understanding how they work clarifies how memory systems in AI agents retrieve relevant information efficiently at scale.

How vector storage works

Text chunks convert into numerical vectors using embedding models. These vectors capture semantic meaning in a high-dimensional space. Chunks with similar meaning produce vectors that sit close together geometrically. Retrieval finds the vectors nearest to the query vector. Those nearest vectors correspond to the most semantically relevant stored chunks.
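The geometry can be shown with cosine similarity over tiny hand-made vectors. The three-dimensional "embeddings" below are invented for illustration; real embedding models produce vectors with hundreds or thousands of dimensions, and other distance metrics are also in common use.

```python
import math

def cosine_similarity(a: list[float], b: list[float]) -> float:
    """Cosine of the angle between two vectors; 1.0 means the same direction."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0

# Hand-made vectors: the first two texts are about the same topic, the third is not.
stored = {
    "reset your password": [0.9, 0.1, 0.0],
    "change login credentials": [0.8, 0.2, 0.1],
    "quarterly sales report": [0.0, 0.1, 0.9],
}

def nearest(query_vec: list[float], k: int = 2) -> list[str]:
    """Exact (brute-force) nearest-neighbor search by cosine similarity."""
    ranked = sorted(stored, key=lambda t: cosine_similarity(query_vec, stored[t]), reverse=True)
    return ranked[:k]

top = nearest([1.0, 0.0, 0.0], k=2)
```

The two account-related texts rank first because their vectors point in nearly the same direction as the query, while the sales report is almost orthogonal to it.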

Embedding model choice affects retrieval quality significantly. General-purpose embedding models work well for broad knowledge bases. Domain-specific embedding models trained on specialized corpora produce better retrieval precision for technical domains. Fine-tuned embeddings on your specific data often outperform both on narrow retrieval tasks.

Approximate nearest neighbor search

Exact nearest neighbor search across millions of vectors is computationally expensive. Approximate nearest neighbor (ANN) algorithms trade perfect recall for dramatically faster retrieval. Index structures such as HNSW (a graph-based index) and IVF (an inverted-file index over clustered vectors) implement ANN search, and libraries such as FAISS provide implementations of both. Each index makes different trade-offs between speed, memory usage, and recall.

Production memory systems pair ANN retrieval with metadata filtering. A user ID filter restricts retrieval to memories belonging to the current user. A date range filter prioritizes recent memories. A source type filter separates episodic memories from semantic knowledge. Combined filtering plus ANN search makes retrieval both fast and precise.
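The filter-then-rank idea can be sketched without a database. In this illustration the `MemoryRecord` fields and the `search` helper are hypothetical, and the `score` field stands in for a similarity value that an ANN index would compute. Real vector databases differ in whether they filter before, during, or after the ANN search.

```python
from dataclasses import dataclass
from datetime import date
from typing import Optional

@dataclass
class MemoryRecord:
    text: str
    user_id: str
    kind: str       # "episodic" or "semantic"
    created: date
    score: float    # similarity to the current query, assumed precomputed

def search(
    memories: list[MemoryRecord],
    user_id: str,
    kind: Optional[str] = None,
    since: Optional[date] = None,
    k: int = 3,
) -> list[MemoryRecord]:
    """Apply metadata filters first, then rank the survivors by similarity score."""
    candidates = [
        m for m in memories
        if m.user_id == user_id
        and (kind is None or m.kind == kind)
        and (since is None or m.created >= since)
    ]
    return sorted(candidates, key=lambda m: m.score, reverse=True)[:k]

memories = [
    MemoryRecord("alice reported a login bug", "alice", "episodic", date(2024, 5, 1), 0.91),
    MemoryRecord("bob reported a login bug", "bob", "episodic", date(2024, 5, 2), 0.95),
    MemoryRecord("alice prefers dark mode", "alice", "episodic", date(2023, 1, 10), 0.40),
]
hits = search(memories, "alice", kind="episodic", since=date(2024, 1, 1))
```

Bob's record has the highest similarity score but never reaches the ranking step, which is the point of the user ID filter: it is a correctness and privacy boundary, not just a relevance tweak.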

Popular vector database options

Pinecone offers managed vector storage with strong scaling properties and simple API integration. Weaviate provides an open-source option with hybrid search combining vector and keyword retrieval. Chroma targets local development and smaller deployments with a lightweight footprint. Qdrant offers strong filtering capabilities with competitive performance at scale. Each option suits different deployment contexts for agent memory.

Pinecone: managed, scalable

Weaviate: hybrid search

Chroma: local-first

Qdrant: rich filtering

Orchestrating Memory Across Agent Workflows

Multi-step agent workflows create unique orchestration challenges. A single agent task might span dozens of reasoning steps, multiple tool calls, and retrieved documents from several knowledge sources. Orchestrating memory systems in AI agents across these complex workflows requires deliberate design.

Memory in single-agent loops

ReAct-style agent loops interleave reasoning and action steps. Each step produces new information: observations from tool calls, intermediate reasoning, and partial results. This information must stay accessible across the full loop without overloading context. Memory systems handle the accumulation.

At each loop iteration, the orchestration layer decides what to inject into the next context. Recent tool outputs get high priority. Older intermediate results compress or summarize. Retrieved knowledge chunks relevant to the current step load fresh. The working context stays focused on what the agent needs right now.

Memory in multi-agent systems

Multi-agent architectures add another orchestration dimension. Multiple specialized agents collaborate on complex tasks. Each agent may maintain its own local memory. Shared memory stores allow agents to pass information to each other without repeated retrieval or redundant processing.

Shared memory in multi-agent systems requires careful access control. Agents should read and write only the memory partitions relevant to their function. A researcher agent and a writer agent working on the same task share a document summary store but maintain separate conversation memory partitions. Well-scoped memory access prevents interference between agents.

Blackboard architectures implement shared memory as a central store that all agents read from and write to. Agents publish results to the blackboard. Other agents retrieve published results when they become relevant to downstream tasks. This pattern works well for complex analytical workflows with clear handoff points between specialized agents.
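A blackboard with scoped access can be sketched as a small class. The `Blackboard` name, the partition labels, and the permission layout are all assumptions for the example; a real deployment would back this with a shared database and proper authentication.

```python
class Blackboard:
    """Central store with per-agent read and write permissions on named partitions."""

    def __init__(self, permissions: dict[str, dict[str, set[str]]]) -> None:
        # permissions[agent] = {"read": {partitions}, "write": {partitions}}
        self._permissions = permissions
        self._data: dict[str, list[str]] = {}

    def publish(self, agent: str, partition: str, item: str) -> None:
        """Append an item to a partition if the agent has write access to it."""
        if partition not in self._permissions.get(agent, {}).get("write", set()):
            raise PermissionError(f"{agent} may not write to {partition}")
        self._data.setdefault(partition, []).append(item)

    def read(self, agent: str, partition: str) -> list[str]:
        """Return a copy of a partition's items if the agent has read access to it."""
        if partition not in self._permissions.get(agent, {}).get("read", set()):
            raise PermissionError(f"{agent} may not read {partition}")
        return list(self._data.get(partition, []))

board = Blackboard({
    "researcher": {"read": {"summaries"}, "write": {"summaries"}},
    "writer": {"read": {"summaries"}, "write": {"drafts"}},
})
board.publish("researcher", "summaries", "Paper A: retrieval improves recall")
shared = board.read("writer", "summaries")
```

The writer agent can read what the researcher published but cannot overwrite it, since an attempt to publish to `summaries` as `writer` raises `PermissionError`.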

Memory in long-horizon planning

Tasks spanning hours, days, or weeks need persistent memory across execution sessions. An agent planning a research project cannot hold the full plan in context across multiple working sessions. External memory stores the plan state, completed steps, pending tasks, and intermediate findings. Each session retrieves the current plan state before continuing execution.

Checkpointing strategies ensure long-horizon tasks survive interruptions. The agent periodically writes its full state to external memory. Restoration from a checkpoint brings the agent back to exactly where it left off. Without checkpointing, a failure mid-task requires starting over from the beginning. Robust memory systems in AI agents treat checkpointing as a core reliability feature, not an optional addition.
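A minimal checkpointing sketch, assuming the agent state fits in a JSON-serializable dictionary and lives on the local filesystem. The state keys (`completed`, `pending`, `findings`) and the helper names are illustrative. Writing to a temporary file and renaming it means a crash during the write does not corrupt the previous checkpoint.

```python
import json
import os
import tempfile

def save_checkpoint(path: str, state: dict) -> None:
    """Write state to a temp file, then atomically rename it over the old checkpoint."""
    directory = os.path.dirname(os.path.abspath(path))
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    with os.fdopen(fd, "w", encoding="utf-8") as f:
        json.dump(state, f)
    os.replace(tmp_path, path)

def load_checkpoint(path: str) -> dict:
    """Return the saved state, or a fresh state if no checkpoint exists yet."""
    if not os.path.exists(path):
        return {"completed": [], "pending": [], "findings": {}}
    with open(path, encoding="utf-8") as f:
        return json.load(f)

checkpoint_path = os.path.join(tempfile.mkdtemp(), "plan.json")
state = load_checkpoint(checkpoint_path)
state["pending"] = ["collect papers", "summarize", "draft report"]
state["completed"].append(state["pending"].pop(0))
save_checkpoint(checkpoint_path, state)

restored = load_checkpoint(checkpoint_path)  # simulates the start of a new session
```

The restored state shows one completed step and two pending ones, so a new session can continue from the second step instead of starting over.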

“The agent that remembers where it left off is the agent that actually finishes the job.”

Memory Freshness, Forgetting, and Relevance Decay

Not all memories age equally. Recent information often matters more than old information. Outdated facts can actively mislead an agent. Good memory systems in AI agents handle staleness and relevance decay deliberately.

Timestamp metadata on every stored memory enables freshness-aware retrieval. Retrieval scoring combines semantic similarity with recency weighting. With a strong enough recency weight, a highly relevant but six-month-old memory can score lower than a moderately relevant but recent one. The agent favors fresher information without completely discarding older knowledge.
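One common way to combine the two signals is to multiply similarity by an exponential decay. The sketch below assumes a 30-day half-life and a multiplicative combination; both are illustrative choices, and a weighted sum or a gentler decay would be equally valid depending on the domain.

```python
def freshness_score(similarity: float, age_days: float, half_life_days: float = 30.0) -> float:
    """Multiply similarity by a decay factor that halves every `half_life_days`."""
    return similarity * 0.5 ** (age_days / half_life_days)

old_but_relevant = freshness_score(similarity=0.95, age_days=180)
recent_but_weaker = freshness_score(similarity=0.60, age_days=2)
```

At a 30-day half-life, 180 days is six halvings, so the older memory keeps only 1/64 of its similarity and ranks below the recent one. A longer half-life would narrow that gap, which is why the parameter deserves tuning per use case.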

Explicit expiration policies remove memories that should not persist indefinitely. User session memories expire after a defined period of inactivity. Outdated product information expires when new versions release. Task-specific scratch memory expires when the task completes. Automatic expiration keeps the memory store clean without manual curation.
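Expiration policies of this kind reduce to a time-to-live per memory type. In the sketch below the `TTL` table, its durations, and the `StoredMemory` fields are assumptions picked for illustration; note that an unknown kind is kept indefinitely, which may or may not be the default you want.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta

# Hypothetical time-to-live per memory kind; None means keep indefinitely.
TTL = {
    "session": timedelta(days=30),
    "scratch": timedelta(hours=1),
    "preference": None,
}

@dataclass
class StoredMemory:
    text: str
    kind: str
    created: datetime

def purge_expired(memories: list[StoredMemory], now: datetime) -> list[StoredMemory]:
    """Return only the memories whose TTL has not elapsed at `now`."""
    kept = []
    for m in memories:
        ttl = TTL.get(m.kind)  # kinds missing from the table are treated as non-expiring
        if ttl is None or now - m.created <= ttl:
            kept.append(m)
    return kept

now = datetime(2024, 6, 1, 12, 0)
memories = [
    StoredMemory("prefers concise answers", "preference", datetime(2023, 1, 1)),
    StoredMemory("intermediate search results", "scratch", now - timedelta(hours=3)),
    StoredMemory("asked about billing", "session", now - timedelta(days=5)),
]
remaining = purge_expired(memories, now)
```

The three-hour-old scratch entry is dropped, while the long-lived preference and the five-day-old session memory remain.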

Forgetting is not failure. Strategic forgetting keeps memory systems efficient and accurate. An agent that never forgets accumulates noise alongside signal. Agents that forget intelligently maintain high retrieval precision as the memory store grows. Designing forgetting policies is as important as designing storage policies.

Tools and Frameworks for Building Agent Memory

Several mature frameworks accelerate memory implementation for agent builders. Knowing the available options saves significant engineering time when designing memory systems in AI agents from scratch.

LangChain provides extensive memory abstractions. ConversationBufferMemory holds the raw conversation history. ConversationSummaryMemory automatically summarizes older turns. VectorStoreRetrieverMemory retrieves relevant past exchanges from a vector store. These pre-built abstractions cover common memory patterns with minimal configuration overhead. Newer LangChain releases steer users toward LangGraph-based persistence, so check the current documentation before adopting these classes.

LlamaIndex focuses specifically on knowledge retrieval architectures. Its indexing and query engine capabilities build sophisticated RAG pipelines. Multi-step retrieval, hierarchical indexing, and cross-document synthesis all receive strong framework support. LlamaIndex pairs naturally with any vector database for production RAG deployments.

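MemGPT introduced the concept of virtual context management. It borrows the idea of memory paging from operating systems to work with more information than fits in the model’s native window. The model itself issues function calls that move information between the active context and external storage, and older content is evicted or summarized as the window fills. This approach lets an agent work across far more material than a single context window holds, although each inference step still sees only what is currently paged in.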

Mem0 provides a purpose-built memory layer for AI applications. It handles memory storage, retrieval, and management with a simple API. Support for user-specific memory partitioning, automatic preference extraction, and memory deduplication makes it a practical choice for production memory systems in AI agents that need to scale across many users.

Frequently Asked Questions

What are the main types of memory in AI agents?

AI agents use four main memory types. Sensory memory holds immediate inputs briefly. Working memory spans the active context window during a reasoning step. Episodic memory stores records of past interactions and experiences persistently. Semantic memory holds general world knowledge and domain facts, both within model weights and in external knowledge stores. Well-designed agents orchestrate all four layers together.

Why does memory matter so much for AI agents?

Memory enables continuity. Without it, every agent interaction starts from zero. The agent cannot recall past user preferences, completed task steps, or relevant domain knowledge from previous sessions. Memory transforms a stateless question-answering system into an agent that learns, adapts, and improves with repeated use. Long-horizon tasks are simply impossible without persistent memory.

What is the difference between RAG and fine-tuning for agent memory?

RAG retrieves external knowledge at runtime without changing model weights. It suits dynamic, updatable knowledge that changes frequently. Fine-tuning bakes knowledge into model weights during training. It suits stable domain knowledge that rarely changes. Production agent systems often combine both. The model gets fine-tuned on core domain concepts while RAG handles current, specific, and user-specific knowledge.

How do multi-agent systems share memory?

Multi-agent systems implement shared memory through central stores accessible to all agents in the system. Blackboard architectures let agents publish results that other agents retrieve. Shared vector databases allow all agents to query the same knowledge base. Access controls scope each agent’s read and write permissions to prevent interference. Careful partition design keeps agent-specific memory separate from shared task memory.

What is MemGPT and how does it handle memory?

MemGPT implements virtual context management inspired by operating system memory paging. Information moves between main context and external storage through function calls issued by the model itself. When context pressure rises, older content is evicted or summarized into storage. When needed again, the model retrieves it back into context. This architecture lets an agent draw on far more material than the model’s native context window can hold at once.

How should agents handle memory staleness?

Timestamp metadata on stored memories enables freshness-aware retrieval scoring. Retrieval algorithms combine semantic similarity with recency weighting. Explicit expiration policies automatically remove memories past their useful lifespan. Outdated product facts, expired session data, and completed task scratchpads all benefit from automatic expiration. Strategic forgetting maintains retrieval precision as memory stores grow over time.

Which vector database should I use for agent memory?

The best choice depends on your deployment context. Pinecone suits teams wanting a fully managed solution with minimal infrastructure overhead. Weaviate suits teams needing hybrid keyword and vector retrieval. Chroma suits local development and smaller deployments. Qdrant suits teams needing rich metadata filtering with strong performance. Evaluate each against your scale, latency, and operational complexity requirements before committing.

Can an AI agent have too much memory?

Yes. Unlimited memory accumulation creates noise that degrades retrieval precision. An agent that stores everything retrieves everything, including irrelevant information that crowds out useful content. Intentional forgetting policies, expiration rules, and relevance-weighted retrieval keep memory stores clean and retrievals accurate. Memory quality matters more than memory volume for agent performance.

Conclusion: Memory is the architecture that makes agents real

Memory systems in AI agents sit at the core of every serious agent design. The four memory types — sensory, working, episodic, and semantic — each play a distinct role. Understanding all four before designing an agent architecture prevents costly redesigns later.

Architectural patterns like RAG, hierarchical memory, working memory management, and strategic memory writing give engineers concrete implementation blueprints. These patterns solve real problems that every production agent system eventually encounters. Knowing them accelerates development and improves reliability.

Vector databases, embedding models, and ANN retrieval form the technical foundation of most long-term memory implementations. Choosing the right combination for your scale, latency requirements, and knowledge structure determines how well your agent retrieves the right information at the right moment.

Orchestration across single-agent loops, multi-agent collaborations, and long-horizon planning tasks each demand different memory management strategies. A single-agent customer support bot needs different memory orchestration than a multi-agent research pipeline running over several days. Design for your specific use case from the start.

Freshness, forgetting, and relevance decay deserve as much design attention as storage and retrieval. Memory systems in AI agents that grow without cleanup degrade over time. Smart expiration policies and recency-aware retrieval scoring maintain quality as memory stores scale.

Frameworks like LangChain, LlamaIndex, MemGPT, and Mem0 reduce implementation effort significantly. Use them. Build on proven abstractions. Reserve custom engineering for the specific requirements your framework does not cover.

An agent without memory reacts. An agent with a well-designed memory system reasons, learns, and improves. That difference separates tools from genuine intelligence.