Introduction

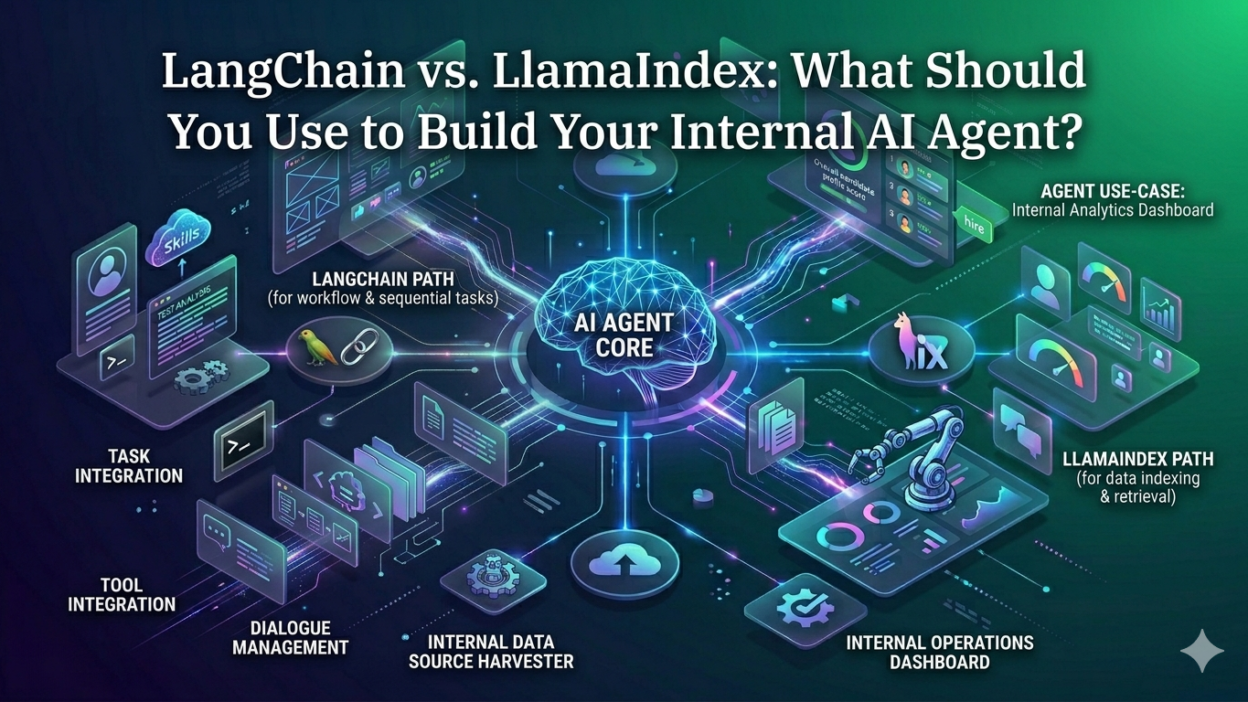

Most engineering teams building internal AI tools eventually hit the same fork in the road. You need a framework to connect your language model to company data, tools, and workflows. Two names dominate the conversation. LangChain and LlamaIndex both promise to solve this problem. Both have large communities. Both ship updates constantly. Both work with the major LLM providers.

Choosing the wrong one can cost weeks of rework. Choosing the right one can save months of development time. The decision matters more than most teams realize at the start.

LangChain vs LlamaIndex for AI agents is not a question with one universal answer. The right choice depends on three things:

- what your agent needs to do
- how your data is structured
- how much orchestration complexity your use case requires

This post lays out the full picture: what each framework does best, where each falls short, and which one fits your specific internal agent project.

What Is LangChain?

The Framework Built for Orchestration

LangChain launched in late 2022 and grew rapidly into one of the most widely used AI development frameworks in the world. Its core design philosophy centers on composability. LangChain gives developers a modular system of components — chains, agents, tools, memory, and retrievers — that connect together to build complex AI workflows.

The framework treats the LLM as one component in a larger system rather than as the system itself. An LLM in a LangChain application calls tools, queries databases, reads documents, manages conversation memory, and routes decisions based on outputs. LangChain provides the connective tissue that makes all of this happen in coordinated sequence.

Debates about LangChain vs LlamaIndex for AI agents often start here. LangChain was built with agent orchestration in mind from the beginning. Its agent abstractions, tool integrations, and multi-step reasoning patterns reflect years of community iteration on exactly this problem. Suppose your internal agent needs to take actions, call APIs, coordinate multiple steps, and decide what to do next based on intermediate results. LangChain was designed for that profile.

Key Components of LangChain

LangChain organizes its capabilities into several core abstractions. Chains are sequences of LLM calls and tool invocations that execute in a defined order. Agents are more dynamic structures where the LLM decides at runtime which tools to call and in what sequence. Tools are integrations with external systems — databases, APIs, search engines, calculators, code executors — that the agent can invoke. Memory systems persist conversation context and intermediate results across steps.

LangChain Expression Language (LCEL) provides a declarative interface for composing these components into coherent pipelines. Developers define what should happen and in what order using a readable syntax that makes complex orchestration logic understandable and maintainable. The component library includes hundreds of pre-built integrations that reduce the custom code required to connect LangChain to your existing infrastructure.
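
The sketch below makes declarative composition concrete in plain Python. LCEL composes components with the `|` operator. The hypothetical `Step` class below only imitates that style through `__or__`; it is not LangChain's API. The prompt, model, and parser steps are stand-ins, so the example runs without any LLM provider.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Step:
    """Hypothetical pipeline step wrapping a single-argument function."""
    fn: Callable[[Any], Any]

    def __or__(self, other: "Step") -> "Step":
        # Compose left to right: the output of self becomes the input of other.
        return Step(lambda value: other.fn(self.fn(value)))

    def invoke(self, value: Any) -> Any:
        return self.fn(value)


# Stand-ins for a prompt template, an LLM call, and an output parser.
prompt = Step(lambda question: f"Answer briefly: {question}")
fake_llm = Step(lambda text: f"  MODEL OUTPUT for [{text}]  ")
parser = Step(lambda text: text.strip())

chain = prompt | fake_llm | parser
print(chain.invoke("What is our PTO policy?"))
```

The pipeline reads in execution order. That readability is the main benefit the declarative style aims for.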

LangSmith and the Development Ecosystem

LangChain has built a broader ecosystem around the core framework. LangSmith provides observability, debugging, and evaluation tools that make development and production monitoring practical. LangGraph extends LangChain’s agent capabilities with graph-based workflow orchestration that handles complex multi-agent coordination and stateful long-running processes.

This ecosystem depth matters for internal enterprise agent development. Debugging AI agents is notoriously difficult without proper visibility into what the agent decided, which tools it called, and what intermediate outputs it produced. LangSmith addresses this directly and integrates natively with LangChain workflows.
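
LangSmith records this kind of information automatically for LangChain runs. The sketch below is not LangSmith. It is a minimal, hypothetical illustration in plain Python of what a trace captures:

- which tool ran
- with what input
- what it returned or raised
- how long it took

The `lookup_ticket` tool and the in-memory `TRACE` list are invented for the example.

```python
import functools
import time
from typing import Any, Callable

TRACE: list[dict[str, Any]] = []  # in-memory trace log; a real system would export it


def traced(tool: Callable[..., Any]) -> Callable[..., Any]:
    """Record each call's name, arguments, output or error, and latency."""
    @functools.wraps(tool)
    def wrapper(*args: Any, **kwargs: Any) -> Any:
        record: dict[str, Any] = {"tool": tool.__name__, "args": args, "kwargs": kwargs}
        start = time.perf_counter()
        try:
            result = tool(*args, **kwargs)
            record["output"] = result
            return result
        except Exception as exc:
            record["error"] = repr(exc)
            raise
        finally:
            # Runs on success and on failure, so every call is logged.
            record["latency_ms"] = (time.perf_counter() - start) * 1000
            TRACE.append(record)
    return wrapper


@traced
def lookup_ticket(ticket_id: str) -> dict[str, str]:
    # Hypothetical tool; a real one would call your ticketing system's API.
    return {"id": ticket_id, "status": "open"}


lookup_ticket("IT-1042")
for entry in TRACE:
    print(entry["tool"], entry["args"], entry["output"], f"{entry['latency_ms']:.3f} ms")
```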

What Is LlamaIndex?

The Framework Built for Data

LlamaIndex launched around the same time as LangChain but with a distinctly different design focus. Where LangChain emphasizes orchestration, LlamaIndex emphasizes data. The framework was built specifically to solve the problem of connecting LLMs to complex, heterogeneous enterprise data sources and making that data genuinely accessible to AI systems.

LlamaIndex provides sophisticated data ingestion, indexing, querying, and retrieval capabilities that go significantly deeper than what most general-purpose frameworks offer. It handles the hard parts of RAG (retrieval-augmented generation) with precision that matters when your internal agent needs to reason accurately over large volumes of proprietary company data.

Comparisons of LangChain vs LlamaIndex for AI agents frequently note that LlamaIndex handles data complexity more elegantly. Its indexing abstractions (vector stores, knowledge graphs, document hierarchies, and hybrid indexes) can deliver retrieval precision that flat vector search alone often struggles to match. For agents whose primary job is accurate information retrieval from company knowledge bases, LlamaIndex starts with a meaningful architectural advantage.

Core LlamaIndex Concepts

LlamaIndex organizes its functionality around data structures and retrieval patterns. Documents are the raw data inputs — PDFs, databases, APIs, spreadsheets, code repositories, and web content. Nodes are processed chunks of document content with metadata attached. Indexes are structured representations of nodes optimized for different types of queries.

Query engines translate natural language questions into structured retrieval operations against these indexes. Retrievers handle the matching logic that identifies which nodes are most relevant to a given query. Response synthesizers take retrieved nodes and compose coherent answers using LLM generation. The entire pipeline is optimized for accuracy and relevance of information retrieval.
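
The flow from documents to nodes to an index to a synthesized answer can be sketched in plain Python. This is a toy illustration of the concepts, not LlamaIndex's API, and every class and function name is hypothetical. It makes three simplifications:

- Chunking uses a fixed word count.
- Retrieval scores nodes by keyword overlap instead of embeddings.
- The "synthesizer" joins retrieved text with citations, where a real one would prompt an LLM.

```python
import re
from dataclasses import dataclass, field


@dataclass
class Document:
    text: str
    metadata: dict = field(default_factory=dict)


@dataclass
class Node:
    text: str
    metadata: dict


def tokenize(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def split_into_nodes(doc: Document, chunk_words: int = 8) -> list[Node]:
    """Chunk a document into fixed-size word windows (tiny here for the demo)."""
    words = doc.text.split()
    return [
        Node(" ".join(words[i:i + chunk_words]), dict(doc.metadata, chunk=i // chunk_words))
        for i in range(0, len(words), chunk_words)
    ]


class KeywordIndex:
    """Toy index storing each node with its precomputed token set."""
    def __init__(self, nodes: list[Node]):
        self.entries = [(node, tokenize(node.text)) for node in nodes]

    def retrieve(self, query: str, top_k: int = 2) -> list[Node]:
        q = tokenize(query)
        scored = [(len(q & tokens), node) for node, tokens in self.entries]
        scored = [item for item in scored if item[0] > 0]
        scored.sort(key=lambda item: item[0], reverse=True)
        return [node for _, node in scored[:top_k]]


def synthesize(query: str, nodes: list[Node]) -> str:
    # A real synthesizer would send the query plus node texts to an LLM.
    cited = [f"{n.text} [{n.metadata['source']}#{n.metadata['chunk']}]" for n in nodes]
    return "\n".join(cited) if cited else "No relevant context found."


docs = [
    Document("Employees accrue 20 days of paid time off per year.", {"source": "hr_policy.pdf"}),
    Document("Expense reports over 500 dollars need director approval.", {"source": "finance_wiki"}),
]
index = KeywordIndex([n for d in docs for n in split_into_nodes(d)])
question = "How many days of paid time off do employees get?"
print(synthesize(question, index.retrieve(question)))
```

Metadata travels from document to node, which is what makes citations and filtering possible later in the pipeline.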

LlamaIndex also supports agent patterns through its ReAct agent implementation and tool abstractions. Agents built on LlamaIndex can query multiple indexes, call external tools, and synthesize information across sources. Even so, high-quality data retrieval, LlamaIndex’s core strength, remains the main capability such an agent brings.
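
ReAct interleaves reasoning and tool use. The model emits a thought and an action, the framework runs the tool, and the observation is fed back until the model produces a final answer. The plain-Python sketch below shows that loop with a scripted stand-in for the LLM, so it runs without an API key. It is not LlamaIndex's ReAct agent implementation. The text format, the `search_policies` tool, and the scripted responses are all assumptions for illustration.

```python
import re
from typing import Callable


def search_policies(query: str) -> str:
    # Hypothetical tool; a real agent would query an index here.
    return "Remote work requires manager approval." if "remote" in query.lower() else "No match."


TOOLS: dict[str, Callable[[str], str]] = {"search_policies": search_policies}


def scripted_llm(transcript: str) -> str:
    # Scripted stand-in for an LLM: acts once, then answers after seeing an observation.
    if "Observation:" not in transcript:
        return "Thought: I should check the policy docs.\nAction: search_policies[remote work]"
    return "Thought: I have what I need.\nFinal Answer: Remote work requires manager approval."


ACTION_RE = re.compile(r"Action: (\w+)\[(.*)\]")


def react_loop(question: str, llm: Callable[[str], str], max_steps: int = 5) -> str:
    transcript = f"Question: {question}\n"
    for _ in range(max_steps):
        step = llm(transcript)
        transcript += step + "\n"
        if "Final Answer:" in step:
            return step.split("Final Answer:", 1)[1].strip()
        match = ACTION_RE.search(step)
        if match is None:
            # Feed the parse failure back so the model can correct itself.
            transcript += "Observation: could not parse an action.\n"
            continue
        name, arg = match.groups()
        tool = TOOLS.get(name)
        observation = tool(arg) if tool else f"unknown tool {name}"
        transcript += f"Observation: {observation}\n"
    return "Stopped: step limit reached."


print(react_loop("Can I work remotely?", scripted_llm))
```

The `max_steps` cap matters in practice, because a model that never emits a final answer would otherwise loop indefinitely.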

The LlamaIndex Data Ecosystem

LlamaIndex has developed a rich connector library called LlamaHub that provides pre-built data loaders for hundreds of data sources. Notion, Confluence, Google Drive, Slack, GitHub, Salesforce, databases, and dozens of other enterprise systems connect through standardized loaders that handle the complexity of each platform’s data format and API.

This connector breadth matters enormously for internal enterprise AI agents. Enterprise data does not live in one place. It lives across dozens of tools and platforms accumulated over years of organizational growth. LlamaIndex’s investment in data connectivity reflects a genuine understanding of enterprise data reality.

Head-to-Head: LangChain vs LlamaIndex for AI Agents

Agent Orchestration Capability

LangChain holds a clear advantage in agent orchestration complexity. Its agent abstractions, tool-calling patterns, and LangGraph extension provide mature, battle-tested infrastructure for building agents that take sequences of actions, handle errors gracefully, manage state across long-running processes, and coordinate multiple specialized sub-agents.

Consider an internal agent that needs to process a user request, pull data from multiple sources, call an external API, generate a report, and send a notification. That agent is solving an orchestration problem. In orchestration-heavy scenarios, the comparison consistently favors LangChain. The framework’s design makes complex multi-step workflows easier to express, debug, and maintain.

LlamaIndex has improved its agent capabilities significantly over its development history. Its tool use and ReAct agent patterns work well. They work best when the agent’s primary task is sophisticated data retrieval with some additional tool calling around it. Pure orchestration complexity is not where LlamaIndex’s architecture shines brightest.

Retrieval Augmented Generation Quality

LlamaIndex holds a clear advantage in RAG quality. Its indexing flexibility, retrieval precision, and response synthesis quality reflect deep investment in solving exactly this problem. Sub-document retrieval, recursive summarization, hybrid search combining semantic and keyword matching, and knowledge graph integration all emerge from LlamaIndex’s data-first design.
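
One common way to combine semantic and keyword results is reciprocal rank fusion (RRF). RRF scores each document by summing 1/(k + rank) across every ranked list it appears in. The sketch below makes these assumptions:

- You already have two ranked lists of document IDs, one from a vector search and one from a keyword search such as BM25.
- The IDs are made up.
- k = 60 is the value commonly used with RRF.

It shows the general technique, not how any particular framework implements hybrid search.

```python
from collections import defaultdict


def reciprocal_rank_fusion(ranked_lists: list[list[str]], k: int = 60) -> list[tuple[str, float]]:
    """Fuse ranked lists of doc IDs; rank positions start at 1."""
    scores: dict[str, float] = defaultdict(float)
    for ranking in ranked_lists:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    # Highest fused score first; ties broken by doc ID for determinism.
    return sorted(scores.items(), key=lambda item: (-item[1], item[0]))


# Hypothetical results for a query such as "parental leave policy".
vector_hits = ["hr-leave-2024", "benefits-faq", "onboarding-guide"]
keyword_hits = ["parental-leave-form", "hr-leave-2024", "benefits-faq"]

for doc_id, score in reciprocal_rank_fusion([vector_hits, keyword_hits]):
    print(f"{doc_id}: {score:.4f}")
```

Documents found by both retrievers tend to outrank documents found by only one. In this example, `benefits-faq` finishes above `parental-leave-form` even though the keyword search ranked the form first.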

An internal agent that answers employee questions from HR policy documents, product specification sheets, internal wikis, and past project documentation needs high-quality retrieval. Inaccurate retrieval produces inaccurate answers, and inaccurate answers in an internal enterprise context erode trust rapidly. In retrieval-heavy scenarios, the comparison consistently favors LlamaIndex.

LangChain provides retriever and vector store integrations that work adequately for straightforward RAG use cases. When retrieval complexity grows — multi-document hierarchies, mixed modalities, precision requirements for regulated content — LlamaIndex’s retrieval architecture provides capabilities that LangChain’s retriever abstractions do not match.

Integration Breadth and Ecosystem

LangChain has a broader ecosystem of integrations with LLM providers, vector databases, external tools, and enterprise systems. Its tool library covers more use cases out of the box. Its community has contributed more diverse integration patterns across more domains.

LlamaIndex has comparable or superior integration depth specifically in the enterprise data source category. Its LlamaHub connector library covers data sources that matter most for internal knowledge base applications. For the specific problem of connecting an enterprise agent to enterprise data, LlamaIndex’s integration library is deep where it matters.

On ecosystem breadth, the two frameworks are essentially tied across the full integration landscape: LangChain wins on tool and action integrations, and LlamaIndex wins on data source integrations.

Developer Experience and Learning Curve

LangChain’s API surface is large. The framework offers many ways to accomplish similar goals. This flexibility enables powerful custom configurations. It also creates cognitive overhead for new users trying to determine which abstraction to use for a given problem. Debugging LangChain agents without LangSmith can be frustrating because the call stack through multiple abstraction layers is difficult to follow.

LlamaIndex’s API is more focused. The data-centric design philosophy creates a more coherent mental model for developers building retrieval applications. The path from data ingestion to index creation to query engine to agent is well-defined and consistently organized. New developers working on retrieval-focused agents often find LlamaIndex easier to reason about initially.

Which framework offers the better developer experience depends heavily on the agent type. Orchestration-focused development rewards experienced developers who can exploit LangChain’s composability. Retrieval-focused development suits teams who appreciate LlamaIndex’s focused API and clear data pipeline structure.

Production Readiness and Observability

Both frameworks have matured significantly in production readiness. LangSmith gives LangChain a meaningful advantage in observability tooling. Tracing agent decisions, monitoring tool call latency, evaluating retrieval quality, and debugging unexpected behaviors are all significantly easier with LangSmith’s native integration.

LlamaIndex integrates with third-party observability platforms, including Arize, Weights & Biases, and OpenTelemetry-compatible tools. The observability story is less native but entirely functional for production deployments. Teams with existing observability infrastructure find LlamaIndex’s open integration approach fits well with established monitoring practices.

Use Cases Where LangChain Wins Clearly

Multi-Step Internal Process Automation

An internal agent that handles expense report processing needs to extract data from uploaded receipts, validate against policy rules, query the approval workflow system, route to the appropriate approver, and update the finance system on completion. This is a multi-step process automation problem with clear orchestration requirements.

For process automation scenarios, the comparison points clearly to LangChain. Its chain and agent abstractions map naturally to process flows. LangGraph handles the stateful, conditional routing that complex processes require. The tool integration library covers the enterprise systems involved in most internal process workflows.
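
Stateful, conditional routing can be illustrated with a tiny state machine. Each node is a function that returns an updated state, and a routing function picks the next node from that state. This is a plain-Python sketch of the concept for the expense-report example above, not LangGraph's API. The policy limit, node names, and approver roles are hypothetical.

```python
from typing import Callable, Optional

State = dict
POLICY_LIMIT = 500.0  # hypothetical auto-approval threshold


def extract(state: State) -> State:
    # Stand-in for receipt parsing (OCR or an LLM extraction step).
    return {**state, "amount": float(state["receipt"]["total"])}


def validate(state: State) -> State:
    return {**state, "within_policy": state["amount"] <= POLICY_LIMIT}


def auto_approve(state: State) -> State:
    return {**state, "approver": "auto", "status": "approved"}


def route_to_manager(state: State) -> State:
    # Run pauses here until a human approves; nothing is synced yet.
    return {**state, "approver": "manager", "status": "pending_approval"}


def update_finance(state: State) -> State:
    # Stand-in for a call to the finance system.
    return {**state, "finance_synced": True}


NODES: dict[str, Callable[[State], State]] = {
    "extract": extract, "validate": validate, "auto_approve": auto_approve,
    "route_to_manager": route_to_manager, "update_finance": update_finance,
}


def next_node(current: str, state: State) -> Optional[str]:
    """Graph edges; None ends the run."""
    if current == "extract":
        return "validate"
    if current == "validate":
        return "auto_approve" if state["within_policy"] else "route_to_manager"
    if current == "auto_approve":
        return "update_finance"
    return None


def run(state: State, start: str = "extract") -> State:
    node: Optional[str] = start
    while node is not None:
        state = NODES[node](state)
        state.setdefault("path", []).append(node)  # record the route taken
        node = next_node(node, state)
    return state


small = run({"receipt": {"total": "120.00"}})
large = run({"receipt": {"total": "940.50"}})
print(small["status"], small["path"])
print(large["status"], large["path"])
```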

Customer-Facing and Employee-Facing Chatbots with Complex Actions

Internal chatbots that do more than answer questions — that actually take actions, update systems, trigger workflows, and coordinate across multiple backend services — need orchestration sophistication. An IT helpdesk agent that creates tickets, escalates to specialists, checks knowledge bases, and updates status in multiple systems requires the action-oriented architecture LangChain provides.

Research and Analysis Workflows

An internal competitive intelligence agent that searches web sources, pulls internal sales data, queries market research databases, synthesizes findings, and produces structured reports involves both tool calling and data retrieval. LangChain’s flexible orchestration handles the coordination between these diverse capabilities effectively.

Use Cases Where LlamaIndex Wins Clearly

Internal Knowledge Base Agents

The single most common internal enterprise AI agent use case is a knowledge base agent. Employees ask questions. The agent retrieves relevant information from internal documents, policies, procedures, and past work. The agent synthesizes accurate answers with appropriate citations.

For this use case, the comparison points clearly to LlamaIndex. Its retrieval architecture handles what a high-quality knowledge base agent requires: document complexity, metadata filtering, hybrid search, and precise response synthesis. Differences in retrieval accuracy can be meaningful here, and they directly affect whether users trust the agent.

Document Analysis and Due Diligence

Legal teams reviewing contracts, finance teams analyzing financial statements, and product teams evaluating technical specifications all need agents that reason accurately over complex documents. LlamaIndex’s document processing, hierarchical summarization, and precise retrieval enable agents that handle document complexity reliably.

Multi-Source Data Synthesis

Some internal agents answer cross-functional questions by pulling from several systems at once. For example, one agent might draw on engineering documentation in Confluence, customer data in Salesforce, product specifications in Notion, and project histories in Jira. That agent needs sophisticated multi-source retrieval. LlamaIndex’s index composition capabilities and data source connectors address this pattern particularly well.
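
One way to picture multi-source retrieval is a router. The router decides which source-specific retrievers to query, then merges their results with source labels. The sketch below is hypothetical plain Python. Its sources, keyword-based routing rules, and canned results stand in for real connectors and for the LLM-based selection a production router would more likely use. It illustrates the pattern, not LlamaIndex's API.

```python
from typing import Callable

# Hypothetical per-source retrievers returning canned snippets.
SOURCES: dict[str, Callable[[str], list[str]]] = {
    "confluence": lambda q: ["Architecture doc: billing service uses event sourcing."],
    "salesforce": lambda q: ["Account ACME: 3 open opportunities."],
    "notion": lambda q: ["Spec: billing v2 adds usage-based pricing."],
    "jira": lambda q: ["BILL-212: migrate invoices to v2 (in progress)."],
}

# Keyword routing rules; a production router might ask an LLM to choose instead.
ROUTES: dict[str, list[str]] = {
    "billing": ["confluence", "notion", "jira"],
    "customer": ["salesforce"],
    "account": ["salesforce"],
}


def route(question: str) -> list[str]:
    q = question.lower()
    chosen: list[str] = []
    for keyword, sources in ROUTES.items():
        if keyword in q:
            for source in sources:
                if source not in chosen:
                    chosen.append(source)
    return chosen or list(SOURCES)  # fall back to querying every source


def multi_source_retrieve(question: str) -> list[tuple[str, str]]:
    results: list[tuple[str, str]] = []
    for source in route(question):
        results.extend((source, snippet) for snippet in SOURCES[source](question))
    return results


for source, snippet in multi_source_retrieve("Status of the billing migration for customer ACME?"):
    print(f"[{source}] {snippet}")
```

Keeping the source label on every result is what lets the final answer cite where each fact came from.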

The Case for Using Both Together

Complementary Architectures

LangChain and LlamaIndex are not mutually exclusive. Many production enterprise AI agents use both frameworks together. LlamaIndex handles data ingestion, indexing, and retrieval. LangChain handles agent orchestration and tool coordination. Each framework contributes what it does best.

Integration points exist in both directions. LangChain’s community integrations include a retriever wrapper for LlamaIndex indexes, and a LlamaIndex query engine can be wrapped as a tool inside a LangChain agent. The combination gives internal enterprise agents LlamaIndex’s retrieval quality within LangChain’s orchestration architecture.
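
The division of labor can be sketched as an adapter. The retrieval side exposes a `query` method, and the orchestration side sees it as just another named tool with a description it can choose. The plain-Python sketch below assumes a hypothetical engine object with a `query(str)` method, loosely shaped like a LlamaIndex query engine. It does not use either framework's actual wrapper classes. `FakeHRQueryEngine`, `create_ticket`, and their outputs are invented for illustration.

```python
from dataclasses import dataclass
from typing import Callable, Protocol


class QueryEngine(Protocol):
    def query(self, question: str) -> str: ...


@dataclass
class Tool:
    name: str
    description: str  # the orchestrator's LLM reads this to decide when to call the tool
    func: Callable[[str], str]


def as_tool(engine: QueryEngine, name: str, description: str) -> Tool:
    """Adapt a retrieval engine to the orchestration layer's tool shape."""
    return Tool(name=name, description=description, func=lambda q: str(engine.query(q)))


class FakeHRQueryEngine:
    # Hypothetical stand-in for an index-backed query engine.
    def query(self, question: str) -> str:
        return "Parental leave details are in HR Policy section 4.2."


def create_ticket(summary: str) -> str:
    # Hypothetical action tool owned by the orchestration side.
    return f"Created ticket: {summary}"


tools = {
    t.name: t
    for t in [
        as_tool(FakeHRQueryEngine(), "hr_docs", "Answers questions from HR policy documents."),
        Tool("create_ticket", "Opens an HR ticket.", create_ticket),
    ]
}

# In practice an agent loop picks the tool; here we call them directly.
answer = tools["hr_docs"].func("How long is parental leave?")
ticket = tools["create_ticket"].func("Employee asked about parental leave")
print(answer)
print(ticket)
```

Because the orchestrator only sees names, descriptions, and callables, the retrieval backend can be swapped without touching the agent loop.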

For teams building sophisticated enterprise agents, LangChain vs LlamaIndex becomes a false choice. The real question becomes which framework owns the agent loop and which handles data retrieval.

When Integration Adds Complexity

Using both frameworks adds integration complexity, dependency management overhead, and conceptual surface area for the development team. For simpler agent architectures, this overhead outweighs the benefit of combining frameworks. Teams should evaluate whether their use case genuinely requires capabilities from both frameworks before committing to integration complexity.

Start with the framework that best matches your primary use case. Add the complementary framework only when specific capability gaps create clear problems that cannot be addressed within the primary framework.

Factors to Consider When Making Your Decision

Your Team’s Technical Background

Teams with strong Python development backgrounds and experience with complex software architectures typically adapt to LangChain’s flexibility faster. Teams with less software engineering depth often find LlamaIndex’s more focused API easier to work with productively. The right framework for your project is partly the right framework for your team’s specific capability profile.

Your Data Architecture

Enterprise data that lives primarily in structured databases and requires precise, filtered retrieval benefits from LlamaIndex’s indexing sophistication. Enterprise data that requires complex transformations and processing before it is useful often fits better within LangChain’s pipeline architecture. Assess your actual data situation honestly before selecting a framework.

Your Agent’s Primary Job

Answering questions accurately points to LlamaIndex. Taking actions across multiple systems points to LangChain. A roughly even mix of both suggests evaluating both frameworks and potentially combining them. Decisions anchored to the agent’s actual primary function produce better outcomes than decisions based on hype or community momentum alone.

Your Long-Term Maintenance Requirements

Both frameworks evolve rapidly. APIs change between major versions, and integrations get deprecated and rebuilt. Teams maintaining internal agents over multiple years need to budget for regular framework upgrade work. LangChain’s larger community produces more upgrade documentation and community support resources. LlamaIndex’s narrower scope may mean fewer breaking changes in the data retrieval components that form its core, though both projects have restructured their packages in the past.

FAQs About LangChain vs LlamaIndex for AI Agents

Which framework is more popular in 2026?

Both frameworks maintain large and active communities in 2026. LangChain has a larger total GitHub star count and broader community activity across diverse use cases. LlamaIndex has a more concentrated and deeply engaged community around RAG and enterprise data retrieval specifically. In popularity terms, LangChain has wider reach and LlamaIndex has deeper specialization.

Can LlamaIndex build agents or is it only for RAG?

LlamaIndex builds capable agents through its ReAct agent implementation and tool abstractions. These agents handle tool calling, multi-step reasoning, and external API integration effectively. LlamaIndex’s agent capabilities are strongest when data retrieval is central to the agent’s function. Pure orchestration complexity without significant retrieval requirements is better served by LangChain’s richer agent infrastructure.

Is LangChain harder to learn than LlamaIndex?

LangChain’s larger API surface creates a steeper initial learning curve for developers new to AI frameworks. LlamaIndex’s focused data-centric design produces a more coherent learning experience for teams building retrieval-focused applications. On learning curve, the comparison depends heavily on what the agent does. Both frameworks have good documentation and active communities that support the learning process.

Do large enterprises use these frameworks in production?

Yes. Both frameworks see production deployment at large enterprises globally. LangChain appears more frequently in enterprise orchestration and process automation contexts. LlamaIndex appears more frequently in enterprise knowledge management and document intelligence contexts. Both have enterprise support options and security documentation that enterprise procurement and security teams require.

Which framework works best with private LLM deployments?

Both frameworks support private LLM deployments through Azure OpenAI, AWS Bedrock, locally hosted models via Ollama, and similar private inference options. LangChain’s broader integration library includes more private deployment configuration patterns from community contributions. LlamaIndex connects to private LLMs equally well for its retrieval workflows. On private deployment support, the two are essentially equivalent in capability.

How do these frameworks handle security and data privacy?

Neither framework is a data store in its own right. Both pass data to whichever LLM provider you configure, and any persistence happens where you configure it: a vector database, a document store, or files on disk. Security depends on your infrastructure choices (which LLM provider, which vector database, which cloud environment) rather than on the framework itself. Observability tooling is part of that picture too: with LangSmith tracing enabled, run inputs and outputs are sent to the LangSmith service you point it at. Both frameworks support configurations that keep data entirely within your private infrastructure. Internal enterprise agents handling sensitive data should implement appropriate encryption, access controls, and audit logging regardless of framework choice.
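
The framework will pass whatever it retrieves to the model. One common safeguard is therefore to filter retrieved content by the requesting user's permissions before it reaches the prompt. The sketch below is hypothetical and framework-agnostic. It assumes each chunk carries an `allowed_groups` field populated at ingestion time from the source system's access controls. It also appends every allow or deny decision to an audit log.

```python
from dataclasses import dataclass

AUDIT_LOG: list[str] = []


@dataclass(frozen=True)
class Chunk:
    text: str
    source: str
    allowed_groups: frozenset[str]  # hypothetical ACL copied from the source system at ingestion


def authorized_chunks(chunks: list[Chunk], user: str, user_groups: set[str]) -> list[Chunk]:
    """Keep only chunks the user may see, and log every decision for auditing."""
    visible: list[Chunk] = []
    for chunk in chunks:
        allowed = bool(chunk.allowed_groups & user_groups)
        AUDIT_LOG.append(f"{user} {'ALLOW' if allowed else 'DENY'} {chunk.source}")
        if allowed:
            visible.append(chunk)
    return visible


retrieved = [
    Chunk("Salary bands for engineering roles.", "comp/bands.xlsx", frozenset({"hr"})),
    Chunk("VPN setup steps for new laptops.", "it/vpn.md", frozenset({"all-staff"})),
]
context = authorized_chunks(retrieved, "dana", {"all-staff", "engineering"})
print([c.source for c in context])
print(AUDIT_LOG)
```

Filtering after retrieval is the simplest option. Many vector databases can also apply metadata filters during the search itself, which avoids fetching restricted chunks at all.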

Should I choose based on the framework’s roadmap?

Both LangChain and LlamaIndex ship updates frequently and have public roadmaps indicating continued investment. Choosing based on current roadmap announcements is less reliable than choosing based on how well current capabilities match your use case. Frameworks can pivot, slow down, or accelerate development unpredictably. A decision grounded in how well each framework fits your actual requirements today will serve you better than a bet on future roadmap delivery.

Conclusion

The LangChain vs LlamaIndex for AI agents question does not have a universal answer. Both frameworks are genuinely excellent at what they were designed to do. Both have active communities, real production deployments, and continuing investment behind them.

LangChain wins when your internal agent needs to orchestrate complex multi-step processes, coordinate tools and APIs, handle conditional routing, and manage stateful long-running workflows. Its composable architecture, mature agent abstractions, and LangGraph extension make complex orchestration achievable and maintainable.

LlamaIndex wins when your internal agent needs to retrieve accurate information from large, complex, heterogeneous enterprise data sources. Its indexing flexibility, retrieval precision, and data connector ecosystem make it possible to build knowledge base agents that employees actually trust.

The honest answer for many internal enterprise AI agents is that both frameworks are worth evaluating seriously. The retrieval quality that LlamaIndex delivers combined with the orchestration sophistication that LangChain provides creates capabilities that neither achieves alone. Many mature enterprise agent architectures use both together.

Start by defining what your agent primarily needs to do. If it answers questions from internal data, start with LlamaIndex. If it takes actions and coordinates workflows, start with LangChain. Build a focused proof of concept that tests your specific use case requirements against real data and real workflows. The right framework will reveal itself through practical evaluation faster than any theoretical comparison.

LangChain vs LlamaIndex for AI agents is ultimately a productive question to ask carefully and answer empirically. Build something small. Measure what matters. Choose based on evidence from your actual use case. Then build the agent your organization genuinely needs.