Introduction

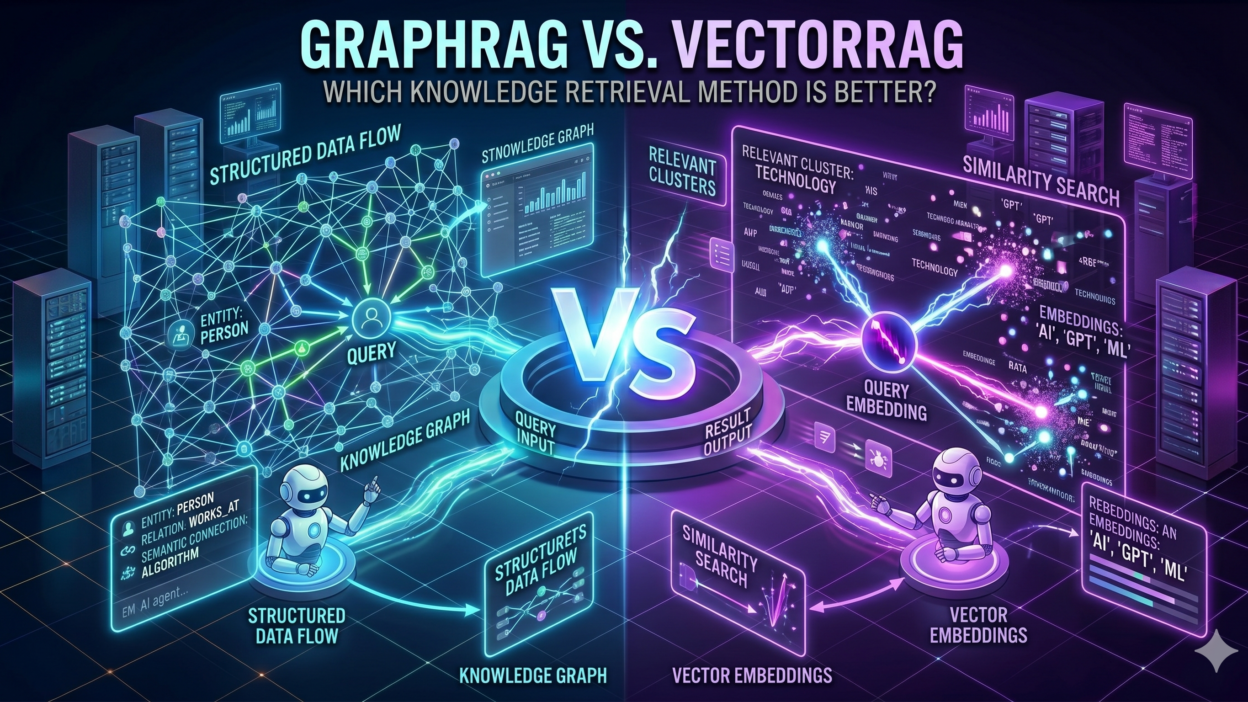

TL;DR: AI systems today are only as smart as the knowledge they can retrieve. Retrieval-Augmented Generation, or RAG, lets a language model pull in external information before it generates a response, and it sits at the heart of many modern AI applications. Two major retrieval approaches have emerged in this space: GraphRAG and VectorRAG. Choosing between them has become a recurring question in AI development circles.

Both methods solve the same core problem. They help AI models find relevant information from large knowledge bases. The way each method does this, though, is fundamentally different. GraphRAG uses knowledge graphs and relationships between entities. VectorRAG relies on vector embeddings and semantic similarity. Each has strengths. Each has limitations.

This post breaks down what separates these two methods. You will understand how each one works, where each one shines, and which one suits your specific use case. Whether you build enterprise AI tools or evaluate AI platforms, this guide gives you what you need to make a smart choice.

What Is VectorRAG? Understanding the Vector-Based Retrieval Approach

VectorRAG is the more established of the two methods. It converts text data into numerical representations called vector embeddings. Each piece of text gets mapped to a point in high-dimensional space. Texts with similar meanings end up close together in that space. When a user asks a question, the system converts the query into a vector and searches for the nearest matching vectors in the database.

This semantic search approach works remarkably well for many tasks. It captures meaning beyond exact keyword matching. A question about car maintenance can find relevant content about vehicle upkeep even if those exact words never appear together. The model understands context and retrieves accordingly.
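
To make the idea of "close together in that space" concrete, here is a minimal sketch of nearest-vector matching with cosine similarity. The three-dimensional vectors are hand-made placeholders, not the output of any real embedding model, and the document titles are invented for illustration.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

# Hand-made 3-dimensional "embeddings" (hypothetical values, illustration only).
embeddings = {
    "vehicle upkeep checklist": [0.9, 0.1, 0.0],
    "chocolate cake recipe": [0.0, 0.2, 0.9],
    "quarterly tax filing guide": [0.1, 0.9, 0.1],
}

# Stands in for the embedded query "car maintenance".
query_vector = [0.8, 0.2, 0.1]

# Pick the stored text whose vector points in the most similar direction.
best_match = max(
    embeddings,
    key=lambda text: cosine_similarity(query_vector, embeddings[text]),
)
print(best_match)
```

The query shares no words with the winning title here. The match comes entirely from the geometry of the vectors, which is the property real embedding models are trained to provide.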

How VectorRAG Stores and Retrieves Information

VectorRAG systems use vector databases like Pinecone, Weaviate, or Qdrant to store embeddings. Documents are chunked into smaller segments. Each chunk receives an embedding from a model like OpenAI’s text-embedding-ada-002 or a similar encoder. These embeddings are stored alongside the original text in the vector database.

At query time, the system embeds the user’s question and runs an approximate nearest neighbor search. The top-k most similar chunks are returned to the language model as context. The model then generates a response grounded in those retrieved documents. The retrieval step is fast, often completing in milliseconds.
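
The store-then-search flow above can be sketched in a few lines. This is a toy under stated assumptions: `embed` is a bag-of-words stand-in for a real embedding model, `ToyVectorStore` is a hypothetical in-memory class rather than any vendor's API, and the search is exact brute force where a production vector database would use an approximate nearest neighbor index.

```python
import heapq
import math
from collections import Counter

def embed(text):
    """Toy stand-in for an embedding model: a sparse bag-of-words vector."""
    return Counter(text.lower().split())

def cosine(a, b):
    """Cosine similarity between two sparse word-count vectors."""
    dot = sum(a[token] * b[token] for token in a if token in b)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(
        sum(v * v for v in b.values())
    )
    return dot / norm if norm else 0.0

class ToyVectorStore:
    """Hypothetical in-memory store keeping each embedding next to its chunk."""

    def __init__(self):
        self.rows = []  # list of (embedding, original chunk text)

    def add(self, chunk):
        self.rows.append((embed(chunk), chunk))

    def top_k(self, query, k=2):
        """Exact search: score every chunk, return the k best (score, chunk) pairs."""
        query_vec = embed(query)
        scored = ((cosine(query_vec, vec), chunk) for vec, chunk in self.rows)
        return heapq.nlargest(k, scored)

store = ToyVectorStore()
for chunk in [
    "reset your password from the account settings page",
    "invoices are emailed on the first day of each month",
    "contact support to change the email on your account",
]:
    store.add(chunk)

results = store.top_k("how do I reset my account password", k=2)
context = "\n".join(chunk for _, chunk in results)  # what the LLM would receive
print(context)
```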

Where VectorRAG Works Best

VectorRAG handles document search and question answering exceptionally well. Customer support systems that retrieve answers from FAQs are a natural fit. Legal research tools that scan case libraries rely on it heavily. Any application where single-document relevance matters more than cross-document reasoning fits VectorRAG well.

The method also scales impressively. You can index millions of documents and still get fast retrieval times. Setup is relatively straightforward. Many cloud providers offer managed vector search services. Development teams can build a VectorRAG pipeline in days rather than weeks.

What Is GraphRAG? Understanding the Graph-Based Retrieval Approach

GraphRAG takes a fundamentally different approach to knowledge retrieval. Instead of treating documents as isolated chunks of text, it builds a knowledge graph. This graph maps out entities and the relationships between them. A person connects to a company. A company connects to a product. A product connects to a category. Every connection carries meaning.

Microsoft Research popularized GraphRAG with their 2024 paper on the subject. They reported that graph-based retrieval outperforms a naive vector-based baseline on global questions, particularly those requiring synthesis across an entire document collection. The GraphRAG vs VectorRAG comparison gained serious academic and industry attention from that point forward.

How GraphRAG Builds and Traverses Knowledge Graphs

GraphRAG systems first extract entities and relationships from raw documents. Large language models often handle this extraction step. The system identifies named entities like people, places, organizations, and events. It then identifies how these entities relate to one another. These relationships form the edges of the knowledge graph.
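
As a rough illustration of how extracted relationships become graph edges, the sketch below turns a list of triples into adjacency lists. The triples are hard-coded stand-ins for what an extraction step might emit, and the relation names and the `build_graph` helper are hypothetical.

```python
from collections import defaultdict

# Hypothetical (subject, relation, object) triples from an extraction step.
triples = [
    ("Ada Lovelace", "WORKED_WITH", "Charles Babbage"),
    ("Charles Babbage", "DESIGNED", "Analytical Engine"),
    ("Ada Lovelace", "WROTE_ABOUT", "Analytical Engine"),
]

def build_graph(triples):
    """Return adjacency lists: node -> list of (relation, neighbor) edges."""
    graph = defaultdict(list)
    for subject, relation, obj in triples:
        graph[subject].append((relation, obj))
        graph[obj]  # touch the key so the object exists as a node too
    return graph

graph = build_graph(triples)
print(sorted(graph))           # every entity becomes a node
print(graph["Ada Lovelace"])   # labeled outgoing edges
```

A graph database would persist the same structure. The point is only that entities become nodes and each extracted relationship becomes one labeled edge.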

When a user queries the system, GraphRAG traverses the graph rather than searching for similar vectors. It starts from relevant nodes and follows relationship paths to gather connected information. This traversal can span multiple documents and multiple hops. The context delivered to the language model reflects the connected web of knowledge, not just the closest matching text chunks.
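
Multi-hop traversal can be sketched as a breadth-first search with a hop limit. The graph below is invented, and `neighborhood` is a hypothetical helper. Real GraphRAG systems add relevance filtering on top, so treat this as the bare traversal idea only.

```python
from collections import deque

# Hypothetical undirected knowledge graph: node -> set of neighbors.
graph = {
    "Acme Corp": {"Jane Doe", "Widget X"},
    "Jane Doe": {"Acme Corp", "Beta LLC"},
    "Widget X": {"Acme Corp", "Hardware"},
    "Beta LLC": {"Jane Doe"},
    "Hardware": {"Widget X"},
}

def neighborhood(graph, start, max_hops):
    """Breadth-first search returning each reachable node with its hop distance."""
    distances = {start: 0}
    queue = deque([start])
    while queue:
        node = queue.popleft()
        if distances[node] == max_hops:
            continue  # do not expand beyond the hop limit
        for neighbor in graph.get(node, ()):
            if neighbor not in distances:
                distances[neighbor] = distances[node] + 1
                queue.append(neighbor)
    return distances

one_hop = neighborhood(graph, "Acme Corp", max_hops=1)
two_hops = neighborhood(graph, "Acme Corp", max_hops=2)
print(one_hop)
print(two_hops)
```

Raising `max_hops` widens the context gathered for the language model, at the cost of pulling in less directly related entities.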

Community Summaries and Global Context in GraphRAG

One of GraphRAG’s most distinctive features is community detection. The system groups related entities into communities. It generates summary descriptions for each community. These summaries give the language model high-level context about entire topic clusters, not just individual facts.

This global context capability sets GraphRAG apart from VectorRAG in a meaningful way. When a question requires understanding how different parts of a knowledge base relate to each other, GraphRAG’s community summaries provide that big-picture view. VectorRAG lacks this cross-document synthesis by design.
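
The grouping step can be illustrated with a deliberately simple stand-in. The GraphRAG paper uses the Leiden community detection algorithm; the sketch below swaps in plain connected components, which is far cruder but shows the shape of the output. The edges are invented, and the "summary" is a placeholder string where a real pipeline would ask a language model to write one.

```python
def connected_components(edges):
    """Group nodes into components with a small union-find structure."""
    parent = {}

    def find(node):
        parent.setdefault(node, node)
        while parent[node] != node:
            parent[node] = parent[parent[node]]  # path halving
            node = parent[node]
        return node

    for a, b in edges:
        root_a, root_b = find(a), find(b)
        if root_a != root_b:
            parent[root_a] = root_b

    groups = {}
    for node in parent:
        groups.setdefault(find(node), set()).add(node)
    return sorted(sorted(members) for members in groups.values())

# Hypothetical entity graph with two unrelated topic clusters.
edges = [
    ("aspirin", "headache"),
    ("aspirin", "ibuprofen"),
    ("neo4j", "cypher"),
    ("cypher", "graph query"),
]

communities = connected_components(edges)

# Placeholder summaries; a real pipeline would generate these with an LLM.
summaries = [f"Community about: {', '.join(members)}" for members in communities]
for summary in summaries:
    print(summary)
```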

GraphRAG vs VectorRAG: A Direct Head-to-Head Comparison

Retrieval Mechanism

The retrieval mechanism is the most fundamental difference in the GraphRAG vs VectorRAG debate. VectorRAG finds semantically similar text chunks. GraphRAG traverses structured relationship paths. VectorRAG answers the question: what text is most like this query? GraphRAG answers the question: what entities and relationships are relevant to this query?

For factual lookups, VectorRAG often wins on speed. For multi-hop reasoning, GraphRAG wins on accuracy. A question like ‘Who are the key investors in companies that compete with OpenAI?’ requires multiple relationship traversals. GraphRAG handles this naturally. VectorRAG struggles without significant prompt engineering.
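
The investor question above boils down to two chained relationship lookups. Here is a minimal sketch with entirely made-up company and fund names; the relation labels `COMPETES_WITH` and `INVESTED_IN` are assumptions about how such a graph might be labeled.

```python
# Hypothetical labeled edges: (source, relation, target). All names are made up.
edges = [
    ("RivalOne", "COMPETES_WITH", "TargetCo"),
    ("RivalTwo", "COMPETES_WITH", "TargetCo"),
    ("Fund A", "INVESTED_IN", "RivalOne"),
    ("Fund B", "INVESTED_IN", "RivalOne"),
    ("Fund B", "INVESTED_IN", "RivalTwo"),
    ("Fund C", "INVESTED_IN", "TargetCo"),
]

def sources(edges, relation, target):
    """All nodes with an edge of the given relation pointing at target."""
    return {s for s, r, t in edges if r == relation and t == target}

def investors_in_competitors(edges, company):
    """Hop 1: find competitors. Hop 2: find investors in each competitor."""
    competitors = sources(edges, "COMPETES_WITH", company)
    return {
        competitor: sorted(sources(edges, "INVESTED_IN", competitor))
        for competitor in sorted(competitors)
    }

answer = investors_in_competitors(edges, "TargetCo")
print(answer)
```

Fund C never appears in the answer because it invests in the target company itself, not in a competitor. A similarity search over text chunks has no direct way to express that distinction.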

Knowledge Representation

VectorRAG represents knowledge as dense numerical vectors. The meaning lives in the geometry of the vector space. GraphRAG represents knowledge as nodes and edges in an explicit graph. The meaning lives in the labeled relationships between entities.

Vector representations are implicit. They capture meaning without stating it directly. Graph representations are explicit. They name the relationships clearly. This explicitness makes GraphRAG more interpretable. You can trace exactly why the system retrieved a particular piece of information by following the graph path.

Reasoning Capability

Reasoning is where GraphRAG vs VectorRAG differences become most dramatic. VectorRAG excels at single-hop retrieval. One query finds one relevant passage. GraphRAG excels at multi-hop reasoning. One query triggers a chain of relationship traversals that assembles a complete picture from multiple sources.

Complex analytical questions benefit enormously from GraphRAG’s reasoning capabilities. Summarizing themes across an entire document corpus requires cross-document synthesis. Understanding how one event caused another requires causal relationship traversal. VectorRAG cannot do this reliably. GraphRAG is designed precisely for this purpose.

Scalability and Performance

VectorRAG scales more easily than GraphRAG. Vector databases handle billions of embeddings with well-understood infrastructure. Query latency stays low even at massive scale. The technology is mature. Tooling is abundant. Teams with standard engineering skills can operate VectorRAG systems confidently.

GraphRAG requires more sophisticated infrastructure. Knowledge graph databases like Neo4j or Amazon Neptune handle the graph storage. Graph traversal at scale demands careful optimization. Construction of the knowledge graph itself is computationally intensive. This makes GraphRAG more expensive and complex to build and maintain.

Setup Complexity and Cost

VectorRAG wins on ease of implementation. Embedding documents, storing vectors, and running similarity search is a well-documented process. Many open-source libraries like LangChain and LlamaIndex offer ready-made VectorRAG pipelines. A developer can have a working prototype in hours.

GraphRAG requires more upfront investment. Building a quality knowledge graph demands entity extraction, relationship mapping, and graph construction. Each of these steps needs careful design. The payoff is higher quality retrieval for complex queries. Teams must decide whether the query complexity they face justifies that investment.

When to Choose GraphRAG and When to Choose VectorRAG

Use Cases That Favor VectorRAG

VectorRAG fits best when your queries are straightforward and document-level. An internal knowledge base for customer support works well with VectorRAG. Employees ask questions. The system finds the closest matching FAQ or policy document. The answer is clear and direct. No multi-hop reasoning is necessary.

E-commerce product search also suits VectorRAG well. A shopper describes what they want in natural language. The system finds semantically similar product descriptions. Recommendations appear instantly. The relationship between products matters less than the similarity between query and description.

Academic paper search represents another strong VectorRAG use case. Researchers enter a topic. The system finds the most semantically related abstracts and papers. Quick relevance ranking is the goal. Deep relational reasoning is not required. VectorRAG delivers fast and accurate results for this purpose.

Use Cases That Favor GraphRAG

GraphRAG shines in intelligence analysis and research synthesis. An analyst studying geopolitical events needs to understand how actors, organizations, and incidents connect across thousands of documents. GraphRAG maps these relationships explicitly and retrieves connected information efficiently. VectorRAG would miss critical cross-document links.

Healthcare knowledge management is another powerful GraphRAG application. Medical knowledge is deeply relational. A drug interacts with a condition that affects a patient population that responds to a treatment protocol. Traversing these relationships requires a graph. GraphRAG gives clinical decision support systems the relational reasoning they need.

Financial fraud detection benefits from GraphRAG’s relationship traversal. Fraud patterns often involve networks of entities. A person connects to a shell company that connects to a suspicious transaction that connects to another person. Graph traversal exposes these networks naturally. VectorRAG would require piecing together these connections manually.

Hybrid Approaches: Combining GraphRAG and VectorRAG

Many production AI systems today do not choose one method exclusively. The GraphRAG vs VectorRAG question often resolves to a hybrid answer. Smart architectures use both methods together. Each method handles the tasks it does best.

A hybrid system might use VectorRAG for initial candidate retrieval. The system pulls the most semantically relevant text chunks first. It then uses GraphRAG to enrich those results with relational context. The language model receives both semantically similar passages and graph-connected entity relationships. The resulting response reflects richer context than either method alone would provide.
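
One way to picture this enrichment step: take the chunks a vector search returned, look up the entities they mention, and attach one-hop graph facts. Everything below is hypothetical, including the scores, the entity lists attached to each chunk, and the `enrich` helper.

```python
# Hypothetical vector search output: (score, chunk text, entities in the chunk).
vector_hits = [
    (0.91, "Acme Corp launched Widget X in March.", ["Acme Corp", "Widget X"]),
    (0.84, "Jane Doe joined the board last year.", ["Jane Doe"]),
]

# Hypothetical knowledge graph: entity -> list of (relation, other entity).
graph = {
    "Acme Corp": [("CEO", "Jane Doe"), ("MAKES", "Widget X")],
    "Widget X": [("CATEGORY", "Hardware")],
    "Jane Doe": [("BOARD_MEMBER_OF", "Beta LLC")],
}

def enrich(vector_hits, graph):
    """Attach one-hop graph facts for every entity mentioned in retrieved chunks."""
    passages = [text for _, text, _ in vector_hits]
    facts = []
    for _, _, entities in vector_hits:
        for entity in entities:
            for relation, other in graph.get(entity, []):
                fact = f"{entity} -[{relation}]-> {other}"
                if fact not in facts:  # avoid repeating a fact
                    facts.append(fact)
    return {"passages": passages, "facts": facts}

context = enrich(vector_hits, graph)
print("\n".join(context["passages"] + context["facts"]))
```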

How Hybrid RAG Architectures Work

In a hybrid architecture, the query router decides which retrieval method handles each query type. Simple factual questions route to the vector search pipeline. Complex relational questions route to the graph traversal pipeline. Queries requiring both approaches activate both pipelines simultaneously.
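
A query router can be as simple or as sophisticated as you like. The sketch below uses crude keyword cues purely to show the routing decision. The cue lists are invented, and a production router would more plausibly use a trained classifier or a language model.

```python
import re

# Hypothetical cue patterns, chosen only for this illustration.
RELATIONAL_CUES = re.compile(
    r"\b(connected to|related to|linked to|relationship|between)\b",
    re.IGNORECASE,
)
LOOKUP_CUES = re.compile(r"^(what is|what are|when|where|how do i)\b", re.IGNORECASE)

def route(query):
    """Return the list of pipelines that should handle the query."""
    text = query.strip()
    relational = bool(RELATIONAL_CUES.search(text))
    lookup = bool(LOOKUP_CUES.match(text))
    if relational and lookup:
        return ["vector", "graph"]  # activate both pipelines
    if relational:
        return ["graph"]
    return ["vector"]  # default to the cheaper pipeline

for question in [
    "What is the refund policy?",
    "Which suppliers are connected to Acme Corp?",
    "What is the relationship between Acme Corp and Beta LLC?",
]:
    print(question, "->", route(question))
```

Defaulting to the vector pipeline is a design choice in this sketch: it is the cheaper path, so ambiguous queries fall through to it.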

The results from both pipelines merge before reaching the language model. A context assembly layer ranks and deduplicates information. The language model receives a coherent, comprehensive context window. This approach delivers the speed of VectorRAG and the depth of GraphRAG within a single system.
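
A context assembly layer might look something like the following. The sketch assumes both pipelines emit `(score, text)` pairs on a comparable scale, which is a strong assumption: real systems usually need to normalize or re-rank scores from different retrievers. The function name and sample data are hypothetical.

```python
def assemble_context(vector_results, graph_results, max_items=4):
    """Merge (score, text) pairs, keep the best score per distinct text, rank them."""
    best = {}
    for score, text in list(vector_results) + list(graph_results):
        key = " ".join(text.lower().split())  # normalize case and whitespace
        if key not in best or score > best[key][0]:
            best[key] = (score, text)
    ranked = sorted(best.values(), key=lambda item: item[0], reverse=True)
    return [text for _, text in ranked[:max_items]]

# Hypothetical pipeline outputs; the graph pipeline repeats one passage.
vector_results = [
    (0.90, "Acme Corp launched Widget X."),
    (0.70, "Widget X ships in March."),
]
graph_results = [
    (0.80, "Acme Corp -[CEO]-> Jane Doe"),
    (0.60, "Acme Corp  launched Widget X."),  # duplicate with extra whitespace
]

merged = assemble_context(vector_results, graph_results)
print("\n".join(merged))
```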

Tools Supporting Hybrid RAG

Several platforms now support hybrid retrieval approaches. Microsoft’s GraphRAG open-source library integrates naturally with Azure AI Search. Neo4j offers vector search alongside graph traversal in a single database. LangChain provides abstractions for combining multiple retrieval strategies. These tools lower the barrier to building hybrid systems significantly.

GraphRAG vs VectorRAG Performance Benchmarks and Research Findings

Academic research has begun to clarify where each method excels. Microsoft’s original GraphRAG paper reported significant improvements on global question answering tasks. For queries requiring synthesis across an entire corpus, LLM-judged comparisons showed win rates above 70% on answer comprehensiveness in favor of GraphRAG over naive VectorRAG approaches.

VectorRAG, however, tends to outperform GraphRAG on local, specific queries. When the answer exists cleanly within a single document or passage, vector similarity search usually finds it faster and with higher precision. The GraphRAG vs VectorRAG performance gap narrows or reverses when query complexity decreases.

Metrics That Matter in RAG Evaluation

Evaluating RAG systems requires looking at multiple metrics together. Retrieval precision measures how many retrieved chunks actually contain relevant information. Recall measures how many relevant chunks the system successfully retrieved. Faithfulness measures whether the generated answer accurately reflects the retrieved content. Answer relevance measures how well the final answer addresses the original question.
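
The two retrieval metrics are easy to compute once you have a labeled set of relevant chunks. Here is a small sketch with hypothetical chunk IDs. Faithfulness and answer relevance are not covered, since they typically need a human or model-based judge rather than a set comparison.

```python
def retrieval_precision(retrieved, relevant):
    """Fraction of retrieved chunks that are actually relevant."""
    if not retrieved:
        return 0.0
    return sum(1 for chunk in retrieved if chunk in relevant) / len(retrieved)

def retrieval_recall(retrieved, relevant):
    """Fraction of relevant chunks that the system managed to retrieve."""
    if not relevant:
        return 0.0
    return len(set(retrieved) & set(relevant)) / len(relevant)

# Hypothetical chunk IDs for one evaluation query.
retrieved = ["c1", "c2", "c3", "c4"]
relevant = {"c1", "c3", "c7"}

precision = retrieval_precision(retrieved, relevant)
recall = retrieval_recall(retrieved, relevant)
print(f"precision={precision:.2f} recall={recall:.2f}")
```

Two of the four retrieved chunks are relevant, and two of the three relevant chunks were found, so the system in this example is both noisy and incomplete.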

GraphRAG tends to score higher on faithfulness for complex queries. Its structured retrieval provides more accurate relational context. VectorRAG tends to score higher on retrieval speed and precision for simple queries. Choosing the right evaluation metrics for your use case is essential before declaring a winner in the GraphRAG vs VectorRAG comparison.

Practical Guide to Implementing GraphRAG and VectorRAG

Getting Started with VectorRAG

Starting a VectorRAG project requires four main decisions. First, pick an embedding model. OpenAI’s text-embedding-3-small offers good quality at low cost. Second, choose a vector database. Pinecone works well for managed infrastructure. Chroma works well for local development. Third, define your chunking strategy. Chunks of 512 tokens with 50-token overlaps are a reasonable starting point for many document types, though the best values depend on your content. Fourth, set your similarity threshold. This filters out low-relevance results before they reach the language model.
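
The third and fourth decisions translate directly into code. The sketch below chunks a token list with overlap and filters scored results by a threshold. It assumes the text is already tokenized. The placeholder tokens, the scores, and the 0.5 threshold are all invented for illustration.

```python
def chunk_tokens(tokens, chunk_size=512, overlap=50):
    """Split a token list into windows that share `overlap` tokens with the previous one."""
    if not 0 <= overlap < chunk_size:
        raise ValueError("overlap must be non-negative and smaller than chunk_size")
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(tokens), step):
        chunks.append(tokens[start:start + chunk_size])
        if start + chunk_size >= len(tokens):
            break  # the last window already reached the end
    return chunks

def filter_by_threshold(scored_chunks, threshold):
    """Drop (score, chunk) pairs whose similarity falls below the threshold."""
    return [(score, chunk) for score, chunk in scored_chunks if score >= threshold]

# Placeholder tokens standing in for a tokenized 1,000-token document.
tokens = [f"t{i}" for i in range(1000)]
chunks = chunk_tokens(tokens)
print([len(chunk) for chunk in chunks])

# Hypothetical similarity scores for three retrieved chunks.
kept = filter_by_threshold([(0.82, "chunk A"), (0.41, "chunk B"), (0.67, "chunk C")], 0.5)
print(kept)
```

The overlap means the last 50 tokens of one chunk reappear at the start of the next, so a sentence that straddles a boundary is still seen whole in at least one chunk.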

Indexing is straightforward. Load documents, chunk them, embed each chunk, and store the embeddings. Query time involves embedding the user query and searching for top-k nearest neighbors. The retrieved chunks feed into the language model’s context window along with the original query.

Getting Started with GraphRAG

GraphRAG implementation starts with entity extraction. Feed your documents to a language model with a prompt designed to identify entities and relationships. Store these in a graph database. Neo4j is the most popular choice for this purpose. Define relationship types clearly. Vague relationship labels produce a messy graph that retrieves poorly.
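
Here is one way the extraction step might be wired up, with the model call left out. The prompt wording, the relation schema, and the JSON response format are all assumptions made for this sketch, and `fake_response` is a hard-coded stand-in for whatever your language model returns.

```python
import json

# Hypothetical, deliberately small relation schema.
ALLOWED_RELATIONS = {"WORKS_AT", "FOUNDED", "ACQUIRED"}

PROMPT_TEMPLATE = (
    "Extract relationships from the text below.\n"
    "Return a JSON list of objects with keys subject, relation, object.\n"
    "Allowed relation values: {relations}.\n\n"
    "Text: {text}"
)

def build_prompt(text):
    """Fill the template with the allowed relation types and the source text."""
    relations = ", ".join(sorted(ALLOWED_RELATIONS))
    return PROMPT_TEMPLATE.format(relations=relations, text=text)

def parse_triples(llm_output):
    """Keep only well-formed triples whose relation is in the allowed set."""
    triples = []
    for item in json.loads(llm_output):
        relation = str(item.get("relation", "")).upper()
        subject, obj = item.get("subject"), item.get("object")
        if subject and obj and relation in ALLOWED_RELATIONS:
            triples.append((subject, relation, obj))
    return triples

prompt = build_prompt("Jane Doe founded Acme Corp in 2010.")

# Hard-coded stand-in for a model response; no real LLM is called here.
fake_response = (
    '[{"subject": "Jane Doe", "relation": "founded", "object": "Acme Corp"},'
    ' {"subject": "Jane Doe", "relation": "likes", "object": "coffee"}]'
)
triples = parse_triples(fake_response)
print(triples)
```

Rejecting relations outside the schema is what keeps vague labels out of the graph: the "likes" edge is dropped before it ever reaches the database.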

Microsoft’s open-source GraphRAG library accelerates this process significantly. It handles entity extraction, graph construction, community detection, and summary generation automatically. The library integrates with Azure OpenAI and supports local model deployment too. Run the indexing pipeline on your document corpus and the graph builds progressively.

Choosing the Right Architecture for Your Team

Your team’s skills matter as much as the use case. A team comfortable with databases and semantic search should start with VectorRAG. It has a gentler learning curve and faster time to production. A team with graph database experience and complex data relationships should explore GraphRAG seriously.

Budget also plays a role. GraphRAG indexing uses significantly more LLM tokens than VectorRAG indexing. For large document corpora, these costs add up quickly. Plan your budget for initial indexing and ongoing updates. Hybrid systems cost more to build and maintain than either approach alone.
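
A back-of-the-envelope estimate helps make the budget conversation concrete. Every number below is a placeholder, not a quoted price: substitute your provider's current rates and your own measurement of how many tokens the graph pipeline consumes per corpus token.

```python
def indexing_cost(corpus_tokens, price_per_million_tokens, token_multiplier=1.0):
    """Dollar cost of pushing a corpus through a model priced per million tokens."""
    return corpus_tokens / 1_000_000 * price_per_million_tokens * token_multiplier

# All values are placeholders for illustration only.
CORPUS_TOKENS = 50_000_000
EMBEDDING_PRICE = 0.02        # assumed USD per million tokens for embeddings
LLM_PRICE = 1.00              # assumed USD per million tokens for the LLM
GRAPH_TOKEN_MULTIPLIER = 1.5  # assumed overhead for prompts, outputs, summaries

vector_cost = indexing_cost(CORPUS_TOKENS, EMBEDDING_PRICE)
graph_cost = indexing_cost(CORPUS_TOKENS, LLM_PRICE, GRAPH_TOKEN_MULTIPLIER)

print(f"VectorRAG indexing: ${vector_cost:,.2f}")
print(f"GraphRAG indexing:  ${graph_cost:,.2f}")
```

The gap comes from two factors that compound: the per-token price of a generative model versus an embedding model, and the extra tokens spent on prompts and summaries. Re-run the estimate for each planned re-index, not just the first one.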

Frequently Asked Questions About GraphRAG vs VectorRAG

What is the main difference between GraphRAG and VectorRAG?

The core difference is how each method represents and retrieves knowledge. VectorRAG uses numerical vector embeddings to find semantically similar text chunks. GraphRAG uses a structured knowledge graph to traverse relationships between entities. VectorRAG is better for simple, document-level retrieval. GraphRAG is better for complex, multi-hop reasoning across many documents.

Which is faster: GraphRAG or VectorRAG?

VectorRAG is generally faster at query time. Vector similarity search is a well-optimized operation that runs in milliseconds at scale. GraphRAG involves graph traversal, which can be slower, especially for deep multi-hop queries. However, GraphRAG’s pre-computed community summaries can speed up global queries significantly compared to naive approaches.

Is GraphRAG more expensive than VectorRAG?

Yes. GraphRAG costs more to build and operate. The indexing process requires many LLM API calls for entity extraction and summary generation. VectorRAG indexing only requires embedding API calls, which are much cheaper. At runtime, GraphRAG may require additional LLM calls for query decomposition and graph traversal. Budget planning is essential before choosing GraphRAG for large corpora.

Can you use GraphRAG and VectorRAG together?

Absolutely. Hybrid architectures that combine both methods are growing in popularity. VectorRAG handles fast semantic retrieval for simple queries. GraphRAG handles relational reasoning for complex queries. A query router directs each question to the appropriate pipeline. The results merge before reaching the language model. This approach captures the strengths of both methods.

What types of applications benefit most from GraphRAG?

Applications involving complex knowledge relationships benefit most from GraphRAG. Intelligence analysis, healthcare knowledge management, financial risk assessment, legal research, and scientific literature synthesis all involve deeply relational data. These domains require reasoning across multiple connected entities, which is exactly what GraphRAG is designed to do.

Conclusion

The GraphRAG vs VectorRAG debate does not have a single winner. Both methods are powerful. Both serve real needs. The right choice depends entirely on what your application demands.

VectorRAG is the practical starting point for most teams. It is fast, affordable, and well-supported. If your use case involves straightforward document search or question answering, VectorRAG delivers excellent results with manageable complexity. Build it, deploy it, and iterate quickly.

GraphRAG is the right choice when complexity demands it. If your users ask questions that require reasoning across many documents and entities, GraphRAG earns its higher cost. Intelligence platforms, medical knowledge tools, and financial analysis systems all justify the investment. The quality improvement for relational queries is real and measurable.

Hybrid systems represent the future of production RAG architectures. As tooling matures and costs decrease, combining GraphRAG and VectorRAG will become the standard approach for serious AI applications. The GraphRAG vs VectorRAG distinction may eventually matter less than how well each method is integrated into a cohesive retrieval strategy.

Start by mapping your query types. Identify how often users ask simple factual questions versus complex relational ones. Let that ratio guide your architecture decision. Prototype with VectorRAG first. Add graph capabilities where your data and queries demand it. Build smart, test rigorously, and evolve your retrieval strategy as your application grows.

The best knowledge retrieval system is the one that consistently delivers accurate, relevant, and timely answers to your users. Whether that is GraphRAG, VectorRAG, or a hybrid of both depends on your data, your users, and your resources. Make the decision deliberately and revisit it as the technology continues to evolve.