Introduction

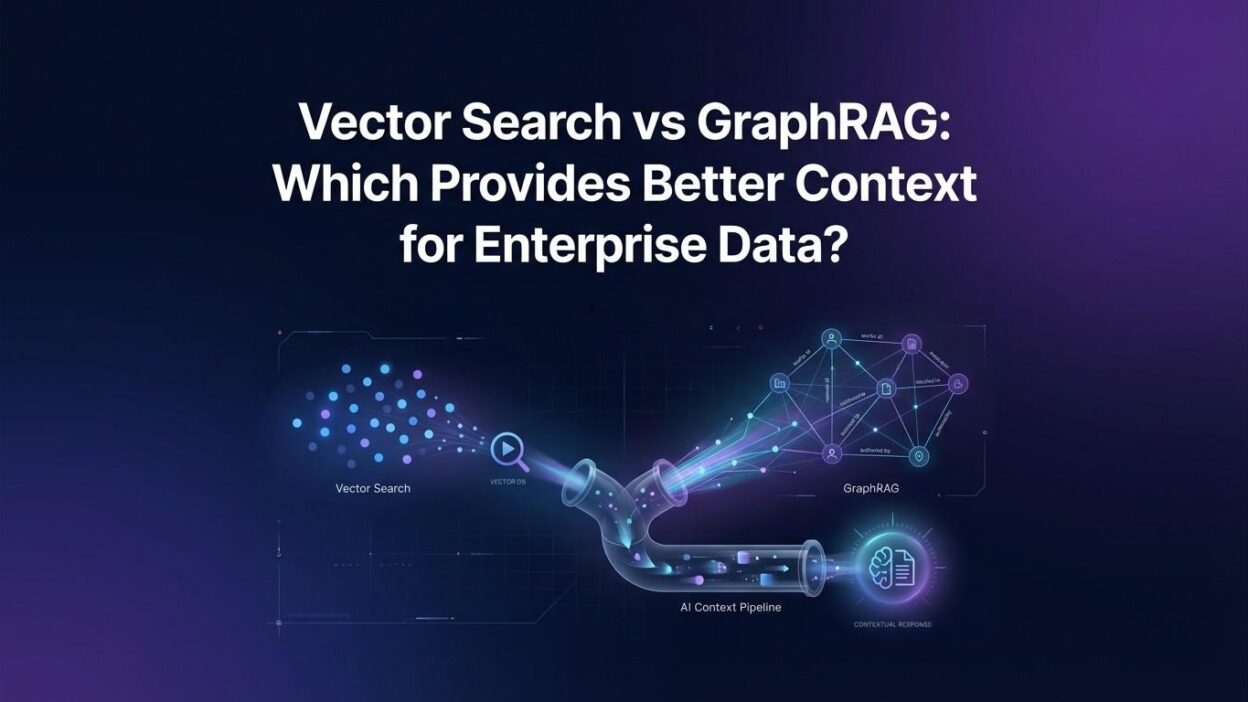

TL;DR Enterprise data is exploding. Documents, contracts, product records, support tickets, emails, wikis — it piles up fast. Finding the right information at the right moment is a real operational challenge. Traditional keyword search falls short. Semantic retrieval tools are filling the gap. Two approaches dominate the conversation right now: vector search and GraphRAG.

The debate around Vector Search vs GraphRAG is not just academic. It shapes how enterprises build AI assistants, knowledge bases, and decision-support tools. Each approach retrieves information differently. Each has real strengths and genuine limitations. The wrong choice can cost months of engineering time and produce a system that frustrates users.

This blog breaks down both technologies clearly. It explains how each one works, where each one excels, and which scenarios favor one over the other. By the end, you will have a practical framework for choosing the right approach for your enterprise.

Setting the Stage: What Is Retrieval-Augmented Generation?

Before comparing Vector Search vs GraphRAG, it helps to understand why retrieval matters at all. Large language models (LLMs) like GPT-4 or Claude are powerful. But they have a fixed knowledge cutoff. They do not know your company’s internal documents. They cannot access your proprietary data unless you give it to them.

Retrieval-Augmented Generation (RAG) solves this. It retrieves relevant content from your data sources. It passes that content to the LLM as context. The LLM generates a response grounded in your actual data. The quality of the retrieval step determines the quality of the final answer.
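
The retrieve-then-generate flow can be sketched in a few lines of Python. This is a minimal illustration, not a production pipeline: the `retrieve` function uses naive word overlap as a stand-in for a real retriever, the sample documents are invented, and the final LLM call is left out, so the sketch stops at the assembled prompt.

```python
def retrieve(question, documents, k=2):
    """Stand-in retriever: rank documents by words shared with the question."""
    q_words = set(question.lower().split())
    ranked = sorted(
        documents,
        key=lambda d: len(q_words & set(d.lower().split())),
        reverse=True,
    )
    return ranked[:k]


def build_prompt(question, passages):
    """Place the retrieved passages in front of the question as context."""
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\nQuestion: {question}"
    )


# Hypothetical sample documents, invented for illustration.
documents = [
    "Refunds are issued within 14 days of a return request.",
    "Office hours are 9am to 5pm on weekdays.",
    "Shipping takes three to five business days.",
]
question = "How many days do refunds take?"
prompt = build_prompt(question, retrieve(question, documents, k=1))
# The prompt string is what would be sent to the LLM of your choice.
```

Whatever retriever sits behind `retrieve`, the shape stays the same: the LLM only sees what the retrieval step hands it.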

Vector search is the most common retrieval method in RAG systems today. GraphRAG is an emerging alternative that retrieves context differently. Understanding the difference between them is the key to building AI systems that actually serve enterprise needs.

What Is Vector Search?

Vector search converts text into numerical representations called embeddings. An embedding is a dense list of numbers. It captures the semantic meaning of a piece of text. Similar meanings produce embeddings that sit close together in a high-dimensional space. Dissimilar meanings produce embeddings that sit far apart.

When a user asks a question, the system converts that question into an embedding. It then searches a vector database for documents whose embeddings are closest to the query embedding. The closest matches are returned as the most relevant results. The LLM receives these results and generates a response.
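
To make the nearest-neighbor step concrete, here is a small sketch using cosine similarity over hand-written three-dimensional vectors. Real embeddings come from a model and have hundreds or thousands of dimensions, and a vector database would use an approximate index rather than the brute-force scan shown here. The vectors and document ids are hypothetical.

```python
import math


def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def top_k(query_vec, index, k=2):
    """Return the k document ids whose embeddings are closest to the query."""
    scored = [(cosine_similarity(query_vec, vec), doc_id) for doc_id, vec in index.items()]
    scored.sort(reverse=True)
    return [doc_id for _, doc_id in scored[:k]]


# Toy "embeddings" with made-up values, for illustration only.
index = {
    "staff_salaries": [0.9, 0.1, 0.0],
    "refund_policy": [0.1, 0.9, 0.1],
    "shipping_times": [0.0, 0.2, 0.9],
}
query = [0.8, 0.2, 0.1]  # stands in for the embedding of "employee compensation"
results = top_k(query, index, k=2)
```

With these values, `staff_salaries` ranks first because its vector points in nearly the same direction as the query, even though the two labels share no words.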

Vector databases like Pinecone, Weaviate, and Qdrant, along with extensions such as pgvector for PostgreSQL, power this process. They store millions of embeddings efficiently. They return approximate nearest-neighbor results in milliseconds. This speed makes vector search practical at enterprise scale.

Strengths of Vector Search

Vector search handles semantic similarity extremely well. A query about “employee compensation” matches documents discussing “staff salaries” even if the exact words differ. This semantic flexibility makes it far more powerful than keyword matching. It works across languages with multilingual embedding models. It scales to billions of documents with the right infrastructure.

Setup is relatively straightforward. You choose an embedding model, process your documents, store the vectors, and build a query pipeline. Many cloud platforms offer managed vector search services. Teams can build a working RAG system in days, not months.

Vector search performs well for document retrieval tasks. FAQ systems, customer support bots, policy lookup tools, and semantic search interfaces are natural fits. Most enterprise AI assistants built today use vector search as their retrieval backbone.

Limitations of Vector Search

Vector search retrieves isolated chunks of text. It does not understand relationships between pieces of information. A document chunk about a product recall and a chunk about the supplier responsible for that recall are two separate items in the vector database. Vector search may return one but not the other. The LLM never sees the connection.

Multi-hop reasoning is difficult. If answering a question requires combining information from three different documents, vector search may retrieve only one or two of them. The context the LLM receives is incomplete. The answer the LLM generates is shallow or incorrect.

Enterprise data is deeply interconnected. Contracts reference vendors. Vendors connect to orders. Orders link to shipment records. Vector search ignores this web of relationships. That is a significant limitation when enterprise questions require traversing these connections to produce accurate answers.

What Is GraphRAG?

GraphRAG is a retrieval approach that combines knowledge graphs with language model generation. It represents information as a graph of entities and relationships. Nodes represent entities — people, products, organizations, concepts. Edges represent relationships between them — owns, manages, relates to, caused by.

Microsoft Research published a paper on GraphRAG in 2024, titled “From Local to Global: A Graph RAG Approach to Query-Focused Summarization.” It reported that, for broad questions about an entire corpus, graph-based retrieval produced more comprehensive and more diverse answers than a baseline vector search RAG pipeline. The approach attracted significant attention across the enterprise AI community. The Vector Search vs GraphRAG conversation intensified after that publication.

GraphRAG builds a knowledge graph from source documents. It uses an LLM to extract entities and relationships from text during ingestion. The resulting graph captures the structure of your knowledge base, not just its contents. When a query arrives, GraphRAG traverses relevant subgraphs. It retrieves entities and relationships that answer the question. It passes this structured context to the LLM.
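
A rough sketch of that ingest-then-traverse idea follows. It assumes the LLM extraction step has already produced (subject, relation, object) triples; the triples, entity names, and relation names here are hypothetical, and the breadth-first walk is a simplification of what a real GraphRAG system does at query time.

```python
from collections import defaultdict, deque

# Hypothetical triples standing in for LLM extraction output.
triples = [
    ("Acme Corp", "supplies", "Widget X"),
    ("Widget X", "subject_of", "Recall 2024-07"),
    ("Recall 2024-07", "caused_by", "Faulty Seal"),
    ("Globex", "supplies", "Gadget Y"),
]


def build_graph(triples):
    """Store each triple as a forward edge plus a labeled inverse edge."""
    graph = defaultdict(list)
    for subj, rel, obj in triples:
        graph[subj].append((rel, obj))
        graph[obj].append((f"inverse_{rel}", subj))
    return graph


def neighborhood(graph, start, max_hops=2):
    """Breadth-first walk that records one edge per newly reached node."""
    seen = {start}
    queue = deque([(start, 0)])
    facts = []
    while queue:
        node, depth = queue.popleft()
        if depth == max_hops:
            continue  # do not expand beyond the hop limit
        for rel, neighbor in graph[node]:
            if neighbor not in seen:
                seen.add(neighbor)
                facts.append((node, rel, neighbor))
                queue.append((neighbor, depth + 1))
    return facts


graph = build_graph(triples)
context = neighborhood(graph, "Widget X", max_hops=2)
```

Starting from `Widget X`, the walk reaches the supplier, the recall, and the recall's cause, while the unrelated `Globex` subgraph stays out of the context.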

Strengths of GraphRAG

GraphRAG handles complex, multi-hop queries far better than vector search. A question like “Which suppliers have had quality issues that affected shipments to our top three customers in the last year?” requires traversing multiple entity relationships. GraphRAG navigates that graph directly. It assembles the relevant connected facts and delivers them as context.
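
As an illustration of that kind of multi-hop question, the sketch below follows three typed relations in sequence and keeps the full path for each match. All node names and relation names are invented, and the "last year" time filter from the example question is omitted to keep the code short.

```python
# Hypothetical typed edges: relation name -> list of (source, target) pairs.
edges = {
    "has_quality_issue": [("Supplier A", "Issue 1"), ("Supplier B", "Issue 2")],
    "affected_shipment": [("Issue 1", "Shipment 10"), ("Issue 2", "Shipment 20")],
    "delivered_to": [("Shipment 10", "Customer X"), ("Shipment 20", "Customer Z")],
}
top_customers = {"Customer X", "Customer Y"}


def follow(relation, sources):
    """Return the (source, target) pairs of one relation that start in sources."""
    return [(s, t) for s, t in edges[relation] if s in sources]


def suppliers_affecting(customers):
    """Walk supplier -> issue -> shipment -> customer and keep matching paths."""
    paths = []
    for supplier, issue in edges["has_quality_issue"]:
        for _, shipment in follow("affected_shipment", {issue}):
            for _, customer in follow("delivered_to", {shipment}):
                if customer in customers:
                    paths.append((supplier, issue, shipment, customer))
    return paths


paths = suppliers_affecting(top_customers)
```

Each returned tuple is the chain of entities that justifies the answer, which is also the kind of trace a reviewer can inspect afterward.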

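It can also produce global summaries of a topic. Vector search retrieves local document chunks. GraphRAG can synthesize information from across the entire knowledge graph. Microsoft’s implementation, for example, groups related entities into communities and summarizes each community ahead of time. This is a major advantage for strategic questions that require a holistic view of a domain.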

GraphRAG can also reduce hallucination risk. The LLM receives explicit, structured facts from the graph. It has less room to fabricate connections because the connections are already mapped, provided the extraction step captured them correctly. In regulated industries where accuracy is non-negotiable, this property is valuable.

Explainability improves with GraphRAG. You can trace exactly which nodes and edges informed a given answer. That audit trail matters in compliance, legal, and financial contexts. Vector search retrieval is harder to explain because it operates on geometric similarity in high-dimensional space.

Limitations of GraphRAG

GraphRAG is expensive to build. Constructing the knowledge graph requires LLM calls for entity extraction during ingestion. Processing a large document corpus costs significant compute and money. Updating the graph when documents change requires re-extraction. This operational overhead is non-trivial.

Graph quality depends on extraction quality. If the LLM misidentifies entities or misses relationships during ingestion, the graph is wrong. Garbage in, garbage out applies here just as much as anywhere else in data engineering. Maintaining graph quality over time requires ongoing curation.

Latency can be higher than vector search for simple queries. A multi-hop traversal, often followed by assembling or summarizing the retrieved subgraph, typically does more work per query than a single nearest-neighbor lookup. For high-volume, low-complexity queries, vector search returns results faster. GraphRAG adds overhead that may not be justified for simple factual lookups.

The tooling ecosystem is less mature. Vector search has years of production hardening behind it. GraphRAG tools like Microsoft’s open-source GraphRAG library and Neo4j integrations are newer. Enterprise teams may encounter rougher edges in production compared to mature vector search stacks.

Vector Search vs GraphRAG: A Direct Comparison

The Vector Search vs GraphRAG debate comes down to what kind of context your enterprise needs most. Both retrieve information. They retrieve it differently. They serve different query patterns better.

Query Complexity

Vector search handles simple factual queries exceptionally well. “What is our refund policy?” or “Summarize the Q3 earnings report” are perfect use cases. The query maps to a small set of relevant document chunks. The LLM produces an accurate answer. Retrieval is fast and inexpensive.

GraphRAG handles complex analytical queries much better. “Which executive decisions in 2023 contributed to the supply chain disruptions mentioned in Q1 2024 board minutes?” requires cross-document reasoning over interconnected entities. GraphRAG navigates this naturally. Vector search would likely miss critical connections.

Data Structure and Representation

Vector search treats documents as flat chunks of text. It does not care about structure. A paragraph is a paragraph. It has a semantic embedding. That is enough. This simplicity makes vector search easy to implement across any document type.

GraphRAG treats knowledge as a network. Every piece of information connects to other pieces through typed relationships. This structure reflects how the real world actually works. Organizations are networks. Products exist within ecosystems. Events have causes and effects. GraphRAG models that reality.

Cost and Operational Complexity

Vector search wins on simplicity and cost for most standard use cases. Embedding generation is cheap. Storage is efficient. Managed services reduce operational burden. A small team can maintain a vector search RAG system with minimal effort.

GraphRAG demands more investment. Graph construction, storage, traversal, and curation all require dedicated attention. The infrastructure is more complex. The expertise required is broader. Teams need skills in graph databases, ontology design, and LLM-based extraction pipelines. The payoff is justified for high-complexity use cases. It is not justified for every enterprise scenario.

Accuracy and Hallucination Risk

Both approaches reduce hallucination compared to using an LLM with no retrieval at all. But they do so in different ways. Vector search grounds the LLM in relevant text passages. The LLM still has to reason across those passages. Errors occur when passages are incomplete or contradict each other.

GraphRAG grounds the LLM in structured facts and explicit relationships. The LLM reasons over a more curated and connected context. This reduces the chance that it invents connections that do not exist, as long as the graph itself is accurate. For enterprise scenarios where a wrong answer carries serious consequences, GraphRAG can offer a meaningful accuracy advantage.

When to Choose Vector Search for Your Enterprise

Vector search is the right choice in specific situations. Choose it when your queries are mostly simple and factual. Choose it when your document corpus is large but your questions do not require cross-document reasoning. Customer support systems, internal FAQ bots, policy lookup tools, and product documentation search are natural fits.

Choose vector search when speed is the priority. High-throughput systems that handle thousands of queries per minute need fast retrieval. Vector nearest-neighbor search is hard to beat on latency at scale. Choose it when your team is small and you need to ship quickly. The tooling is mature, the tutorials are abundant, and the path to production is well-documented.

Many enterprises start with vector search and expand later. This is a smart strategy. Get a working system into users’ hands quickly. Learn what queries they ask. Identify where the system falls short. That data will tell you whether GraphRAG is worth the investment.

When to Choose GraphRAG for Your Enterprise

GraphRAG becomes the right answer when your enterprise data is deeply interconnected and your users ask complex analytical questions. Intelligence agencies, financial institutions, pharmaceutical companies, and legal firms all deal with data where relationships carry critical meaning. The Vector Search vs GraphRAG choice becomes clear in these environments — GraphRAG wins.

Choose GraphRAG when explainability is a requirement. Regulated industries cannot simply say “the AI said so.” They need to trace every answer back to verifiable facts and relationships. GraphRAG provides that audit trail as a path of nodes and edges. Vector search can point to the passages it retrieved, but not to how those passages relate to one another.

Choose GraphRAG when your domain knowledge has strong ontological structure. Medical knowledge graphs, legal citation networks, and supply chain relationship maps are natural fits. The investment in building the graph pays dividends across many queries and many years.

Choose GraphRAG when your users ask “why” and “how” questions, not just “what” questions. Strategic decision-makers need to understand causality, attribution, and chain-of-effect. GraphRAG retrieves that kind of context. Vector search retrieves facts but not the web of relationships that give those facts meaning.

Hybrid Approaches: Combining Vector Search and GraphRAG

The smartest enterprise teams are not choosing sides in the Vector Search vs GraphRAG debate. They are combining both approaches. A hybrid architecture uses vector search for fast, broad retrieval and GraphRAG for deep, relationship-aware context enrichment.

One common pattern uses vector search to identify the most relevant document clusters. It then uses GraphRAG to expand context by traversing the knowledge graph from the nodes mentioned in those clusters. The LLM receives both the raw text passages and the structured relationship context. Answer quality improves significantly.
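
A minimal sketch of this first pattern is shown below, assuming each stored chunk carries a list of the entities it mentions. The chunk texts, two-dimensional vectors, entity tags, and graph edges are all hypothetical, and the expansion is limited to one hop for brevity.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    return dot / (math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b)))


# Hypothetical chunk store: text, toy embedding, and entities found in the chunk.
chunks = {
    "c1": {"text": "Widget X was recalled in July.", "vec": [0.9, 0.1], "entities": ["Widget X"]},
    "c2": {"text": "Holiday schedule for 2024.", "vec": [0.1, 0.9], "entities": []},
}
# Hypothetical knowledge graph: entity -> list of (relation, target).
graph = {
    "Widget X": [("supplied_by", "Acme Corp")],
    "Acme Corp": [("audited_by", "QA Team")],
}


def hybrid_retrieve(query_vec, k=1):
    """Vector search picks seed chunks; the graph adds one hop of related facts."""
    ranked = sorted(chunks, key=lambda cid: cosine(query_vec, chunks[cid]["vec"]), reverse=True)[:k]
    passages = [chunks[cid]["text"] for cid in ranked]
    facts = []
    for cid in ranked:
        for entity in chunks[cid]["entities"]:
            for rel, target in graph.get(entity, []):
                facts.append((entity, rel, target))
    return passages, facts


passages, facts = hybrid_retrieve([1.0, 0.0])
```

Both `passages` and `facts` would then go into the prompt, so the LLM sees the recall text alongside the supplier relationship that the text alone does not mention.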

Another pattern routes queries. Simple factual queries go to the vector search pipeline. Complex analytical queries go to the GraphRAG pipeline. A query classifier sits at the front of the system. It decides which path each query takes. This routing approach controls cost while maximizing answer quality across query types.
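
The routing idea can be sketched with a deliberately crude classifier. The cue words and the threshold of two matches are assumptions chosen for illustration; a production router would more likely use a trained classifier or an LLM call, evaluated against real query logs.

```python
import re

# Hypothetical cue words that hint at relational or causal questions.
RELATIONAL_CUES = re.compile(
    r"\b(which|why|how did|affected|contributed|caused|related to|between)\b",
    re.IGNORECASE,
)


def route(query):
    """Send queries with several relational cues to GraphRAG, the rest to vector search."""
    cue_count = len(RELATIONAL_CUES.findall(query))
    return "graphrag" if cue_count >= 2 else "vector"


simple = route("What is our refund policy?")
complex_ = route(
    "Which executive decisions in 2023 contributed to the supply chain "
    "disruptions mentioned in Q1 2024 board minutes?"
)
```

Here the first query goes to the vector pipeline and the second to GraphRAG. Misrouted queries are the main risk with a heuristic like this, so logging the route taken for each query is worthwhile.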

Hybrid systems require more engineering investment upfront. But they avoid the single-approach trap. Neither vector search alone nor GraphRAG alone serves the full range of enterprise queries optimally. A thoughtfully designed hybrid system does.

Key Concepts You Need to Understand

Knowledge Graph vs Vector Database

A vector database stores numerical embeddings of text. It retrieves based on geometric similarity. A knowledge graph stores entities and their typed relationships. It retrieves based on graph traversal. Both are data stores for AI retrieval. They store fundamentally different representations of the same underlying information. The Vector Search vs GraphRAG choice partly comes down to which representation serves your queries best.

Chunking Strategy in Vector Search

Vector search requires you to split documents into chunks before embedding them. Chunk size affects retrieval quality significantly. Chunks that are too small lose context. Chunks that are too large dilute relevance. Finding the right chunking strategy for your document types takes experimentation. Overlap between chunks helps preserve context at boundaries. GraphRAG does not eliminate chunking, because ingestion pipelines still split documents into text units before entity extraction. The difference is that retrieval then operates on entities and relationships rather than on raw text segments alone.
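
A simple word-based chunker with overlap might look like the following. It is a sketch under simplifying assumptions: it splits on whitespace rather than on tokens or sentence boundaries, and the default sizes are placeholders rather than recommendations.

```python
def chunk_words(text, chunk_size=8, overlap=2):
    """Split text into word windows where consecutive windows share `overlap` words."""
    if overlap >= chunk_size:
        raise ValueError("overlap must be smaller than chunk_size")
    words = text.split()
    step = chunk_size - overlap
    chunks = []
    for start in range(0, len(words), step):
        chunks.append(" ".join(words[start:start + chunk_size]))
        if start + chunk_size >= len(words):
            break  # the last window already reached the end of the text
    return chunks


sample = " ".join(f"w{i}" for i in range(12))
pieces = chunk_words(sample, chunk_size=5, overlap=2)
```

With twelve words, a window of five, and an overlap of two, the last two words of each chunk reappear as the first two words of the next one, which is what preserves context at the boundaries.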

Entity Extraction and Ontology Design in GraphRAG

GraphRAG quality depends heavily on entity extraction quality. You must define what entities matter in your domain. You must define what relationships connect them. This is ontology design. It requires domain expertise. A healthcare knowledge graph defines patients, conditions, treatments, and providers as entity types. A financial knowledge graph defines companies, executives, transactions, and regulatory filings. Getting the ontology right from the start saves significant rework later.
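
One lightweight way to encode an ontology is as a set of allowed entity types and relationship patterns that extracted edges are checked against. The sketch below uses the healthcare entity types named above; the relation names and the validation rule are hypothetical choices made for illustration.

```python
from dataclasses import dataclass

ENTITY_TYPES = {"Patient", "Condition", "Treatment", "Provider"}

# Allowed (source type, relation, target type) patterns. Relation names are hypothetical.
ALLOWED_RELATIONS = {
    ("Patient", "diagnosed_with", "Condition"),
    ("Condition", "treated_by", "Treatment"),
    ("Provider", "prescribes", "Treatment"),
}


@dataclass(frozen=True)
class Entity:
    name: str
    type: str


def validate_edge(source, relation, target):
    """Accept an extracted edge only if it matches a pattern in the ontology."""
    if source.type not in ENTITY_TYPES or target.type not in ENTITY_TYPES:
        return False
    return (source.type, relation, target.type) in ALLOWED_RELATIONS


patient = Entity("patient-001", "Patient")
asthma = Entity("asthma", "Condition")
ok = validate_edge(patient, "diagnosed_with", asthma)
reversed_ok = validate_edge(asthma, "diagnosed_with", patient)
```

Rejecting edges that do not fit the ontology at ingestion time is one way to catch extraction errors before they reach the graph.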

Choosing the Right Embedding Model

Vector search quality depends on the embedding model you choose. General-purpose models like OpenAI’s text-embedding-3-large work well for broad enterprise use cases. Domain-specific models fine-tuned on legal, medical, or financial text outperform general models in those verticals. Model choice affects both retrieval accuracy and embedding cost. Evaluate several models on your actual data before committing to one.

Frequently Asked Questions

Is GraphRAG always better than vector search for enterprise AI?

No. GraphRAG is better for complex, relationship-heavy queries. Vector search is better for simple, high-volume factual queries. The best system for your enterprise depends entirely on what questions your users ask most often. Evaluate both approaches against real queries from real users before making a final decision.

How long does it take to build a GraphRAG system?

A basic GraphRAG proof of concept takes two to four weeks with a focused team. A production-ready enterprise system with a well-curated knowledge graph takes three to nine months. Graph construction, quality validation, curation pipelines, and retrieval optimization all add time. Plan accordingly. Do not underestimate the ontology design phase — it is the most intellectually demanding part of the project.

Can I migrate from vector search to GraphRAG later?

Yes. Many enterprises start with vector search and add GraphRAG capabilities incrementally. Your existing document corpus already provides the raw material for graph construction. A well-designed system architecture keeps retrieval logic modular. You can swap or augment the retrieval layer without rebuilding the entire AI application. Plan for this possibility from the start even if you begin with vector search only.

What graph databases work best with GraphRAG?

Neo4j is the most widely used graph database for GraphRAG implementations. It has strong LLM integration support through LangChain and LlamaIndex connectors. Amazon Neptune suits AWS-centric enterprises. Microsoft Azure Cosmos DB for Apache Gremlin works well in Microsoft-heavy environments. TigerGraph handles very large-scale graph workloads efficiently. Your existing cloud infrastructure often guides the best choice.

How does vector search handle multilingual enterprise data?

Multilingual embedding models like multilingual-e5-large or Cohere’s multilingual embed model handle cross-language retrieval well. A query in English can match documents in French, Spanish, or German. Vector search naturally extends to multilingual corpora with the right model. GraphRAG multilingual support depends on your entity extraction pipeline. LLMs handle multilingual extraction, but graph quality validation across languages requires careful testing.

What is the cost difference between vector search and GraphRAG at scale?

Vector search costs are primarily storage and query costs. These are well-understood and predictable. GraphRAG adds ingestion costs because LLM calls are needed to extract entities during document processing. These costs can be significant for large corpora. Graph storage and traversal add infrastructure costs on top. As a rough rule of thumb, expect GraphRAG total cost of ownership to run three to five times higher than vector search for equivalent document volumes, and measure on a sample of your own corpus before budgeting. The business value of better answers must justify that premium.

Is the Vector Search vs GraphRAG choice permanent?

No decision in enterprise software is permanent. The Vector Search vs GraphRAG landscape will keep evolving. New tools, better models, and improved architectures will change what is practical and affordable. Build your systems modularly. Use abstraction layers between your application and your retrieval layer. That flexibility lets you adopt better approaches as they mature without rebuilding everything from scratch.
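
An abstraction layer of this kind can be as small as one shared interface. The sketch below uses Python's `typing.Protocol`; the class names, the `retrieve` signature, and the stub return values are hypothetical and stand in for real vector and graph backends.

```python
from typing import Protocol


class Retriever(Protocol):
    """Interface the application depends on, regardless of the backend."""

    def retrieve(self, query: str, k: int) -> list[str]: ...


class VectorRetriever:
    def retrieve(self, query: str, k: int) -> list[str]:
        # Stub: a real implementation would query a vector index.
        return [f"vector passage for: {query}"][:k]


class GraphRetriever:
    def retrieve(self, query: str, k: int) -> list[str]:
        # Stub: a real implementation would traverse a knowledge graph.
        return [f"graph fact for: {query}"][:k]


def answer(query: str, retriever: Retriever) -> str:
    """Application code that never needs to know which backend it is using."""
    context = retriever.retrieve(query, k=3)
    return f"{len(context)} context item(s) from {type(retriever).__name__}"


vector_answer = answer("refund policy", VectorRetriever())
graph_answer = answer("refund policy", GraphRetriever())
```

Because `answer` only depends on the `Retriever` interface, swapping or combining backends later does not require changes to the application code.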

Real-World Enterprise Use Cases

Financial Services

A global investment bank uses vector search to power a research assistant. Analysts query a corpus of 50,000 research reports. The system returns the most semantically similar reports to their question. Answer quality is good for straightforward lookups. When analysts need to understand how a regulatory change affects a specific company given its supply chain relationships, vector search falls short. GraphRAG fills that gap. The bank uses a hybrid system — vector search for report discovery, GraphRAG for relationship-aware analysis.

Healthcare and Life Sciences

A pharmaceutical company builds a clinical trial assistant using GraphRAG. The knowledge graph connects drug compounds, trial phases, patient populations, adverse events, and regulatory outcomes. Researchers ask questions like “Which compounds in Phase 2 trials show similar adverse event profiles to Drug X?” GraphRAG traverses the graph and assembles a complete, relationship-aware answer. Vector search would retrieve isolated trial documents but miss the compound-adverse event-trial connections that give the answer meaning.

Legal and Compliance

A law firm uses vector search for contract review. Paralegals search thousands of contracts for specific clause language. Speed matters. Semantic similarity works well for this task. The same firm evaluates GraphRAG for case law research. Legal reasoning depends heavily on precedent relationships — which case cites which, which rulings overturned which earlier decisions. GraphRAG models that citation network. The Vector Search vs GraphRAG choice in legal settings depends on the specific task.

The Road Ahead: Where Both Technologies Are Heading

Vector search will keep improving. Better embedding models capture more nuanced semantics. Quantization techniques reduce storage costs. Hybrid dense-sparse retrieval combines vector similarity with keyword matching. These advances make vector search stronger for a wider range of queries over time.
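
One widely used way to combine a dense (vector) ranking with a sparse (keyword) ranking is reciprocal rank fusion. The article does not name a specific fusion method, so treat this as one possible choice; the document ids and rankings are invented, and `k=60` is the constant commonly used with this technique.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Score each document by summing 1 / (k + rank) over every ranking it appears in."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    # Highest score first; ties broken alphabetically for a stable order.
    return sorted(scores, key=lambda d: (-scores[d], d))


dense = ["d2", "d1", "d3"]   # hypothetical ranking from vector similarity
sparse = ["d1", "d4", "d2"]  # hypothetical ranking from keyword matching
fused = reciprocal_rank_fusion([dense, sparse])
```

Documents that rank well in both lists rise to the top, which is why `d1` and `d2` end up ahead of documents that appear in only one ranking.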

GraphRAG tools will mature rapidly. Automated graph construction pipelines will reduce the manual work of ontology design. Graph quality monitoring tools will make curation easier. Lower-cost LLMs will reduce ingestion costs. These trends will bring GraphRAG into the reach of mid-market enterprises, not just large organizations with large AI budgets.

The Vector Search vs GraphRAG debate will likely resolve into a unified retrieval layer. Future AI data platforms will manage both vector and graph representations automatically. Engineers will specify what kind of context they need, and the platform will choose the right retrieval strategy. We are not there yet. But the trajectory is clear.

Agentic AI systems are already pushing both technologies forward. AI agents that plan multi-step research tasks need richer retrieval than a single-shot chatbot. Agents need to navigate between documents, facts, relationships, and external APIs. GraphRAG’s graph traversal capability aligns naturally with agentic retrieval patterns. Vector search will serve as the fast lookup layer within those pipelines.

Building Your Evaluation Framework

Do not choose between vector search and GraphRAG based on vendor marketing. Build an evaluation framework based on your actual enterprise needs. Start by categorizing your top fifty user queries by type. Classify each as simple factual, multi-hop relational, summarization, or comparative. Count how many fall into each category. That distribution tells you which technology deserves the most investment.
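
The counting step is simple enough to script. In this sketch the queries and their labels are hypothetical and hand-assigned; in practice the labels would come from manual review of your own query logs.

```python
from collections import Counter

# Hypothetical hand-labeled queries: (query text, category).
labeled_queries = [
    ("What is our refund policy?", "simple_factual"),
    ("Which suppliers affected shipments to top customers?", "multi_hop"),
    ("Summarize the Q3 earnings report", "summarization"),
    ("Where is the travel policy?", "simple_factual"),
]

distribution = Counter(label for _, label in labeled_queries)
shares = {label: count / len(labeled_queries) for label, count in distribution.items()}
```

A `shares` dictionary dominated by simple factual queries points toward vector search first, while a large multi-hop share is a signal to evaluate GraphRAG.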

Run both approaches against a representative sample of real queries. Measure answer accuracy, relevance, and completeness. Measure latency and cost per query. Survey users on answer quality. Let real data guide your architecture decision. Intuition is a starting point. Evidence is the deciding factor.
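
A small harness can run the same test set through each retriever and report comparable numbers. The sketch below measures recall at k and mean latency; the metric choice, the stub retriever, and the test set are assumptions for illustration, and answer quality judged by users would still need a separate process.

```python
import time


def recall_at_k(retrieved, relevant, k):
    """Fraction of the relevant items that appear in the top k retrieved items."""
    if not relevant:
        return 0.0
    hits = len(set(retrieved[:k]) & set(relevant))
    return hits / len(relevant)


def evaluate(retriever, test_set, k=3):
    """Run every query through the retriever and average recall and latency."""
    recalls, latencies = [], []
    for query, relevant in test_set:
        start = time.perf_counter()
        retrieved = retriever(query)
        latencies.append(time.perf_counter() - start)
        recalls.append(recall_at_k(retrieved, relevant, k))
    return {
        "mean_recall": sum(recalls) / len(recalls),
        "mean_latency_s": sum(latencies) / len(latencies),
    }


# Hypothetical test set and stub retriever standing in for a real pipeline.
test_set = [("q1", ["a", "b"]), ("q2", ["c"])]
canned = {"q1": ["a", "x", "b"], "q2": ["y", "z", "w"]}
report = evaluate(lambda q: canned[q], test_set, k=3)
```

Running `evaluate` once per candidate pipeline against the same `test_set` gives numbers that can be compared side by side.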

Define success metrics before you build. What accuracy threshold do your users require? What latency is acceptable? What is your monthly budget for retrieval infrastructure? Clear metrics prevent scope creep. They also help you demonstrate value to business stakeholders when the system goes live.

Conclusion

The Vector Search vs GraphRAG question does not have a universal answer. Both technologies solve real problems. Both have genuine limitations. The right answer depends on your data, your users, and your questions.

Vector search is fast, affordable, and well-suited to high-volume factual retrieval. It is the right foundation for most enterprise AI assistants getting started today. GraphRAG delivers richer context for complex, relationship-dependent queries. It is the right investment for enterprises where connected knowledge drives critical decisions.

The smartest path for most enterprises is to start with vector search. Ship something useful quickly. Learn from real usage. Add GraphRAG capabilities where the data shows they are needed. Build a hybrid architecture over time that serves the full range of user needs.

Enterprise data is your competitive advantage. The retrieval layer that sits between that data and your AI applications determines how much of that advantage you actually unlock. Make the Vector Search vs GraphRAG choice deliberately. Base it on evidence. Build modularly so you can adapt as the technology evolves.