Introduction

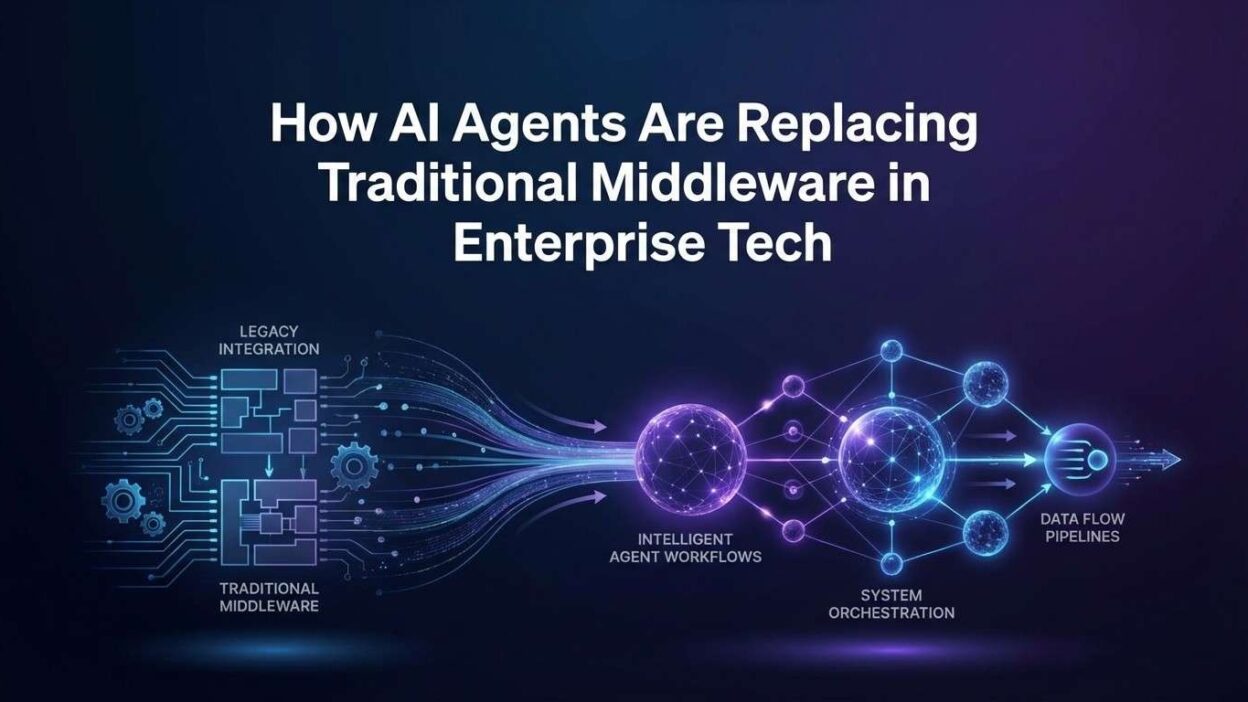

TL;DR: Enterprise architecture is shifting fast. The layers of software that once connected systems, transformed data, and routed messages are facing a serious challenge. The replacement of traditional middleware by AI agents is no longer a theoretical debate. It is happening inside real organizations right now.

Middleware has served enterprises well for decades. ESBs, message brokers, API gateways, and ETL pipelines became the connective tissue of modern IT. But these tools carry a heavy cost. They need specialist teams to configure them. They break in ways that are hard to debug. They slow down change.

AI agents work differently. They reason, adapt, and act without rigid configuration. They handle exceptions that would crash a traditional pipeline. They learn from context rather than following a fixed rule set. That flexibility is exactly what modern enterprises need as data volumes grow and integration complexity explodes.

This blog explains the full picture. You will understand what middleware does today, why AI agents outperform it in critical areas, how the shift unfolds in practice, and what enterprise architects should do to prepare. Every section is grounded in current engineering reality and practical strategy.


What Traditional Middleware Actually Does

Middleware sits between applications. It connects systems that cannot speak directly to each other. Without middleware, every application in an enterprise would need custom code to talk to every other application. That problem grows quadratically as organizations add new systems: n applications need up to n(n-1)/2 point-to-point connections.
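The growth is easy to check with a little arithmetic. With n applications wired pair by pair, the number of links is n(n-1)/2, whereas a shared hub needs one link per application. A minimal sketch, with function names chosen here purely for illustration:

```python
def point_to_point_links(n: int) -> int:
    """Links needed when every pair of n applications is wired directly."""
    return n * (n - 1) // 2


def hub_links(n: int) -> int:
    """Links needed when each application connects once to a shared hub."""
    return n


# Compare the two topologies for a few landscape sizes.
for n in (5, 20, 100):
    print(n, point_to_point_links(n), hub_links(n))
```

At 100 applications the pairwise approach needs 4,950 links against 100 for the hub, which is the gap middleware was built to close.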

Enterprise Service Buses handle message routing and transformation. API gateways manage authentication, rate limiting, and protocol translation. ETL pipelines extract data from one system, transform its format, and load it into another. Message queues decouple producers and consumers so that one slow system does not block another. These tools each solve a real problem.

The challenge is that middleware needs to know every rule in advance. A developer writes transformation logic before any data flows. A configuration file defines every routing decision. An exception that falls outside that configuration requires manual intervention and a code change.
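A stripped-down sketch of that rigidity follows. The routing table, message types, and queue names are hypothetical and not taken from any specific product; the point is only that anything the table does not list falls through to a dead-letter queue.

```python
# Hypothetical routing table: message type -> destination queue.
ROUTES = {
    "order.created": "fulfillment",
    "invoice.paid": "accounting",
}


def route(message: dict) -> str:
    """Return the configured destination, or the dead-letter queue if no rule matches."""
    return ROUTES.get(message.get("type", ""), "dead-letter")


# A one-character deviation from the configured type is enough to miss every rule.
print(route({"type": "order.created"}))
print(route({"type": "order.create"}))
```

Fixing the second case means editing the table and redeploying, which is the manual intervention described above.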

The Hidden Cost of Middleware Complexity

Middleware sprawl is real in large enterprises. A Fortune 500 company might run dozens of middleware products simultaneously. Each one has its own interface, its own failure modes, and its own team of specialists. Coordinating changes across that landscape takes months. Integration projects routinely run over budget and behind schedule.

Maintenance costs compound over time. Middleware versions require upgrades. Upgrades break dependencies. Dependencies need testing. That cycle consumes engineering capacity that could go toward product development instead. The total cost of ownership for traditional middleware far exceeds the initial license or implementation cost.

This is the environment where AI agents replacing traditional middleware starts to look not just attractive but necessary. The problems are structural. The old tools were not designed for the scale and dynamism of modern enterprise data.

Understanding AI Agents in an Enterprise Context

An AI agent is a software system that perceives its environment, reasons about it, and takes actions to achieve a goal. That definition sounds abstract. In an enterprise integration context, it becomes concrete fast.

An AI agent can receive a message from one system, understand its meaning and intent, determine the correct destination and format, transform the data appropriately, and route it — all without a developer pre-defining every rule. The agent reasons through each decision rather than matching it against a lookup table.

Large language models power the reasoning layer of most current enterprise AI agents. These models understand natural language, structured data formats, API schemas, and business logic described in plain text. They often handle ambiguity and exceptions more gracefully than fixed rules do. A traditional ETL pipeline cannot read the intent of a malformed record. An LLM-powered agent can often infer what the record was supposed to contain and act accordingly.

Agentic Architectures vs. Rule-Based Systems

Traditional middleware follows rules. An AI agent follows intent. Rules break when reality deviates from the assumptions embedded in them. Intent scales because the agent reasons from first principles about each new situation.

Agentic architectures typically combine an LLM for reasoning, a set of tools the agent can call (APIs, databases, external services), and a memory or context layer that stores relevant history. The agent orchestrates these components autonomously. It decides which tool to call, how to handle the response, and what to do next.
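The three components can be sketched in a few lines. This is a toy illustration under loud assumptions: `stub_decide` is a hard-coded stand-in for the LLM, the two tools are plain functions rather than real API calls, and memory is just a list of tool names. None of these names come from a specific framework.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Agent:
    # decide() stands in for the LLM: given the event and memory, it names
    # the next tool to call, or returns None when nothing is left to do.
    decide: Callable[[dict, list], Optional[str]]
    tools: dict  # tool name -> function taking and returning an event dict
    memory: list = field(default_factory=list)

    def run(self, event: dict, max_steps: int = 5) -> dict:
        for _ in range(max_steps):  # hard cap so a bad decision cannot loop forever
            tool_name = self.decide(event, self.memory)
            if tool_name is None:
                break
            event = self.tools[tool_name](event)
            self.memory.append(tool_name)  # record what was done, in order
        return event


def stub_decide(event: dict, memory: list) -> Optional[str]:
    """Hard-coded stand-in for model reasoning (an assumption of this sketch)."""
    if not event.get("normalized"):
        return "normalize"
    if "destination" not in event:
        return "lookup_destination"
    return None


def normalize(event: dict) -> dict:
    return {**event, "currency": event["currency"].upper(), "normalized": True}


def lookup_destination(event: dict) -> dict:
    ledger = "eu-ledger" if event["currency"] == "EUR" else "default-ledger"
    return {**event, "destination": ledger}


agent = Agent(stub_decide, {"normalize": normalize, "lookup_destination": lookup_destination})
result = agent.run({"currency": "eur", "amount": 120})
print(result["destination"], agent.memory)
```

In a real deployment the `decide` callable would wrap a model call, and the step cap and memory log are the hooks where oversight would attach.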

This is why AI agents replacing traditional middleware is a structural shift and not just a feature upgrade. The underlying architectural philosophy is different. Rules give way to reasoning. Configuration gives way to comprehension.

Where AI Agents Outperform Traditional Middleware

The performance gap between AI agents and traditional middleware shows up in specific, measurable areas. Understanding where AI agents replacing traditional middleware delivers the clearest advantage helps enterprise architects prioritize their modernization efforts.

70% reduction in integration configuration time reported in early adopter surveys

3× faster exception handling compared to rule-based pipeline fallbacks

40% lower middleware maintenance cost in organizations piloting agentic integration

85% of integration failures stem from edge cases middleware rules did not anticipate

| Capability | Traditional Middleware | AI Agent |
|---|---|---|
| Exception Handling | Fails or routes to dead letter queue | Reasons through exception, infers correct action |
| Schema Changes | Breaks pipeline, requires manual update | Adapts to new schema with minimal reconfiguration |
| Business Logic | Hardcoded in config or code | Described in natural language, interpreted at runtime |
| New Data Sources | Weeks of integration work | Hours with API schema and natural language description |
| Audit and Explainability | Log-based, hard to read | Natural language reasoning traces available |
| Maintenance Burden | High: specialist teams required | Lower: prompt updates replace code changes |
| Cost at Scale | License + operations + specialists | API usage + oversight tooling |

The table shows a clear pattern. Traditional middleware wins where rules are perfectly stable and data is perfectly predictable. AI agents win everywhere else. Modern enterprise data is rarely stable or predictable, which explains the momentum behind AI agents replacing traditional middleware across industries.

The Evolution from ESB to AI Agent: A Timeline

The move toward AI agents replacing traditional middleware did not happen overnight. Enterprise integration architecture evolved through distinct phases. Each phase solved problems the previous one created.

1990s – 2005

Enterprise Service Bus (ESB) era. Organizations centralized all integration logic in a single bus. This solved point-to-point connection sprawl but created a new bottleneck. The ESB became the single point of failure for every integration in the enterprise.

2005 – 2015

SOA and Web Services. Service-Oriented Architecture decentralized logic into discrete services with defined interfaces. SOAP and WSDL standardized communication. Integration remained configuration-heavy and brittle at the edges.

2015 – 2022

API-first and microservices. REST APIs, API gateways, and event streaming platforms like Kafka distributed integration further. Speed improved but operational complexity grew. Hundreds of microservices created hundreds of integration points to manage.

2023 – Present

Agentic integration. LLM-powered AI agents handle routing, transformation, and orchestration. Intent replaces configuration. AI agents replacing traditional middleware moves from experiment to production deployment across enterprise technology stacks.

Each era addressed the failures of the one before it. The agentic era addresses the fundamental limitation of all previous approaches: the inability to handle what was not anticipated at configuration time.

Real-World Use Cases Driving the Shift

Abstract architecture debates matter less than concrete use cases. Here is where AI agents replacing traditional middleware creates the most tangible business impact in organizations deploying these systems today.

Financial Services: Intelligent Transaction Routing

Banks process millions of transactions daily. Each transaction type carries different routing requirements based on amount, jurisdiction, counterparty, and regulatory status. Traditional middleware handles this through massive rule trees that take months to update when regulations change.

An AI agent understands transaction context semantically. It reads the transaction, reasons about applicable rules, and routes correctly — even for transaction types the rule tree has never seen before. Regulatory updates arrive as natural language policy descriptions. The agent incorporates them immediately without a code deployment.

Healthcare: Cross-System Patient Data Orchestration

Healthcare organizations run dozens of disparate systems. EHRs, lab systems, billing platforms, and patient portals each store overlapping data in different formats. Integrating them with traditional ETL requires custom mapping for every field in every system pair.

An AI agent orchestrates patient data flows by understanding clinical meaning rather than field mappings. It recognizes that a “patient identifier” in one system corresponds to a “member ID” in another. It handles missing fields by inferring likely values or flagging them for review. That semantic understanding cuts integration development time dramatically.

Retail: Dynamic Inventory and Order Orchestration

Retail inventory systems face constant schema changes as suppliers update their APIs. A traditional integration breaks every time a supplier changes a field name or adds a required parameter. An AI agent reads the updated API schema and adapts its transformation logic without manual intervention.

During peak sales periods, an AI agent managing order routing outperforms static middleware by adapting to real-time fulfillment capacity signals. It reasons about which warehouse to route each order to based on current stock, shipping cost, and delivery window — decisions that previously required custom orchestration logic for every scenario.

Pattern across industries: The organizations moving fastest toward AI agents replacing traditional middleware share one characteristic. They face high-volume, high-variability integration problems where rule-based systems fail at the edges. Every industry has those edges. The question is how expensive those failures are.

What AI Agents Still Cannot Replace (Yet)

Honest analysis requires acknowledging where traditional middleware still holds advantages. AI agents replacing traditional middleware is a directional trend, not a complete substitution today.

High-frequency, ultra-low-latency message processing remains a strength of purpose-built middleware. A Kafka cluster processing 10 million messages per second outperforms any LLM-based agent on raw throughput. The inference time of an LLM adds latency that some real-time use cases cannot absorb.

Delivery guarantees are mature in message brokers like RabbitMQ and Kafka. RabbitMQ provides at-least-once delivery through publisher confirms and consumer acknowledgements, and Kafka adds exactly-once semantics through idempotent producers and transactions. These guarantees are harder to implement cleanly in an agentic architecture where the agent itself could fail mid-process. Enterprise architects must account for this carefully in financial and compliance-critical workflows.
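One common mitigation, sketched here rather than prescribed, is to make each agent step idempotent: results are keyed by message ID, so a retry after a crash returns the stored result instead of applying the side effect twice. The class name is hypothetical, and the in-memory dict stands in for a durable store.

```python
class IdempotentProcessor:
    """Applies each message at most once, keyed by its message ID."""

    def __init__(self) -> None:
        self._results: dict = {}  # stand-in for a durable store (assumption)
        self.side_effects = 0     # counts how often the real work actually ran

    def handle(self, message_id: str, payload: dict) -> dict:
        # A redelivered message returns the stored result without redoing the work.
        if message_id in self._results:
            return self._results[message_id]
        self.side_effects += 1
        result = {"status": "applied", "amount": payload["amount"]}
        self._results[message_id] = result
        return result


processor = IdempotentProcessor()
first = processor.handle("msg-1", {"amount": 250})
retry = processor.handle("msg-1", {"amount": 250})  # simulated redelivery
print(first == retry, processor.side_effects)
```

Combined with at-least-once delivery from the broker, this gives effectively-once processing without asking the agent itself to be crash-proof.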

Compliance and Audit Requirements

Regulated industries need deterministic, reproducible audit trails. A rule-based system produces the same output for the same input every time. An LLM-based agent can produce slightly different reasoning on the same input across runs. That nondeterminism is a concern for compliance teams even when the final output is correct.

The practical answer is a hybrid architecture. Traditional middleware handles guaranteed-delivery, high-throughput, and deterministic-audit use cases. AI agents handle complex routing, exception management, schema adaptation, and orchestration use cases. The two layers complement each other rather than one replacing the other completely.

This nuance matters for enterprise architects planning modernization roadmaps. The goal of AI agents replacing traditional middleware is not wholesale elimination. It is targeted replacement where the agent’s strengths align with the integration problem’s characteristics.

Architecture Patterns for Agentic Integration

Building systems around AI agents replacing traditional middleware requires new architecture patterns. Three patterns appear most often in enterprise deployments today.

Pattern 1: The Agent-as-Orchestrator

The AI agent sits at the center of an integration flow. It receives events from multiple sources. It reasons about each event, determines which downstream systems need to act, and calls the appropriate APIs in the correct sequence. Traditional middleware in this pattern becomes a simple message bus, stripped of routing and transformation logic.

This pattern works well for complex business process orchestration where the sequence of steps depends on the content of the data. Order fulfillment, claims processing, and onboarding workflows benefit significantly from this approach.

Pattern 2: The Agent-as-Adapter

Each external system gets an AI agent wrapper. The agent understands that system’s API, data model, and behavior. Other systems interact with a standardized interface. The agent handles all translation between the standardized interface and the external system’s actual requirements.

This pattern dramatically reduces the cost of onboarding new data sources. Adding a new supplier, partner system, or internal service requires defining the agent’s understanding of that system — not writing custom adapter code for every integration point.
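The shape of the pattern can be shown with a small sketch. Both supplier payload formats are invented for illustration, and the translation inside each adapter is hand-written here; in the agent-based variant that translation step is what would be delegated to the model.

```python
from typing import Callable, Protocol


class InventorySource(Protocol):
    """The standardized interface every other system codes against."""

    def stock_level(self, sku: str) -> int: ...


class SupplierAAdapter:
    """Wraps a hypothetical supplier API that returns {'qty_on_hand': '12'}."""

    def __init__(self, fetch: Callable[[str], dict]) -> None:
        self._fetch = fetch

    def stock_level(self, sku: str) -> int:
        return int(self._fetch(sku)["qty_on_hand"])


class SupplierBAdapter:
    """Wraps a hypothetical supplier API that returns {'available': 7, 'reserved': 2}."""

    def __init__(self, fetch: Callable[[str], dict]) -> None:
        self._fetch = fetch

    def stock_level(self, sku: str) -> int:
        raw = self._fetch(sku)
        return raw["available"] - raw["reserved"]


def total_stock(sources: list, sku: str) -> int:
    """Callers see only the standardized interface, never the supplier formats."""
    return sum(source.stock_level(sku) for source in sources)


sources = [
    SupplierAAdapter(lambda sku: {"qty_on_hand": "12"}),
    SupplierBAdapter(lambda sku: {"available": 7, "reserved": 2}),
]
print(total_stock(sources, "SKU-1"))
```

Adding a third supplier means adding one adapter; `total_stock` and every other caller stay unchanged.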

Pattern 3: The Hybrid Layer

Traditional middleware handles high-volume, low-complexity message flows. AI agents handle low-volume, high-complexity exception and orchestration flows. A routing layer at the entry point directs each incoming message to the appropriate handler based on complexity signals.
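A minimal sketch of such an entry-point router is below. The complexity signals used (a known message type and a complete set of required fields) are assumptions chosen for illustration; a real deployment would pick signals that match its own traffic.

```python
KNOWN_TYPES = {"order.created", "invoice.paid"}   # hypothetical routine message types
REQUIRED_FIELDS = {"type", "id", "payload"}       # hypothetical envelope fields


def choose_handler(message: dict) -> str:
    """Send routine messages to middleware and anything irregular to the agent."""
    missing = REQUIRED_FIELDS.difference(message)  # fields absent from the message
    if missing or message["type"] not in KNOWN_TYPES:
        return "agent"
    return "middleware"


print(choose_handler({"type": "order.created", "id": "1", "payload": {}}))
print(choose_handler({"type": "order.created", "id": "2"}))
print(choose_handler({"type": "return.requested", "id": "3", "payload": {}}))
```

Keeping the router itself deterministic means the high-volume path never pays model latency, and only the irregular remainder reaches the agent.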

This hybrid approach lets enterprises modernize incrementally. They do not need to replace all middleware immediately. They deploy AI agents where the value is highest and leave reliable middleware in place where it performs well. Most large enterprises will live in this hybrid model for the next three to five years.

Enterprise Adoption Challenges and How to Address Them

Moving toward AI agents replacing traditional middleware surfaces real organizational and technical challenges. Acknowledging them directly produces better outcomes than treating adoption as straightforward.

Governance and Control

Enterprise IT leaders are responsible for every system action. A middleware rule is auditable and deterministic. An AI agent’s reasoning is probabilistic. Governance frameworks need to adapt. Every agentic action should carry a reasoning trace. Human approval gates should sit before high-impact automated actions. Audit logs need to capture agent decisions in readable form.
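Those three controls (a reasoning trace on every action, an approval gate before high-impact actions, and a readable audit log) can be sketched together. The field names, the amount-based definition of "high impact", and the threshold value are all assumptions made for this example.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class AuditLog:
    entries: list = field(default_factory=list)

    def record(self, action: dict, reasoning: str, status: str, approver: Optional[str]) -> None:
        # Every decision is stored with the agent's stated reasoning.
        self.entries.append(
            {"action": action, "reasoning": reasoning, "status": status, "approver": approver}
        )


def execute(action: dict, reasoning: str, log: AuditLog,
            approver: Optional[str] = None, approval_threshold: float = 10_000) -> str:
    """Run low-impact actions directly; hold high-impact ones until a human signs off."""
    high_impact = action["amount"] >= approval_threshold
    status = "pending_approval" if high_impact and approver is None else "executed"
    log.record(action, reasoning, status, approver)
    return status


log = AuditLog()
print(execute({"type": "refund", "amount": 500}, "Duplicate charge detected.", log))
print(execute({"type": "refund", "amount": 50_000}, "Contract cancelled.", log))
print(execute({"type": "refund", "amount": 50_000}, "Contract cancelled.", log, approver="j.smith"))
```

The log keeps pending and executed decisions alike, so reviewers can see what the agent wanted to do as well as what it did.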

Organizations building strong governance frameworks for AI integration now are positioning themselves to scale agentic systems confidently. Organizations that skip governance design create compliance risk and operational blind spots.

Team Skills and Culture

Traditional middleware specialists understand transformation languages, configuration schemas, and routing rules. Agentic integration requires different skills. Prompt engineering, LLM evaluation, vector database management, and agent behavior testing are new competencies for most integration teams.

Retraining existing middleware teams is more efficient than replacing them. Integration specialists understand the domain deeply. They know the business rules, the data quirks, and the failure modes. Adding AI tooling to that domain expertise creates the strongest possible combination for agentic integration development.

Adoption principle: Start with the integration problems that cause the most pain today. Pick one high-exception workflow where traditional middleware fails repeatedly. Build an AI agent for that specific workflow. Measure the outcome. Use that proof point to secure investment for the next phase. Incremental wins build the organizational confidence that broad adoption requires.

Tools and Platforms Enabling the Shift

The ecosystem supporting AI agents replacing traditional middleware matured significantly in 2024 and 2025. Enterprise architects now have production-ready options at every layer of the stack.

LLM providers like Anthropic, OpenAI, and Google offer enterprise API tiers with data privacy commitments, SLAs, and compliance certifications. These address many of the data sovereignty concerns that blocked enterprise adoption in 2023. Claude by Anthropic specifically handles long context windows well, making it suitable for complex multi-step integration reasoning.

Orchestration frameworks like LangChain, LlamaIndex, and Microsoft AutoGen provide the scaffolding for building agents that call tools, manage memory, and handle multi-step reasoning. These frameworks are widely used and backed by active developer communities.

Integration-specific AI platforms are emerging. MuleSoft has added AI capabilities to its integration platform. Boomi launched AI-powered integration design tools. Workato uses AI to suggest and build integration recipes. These platforms let integration teams adopt AI capabilities without building agent infrastructure from scratch.

Vector stores such as Pinecone, Weaviate, and the pgvector extension for PostgreSQL give agents persistent memory of past integration decisions, schema mappings, and business rules. That memory layer is what separates a general-purpose LLM from a domain-specific integration agent with genuine organizational knowledge.

What Enterprise Architects Should Do Now

The trend toward AI agents replacing traditional middleware is accelerating. Enterprise architects who prepare now will lead their organizations through this transition. Those who wait will manage it reactively under pressure.

Audit your current middleware landscape first. Map every integration in your enterprise by complexity, failure rate, and maintenance cost. High-complexity, high-failure-rate integrations are the strongest candidates for agentic replacement. Low-complexity, stable integrations should stay on traditional middleware for now.
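A spreadsheet is enough for this audit, but the ranking logic can also be sketched in code. The scoring formula below is an arbitrary illustration, not a recommended weighting, and the integration names and figures are made up.

```python
from dataclasses import dataclass


@dataclass
class Integration:
    name: str
    complexity: int                   # 1 (trivial) to 5 (very complex), scored by the team
    failures_per_month: int
    monthly_maintenance_hours: float


def replacement_priority(item: Integration) -> float:
    """Illustrative score: complexity times failures, plus maintenance hours."""
    return item.complexity * item.failures_per_month + item.monthly_maintenance_hours


def rank(integrations: list) -> list:
    """Highest-priority candidates for agentic replacement come first."""
    return sorted(integrations, key=replacement_priority, reverse=True)


landscape = [
    Integration("payroll-export", complexity=1, failures_per_month=0, monthly_maintenance_hours=4),
    Integration("claims-intake", complexity=5, failures_per_month=12, monthly_maintenance_hours=40),
    Integration("supplier-feed", complexity=4, failures_per_month=6, monthly_maintenance_hours=20),
]
for item in rank(landscape):
    print(item.name, replacement_priority(item))
```

Whatever formula is used, the stable, low-failure integrations should land at the bottom of the list and stay on traditional middleware.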

Build internal AI integration competency before you need it at scale. Assign two or three engineers to build a proof-of-concept agentic integration on a non-critical workflow. Give them six weeks and a clear success metric. The knowledge that team builds is worth more than any vendor briefing.

Engage your security and compliance teams early. AI agents handling sensitive data need the same data handling controls as any other enterprise system. Bringing compliance stakeholders into the architecture conversation from the start prevents costly redesigns later.

Evaluate vendors critically. Many middleware vendors are rebranding existing products as AI agents without fundamental architectural changes. Insist on demonstrating actual reasoning capability on your specific integration problems. A genuine AI agent handles an exception gracefully. A rebranded rule engine fails the same way it always did.

The enterprise technology landscape is moving toward agentic integration at every layer. AI agents replacing traditional middleware is the defining architectural shift of this decade. Understanding it deeply is now a core competency for every enterprise technology leader.

Frequently Asked Questions

These questions surface most often when enterprise leaders evaluate the shift toward AI agents replacing traditional middleware. Each answer addresses the underlying concern directly.

Will AI agents replacing traditional middleware eliminate the need for integration teams?

No. Integration teams become more important, not less. AI agents require domain experts who understand business rules, data quality standards, and integration failure modes. Those experts now work at a higher level of abstraction — defining agent behavior in natural language rather than writing transformation code. The team’s leverage increases dramatically. Its size may shrink modestly over time, but the expertise becomes more valuable, not less.

How much does an AI agent-based integration cost compared to traditional middleware?

Total cost of ownership is typically lower for AI agents on complex integration use cases. Traditional middleware carries license costs, specialist salary premiums, and ongoing maintenance overhead. AI agents carry LLM API costs plus oversight tooling. For simple, stable integrations, traditional middleware remains more cost-effective. For complex, high-exception integrations, AI agents reduce cost significantly by eliminating manual exception handling and configuration maintenance.

How do AI agents handle data security in enterprise integration flows?

Security requires deliberate design. Payloads and logs must be scrubbed of PII before reaching the LLM. Enterprise API tiers from major LLM providers offer data processing agreements and regional data residency. For highly sensitive data, self-hosted open-source models keep all data inside the organization’s network boundary. Security is a solvable problem in agentic integration, but it requires intentional architecture decisions, not assumptions.
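As a rough illustration of the scrubbing step, the sketch below masks email addresses and US Social Security numbers with regular expressions before text is passed to a model. The two patterns are deliberately simple assumptions; production redaction usually needs a dedicated tool and a much broader set of identifiers.

```python
import re

# Simplified patterns for illustration only; real PII detection needs wider coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def scrub(text: str) -> str:
    """Replace recognizable identifiers with placeholders before any LLM call."""
    text = EMAIL.sub("[EMAIL]", text)
    return SSN.sub("[SSN]", text)


print(scrub("Contact jane.doe@example.com, SSN 123-45-6789, about order 4471."))
```

Running the scrubber at the boundary, before prompts are built, keeps the rest of the agent code free of special cases.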

Which industry is furthest ahead in adopting AI agents replacing traditional middleware?

Financial services leads adoption, driven by the high cost of integration failures and regulatory pressure to modernize legacy systems. Healthcare follows closely, motivated by interoperability mandates and the complexity of clinical data exchange. Retail is third, pushed by the need to integrate constantly changing supplier APIs and real-time inventory systems. All three industries share high integration complexity and high failure costs — exactly the conditions where AI agents deliver the most value.

How long does it take to replace a traditional middleware integration with an AI agent?

A well-scoped integration replacement takes two to six weeks for a team with basic LLM API experience. That timeline includes building the agent, populating its knowledge base with domain context, testing against historical edge cases, and deploying in shadow mode before going live. The equivalent traditional middleware implementation on the same integration typically takes three to six months. Speed of deployment is one of the clearest advantages AI agents carry over traditional approaches.
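Shadow mode is the step most worth making concrete. In the sketch below, the legacy handler stays authoritative while the candidate agent runs alongside it and every disagreement is collected for review. Both handlers are hypothetical callables supplied by the caller.

```python
def shadow_compare(messages: list, legacy, candidate) -> tuple:
    """Run both handlers on each message; only the legacy result is used downstream."""
    results, mismatches = [], []
    for message in messages:
        expected = legacy(message)
        results.append(expected)
        try:
            actual = candidate(message)
        except Exception as exc:  # a crashing candidate must never affect production
            actual = f"error: {exc}"
        if actual != expected:
            mismatches.append((message, expected, actual))
    return results, mismatches


def legacy_route(message: dict) -> str:
    return message["type"].split(".")[0]


def candidate_route(message: dict) -> str:
    # Stand-in for the agent; it disagrees on refunds to show a mismatch.
    return "unknown" if message["type"] == "refund.issued" else message["type"].split(".")[0]


traffic = [{"type": "order.created"}, {"type": "refund.issued"}]
results, mismatches = shadow_compare(traffic, legacy_route, candidate_route)
print(results, len(mismatches))
```

The mismatch list doubles as a regression suite: once it stays empty on replayed historical edge cases, the cutover decision has evidence behind it.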

What is the biggest mistake enterprises make when adopting AI agents to replace middleware?

The most common mistake is starting too broadly. Organizations attempt to replace all middleware simultaneously rather than targeting one high-pain integration first. That approach overwhelms teams, creates governance gaps, and produces failures that slow down the entire program. The organizations that succeed start narrow, demonstrate value, build organizational confidence, and expand from there. Scope discipline in the first 90 days determines the success of the entire program.


Conclusion

Enterprise integration is at an inflection point. The tools that connected systems for three decades are no longer sufficient for the complexity, speed, and variability that modern organizations demand. AI agents replacing traditional middleware is the architectural response to that reality.

The shift is not about chasing technology trends. It is about solving problems that traditional middleware cannot solve cost-effectively. Exception handling, schema adaptation, semantic data transformation, and dynamic orchestration — these are the gaps where AI agents replacing traditional middleware delivers immediate and measurable value.

The transition will not happen overnight. Traditional middleware will remain part of enterprise architecture for years, particularly where throughput, latency, and deterministic audit trails matter most. The smart path is a hybrid architecture that places AI agents where they excel and retains proven middleware where it performs well.

Enterprise architects who build agentic integration competency now gain a structural advantage. Integration speed becomes a competitive weapon. The ability to connect a new data source in hours rather than months creates real business agility. That agility is the ultimate return on a well-run program to replace traditional middleware with AI agents.

Start with your most painful integration. Build one agent. Measure the outcome honestly. The data will tell you the next step. The organizations shaping the future of enterprise technology are already doing exactly this — one agentic integration at a time.