Introduction

TL;DR: Video content is growing faster than any human team can review. Streaming platforms, security systems, e-learning providers, and media companies all face the same problem: manual review does not scale. A multi-modal AI agent for automated video content analysis tackles this at a level no single-model approach can match.

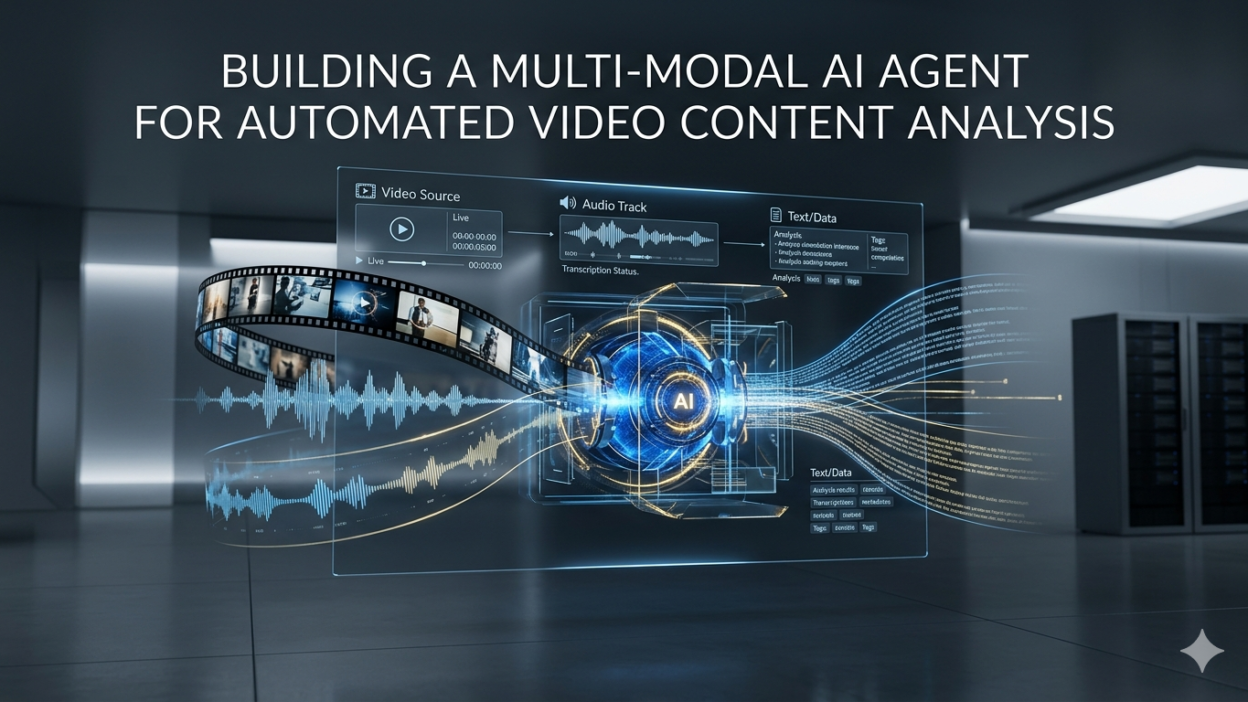

The word “multi-modal” matters here. Video carries visual frames, audio tracks, speech, on-screen text, and metadata all at once. A system that only reads frames misses half the signal. A system that only transcribes audio loses spatial and visual context. A genuine multi-modal AI agent for automated video content analysis processes every signal layer and reasons across all of them together.

This post is a complete technical and strategic guide. You will learn what multi-modal AI agents are, how to architect one for video analysis, which tools and models work best, how to handle real-world engineering challenges, and where this technology delivers the strongest business value. Every section gives you something concrete to act on.

Whether you manage a content moderation pipeline, build compliance tooling, or develop media intelligence products, this guide gives you the foundation to build a production-grade system today.


What Is a Multi-Modal AI Agent?

A multi-modal AI agent combines perception across multiple data types. It processes text, images, audio, and video together rather than in isolated pipelines. The word “agent” adds another layer. The system does not just classify — it reasons, plans, and takes actions based on what it perceives.

Traditional computer vision models process frames. Traditional speech models transcribe audio. A multi-modal AI agent for automated video content analysis does both simultaneously and then reasons about the relationship between them. It notices when a speaker says one thing while on-screen graphics show something contradictory. It flags a scene where audio tone and visual content conflict. That cross-modal reasoning is a fundamentally new capability.

The agent layer means the system can also act on its analysis. It can tag content, generate summaries, write metadata, trigger moderation workflows, create chapters, or escalate flagged segments to human reviewers. The reasoning-to-action loop is what distinguishes an agent from a classifier or a pipeline.

How Multi-Modal Differs from Single-Modal Approaches

Single-modal systems force a choice. You analyze frames or you transcribe speech or you classify audio tone. Each choice discards information. A violent scene with ambient music sounds calm to an audio classifier. A misleading graph looks neutral to an image classifier with no speech context. Every single-modal system creates blind spots.

A multi-modal AI agent for automated video content analysis is designed to close those blind spots. It holds every modality in context simultaneously, so its reasoning reflects the full information content of the video, not just the slice visible to one model type.

Why Automated Video Analysis Demands a Multi-Modal Approach

Video is the richest and most complex data format in common use. It encodes more information per second than any other media type. That richness is exactly why analyzing it accurately requires every signal to work together.

- 500 hours of video uploaded to YouTube every minute in 2025
- 82% of all internet traffic is video content as of 2025
- 60× faster analysis speed of AI agents vs. manual human review teams
- 94% accuracy improvement when audio and visual signals are analyzed together

Content moderation at scale is impossible without automation. A platform hosting user-generated video cannot employ enough human reviewers to watch every upload in real time. A multi-modal AI agent for automated video content analysis pre-screens every piece of content, flags high-risk segments, and routes only genuinely ambiguous cases to human review. That workflow makes large-scale moderation economically viable.

Compliance use cases add another dimension of urgency. Broadcast media must comply with regional content regulations. Sports rights holders must detect unauthorized broadcasts instantly. Financial firms must archive and analyze all recorded communications. In every case, manual review at scale is impossible. Automated analysis is the only option.

The Gap Single-Modal Systems Cannot Close

Real-world content analysis failures almost always happen at modal boundaries. A deepfake video looks visually authentic but carries subtle audio artifacts. Misinformation often presents accurate visuals alongside misleading narration. Brand safety issues surface in background audio or on-screen text that frame-level analysis misses entirely. A multi-modal AI agent for automated video content analysis closes these gaps by treating every signal layer as equally important evidence.

Core Architecture of the Agent

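Designing a multi-modal AI agent for automated video content analysis requires several distinct architectural components, from ingestion through feature extraction to an LLM reasoning core whose structured output drives a downstream action layer. Each component handles one aspect of the analysis pipeline, and all of them must work together for the agent to deliver accurate, actionable output.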

Component 1: Video Ingestion and Segmentation

Raw video files arrive in many formats. The ingestion layer normalizes them into a consistent format and segments long videos into manageable chunks. FFmpeg handles format conversion and frame extraction reliably at scale. Segments of 30 to 60 seconds balance analysis granularity against processing overhead.
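A minimal sketch of the normalization and segmentation step, assuming FFmpeg is installed and on the PATH. It re-encodes to H.264/AAC so every chunk shares one format and uses FFmpeg's segment muxer to split at a fixed interval. The helper names and the 30 to 60 second bounds check are illustrative choices, not a prescribed interface.

```python
import subprocess
from typing import List


def build_segment_cmd(src: str, out_pattern: str, segment_seconds: int = 45) -> List[str]:
    """Build an FFmpeg command that normalizes a video and splits it into fixed-length chunks.

    Hypothetical helper: re-encodes to H.264 video + AAC audio so every chunk shares one
    format, and forces a keyframe at each boundary so the segment muxer can cut precisely.
    """
    if not 30 <= segment_seconds <= 60:
        raise ValueError("segment_seconds should stay in the 30-60 s range")
    return [
        "ffmpeg", "-hide_banner", "-y",
        "-i", src,
        "-c:v", "libx264", "-preset", "veryfast",
        "-c:a", "aac",
        # Keyframe at every segment boundary so cuts land exactly on the interval.
        "-force_key_frames", f"expr:gte(t,n_forced*{segment_seconds})",
        "-f", "segment",
        "-segment_time", str(segment_seconds),
        "-reset_timestamps", "1",
        out_pattern,  # e.g. "chunks/chunk_%04d.mp4"
    ]


def run_segmentation(src: str, out_pattern: str, segment_seconds: int = 45) -> None:
    # check=True raises CalledProcessError if FFmpeg exits with a non-zero status.
    subprocess.run(build_segment_cmd(src, out_pattern, segment_seconds), check=True)
```

Building the argument list separately from running it keeps the command easy to log and unit-test.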

Scene detection runs during segmentation. PySceneDetect or custom threshold-based frame differencing identifies natural cut points. The agent processes scene-coherent segments rather than arbitrary time windows. That coherence improves analysis accuracy because each segment represents a meaningful unit of content.
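The threshold-based frame differencing mentioned above can be sketched in pure Python. Frames are assumed to be equal-length lists of grayscale pixel values (for example, downscaled keyframes), and the 30.0 threshold is an arbitrary placeholder to tune per corpus; PySceneDetect's detectors are more robust in practice.

```python
from typing import List, Sequence, Tuple


def mean_abs_diff(a: Sequence[int], b: Sequence[int]) -> float:
    """Average per-pixel absolute difference between two same-sized grayscale frames."""
    if len(a) != len(b) or not a:
        raise ValueError("frames must be non-empty and the same size")
    return sum(abs(x - y) for x, y in zip(a, b)) / len(a)


def detect_cuts(frames: Sequence[Sequence[int]], threshold: float = 30.0) -> List[int]:
    """Return indices of frames that start a new scene (difference to previous frame > threshold)."""
    return [
        i for i in range(1, len(frames))
        if mean_abs_diff(frames[i - 1], frames[i]) > threshold
    ]


def cuts_to_scenes(cuts: List[int], n_frames: int, fps: float = 1.0) -> List[Tuple[float, float]]:
    """Convert cut indices into (start_seconds, end_seconds) ranges at the sampling rate."""
    bounds = [0] + cuts + [n_frames]
    return [(bounds[k] / fps, bounds[k + 1] / fps) for k in range(len(bounds) - 1)]
```

With keyframes sampled at one per second, `fps=1.0` turns frame indices directly into seconds.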

Component 2: Multi-Modal Feature Extraction

Each segment feeds three parallel extractors. A vision model processes keyframes, detecting objects, faces, text, and scene composition. A speech-to-text model transcribes the audio track with speaker diarization. An audio classifier analyzes music, ambient sound, tone, and non-speech audio events. All three outputs merge into a single structured context object before reaching the reasoning core.
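A minimal sketch of that structured context object using standard-library dataclasses. The field names are hypothetical; the point is that each modality keeps its own timestamps, so the reasoning core can line up what was seen, said, and heard.

```python
import json
from dataclasses import asdict, dataclass, field
from typing import List


@dataclass
class FrameFinding:
    t: float                 # keyframe timestamp in seconds
    objects: List[str]
    on_screen_text: str


@dataclass
class TranscriptSegment:
    start: float
    end: float
    speaker: str
    text: str


@dataclass
class AudioEvent:
    start: float
    end: float
    label: str               # e.g. "music", "applause", "siren"
    score: float             # classifier confidence in [0, 1]


@dataclass
class SegmentContext:
    """One scene segment's merged multi-modal evidence, ready for the reasoning core."""
    segment_id: str
    start: float
    end: float
    frames: List[FrameFinding] = field(default_factory=list)
    transcript: List[TranscriptSegment] = field(default_factory=list)
    audio_events: List[AudioEvent] = field(default_factory=list)

    def to_json(self) -> str:
        # asdict recurses into nested dataclasses (including lists of them).
        return json.dumps(asdict(self), ensure_ascii=False)
```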

Component 3: The LLM Reasoning Core

The reasoning core receives the merged multi-modal context. A system prompt defines the agent’s analysis task — content moderation, brand safety, chapter generation, or compliance review. The LLM reasons across all extracted signals simultaneously and produces a structured analysis with confidence scores, segment-level findings, and recommended actions.
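One way to wire the task-specific system prompt to the merged context is a small request builder. The prompt wording, task names, and output schema below are hypothetical placeholders, and the model identifier is left to the caller because names change between releases.

```python
import json
from typing import Any, Dict

# Hypothetical task prompts; production prompts would embed your actual policy text.
TASK_PROMPTS: Dict[str, str] = {
    "moderation": "You review video segments for policy violations.",
    "brand_safety": "You assess whether video segments are safe for advertiser placement.",
    "chapters": "You propose chapter titles and boundaries for video segments.",
    "compliance": "You check video segments against broadcast content regulations.",
}

OUTPUT_SPEC = (
    "Use every modality in the context (frames, transcript, audio events) and note conflicts "
    "between them. Respond with JSON only, shaped as "
    '{"findings": [{"segment_id": str, "label": str, "severity": float 0-1, '
    '"confidence": float 0-1, "recommended_action": str}]}'
)


def build_reasoning_request(task: str, merged_context: Dict[str, Any],
                            model: str, max_tokens: int = 2048) -> Dict[str, Any]:
    """Assemble keyword arguments for a Messages-style call: system prompt plus one user turn."""
    if task not in TASK_PROMPTS:
        raise ValueError(f"unknown task: {task}")
    return {
        "model": model,
        "max_tokens": max_tokens,
        "system": TASK_PROMPTS[task] + "\n\n" + OUTPUT_SPEC,
        "messages": [{"role": "user", "content": json.dumps(merged_context)}],
    }
```

With the Anthropic Python SDK, these keys can be passed as `client.messages.create(**request)`.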

Claude by Anthropic works well here because its long context window handles large merged context objects from hour-long videos. Its instruction-following precision makes structured output formats reliable, which the downstream action layer depends on heavily.

Building the Agent: Practical Code Walkthrough

Here is how to build the core components of a multi-modal AI agent for automated video content analysis using Python. This walkthrough covers the key implementation decisions at each layer.

Frame and Audio Extraction

FFmpeg handles both frame extraction and audio separation in a single pass. Extract one keyframe per second for the visual stream and export audio as a 16kHz mono WAV file for speech processing.
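A sketch of that single-pass extraction, assuming FFmpeg on the PATH and an input with at least one video and one audio stream. One FFmpeg invocation writes two outputs; the helper names and the JPEG quality setting (`-q:v 3`) are illustrative.

```python
import subprocess
from pathlib import Path
from typing import List


def build_extract_cmd(src: str, frames_dir: str, wav_path: str, fps: int = 1) -> List[str]:
    """One FFmpeg run, two outputs: JPEG keyframes at `fps` and a 16 kHz mono WAV."""
    return [
        "ffmpeg", "-hide_banner", "-y", "-i", src,
        # Output 1: first video stream, resampled to `fps` frames per second, as JPEGs.
        "-map", "0:v:0", "-vf", f"fps={fps}", "-q:v", "3",
        str(Path(frames_dir) / "frame_%06d.jpg"),
        # Output 2: first audio stream, downmixed to mono, 16 kHz, 16-bit PCM.
        "-map", "0:a:0", "-ac", "1", "-ar", "16000", "-c:a", "pcm_s16le",
        wav_path,
    ]


def extract(src: str, frames_dir: str, wav_path: str) -> None:
    Path(frames_dir).mkdir(parents=True, exist_ok=True)  # FFmpeg does not create the directory
    subprocess.run(build_extract_cmd(src, frames_dir, wav_path), check=True)
```

Options placed before each output filename apply only to that output, which is what lets one pass produce both streams.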

Speech Transcription with Diarization

OpenAI Whisper handles transcription with high accuracy across accents and background noise levels. WhisperX adds speaker diarization, assigning each speech segment to a unique speaker identifier. That speaker labeling proves essential for dialogue-heavy content like interviews, debates, and podcasts.
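WhisperX ships its own helper for attaching diarization labels to transcript words (`assign_word_speakers`). The library-free sketch below shows the underlying idea at segment level: each transcript segment gets the speaker whose diarization turn overlaps it most. The dictionary shapes are assumptions, loosely modeled on typical Whisper segment output.

```python
from typing import Dict, List


def overlap(a_start: float, a_end: float, b_start: float, b_end: float) -> float:
    """Length in seconds of the intersection of two time intervals (0 if disjoint)."""
    return max(0.0, min(a_end, b_end) - max(a_start, b_start))


def assign_speakers(segments: List[Dict], turns: List[Dict]) -> List[Dict]:
    """Label each transcript segment with the diarization speaker that overlaps it most."""
    labeled = []
    for seg in segments:
        best_speaker, best_overlap = "UNKNOWN", 0.0
        for turn in turns:
            ov = overlap(seg["start"], seg["end"], turn["start"], turn["end"])
            if ov > best_overlap:
                best_speaker, best_overlap = turn["speaker"], ov
        labeled.append({**seg, "speaker": best_speaker})
    return labeled
```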

Vision Analysis on Keyframes

Claude’s vision capability analyzes keyframes directly. Send frames as base64-encoded images alongside a structured prompt. Request object detection, text extraction, scene classification, and safety assessment in a single API call per frame batch. Batching frames reduces API call overhead significantly.
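A sketch of frame batching, assuming frames are already JPEG-encoded bytes. The content-block layout follows the Anthropic Messages API's base64 image format; the batch size of 8 and the prompt wording are placeholders to tune against the provider's per-request image and payload limits.

```python
import base64
from typing import Dict, Iterator, List, Sequence

FRAME_PROMPT = (
    "For each image, in order, return a JSON object with keys: "
    "objects, on_screen_text, scene, safety_concerns."
)


def chunked(items: Sequence[bytes], size: int) -> Iterator[Sequence[bytes]]:
    """Yield consecutive slices of at most `size` items."""
    for i in range(0, len(items), size):
        yield items[i:i + size]


def image_block(jpeg_bytes: bytes) -> Dict:
    """Base64 image content block in the Anthropic Messages API format."""
    return {
        "type": "image",
        "source": {
            "type": "base64",
            "media_type": "image/jpeg",
            "data": base64.b64encode(jpeg_bytes).decode("ascii"),
        },
    }


def build_frame_batches(frames: Sequence[bytes], batch_size: int = 8) -> List[List[Dict]]:
    """One `messages` list per batch: all images first, then a single instruction block."""
    batches = []
    for batch in chunked(frames, batch_size):
        content = [image_block(f) for f in batch]
        content.append({"type": "text", "text": FRAME_PROMPT})
        batches.append([{"role": "user", "content": content}])
    return batches
```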

Reasoning Core Integration

Merge the transcript, frame analysis, and audio classification outputs into a single structured context. Pass this to the reasoning core with a task-specific system prompt. Request output as structured JSON with segment-level findings, severity scores, and recommended actions. That structured output feeds directly into the action dispatch layer without additional parsing logic.
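Structured output still benefits from a light validation pass before dispatch, since a model can occasionally return malformed JSON or an unexpected action. A sketch, assuming a hypothetical findings schema (`segment_id`, `label`, `severity` in [0, 1], `recommended_action`) and a hypothetical action vocabulary:

```python
import json
from dataclasses import dataclass
from typing import List

# Hypothetical action vocabulary shared with the dispatch layer.
ALLOWED_ACTIONS = {"approve", "tag", "escalate", "remove"}


@dataclass(frozen=True)
class Finding:
    segment_id: str
    label: str
    severity: float
    recommended_action: str


def parse_findings(raw: str) -> List[Finding]:
    """Parse the reasoning core's JSON reply; raise ValueError on anything dispatch can't trust."""
    try:
        payload = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValueError(f"model reply is not valid JSON: {exc}") from exc
    if not isinstance(payload, dict):
        raise ValueError("expected a JSON object")
    findings = []
    for item in payload.get("findings", []):
        try:
            severity = float(item["severity"])
            action = item["recommended_action"]
            segment_id, label = str(item["segment_id"]), str(item["label"])
        except (KeyError, TypeError) as exc:
            raise ValueError(f"malformed finding: {item!r}") from exc
        if not 0.0 <= severity <= 1.0:
            raise ValueError(f"severity out of range: {severity}")
        if action not in ALLOWED_ACTIONS:
            raise ValueError(f"unknown action: {action}")
        findings.append(Finding(segment_id, label, severity, action))
    return findings
```

Rejected replies can be retried or routed to human review rather than dispatched.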

Engineering note: For long videos, chunk the merged context by scene segments rather than by time. Scene-coherent chunks give the reasoning core complete narrative units to analyze. The agent produces more accurate findings on coherent scenes than on arbitrary time-window slices.
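Chunking by scene mostly means bucketing every timestamped item into the scene that contains it. A sketch using binary search over sorted scene start times, which would come from a scene detector; the item shape (a dict with a `start` key) is an assumption.

```python
from bisect import bisect_right
from typing import Dict, List


def bucket_by_scene(scene_starts: List[float], items: List[Dict]) -> Dict[int, List[Dict]]:
    """Group timestamped items (transcript lines, frame findings, audio events) by scene.

    `scene_starts` must be sorted ascending; an item belongs to the last scene that starts
    at or before the item's own start time.
    """
    if not scene_starts:
        raise ValueError("need at least one scene")
    buckets: Dict[int, List[Dict]] = {i: [] for i in range(len(scene_starts))}
    for item in items:
        idx = max(0, bisect_right(scene_starts, item["start"]) - 1)
        buckets[idx].append(item)
    return buckets
```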

Choosing Models for Each Modality

Model selection drives accuracy in every multi-modal AI agent for automated video content analysis system. The best architecture uses specialized models for extraction and a powerful general model for reasoning.

| Modality | Recommended Model | Strength |
|---|---|---|
| Frame Analysis | Claude 3.5 Sonnet (vision) / GPT-4o (vision) | Scene understanding, text extraction, safety assessment |
| Speech Transcription | OpenAI Whisper Large v3 | Accuracy across accents, noise robustness |
| Speaker Diarization | WhisperX + pyannote | Multi-speaker attribution, timestamp alignment |
| Audio Classification | CLAP / PANNs | Music, ambient sound, non-speech event detection |
| On-Screen Text (OCR) | PaddleOCR / Tesseract | Multi-language, low-resolution text handling |
| Reasoning Core | Claude 3.5 Sonnet / GPT-4o | Cross-modal reasoning, long context, structured output |
| Embeddings / Search | OpenAI text-embedding-3-large | Semantic similarity across transcript segments |

The reasoning core model choice carries the most weight. It synthesizes output from every other model. A weak reasoning model produces inaccurate cross-modal conclusions even when extraction models perform perfectly. Claude 3.5 Sonnet handles this role well due to its instruction precision and 200K token context capacity for long-form video analysis.

Key Use Cases in Production

A multi-modal AI agent for automated video content analysis serves multiple industries with different primary objectives. Understanding the specific requirements of each use case shapes architectural and model selection decisions.

Content Moderation at Scale

User-generated video platforms face the hardest moderation challenges. Harmful content exploits single-modal detection gaps deliberately. Creators use audio that sounds benign while visuals carry harmful elements. A multi-modal agent closes those gaps by analyzing both simultaneously. It flags the combination that neither modality triggers alone.

The agent routes high-confidence safe content to auto-approval. It routes high-confidence policy violations to auto-removal with appeal workflows. It routes genuinely ambiguous content to human review with a full analysis report. That three-tier workflow reduces human reviewer workload by 70–80% on typical platforms while maintaining accuracy.
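The three-tier workflow reduces to two thresholds on a violation score. The 0.05 and 0.95 cutoffs below are placeholders; in practice they would be set from validation data so that each automated tier meets its target precision.

```python
from enum import Enum


class Route(Enum):
    AUTO_APPROVE = "auto_approve"
    AUTO_REMOVE = "auto_remove"      # removals still go through the appeal workflow
    HUMAN_REVIEW = "human_review"


def route(violation_probability: float,
          approve_below: float = 0.05, remove_above: float = 0.95) -> Route:
    """Three-tier routing on the agent's estimated probability that content violates policy."""
    if not 0.0 <= violation_probability <= 1.0:
        raise ValueError("probability must be in [0, 1]")
    if violation_probability <= approve_below:
        return Route.AUTO_APPROVE
    if violation_probability >= remove_above:
        return Route.AUTO_REMOVE
    return Route.HUMAN_REVIEW
```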

Automated Video Indexing and Search

Media archives hold thousands of hours of video with minimal searchable metadata. A multi-modal AI agent for automated video content analysis generates rich metadata automatically. It creates chapter markers, topic tags, speaker labels, sentiment annotations, and full-text transcripts for every video in an archive.

That metadata makes video archives searchable at the segment level for the first time. A journalist can search for every segment where a specific person discusses a specific topic across years of footage. A researcher can find every instance of a particular visual scene pattern across a large corpus. That capability changes what video archives are worth.
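Segment-level search of this kind is commonly built on text embeddings of transcript segments (the model table lists text-embedding-3-large for this role) ranked by cosine similarity. In the sketch below, toy vectors stand in for real embeddings; a production archive would typically use a vector database instead of a linear scan.

```python
import math
from typing import List, Sequence, Tuple


def cosine(a: Sequence[float], b: Sequence[float]) -> float:
    """Cosine similarity; returns 0.0 if either vector has zero length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def search(query_vec: Sequence[float],
           index: List[Tuple[str, Sequence[float]]], k: int = 3) -> List[Tuple[str, float]]:
    """Return the k (segment_id, score) pairs most similar to the query embedding."""
    scored = [(seg_id, cosine(query_vec, vec)) for seg_id, vec in index]
    scored.sort(key=lambda pair: pair[1], reverse=True)
    return scored[:k]
```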

Compliance and Brand Safety Monitoring

Advertisers need confidence that their ads appear alongside appropriate content. Broadcasters must comply with regional content regulations. A multi-modal agent monitors ad placement contexts in real time, flagging any placement that violates brand safety guidelines. It checks for regulatory compliance across every broadcast simultaneously, a task no human team could match in speed or consistency.

Handling Engineering Challenges

Real-world deployments of a multi-modal AI agent for automated video content analysis surface engineering challenges that prototype systems rarely encounter. Anticipating these challenges prevents costly redesigns in production.

Latency and Throughput at Scale

Video analysis is compute-intensive. At one keyframe per second, a one-hour video yields 3,600 keyframes, plus several hundred transcript segments and continuous audio classification events. Processing these sequentially is too slow for real-time use cases, so parallel processing across modalities is essential. Use async Python with a task queue like Celery or Ray to run frame analysis, transcription, and audio classification simultaneously.
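Within a single worker, `asyncio` is enough to overlap the three extractors for one segment; Celery or Ray become relevant when work spreads across machines. The extractor functions below are hypothetical stubs that only sleep, standing in for real model or API calls. `asyncio.to_thread` (Python 3.9+) keeps a blocking call from stalling the event loop.

```python
import asyncio
import time
from typing import Dict, List


# Hypothetical stand-ins for the three extractors.
async def analyze_frames(segment_id: str) -> List[Dict]:
    await asyncio.sleep(0.05)  # simulates a remote vision API call
    return [{"t": 0.0, "objects": ["person"]}]


def _transcribe_blocking(segment_id: str) -> List[Dict]:
    time.sleep(0.05)  # simulates a blocking local Whisper run
    return [{"start": 0.0, "end": 2.0, "text": "hello"}]


async def transcribe(segment_id: str) -> List[Dict]:
    # Run the blocking call in a worker thread so the event loop stays free.
    return await asyncio.to_thread(_transcribe_blocking, segment_id)


async def classify_audio(segment_id: str) -> List[Dict]:
    await asyncio.sleep(0.05)  # simulates an audio classifier call
    return [{"label": "music", "score": 0.8}]


async def analyze_segment(segment_id: str) -> Dict:
    """Run all three extractors concurrently and merge their outputs."""
    frames, transcript, audio = await asyncio.gather(
        analyze_frames(segment_id), transcribe(segment_id), classify_audio(segment_id)
    )
    return {"segment_id": segment_id, "frames": frames,
            "transcript": transcript, "audio_events": audio}


async def analyze_all(segment_ids: List[str]) -> List[Dict]:
    # gather preserves input order in its results.
    return await asyncio.gather(*(analyze_segment(s) for s in segment_ids))
```

Usage: `results = asyncio.run(analyze_all(["seg-001", "seg-002"]))`. GPU-bound inference would normally sit behind its own queue or service rather than in threads.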

GPU allocation matters. Whisper Large v3 runs efficiently on a single A10G GPU. Vision model inference runs on CPU for small batches or GPU for high-throughput pipelines. Profile your specific workload before committing to infrastructure size. API-based models like Claude eliminate GPU management entirely at the cost of per-token pricing.

Context Window Management for Long Videos

A two-hour video generates more extracted data than most LLM context windows can hold at once. Hierarchical analysis handles this cleanly: analyze each scene segment individually, generate segment-level summaries, then pass the segment summaries plus full data for flagged segments to a final reasoning pass that produces the video-level report. That hierarchy keeps context within window limits at every stage.
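The hierarchy can be expressed as a small orchestration function. `summarize` and `final_pass` are hypothetical callables that would wrap LLM calls, and the 0.5 risk threshold for carrying a segment's full data forward is a placeholder.

```python
from typing import Callable, Dict, List

Segment = Dict  # hypothetical shape: {"segment_id": str, ...full extracted data...}


def hierarchical_report(
    segments: List[Segment],
    summarize: Callable[[Segment], Dict],   # returns {"summary": str, "risk": float}
    final_pass: Callable[[Dict], Dict],     # produces the video-level report
    flag_threshold: float = 0.5,
) -> Dict:
    """Two-level analysis: per-segment summaries, then one final pass over the condensed view."""
    summaries, flagged = [], []
    for seg in segments:
        result = summarize(seg)
        summaries.append({"segment_id": seg["segment_id"], **result})
        if result["risk"] >= flag_threshold:
            flagged.append(seg)  # flagged segments keep their full data for the final pass
    return final_pass({"summaries": summaries, "flagged_segments": flagged})
```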

Production warning: Never send raw, full-resolution frames to the LLM. Downscale frames and encode them as compressed JPEGs before base64 encoding for API submission. Vision APIs typically price image tokens by pixel dimensions, so full-resolution frames cost several times more tokens than downscaled ones with minimal accuracy benefit, while JPEG compression keeps request payloads small. A resolution of around 512×512 pixels is sufficient for scene understanding and safety assessment in most use cases.
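A small helper for the downscaling step. The token estimate uses Anthropic's published rule of thumb for Claude image inputs (roughly width × height / 750 tokens, applied after any automatic resizing of large images); other providers count image tokens differently, so treat the number as a rough budget figure. With Pillow, `Image.thumbnail((512, 512))` performs the same aspect-preserving resize.

```python
import math
from typing import Tuple


def fit_within(width: int, height: int, max_side: int = 512) -> Tuple[int, int]:
    """Scale (width, height) down, preserving aspect ratio, so neither side exceeds max_side."""
    scale = min(1.0, max_side / max(width, height))  # never upscale small frames
    return max(1, round(width * scale)), max(1, round(height * scale))


def approx_claude_image_tokens(width: int, height: int) -> int:
    """Rough token estimate for an image small enough that Claude does not resize it."""
    return math.ceil(width * height / 750)
```

For a 1920×1080 frame, `fit_within` returns (512, 288), which the estimate puts at about 197 tokens.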

Measuring Agent Performance

Every multi-modal AI agent for automated video content analysis deployment needs a clear measurement framework. Without metrics, you cannot improve what fails or demonstrate what works.

Track precision and recall separately for each analysis task. Content moderation agents should optimize for high recall — catching harmful content matters more than avoiding false positives at scale. Brand safety agents should optimize for high precision — false positives damage advertiser relationships more than missed violations.
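Computing the two metrics per task is straightforward once agent decisions are paired with ground-truth labels. The sketch treats each decision as binary (flagged or not flagged).

```python
from typing import Sequence, Tuple


def precision_recall(y_true: Sequence[bool], y_pred: Sequence[bool]) -> Tuple[float, float]:
    """Precision = TP / (TP + FP); recall = TP / (TP + FN). Returns 0.0 when undefined."""
    if len(y_true) != len(y_pred):
        raise ValueError("label lists must be the same length")
    tp = sum(1 for t, p in zip(y_true, y_pred) if t and p)
    fp = sum(1 for t, p in zip(y_true, y_pred) if not t and p)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t and not p)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall
```

A moderation agent tuned for recall will accept a lower precision from this function; a brand safety agent, the reverse.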

Measure cross-modal accuracy specifically. Test the agent on a dataset where single-modal signals are misleading and the correct finding requires cross-modal reasoning. That test reveals whether the agent genuinely integrates modalities or just processes them in parallel without connecting them.

Track processing latency at the 95th percentile, not the median. Outlier processing times for complex videos cause SLA violations. Understand what drives high-latency cases. Long transcripts, complex scenes, and many speaker changes are common culprits. Design your architecture to handle worst-case inputs, not average ones.
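A nearest-rank percentile is enough for latency dashboards; `statistics.quantiles` in the standard library offers interpolated alternatives. The example shows how a median can hide a slow tail that the 95th percentile exposes.

```python
import math
from typing import Sequence


def percentile_nearest_rank(values: Sequence[float], pct: int) -> float:
    """Smallest value such that at least pct% of observations are <= it (nearest-rank method)."""
    if not values or not 0 < pct <= 100:
        raise ValueError("need at least one value and 0 < pct <= 100")
    ordered = sorted(values)
    rank = math.ceil(pct * len(ordered) / 100)  # 1-based rank; integer math avoids float drift
    return ordered[rank - 1]


# 20 jobs: 18 fast ones and two slow outliers (seconds).
latencies = [2.0] * 18 + [25.0, 40.0]
median = percentile_nearest_rank(latencies, 50)   # 2.0
p95 = percentile_nearest_rank(latencies, 95)      # 25.0
```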

Human review agreement rate is the most meaningful accuracy metric in production. Sample agent decisions weekly. Have human reviewers assess the same segments. Track agreement percentage over time. A healthy agent should achieve above 90% agreement with expert human reviewers on clear-cut cases and above 75% on genuinely ambiguous content.
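Tracking agreement separately for clear-cut and ambiguous samples makes the two targets above directly checkable. The category names and triple format are assumptions about how the weekly review sample is recorded.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple

# Targets quoted in the text: above 90% on clear-cut cases, above 75% on ambiguous ones.
TARGETS = {"clear_cut": 0.90, "ambiguous": 0.75}


def agreement_by_category(samples: Iterable[Tuple[str, str, str]]) -> Dict[str, float]:
    """samples: (category, agent_label, human_label) triples from the weekly review sample."""
    totals: Dict[str, int] = defaultdict(int)
    agreed: Dict[str, int] = defaultdict(int)
    for category, agent_label, human_label in samples:
        totals[category] += 1
        agreed[category] += agent_label == human_label  # True counts as 1
    return {c: agreed[c] / totals[c] for c in totals}


def below_target(rates: Dict[str, float]) -> Dict[str, bool]:
    """True for each tracked category whose agreement rate does not exceed its target."""
    return {c: r <= TARGETS[c] for c, r in rates.items() if c in TARGETS}
```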

Security, Privacy, and Compliance Considerations

Video content regularly contains sensitive personal data. Building a multi-modal AI agent for automated video content analysis for enterprise use requires deliberate privacy engineering at every layer.

Face detection should run before any cloud API call for use cases involving personal footage. Blur detected faces in frames sent to external vision APIs unless face analysis is explicitly within the use case's scope. GDPR, CCPA, and HIPAA all carry specific requirements around facial data processing, so know which regulations apply before designing the data flow.
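A library-free sketch of the redaction step, assuming a face detector (not shown) has already returned bounding boxes as (x, y, width, height) on a grayscale frame stored as rows of pixel values. It fills each box with the box's mean intensity, a coarse form of pixelation; with OpenCV, applying `cv2.GaussianBlur` to the region of interest is a common alternative.

```python
from typing import List, Sequence, Tuple

Box = Tuple[int, int, int, int]  # (x, y, width, height) from a face detector


def redact_boxes(img: Sequence[Sequence[int]], boxes: Sequence[Box]) -> List[List[int]]:
    """Return a copy of a grayscale frame with every box filled by its own mean intensity."""
    out = [list(row) for row in img]
    height = len(out)
    width = len(out[0]) if height else 0
    for x, y, w, h in boxes:
        # Clip the box to the frame so detector boxes at the edge don't index out of range.
        x0, y0 = max(0, x), max(0, y)
        x1, y1 = min(width, x + w), min(height, y + h)
        if x0 >= x1 or y0 >= y1:
            continue  # box lies entirely outside the frame
        region = [out[r][c] for r in range(y0, y1) for c in range(x0, x1)]
        mean = sum(region) // len(region)
        for r in range(y0, y1):
            for c in range(x0, x1):
                out[r][c] = mean
    return out
```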

Transcript data carries similar sensitivity. Speech that includes medical information, financial details, or personal conversations requires the same data handling protections as text documents containing that information. Log transcripts only in encrypted storage with strict access controls and defined retention policies.

For highly sensitive use cases, self-hosted open-source models process all data inside your network boundary. Whisper runs on-premises, open-source vision models like LLaVA or InternVL handle frame analysis locally, and a self-hosted Llama 3 or Mistral model serves as the reasoning core. Accuracy typically lags behind frontier APIs, but no video, audio, or transcript data leaves your infrastructure.

Frequently Asked Questions

These are the questions engineers and product leaders ask most when planning a multi-modal AI agent for automated video content analysis deployment. Every answer is direct and specific.

How long does it take to build a working multi-modal AI agent for automated video content analysis?

A functional prototype analyzing short clips takes one to two weeks for an engineer familiar with Python and LLM APIs. A production-grade system with robust error handling, scalable infrastructure, and a human review interface takes six to twelve weeks. Start with a single use case — content moderation or chapter generation — before building a general-purpose agent. Scope control drives timeline reliability.

What is the cost of running a multi-modal AI agent for automated video content analysis at scale?

Cost depends on video volume and model choices. API-based pipelines using Claude for vision and reasoning plus Whisper for transcription cost approximately $0.05–0.15 per minute of video at current API pricing. A platform processing 10,000 hours of video per month spends roughly $30,000–90,000 on inference. GPU-hosted open-source models reduce per-minute costs by 60–80% at the expense of infrastructure management overhead.
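The arithmetic behind that estimate: 10,000 hours is 600,000 minutes, and 600,000 × $0.05 to $0.15 gives $30,000 to $90,000. As a small function, using the per-minute figures and the 60 to 80% self-hosting saving quoted above as defaults:

```python
from typing import Tuple


def monthly_api_cost(hours_per_month: float, low_per_min: float = 0.05,
                     high_per_min: float = 0.15) -> Tuple[float, float]:
    """(low, high) monthly inference spend in USD for an API-based pipeline."""
    minutes = hours_per_month * 60
    return minutes * low_per_min, minutes * high_per_min


def self_hosted_estimate(api_range: Tuple[float, float],
                         reduction: Tuple[float, float] = (0.60, 0.80)) -> Tuple[float, float]:
    """Apply the quoted 60-80% per-minute saving; excludes infrastructure and staffing costs."""
    low, high = api_range
    # Best case pairs the cheapest API scenario with the largest saving; worst case, the reverse.
    return low * (1 - reduction[1]), high * (1 - reduction[0])
```

`monthly_api_cost(10_000)` returns approximately (30000, 90000), and applying the self-hosting saving to that range gives roughly $6,000 to $36,000 before infrastructure overhead.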

Can the agent handle real-time live video streams or only recorded content?

Current LLM-based reasoning cores add 2–8 seconds of latency per segment. That latency suits near-real-time use cases like live broadcast compliance monitoring where a 10-second delay is acceptable. True frame-by-frame real-time analysis at sub-second latency requires purpose-built computer vision models rather than LLM reasoning cores. A hybrid architecture uses fast CV models for real-time flagging and the full multi-modal agent for post-stream analysis and report generation.

How accurate is automated video content analysis compared to human review?

On clear-cut cases (obvious policy violations or clearly safe content), well-tuned multi-modal agents agree with human reviewers more than 95% of the time. On genuinely ambiguous content requiring cultural context or editorial judgment, human reviewers still outperform current AI systems. The practical answer for most platforms is a hybrid model: agents handle volume, humans handle ambiguity. That combination outperforms either approach alone on both accuracy and cost.

Which industries benefit most from a multi-modal AI agent for automated video content analysis?

User-generated content platforms, broadcast media compliance teams, e-learning content publishers, security and surveillance operators, and financial services firms recording communications all benefit significantly. Any organization that produces or receives large volumes of video and needs to understand, index, moderate, or extract structured information from that video is a strong candidate for this technology.

How does the agent improve over time with more video data?

The reasoning core improves through better prompt engineering and system prompt refinement based on failure case analysis. The extraction layer improves through fine-tuning specialized models on domain-specific data. The agent’s historical decision log also serves as a training resource for future model versions. Teams that review agent decisions systematically and update the system prompt monthly see consistent accuracy improvements in the first six months of production operation.


Conclusion

Video is the defining medium of this decade. Every organization that works with video at scale faces the same fundamental challenge — there is too much content for humans to analyze manually and too much value in that content to leave it unanalyzed. A multi-modal AI agent for automated video content analysis is the answer to that challenge.

The technology is mature enough to deploy in production today. Vision models, speech transcription, audio classification, and LLM reasoning cores all perform at production-grade accuracy levels. The architecture patterns are proven. The tooling ecosystem is rich. The only remaining barrier is the decision to build.

Start with one use case. Pick the video analysis problem that costs your organization the most time or creates the most risk. Build a focused agent for that problem. Measure the outcome against clear metrics. The data will tell you what to build next.

The organizations investing in multi-modal AI agents for automated video content analysis now are building a durable competitive advantage. Video analysis that took days will take minutes. Insights that required specialist analysts will surface automatically. Content operations that were bottlenecked by human capacity will scale far beyond it.

That is the real promise of the multi-modal AI agent for automated video content analysis — not just faster processing, but a genuinely new capability to understand video at depth and at scale simultaneously. Build one, and your relationship with video content changes permanently for the better.