Introduction

TL;DR: The AI industry spent years chasing one metric above all others: scale. The assumption was simple. Larger models with more parameters always win. Enterprises lined up to access the biggest foundation models available. They paid premium API fees. They accepted latency trade-offs. They handed sensitive data to third-party cloud providers. All because bigger felt safer.

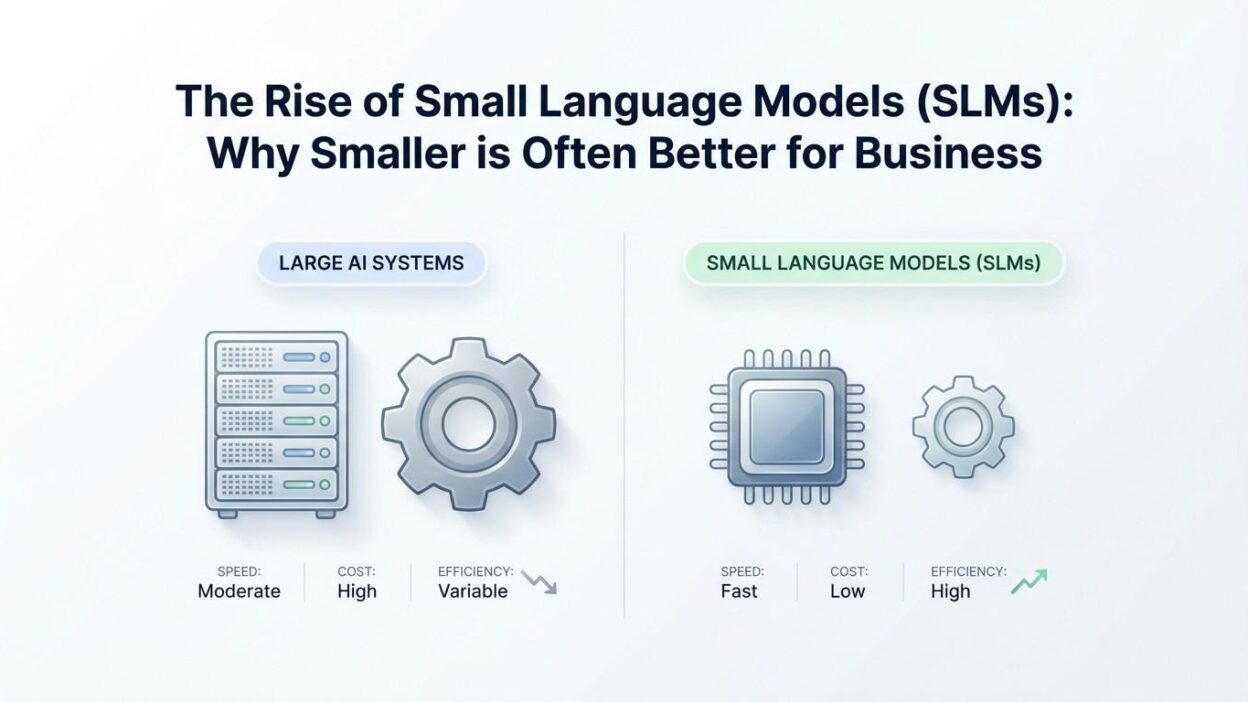

That assumption is cracking. Small language models (SLMs) are proving that parameter count is not the most important variable in enterprise AI deployment. Task fit, domain accuracy, deployment speed, infrastructure cost, and data control matter far more for real business outcomes.

SLMs are compact, focused, and fast. They run on standard hardware. They deploy on-premises. They fine-tune on domain-specific data in days rather than months. They answer queries in milliseconds. They cost a fraction of frontier model API fees. For the majority of practical business tasks, SLMs do not just compete with large models. They win.

This blog digs into the full picture. We explore what SLMs are, how they outperform frontier models on real business tasks, which models lead the market, where ROI shows up fastest, and how your team can start building with SLMs today. If you manage AI strategy, product development, or technology operations, this guide is built for you.

Defining Small Language Models: What They Are and What Sets Them Apart

A small language model is a neural network language model designed for efficiency at the cost of breadth. SLMs typically contain between one billion and thirteen billion parameters. The most widely deployed business SLMs sit in the three-billion to seven-billion parameter range. Compare that to frontier models like GPT-4, Claude, and Gemini, whose parameter counts are not publicly disclosed but are widely estimated at hundreds of billions or more.

Small language models earn their name from what they give up relative to the giants. They do not know everything. They do not excel at every task. They have narrower knowledge bases and more limited reasoning breadth. What they gain in return is significant. Speed, cost efficiency, hardware accessibility, and domain focus all improve dramatically as model size shrinks.

The distinction between SLMs and traditional machine learning models matters. SLMs are still language models. They generate text, understand context, follow instructions, and reason through problems. They simply do so within tighter parameter budgets. The engineering challenge behind a high-quality SLM is, in some respects, greater than that of training a massive model. Getting strong performance from fewer parameters demands better training data, better architecture decisions, and more careful optimization.

Microsoft Phi-3 Mini at 3.8 billion parameters scores above many 30-billion-parameter models on standard benchmarks. Google Gemma 2 at 9 billion parameters competes with models twice its size. Apple’s on-device models run entirely on iPhone hardware with no cloud dependency. These achievements signal that SLMs have moved well past compromise territory into genuine competitiveness.

Why the Business Case for SLMs Is Stronger Than Ever

Enterprise AI leaders now face pressure from multiple directions. Finance teams demand cost control on AI API budgets that grew unchecked during experimentation phases. Compliance teams push back on sensitive data leaving corporate infrastructure. Product teams demand AI features with latency profiles that cloud APIs cannot reliably deliver. Operations teams need AI running in locations without reliable internet connectivity.

SLMs address every one of these pressures simultaneously. Few other single architectural decisions deliver cost reduction, compliance improvement, latency gains, and deployment flexibility in one package.

The Economics of SLM Deployment

Cloud API pricing for frontier models charges per token processed. Input tokens and output tokens both accumulate cost. A business application processing one million queries monthly, each with a 500-token prompt and 300-token response, consumes 800 million tokens per month. At GPT-4 class pricing, that volume costs between $40,000 and $120,000 monthly depending on the specific model tier.

A self-hosted seven-billion-parameter SLM on a single GPU server handles equivalent volume for infrastructure costs of $2,000 to $6,000 monthly. Initial fine-tuning investment adds a one-time cost of $10,000 to $30,000. Payback occurs within one to two months. Savings compound indefinitely at scale. The financial argument for SLMs at production volume is overwhelming.
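The arithmetic in this section can be checked in a few lines. This is a minimal sketch: the function names are illustrative, and the dollar figures are the ranges quoted above, not measured prices.

```python
def monthly_tokens(queries, prompt_tokens, response_tokens):
    """Total tokens processed per month across all queries."""
    return queries * (prompt_tokens + response_tokens)


def payback_months(one_time_cost, monthly_api_cost, monthly_infra_cost):
    """Months until one-time fine-tuning spend is recovered by monthly savings."""
    monthly_saving = monthly_api_cost - monthly_infra_cost
    if monthly_saving <= 0:
        raise ValueError("self-hosting does not save money at these figures")
    return one_time_cost / monthly_saving


tokens = monthly_tokens(1_000_000, 500, 300)
# Least favourable corner of the quoted ranges: cheapest API, priciest hosting and tuning.
worst_case = payback_months(30_000, 40_000, 6_000)
# Most favourable corner: priciest API, cheapest hosting and tuning.
best_case = payback_months(10_000, 120_000, 2_000)

print(f"{tokens:,} tokens per month")
print(f"payback between {best_case:.2f} and {worst_case:.2f} months")
```

Taking the quoted ranges at face value, even the least favourable corner recovers the fine-tuning spend inside the first month. Swap in your own volumes and prices before relying on the result.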

Cost advantages grow stronger as usage increases. API costs scale linearly with query volume. Infrastructure costs grow only when hardware capacity requires expansion. A business doubling its AI query volume doubles its API spend. The same business running an SLM on-premises might add a second GPU at a fraction of that cost increase. Scale economics favor SLMs decisively.
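The difference between linear and step-wise cost growth is easy to see with a toy model. The per-query price, per-server capacity, and per-server cost below are hypothetical inputs chosen for illustration, not benchmarks.

```python
import math


def api_cost(queries, cost_per_query):
    """API spend grows in direct proportion to query volume."""
    return queries * cost_per_query


def self_hosted_cost(queries, queries_per_server, cost_per_server):
    """Self-hosted spend grows in steps: one more server each time capacity is exceeded."""
    servers = max(1, math.ceil(queries / queries_per_server))
    return servers * cost_per_server


# Hypothetical inputs: $0.04 per API query, 1.5M queries per server per month, $4,000 per server.
for volume in (1_000_000, 2_000_000, 4_000_000):
    print(volume, api_cost(volume, 0.04), self_hosted_cost(volume, 1_500_000, 4_000))
```

Doubling volume doubles the API column every time, while the self-hosted column moves only when another server is needed.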

Latency Advantages That Change User Experience

User adoption of AI features lives and dies on response speed. Research shows that users abandon interactive AI features when responses take more than two seconds to begin. Enterprise applications operating AI-powered search, coding assistance, or document analysis need responses in the 200 to 800 millisecond range.

Cloud API calls introduce network round-trip latency before the model even begins generating. Token generation speed at large model scale adds additional delay. First-token latency for frontier models through public APIs often runs two to five seconds under normal conditions and longer under peak load.

SLMs remove the public-internet round trip when deployed on-premises or on-device. Smaller models generate tokens faster than larger ones on equivalent hardware. First-token latency drops to 100 to 400 milliseconds on modest GPU hardware. This speed difference changes the UX category from tolerable to delightful. Delightful AI tools get used. Tolerable ones get abandoned.
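First-token latency is worth measuring on your own stack rather than taking on faith. The sketch below times any function that streams tokens. `fake_stream` is a stand-in that sleeps to imitate model start-up, so replace it with a wrapper around whatever streaming client you actually use.

```python
import time
from typing import Callable, Iterable, Tuple


def first_token_latency(stream_fn: Callable[[str], Iterable[str]], prompt: str) -> Tuple[float, str]:
    """Return (seconds until the first token arrives, that token)."""
    start = time.perf_counter()
    for token in stream_fn(prompt):
        return time.perf_counter() - start, token
    raise RuntimeError("stream produced no tokens")


def fake_stream(prompt: str):
    """Stand-in for a real streaming client; sleeps to imitate model start-up delay."""
    time.sleep(0.05)
    for word in ("Hello", "from", "the", "model"):
        yield word


latency, token = first_token_latency(fake_stream, "ping")
print(f"first token {token!r} after {latency * 1000:.0f} ms")
```

Run the same measurement against a cloud endpoint and a local model under realistic load to see which side of the two-second threshold each lands on.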

Data Sovereignty and Compliance Control

Regulated industries face genuine legal exposure when sending sensitive data to external AI APIs. Healthcare organizations sending patient records, diagnoses, or treatment histories to third-party API providers face HIPAA compliance risk. Financial institutions sending transaction data, client portfolios, or internal analysis to cloud AI services face regulatory scrutiny across multiple jurisdictions.

Data breaches involving AI API calls create complex liability questions. Who is responsible when sensitive data sent to an AI API gets exposed? Legal exposure compounds operational risk. Compliance officers increasingly push for AI deployment architectures that keep sensitive data within controlled boundaries.

SLMs can run entirely on company infrastructure. No data crosses the perimeter. Compliance teams get clean data lineage. Security audits become more straightforward. The legal exposure tied to third-party data transfer largely disappears. The privacy architecture is simple because no external dependency exists. Many enterprises in regulated industries find that this benefit alone justifies SLM investment.

Top Small Language Models Making an Impact in Business

The SLM market expanded rapidly between 2023 and 2025. Several models stand out for business deployment based on performance, licensing terms, ecosystem support, and deployment flexibility.

Microsoft Phi-3 and Phi-4 Family

Microsoft Research built the Phi series around one core insight. Training data quality matters more than training data quantity. The Phi-3 Mini model at 3.8 billion parameters achieves benchmark scores that embarrass models three to five times its size. Phi-3 Small at 7 billion parameters and Phi-3 Medium at 14 billion parameters extend the performance profile to more demanding tasks.

Businesses deploying SLMs on Azure infrastructure gain native integration benefits with the Phi family. Azure AI Studio provides fine-tuning tooling, deployment management, and monitoring directly for Phi models. Phi-4 continued this trajectory with further performance improvements at comparable sizes. For enterprises seeking a strong out-of-the-box performance baseline that fine-tunes efficiently on domain data, Phi models are a strong starting choice.

Google Gemma 2

Google’s Gemma 2 family spans 2 billion, 9 billion, and 27 billion parameter variants. Gemma 2 at 9 billion parameters competes with substantially larger models on standard language understanding and reasoning benchmarks. The open-weight license allows commercial deployment without per-query API fees, which changes the cost structure fundamentally for high-volume business applications.

Gemma 2 models handle multiple languages more capably than many competitors at equivalent sizes. For businesses with global operations requiring AI support across several languages, Gemma 2 offers competitive multilingual performance without moving to a much larger model. Google Cloud integration provides additional tooling for teams in the Google Cloud ecosystem.

Meta Llama 3.2 Small Variants

Meta’s Llama 3.2 release introduced one-billion and three-billion parameter models specifically optimized for edge and on-device deployment. These models bring the quality characteristics of the Llama 3 architecture to hardware environments that cannot accommodate larger models. Mobile devices, embedded industrial systems, and edge computing nodes all become viable AI deployment targets with Llama 3.2 small variants.

The Llama ecosystem offers the broadest community support of any open-weight model family. Fine-tuning tools, quantization libraries, deployment frameworks, and pre-built task adapters are available from dozens of projects built around the Llama architecture. Business teams starting with Llama benefit from this ecosystem depth at every stage of development.

Mistral 7B and Mixtral Variants

Mistral AI’s 7-billion-parameter base model set a new efficiency standard when it launched. Mistral 7B outperformed Llama 2 13B on most benchmarks at roughly half the parameter count. The sliding window attention mechanism enabled longer context handling than competing models at the same size. Mixtral variants extended capability using sparse mixture-of-experts architecture.

Business deployments benefit from Mistral’s permissive open licensing and strong instruction-following performance after fine-tuning. Customer service, document analysis, and internal Q&A applications built on Mistral 7B consistently deliver production-quality results at modest infrastructure costs.

High-Impact Business Use Cases for Small Language Models

Understanding where SLMs deliver maximum value requires looking at the characteristics of the tasks they handle best. High-volume, domain-specific, latency-sensitive, and privacy-constrained tasks all align with SLM strengths. Here is where business teams consistently find the strongest ROI.

Specialized Customer Support Systems

Generic chatbots frustrate customers. They answer broadly but poorly on specific product questions. Fine-tuned SLMs answer narrowly but accurately on everything within their domain. A customer support SLM trained on three years of support tickets, product documentation, and resolution records knows your products at a depth no general model matches.

Resolution rates improve dramatically. Escalation rates to human agents drop. Customer satisfaction scores rise. Cost per interaction falls by 80% to 90% compared to frontier API alternatives. Speed improves to near-real-time response. SLMs deployed in customer support consistently rank among the highest-ROI AI investments enterprises make.

Legal and Compliance Document Analysis

Legal teams review enormous document volumes daily. Contracts, regulatory filings, compliance reports, and litigation documents all require careful analysis. Manual review is slow and expensive. Frontier model APIs introduce data sovereignty concerns for privileged client information.

Fine-tuned SLMs running on-premises handle legal document analysis with accuracy that matches or exceeds general frontier models on domain-specific tasks. Clause extraction, risk flagging, obligation identification, and regulatory alignment checking all perform at production quality after fine-tuning on relevant legal corpora. Privacy concerns disappear because the model runs inside the firm’s own infrastructure.

Healthcare Clinical Documentation

Clinical documentation consumes enormous physician time. Doctors spend two hours on documentation for every one hour of patient care according to multiple studies. AI that accurately captures clinical notes, codes diagnoses, and populates electronic health records from physician dictation saves significant time and reduces burnout.

SLMs fine-tuned on clinical notes, medical coding references, and specialty-specific terminology perform this task with accuracy that general models struggle to match. More importantly, they do so on-premises within HIPAA-compliant infrastructure. No patient data leaves the healthcare system’s controlled environment.

Software Development Acceleration

Development teams deploy AI coding assistants that suggest completions, generate functions, write tests, and review code quality. The most valuable coding assistants know the specific codebase, internal libraries, and architectural patterns of the team they serve. General models suggest generic solutions. Codebase-aware SLMs suggest ready-to-use contributions.

A fine-tuned SLM trained on a company’s entire codebase, internal API documentation, and coding standards produces suggestions that fit actual project context. Pull request acceptance rates for AI-generated code increase dramatically when suggestions reference real internal APIs and follow actual team conventions. SLMs give development teams genuine productivity gains rather than plausible-looking but impractical code snippets.

Supply Chain and Operations Intelligence

Manufacturing, logistics, and retail operations generate massive volumes of structured and unstructured data. Maintenance logs, supplier communications, shipping records, inventory reports, and incident notes all contain intelligence that manual analysis cannot process at scale.

SLMs deployed at the edge of operational environments process this data in real time without cloud connectivity dependencies. A plant floor assistant answers technician questions about maintenance procedures using on-device inference. A logistics hub processes natural language shipping exception queries locally. SLMs make AI accessible in operational environments that cloud-dependent systems simply cannot serve.

Building and Deploying SLMs: A Practical Framework

Deploying SLMs follows a repeatable framework. Teams that approach SLM deployment methodically achieve production quality faster and avoid costly rework.

Selecting the Right Base Model

Base model selection should match your task profile, hardware constraints, and language requirements. Start by characterizing your task. Is it classification, generation, extraction, or reasoning-heavy? Classification and extraction tasks perform well on smaller base models. Complex reasoning benefits from larger SLM variants. Match hardware constraints to model size before committing to a base model family.

Evaluate benchmark performance on tasks similar to your target application. Generic reasoning benchmarks give a starting point. Task-specific evaluations using representative samples from your actual use case give the real answer. Download top candidate models and run your own evaluation before committing to a fine-tuning investment.
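A task-specific comparison does not need heavy tooling. The sketch below scores several candidates on the same representative samples using exact-match accuracy. The candidates here are toy lambdas; in practice each would wrap the generate call of a downloaded model, and exact match is only one possible metric.

```python
from typing import Callable, Dict, List, Tuple

Example = Tuple[str, str]  # (input text, expected output)


def accuracy(model: Callable[[str], str], examples: List[Example]) -> float:
    """Share of examples where the model output matches the expected answer exactly."""
    hits = sum(1 for text, expected in examples if model(text).strip() == expected)
    return hits / len(examples)


def rank_candidates(candidates: Dict[str, Callable[[str], str]], examples: List[Example]):
    """Score every candidate on the same samples, best first."""
    scores = {name: accuracy(fn, examples) for name, fn in candidates.items()}
    return sorted(scores.items(), key=lambda item: item[1], reverse=True)


# Toy stand-ins; a real run would wrap each downloaded model's generate call.
samples = [("refund request", "billing"), ("app crashes", "technical"), ("reset password", "account")]
candidates = {
    "candidate_a": lambda text: "billing",
    "candidate_b": lambda text: {"refund request": "billing", "app crashes": "technical"}.get(text, "other"),
}
print(rank_candidates(candidates, samples))
```

Keeping the sample set fixed across candidates is the point: the ranking then reflects the models, not differences in test data.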

Fine-Tuning Efficiently with LoRA

Low-Rank Adaptation is the standard technique for efficient SLM fine-tuning. LoRA adds small trainable adapter layers to the frozen base model. Only adapters train, not the full model. Memory requirements drop dramatically. A seven-billion-parameter model fine-tunes on a single consumer GPU with 24 gigabytes of VRAM using LoRA. Training time for a well-prepared dataset of 10,000 examples runs 6 to 16 hours on a single GPU.
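To see why memory drops so sharply, count the trainable parameters. LoRA represents the update to a weight matrix of shape d_out by d_in as the product of two thin matrices of rank r, so only r × (d_in + d_out) values train. The layer size and rank below are hypothetical examples.

```python
def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """LoRA models the update to a d_out x d_in weight as B (d_out x r) @ A (r x d_in)."""
    return rank * (d_in + d_out)


def full_params(d_in: int, d_out: int) -> int:
    """Parameters in the frozen dense weight matrix itself."""
    return d_in * d_out


# Hypothetical layer: one 4096 x 4096 projection matrix adapted at rank 8.
d, r = 4096, 8
adapter = lora_trainable_params(d, d, r)
dense = full_params(d, d)
print(f"adapter: {adapter:,} params, dense: {dense:,} params, ratio: {adapter / dense:.4%}")
```

For this layer the adapter trains 65,536 values against 16,777,216 frozen ones, which is 1/256 of the matrix. Gradients and optimizer state are only kept for the adapter, and that is where most of the memory saving comes from.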

QLoRA extends LoRA by quantizing the base model to four-bit precision before adapter training. Memory requirements fall further. A 13-billion-parameter model fine-tunes comfortably on hardware that would otherwise require a much smaller base model. Quality impact on most business task types remains minimal. QLoRA is the practical choice for teams with constrained hardware budgets.
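A rough weight-memory estimate shows what four-bit quantization buys. This sketch counts model weights only. It deliberately ignores activations, adapter weights, optimizer state, and quantization overhead, so treat the results as lower bounds.

```python
def weight_memory_gb(params_billions: float, bits_per_weight: int) -> float:
    """Memory for model weights alone, in gigabytes (1 GB = 1e9 bytes)."""
    bytes_total = params_billions * 1e9 * bits_per_weight / 8
    return bytes_total / 1e9


for size in (7, 13):
    fp16 = weight_memory_gb(size, 16)
    int4 = weight_memory_gb(size, 4)
    print(f"{size}B model: {fp16:.1f} GB at 16-bit, {int4:.1f} GB at 4-bit")
```

At 16-bit precision the weights of a 13-billion-parameter model alone exceed a 24-gigabyte card, while at four-bit they take roughly a quarter of that space and leave room for training.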

Data Preparation Is the Critical Success Factor

Fine-tuning quality depends on data quality above all else. A small dataset of 3,000 to 5,000 high-quality, well-formatted examples outperforms a dataset of 50,000 poorly formatted, inconsistent examples. Invest heavily in training data curation. Every hour spent improving training data quality pays back in model performance.

Format training data as instruction-response pairs. Each example pairs a clear task instruction with a high-quality target response that represents exactly what you want the model to produce in production. Include diverse examples covering the full range of inputs the model will encounter. Represent edge cases explicitly in training data rather than hoping the model handles them intuitively.
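One common way to store instruction-response pairs is JSON Lines, with one example per line. The field names `instruction` and `response` below are an assumption for illustration; use whatever schema your fine-tuning tool expects.

```python
import json


def to_training_record(instruction: str, response: str) -> dict:
    """Build one instruction-response pair, rejecting empty fields."""
    instruction, response = instruction.strip(), response.strip()
    if not instruction or not response:
        raise ValueError("instruction and response must both be non-empty")
    return {"instruction": instruction, "response": response}


def to_jsonl(pairs) -> str:
    """Serialize pairs as JSON Lines: one JSON object per line."""
    return "\n".join(json.dumps(to_training_record(i, r), ensure_ascii=False) for i, r in pairs)


pairs = [
    ("Classify this ticket: 'I was charged twice.'", "billing"),
    ("Classify this ticket: ''", "unknown"),  # edge case: empty ticket body
]
print(to_jsonl(pairs))
```

The second pair is an explicit edge case. It teaches the model what to return for an empty ticket instead of leaving that behavior to chance.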

Evaluation, Monitoring, and Iteration

Build a held-out evaluation set before any fine-tuning begins. Use examples the model never sees during training to measure actual generalization performance. Evaluate final model outputs against this set for accuracy, format compliance, hallucination rate, and task completion. These numbers reveal the true production-readiness of the fine-tuned model.
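A held-out split and two of the metrics named above can be sketched with the standard library. The format check here assumes a task whose output must be valid JSON, which is an illustrative choice. Hallucination rate and task completion usually need task-specific or human judgment and are left out.

```python
import json
import random


def split_holdout(examples, holdout_fraction=0.2, seed=0):
    """Shuffle deterministically, then reserve a fraction the model never trains on."""
    shuffled = list(examples)
    random.Random(seed).shuffle(shuffled)
    cut = int(len(shuffled) * holdout_fraction)
    return shuffled[cut:], shuffled[:cut]  # (train, holdout)


def is_valid_json(text):
    """Format-compliance check for a task whose output must be JSON."""
    try:
        json.loads(text)
        return True
    except json.JSONDecodeError:
        return False


def evaluate(outputs, expected):
    """Exact-match accuracy and JSON format compliance over paired outputs."""
    n = len(expected)
    return {
        "accuracy": sum(o == e for o, e in zip(outputs, expected)) / n,
        "format_compliance": sum(is_valid_json(o) for o in outputs) / n,
    }


train, holdout = split_holdout(range(100))
print(len(train), len(holdout))
print(evaluate(['{"label": "a"}', "not json"], ['{"label": "a"}', '{"label": "b"}']))
```

Fixing the seed keeps the held-out set identical across fine-tuning runs, so scores from different runs stay comparable.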

Deploy with monitoring instrumentation from day one. Log every input, output, confidence signal, and user feedback event. Analyze logged data weekly in early deployment phases. Identify failure patterns early. Build additional training examples targeting those failure patterns. Re-fine-tune quarterly as new training data accumulates. SLMs improve continuously when teams treat deployment as the beginning of an ongoing optimization cycle rather than a finish line.
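Monitoring can start as an append-only log plus a weekly tally. In this minimal sketch the record fields and feedback labels are hypothetical, and a production system would likely send the same records to a proper logging pipeline.

```python
import json
import time
from collections import Counter


def log_record(prompt, output, feedback=None, confidence=None):
    """One monitoring event; field names here are illustrative."""
    return {"ts": time.time(), "prompt": prompt, "output": output,
            "confidence": confidence, "feedback": feedback}


def append_log(path, record):
    """Append the record as one JSON line so the file can be tailed and replayed."""
    with open(path, "a", encoding="utf-8") as handle:
        handle.write(json.dumps(record) + "\n")


def feedback_summary(records):
    """Count feedback labels to surface failure patterns during weekly review."""
    return Counter(r["feedback"] for r in records if r["feedback"] is not None)


records = [
    log_record("Where is my order?", "It ships Friday.", feedback="helpful"),
    log_record("Cancel my plan", "I cannot help with that.", feedback="unhelpful"),
    log_record("Reset password", "Use the reset link.", feedback="helpful"),
]
print(feedback_summary(records))
```

Records flagged as unhelpful are natural candidates for the next round of training examples.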

Measuring ROI from Small Language Model Deployments

Business leaders need ROI clarity before approving SLM investments. The value case for SLMs spans four distinct categories. Quantify all four to build a complete investment case.

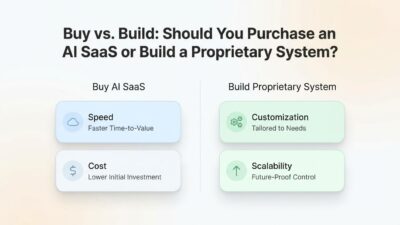

Direct cost savings compare frontier API spend against SLM infrastructure and operational costs. Calculate your current or projected API token consumption. Price it at frontier rates. Compare to SLM infrastructure costs including hardware amortization, maintenance, and engineering support. The difference represents direct cost savings. For high-volume applications, this number alone justifies investment.

Productivity recovery measures the value of human time reclaimed from tasks the SLM automates or accelerates. Identify target tasks. Measure current human time per task completion. Estimate post-SLM time reduction. Multiply by average fully-loaded hourly labor cost. Sum across all impacted roles. This calculation typically reveals larger value than direct cost savings for knowledge worker applications.
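The productivity calculation described above translates directly into code. Every figure in this sketch is hypothetical and exists only to show the shape of the calculation.

```python
from dataclasses import dataclass


@dataclass
class RoleImpact:
    role: str
    tasks_per_month: int
    minutes_before: float
    minutes_after: float
    hourly_cost: float  # fully loaded


def monthly_value(impact: RoleImpact) -> float:
    """Hours reclaimed per month for one role, priced at its fully loaded rate."""
    minutes_saved = (impact.minutes_before - impact.minutes_after) * impact.tasks_per_month
    return minutes_saved / 60 * impact.hourly_cost


# Hypothetical figures for illustration only.
impacts = [
    RoleImpact("support agent", 2_000, 6.0, 3.0, 40.0),
    RoleImpact("contract analyst", 300, 30.0, 20.0, 90.0),
]
total = sum(monthly_value(i) for i in impacts)
print(f"productivity recovery: ${total:,.0f} per month")
```

With these inputs the support role contributes $4,000 and the analyst role $4,500 per month. Measure the before and after times from real task logs where possible rather than estimating them.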

Quality improvement value captures the financial impact of better accuracy, lower error rates, and faster response times. Reduced escalation rates in customer support carry cost savings. Lower contract review error rates reduce legal exposure. Faster clinical documentation reduces physician overtime costs. Assign financial values to quality improvements by connecting them to measurable business outcomes.

Risk reduction value accounts for compliance benefits, data breach exposure reduction, and regulatory fine avoidance from keeping sensitive data on-premises. Work with legal and compliance teams to quantify risk reduction. Include this in total ROI calculations even though it requires estimation rather than direct measurement.

FAQs:

How do small language models compare to large language models for accuracy?

On general knowledge and broad reasoning tasks, large language models maintain clear accuracy advantages. On domain-specific tasks after fine-tuning, SLMs can match or outperform general frontier models. The critical factor is task specificity. A 7-billion-parameter model fine-tuned on 10,000 medical coding examples can outperform a 70-billion-parameter general model on medical coding tasks. Domain fit and fine-tuning quality matter more than raw parameter count for most practical business applications.

What infrastructure does a business need to run an SLM?

Entry-level SLM deployment for development and testing runs on a workstation with a modern consumer GPU carrying 16 to 24 gigabytes of VRAM. Production deployments handling concurrent user requests need dedicated GPU servers with 40 to 80 gigabytes of GPU memory for comfortable headroom. Cloud GPU instances from major providers work well for teams not ready for on-premises hardware investment. Organizations prioritizing data sovereignty invest in on-premises GPU hardware where the infrastructure cost justifies itself within one to three months of API cost savings.

How long does fine-tuning an SLM for a business task take?

Data preparation is the longest phase and often takes three to six weeks for organizations starting with raw internal data. Actual fine-tuning runs on well-prepared datasets of 5,000 to 20,000 examples complete in four to sixteen hours on a single modern GPU. Evaluation, iteration, and production hardening add two to four additional weeks. Total project timelines from kickoff to production deployment typically run six to twelve weeks for teams new to SLM fine-tuning and four to eight weeks for experienced teams.

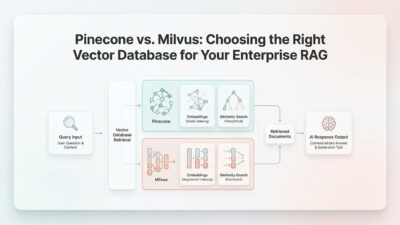

Can SLMs handle tasks requiring real-time information?

Base SLMs carry knowledge only up to their training data cutoff. Retrieval-augmented generation (RAG) addresses this limitation effectively. RAG systems connect the SLM to live data sources at inference time. The model receives retrieved current information as context and generates responses grounded in real-time facts. SLMs deployed with RAG architectures handle time-sensitive queries accurately without retraining. This combination covers the majority of business use cases that require current information access.
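The retrieval step can be illustrated with a toy example. This sketch ranks documents by simple word overlap, which is a stand-in for the embedding search a real RAG system would use. The documents and prompt wording are invented for illustration.

```python
import re


def tokenize(text):
    """Lowercase word set; a real system would use embeddings instead."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query, documents, top_k=2):
    """Rank documents by word overlap with the query and keep the best top_k."""
    query_words = tokenize(query)
    ranked = sorted(documents, key=lambda doc: len(query_words & tokenize(doc)), reverse=True)
    return ranked[:top_k]


def build_prompt(query, documents, top_k=2):
    """Place retrieved passages ahead of the question so the model answers from them."""
    context = "\n".join(f"- {doc}" for doc in retrieve(query, documents, top_k))
    return f"Answer using only the context below.\n\nContext:\n{context}\n\nQuestion: {query}"


docs = [
    "Warehouse B inventory was updated at 09:00 today: 120 units of part X.",
    "The cafeteria menu changes every Monday.",
    "Part X lead time from the supplier is currently 14 days.",
]
print(build_prompt("How many units of part X are in inventory?", docs))
```

The resulting prompt carries the two inventory-related passages and drops the unrelated one, so the model can answer from current data without retraining.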

What are the biggest mistakes businesses make when deploying SLMs?

The most common mistake is under-investing in training data quality while over-investing in model selection. Model choice matters less than data quality. Teams that choose a slightly suboptimal base model but prepare excellent training data consistently outperform teams that choose the ideal base model but use mediocre training data. The second most common mistake is skipping rigorous evaluation before production deployment. Models that perform well on spot-checked examples often fail systematically on edge cases that evaluation datasets surface. The third mistake is treating deployment as a completion event rather than the beginning of a continuous improvement cycle.

Are SLMs suitable for multilingual business applications?

Multilingual capability varies across SLM architectures. Google Gemma 2 and certain Llama variants handle multiple European and Asian languages well in base form. Fine-tuning on multilingual domain data improves performance further on specific language pairs. For businesses needing strong accuracy in one or two non-English languages alongside English, domain-tuned SLMs deliver competitive results. Applications requiring high accuracy across ten or more languages simultaneously may still benefit from larger multilingual foundation models for the cross-lingual reasoning that such breadth demands.

Conclusion

Scale captured the AI industry’s imagination for years. Bigger models attracted bigger headlines, bigger investment rounds, and bigger enterprise contracts. Scale delivered genuine capability advances. It also delivered costs, latency, privacy risks, and deployment complexity that most businesses cannot practically manage.

Small language models answer the practical question that scale never solved. How does an organization embed AI capability into daily operations reliably, affordably, and securely? The answer sits in domain-focused models that run on accessible hardware, deliver millisecond responses, stay within corporate infrastructure, and improve continuously through ongoing fine-tuning.

The evidence from production deployments is consistent. Customer support accuracy improves. Documentation processing speeds multiply. Legal review capacity scales without proportional headcount growth. Development teams ship AI-assisted code faster. Healthcare workflows shed administrative burden. Operations teams access intelligence at the edge where cloud APIs never reach.

None of this requires abandoning frontier models entirely. Smart enterprise AI strategy uses large models where breadth genuinely matters and SLMs where domain precision, speed, cost, and data control define success. Most actual business workloads belong in the second category.

The organizations building SLM capability now are developing a compounding advantage. Each fine-tuning cycle improves model quality. Each improvement increases user adoption. Each adoption data point feeds the next improvement cycle. The gap between SLM-native enterprises and those still running every query through expensive frontier APIs widens every quarter.

Smaller is not a compromise. For most business applications, smaller is the right choice. SLMs are not the future of enterprise AI. They are the present. The competitive window for early adoption advantage is open right now. The organizations walking through it first will set the operational standard for everyone who follows.