Introduction

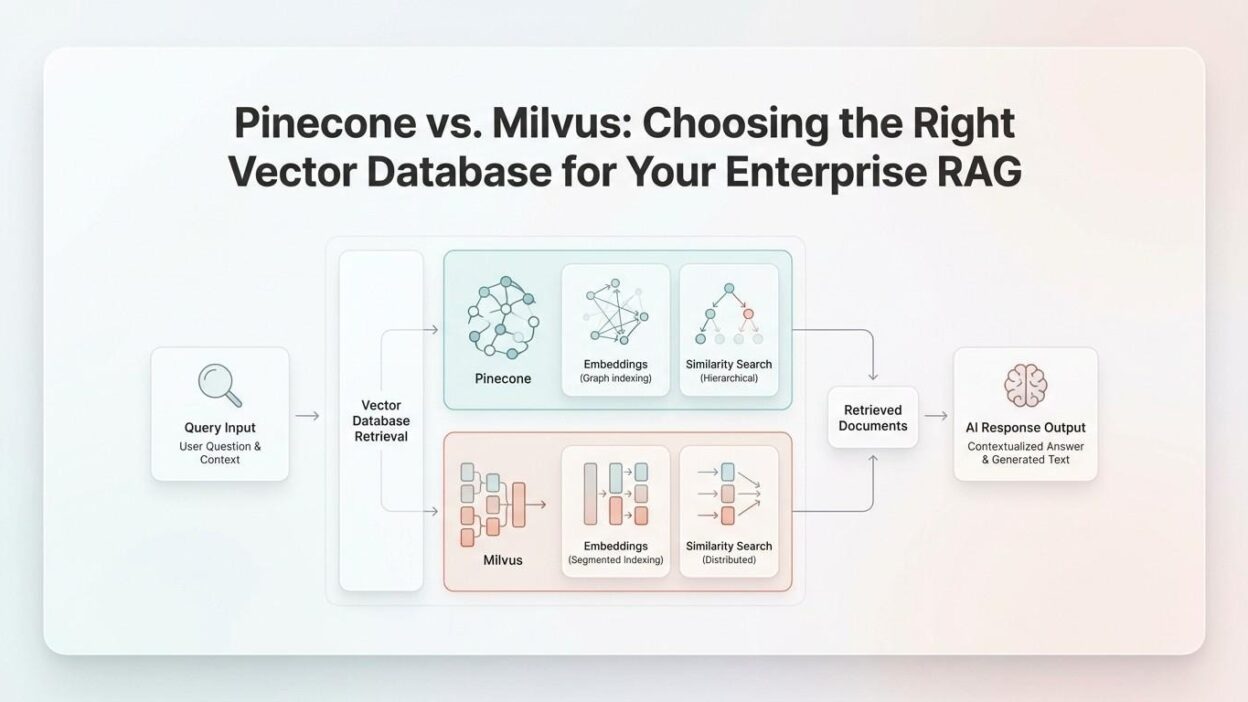

TL;DR Every enterprise AI team faces a critical infrastructure decision. The choice of a vector database shapes the performance of every RAG pipeline. The Pinecone vs Milvus vector database debate sits at the center of that decision.

Both tools handle high-dimensional vector search at scale. Both serve production-grade retrieval pipelines. Still, they differ in architecture, cost structure, and operational control.

This blog breaks down each platform honestly. It covers architecture, performance, pricing, and real enterprise use cases. By the end, you will know exactly which tool fits your stack.

What Is a Vector Database and Why Does It Matter for RAG?

A vector database stores numerical representations of data called embeddings. These embeddings capture semantic meaning. A similarity search over embeddings retrieves contextually relevant results.
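To make similarity search concrete, here is a minimal sketch in plain Python. It ranks documents by cosine similarity, one common metric. The three-dimensional vectors and document names are made up for illustration; real embedding models emit hundreds or thousands of dimensions, and production databases use approximate indexes rather than this brute-force scan.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)

def top_k(query, corpus, k=2):
    """Return the k (doc_id, score) pairs most similar to the query."""
    scored = [(doc_id, cosine_similarity(query, vec)) for doc_id, vec in corpus.items()]
    return sorted(scored, key=lambda pair: pair[1], reverse=True)[:k]

# Hypothetical toy embeddings, not output from a real model.
corpus = {
    "refund-policy": [0.9, 0.1, 0.0],
    "shipping-times": [0.1, 0.9, 0.1],
    "return-window": [0.8, 0.2, 0.1],
}
results = top_k([1.0, 0.0, 0.0], corpus, k=2)
print(results)
```

The two documents closest in direction to the query come back first. A vector database does the same job over millions or billions of vectors.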

RAG stands for Retrieval-Augmented Generation. It grounds large language models with real-world, up-to-date context. The application retrieves relevant chunks from a vector store and passes them to the LLM before it generates a response.
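The retrieve-then-generate flow can be sketched in a few lines. This is an illustration only: `retrieve` and `generate` are hypothetical callables standing in for a vector store lookup and an LLM call, and the prompt wording is an assumption, not a recommended template.

```python
def build_prompt(question, retrieved_chunks):
    """Assemble an LLM prompt that grounds the answer in retrieved text."""
    context = "\n".join(f"[{i + 1}] {chunk}" for i, chunk in enumerate(retrieved_chunks))
    return (
        "Answer using only the context below.\n\n"
        f"Context:\n{context}\n\n"
        f"Question: {question}\nAnswer:"
    )

def answer(question, retrieve, generate, k=3):
    """Retrieve-then-generate; both steps are supplied by the caller."""
    chunks = retrieve(question, k)
    return generate(build_prompt(question, chunks))

# Stand-ins for a vector store lookup and an LLM call (both hypothetical).
def fake_retrieve(question, k):
    return ["Refunds are issued within 14 days."][:k]

def fake_generate(prompt):
    return f"(model output for a {len(prompt)}-character prompt)"

reply = answer("How long do refunds take?", fake_retrieve, fake_generate)
print(reply)
```

Swapping `fake_retrieve` for a real Pinecone or Milvus query is the only part of this flow that the database choice affects.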

Without a powerful vector database, RAG pipelines slow down. Retrieval quality drops. Hallucinations increase. The database becomes the backbone of the entire system.

Enterprises deal with billions of vectors. They need low latency, high throughput, and strong filtering. That is where the choice between Pinecone and Milvus starts to matter.

Choosing wrong costs time and money. Choosing right delivers fast, accurate, and scalable AI retrieval.

Core Requirements for Enterprise Vector Search

Enterprise teams need more than just fast search. They need metadata filtering at scale. They need multi-tenancy support. They need consistent uptime under heavy load.

Security and access control are non-negotiable. Data residency requirements often limit cloud options. Integration with existing ML stacks also plays a role.

Both Pinecone and Milvus address these needs. They do it in very different ways.

What Is Pinecone?

Pinecone is a fully managed, cloud-native vector database. It was built from the ground up for production AI workloads. Users do not manage infrastructure. Everything runs on Pinecone’s servers.

The platform launched in 2021. It quickly became popular for its simplicity and speed. Developers can spin up a vector index in minutes. No DevOps knowledge is required.

Pinecone supports approximate nearest neighbor (ANN) search. It uses its own proprietary indexing under the hood. Users interact through a clean REST API or Python SDK.

Namespaces allow logical data separation within a single index. This supports basic multi-tenancy. Metadata filtering lets users narrow results by custom attributes.
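Here is a rough sketch of how namespaces and metadata filters combine in one query. It assumes `index` behaves like an `Index` object from the official Pinecone Python SDK. The tenant id and the `category` metadata field are hypothetical names chosen for this example.

```python
def query_tenant(index, tenant_id, query_vector, category, top_k=5):
    """Search one tenant's namespace, restricted to a metadata category.

    `index` is assumed to behave like a Pinecone `Index` object.
    """
    return index.query(
        vector=query_vector,
        top_k=top_k,
        namespace=tenant_id,                     # logical separation per tenant
        filter={"category": {"$eq": category}},  # hypothetical metadata field
        include_metadata=True,
    )
```

With the SDK installed, `index` would typically come from something like `Pinecone(api_key=...).Index("my-index")`. Passing the index in as an argument keeps the function easy to test with a stub.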

Pinecone Serverless introduced a usage-based pricing model in 2024. It bills for reads, writes, and storage rather than for provisioned pods. This lowered costs significantly for low-traffic workloads.

In the Pinecone vs Milvus vector database comparison, Pinecone wins on ease of use. It is the go-to for teams that want fast deployment without infrastructure overhead.

Pinecone Architecture Overview

Pinecone separates storage from compute. The control plane manages metadata. The data plane handles vector operations.

The Serverless tier stores vectors in object storage. It scales automatically with usage. The Pod-based tier offers dedicated resources and lower latency for high-throughput needs.

Pinecone does not expose the underlying index type. Users cannot configure HNSW or IVF parameters directly. This is intentional. It simplifies the experience.

What Is Milvus?

Milvus is an open-source vector database. Zilliz created it, open-sourced it in 2019, and later contributed it to the LF AI & Data Foundation, which is part of the Linux Foundation. It is now one of the most starred vector database projects on GitHub.

Milvus supports multiple index types. HNSW, IVF_FLAT, IVF_SQ8, and DiskANN are all available. Users choose the index that fits their accuracy and speed trade-off.
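As a sketch of what that choice looks like in practice, the helper below maps a rough priority to a Milvus-style index parameter dictionary. The priority labels, the mapping, and the numeric values are assumptions for illustration, not tuning advice. The parameter names (`M`, `efConstruction`, `nlist`) follow the Milvus index documentation.

```python
def index_params(priority, metric_type="COSINE"):
    """Map a rough priority to Milvus-style index parameters (illustrative values)."""
    presets = {
        "recall": {"index_type": "HNSW", "params": {"M": 16, "efConstruction": 200}},
        "balanced": {"index_type": "IVF_FLAT", "params": {"nlist": 1024}},
        "memory": {"index_type": "IVF_SQ8", "params": {"nlist": 1024}},
        "disk": {"index_type": "DISKANN", "params": {}},
    }
    if priority not in presets:
        raise ValueError(f"unknown priority: {priority!r}")
    return {"metric_type": metric_type, **presets[priority]}

print(index_params("recall"))
```

A dictionary of this shape is what PyMilvus expects when an index is created on a vector field. Start from the defaults in the Milvus docs and adjust after measuring recall and latency on your own data.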

The platform runs on-premise or on any cloud. Kubernetes deployment is fully supported. This gives enterprises full control over data placement and infrastructure costs.

Milvus supports hybrid search. It combines dense vector search with sparse keyword search. This is a major advantage for RAG pipelines that need both semantic and keyword relevance.
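One common way to merge dense and sparse results is Reciprocal Rank Fusion (RRF). PyMilvus ships an `RRFRanker` for this purpose. The snippet below is not Milvus code. It is a plain-Python sketch of the RRF idea, with made-up document ids and the conventional smoothing constant of 60.

```python
def reciprocal_rank_fusion(rankings, k=60):
    """Fuse several ranked lists of doc ids into one list, best first."""
    scores = {}
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            # Each list contributes 1 / (k + rank); higher ranks add more.
            scores[doc_id] = scores.get(doc_id, 0.0) + 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)

dense_hits = ["doc-a", "doc-b", "doc-c"]   # from semantic (dense) search
sparse_hits = ["doc-c", "doc-a", "doc-d"]  # from keyword (sparse) search
fused = reciprocal_rank_fusion([dense_hits, sparse_hits])
print(fused)
```

Documents that rank well in both lists rise to the top. That is why hybrid retrieval helps when a query mixes exact terms with broader intent.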

The Milvus Lite version runs locally for development. Milvus Standalone handles single-node deployments. Milvus Distributed scales to billions of vectors across multiple nodes.
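A filtered search looks roughly the same across all three deployment modes. The sketch below assumes `client` behaves like `pymilvus.MilvusClient`. For Milvus Lite, such a client is typically created from a local file path. The collection layout, including the `department` and `text` fields, is hypothetical.

```python
def search_docs(client, collection, query_vector, department, limit=5):
    """Vector search restricted by a scalar filter expression.

    `client` is assumed to behave like `pymilvus.MilvusClient`.
    """
    hits_per_query = client.search(
        collection_name=collection,
        data=[query_vector],                     # a batch with one query vector
        filter=f'department == "{department}"',  # hypothetical scalar field
        limit=limit,
        output_fields=["text"],                  # hypothetical text field
    )
    return hits_per_query[0]  # results for the single query vector
```

Because the client is passed in, the same function can be pointed at Milvus Lite during development and at a distributed cluster in production.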

In the Pinecone vs Milvus comparison, Milvus wins on flexibility and control. It is the top choice for teams that need custom configurations and self-hosted infrastructure.

Milvus Architecture Overview

Milvus uses a decoupled architecture. It separates storage, compute, and coordination layers. Each component scales independently.

The system relies on etcd for metadata management. MinIO or S3 handles object storage. Pulsar or Kafka manages message streaming between nodes.

This architecture adds complexity. It also adds resilience and horizontal scalability at enterprise scale.

Pinecone vs Milvus: Feature-by-Feature Comparison

Deployment and Infrastructure

Pinecone is fully managed. There is nothing to install or maintain. Zilliz Cloud offers a managed version of Milvus. However, open-source Milvus requires self-managed Kubernetes.

Enterprises with strict data residency needs often prefer Milvus. It runs inside their own cloud accounts or on-premise data centers. Pinecone's standard service runs on Pinecone-managed cloud infrastructure.

Teams without dedicated infrastructure engineers benefit from Pinecone. The managed service removes operational burden entirely.

Indexing and Search Capabilities

Milvus supports HNSW, IVF, DiskANN, SCANN, and GPU-accelerated indexes. Users tune recall, speed, and memory usage for each use case. Few managed services expose this level of control.
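Tuning only makes sense if recall is measured. A minimal sketch of recall@k follows. It compares the ids an approximate index returned against the exact neighbours from a brute-force search. The id lists are invented for the example.

```python
def recall_at_k(approx_ids, exact_ids, k):
    """Fraction of the true top-k neighbours that the ANN index returned."""
    truth = set(exact_ids[:k])
    found = truth.intersection(approx_ids[:k])
    return len(found) / len(truth)

exact = [7, 2, 9, 4, 1]   # ground truth from brute-force search
approx = [7, 9, 3, 2, 8]  # what an index tuned for speed returned
print(recall_at_k(approx, exact, k=5))
```

Here the index found three of the five true neighbours. Raising search-time parameters usually improves that ratio at the cost of latency.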

Pinecone uses its own managed index. Users do not control index parameters. This is simpler but limits optimization potential.

Milvus supports sparse-dense hybrid search natively. Pinecone also supports sparse vectors alongside dense ones. Milvus offers more options for combining and reranking the two result sets.

Scalability and Performance

Both platforms scale to billions of vectors. Pinecone scales by adding pods or using Serverless auto-scaling. Milvus scales by adding query nodes and data nodes independently.

With GPU-powered indexes, Milvus can reach sub-10ms query latency at billion scale. Pinecone's pod-based deployments can deliver low single-digit millisecond latency. Actual numbers depend on hardware, index configuration, and dataset, so benchmark with your own data.

For throughput-heavy workloads, both platforms perform well. Milvus allows granular tuning to push performance further.
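Published latency numbers rarely match a specific workload, so it helps to measure. The harness below is a small sketch using only the standard library. `fake_search` is a placeholder. In practice it would wrap a real Pinecone or Milvus query call.

```python
import statistics
import time

def measure_latency(search_fn, queries):
    """Time each call to search_fn and return p50/p95 latency in milliseconds."""
    samples = []
    for query in queries:
        start = time.perf_counter()
        search_fn(query)
        samples.append((time.perf_counter() - start) * 1000.0)
    cuts = statistics.quantiles(samples, n=100)  # 99 percentile cut points
    return {"p50_ms": statistics.median(samples), "p95_ms": cuts[94]}

# Placeholder for a real client call (hypothetical workload).
def fake_search(query):
    return sum(x * x for x in query)

stats = measure_latency(fake_search, [[0.1] * 768 for _ in range(200)])
print(stats)
```

Report tail latency (p95 or p99) alongside the median. Users notice the slow requests, not the average ones.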

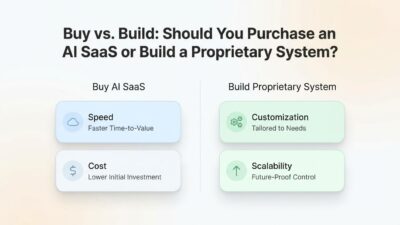

Pricing and Total Cost of Ownership

Pinecone Serverless charges per read unit and write unit. Costs scale with query volume and vector count. Pod-based pricing charges for reserved compute regardless of usage.

Milvus open-source is free. You only pay for the cloud infrastructure it runs on. At large scale, this can mean significant savings over managed services.

Zilliz Cloud, the managed Milvus offering, competes directly with Pinecone on pricing. It often works out cheaper at high query volumes.

Hidden costs matter too. Milvus requires DevOps time. Pinecone eliminates that. Factor in engineering hours when comparing total cost of ownership.
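A back-of-the-envelope comparison makes the trade-off visible. Every number in this sketch is a made-up placeholder, not a vendor price. Plug in current pricing and your own engineering rates before drawing conclusions.

```python
def monthly_cost_managed(queries, price_per_million_queries, storage_gb, price_per_gb):
    """Usage-based bill: pay for queries served and data stored."""
    return queries / 1_000_000 * price_per_million_queries + storage_gb * price_per_gb

def monthly_cost_self_hosted(infra_cost, engineer_hours, hourly_rate):
    """Self-hosted bill: fixed infrastructure plus the engineering time to run it."""
    return infra_cost + engineer_hours * hourly_rate

# All figures below are hypothetical placeholders.
for queries in (5_000_000, 500_000_000):
    managed = monthly_cost_managed(
        queries, price_per_million_queries=20.0, storage_gb=500, price_per_gb=0.30
    )
    hosted = monthly_cost_self_hosted(infra_cost=3_000.0, engineer_hours=40, hourly_rate=90.0)
    print(f"{queries:>11,} queries/month: managed ${managed:,.0f} vs self-hosted ${hosted:,.0f}")
```

With these placeholder inputs, the managed option is cheaper at the low volume and the self-hosted option is cheaper at the high volume. The crossover point moves with every input, which is why engineering hours belong in the calculation.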

Security and Compliance

Pinecone supports SOC 2 Type II compliance. It offers encryption at rest and in transit. RBAC is available at the organization level.

Milvus supports TLS encryption, RBAC, and authentication. Self-hosted deployments allow custom security configurations. This is critical for regulated industries like finance and healthcare.

Milvus gives more control over data governance. Pinecone gives less control but handles compliance certification for you.

Pinecone vs Milvus for Enterprise RAG Use Cases

Customer Support Automation

Customer support bots need fast, accurate retrieval from large knowledge bases. Query volume spikes during product launches. Latency must stay under 200ms for good user experience.

Pinecone works well here. Its Serverless tier handles spiky traffic without pre-provisioning. Teams can focus on prompt engineering instead of infrastructure tuning.

The Pinecone vs Milvus vector database choice for support bots often favors Pinecone. Speed of deployment and auto-scaling justify the higher per-query cost.

Enterprise Search and Document Intelligence

Enterprise document search requires hybrid retrieval. Employees search by keywords and semantic meaning at the same time. Milvus handles this with its native sparse-dense hybrid search.

Large document corpora with billions of chunks need granular index tuning. Milvus DiskANN handles billion-scale datasets with disk-based indexing at lower memory cost.
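The memory pressure is easy to estimate. The sketch below computes the size of the raw vectors alone, assuming one billion 768-dimensional float32 embeddings. Both figures are assumptions for illustration, and index structures add overhead on top.

```python
def raw_vector_bytes(num_vectors, dimensions, bytes_per_value=4):
    """Size of the raw vectors alone (float32 by default), excluding index overhead."""
    return num_vectors * dimensions * bytes_per_value

# Assumed workload: one billion 768-dimensional float32 embeddings.
total = raw_vector_bytes(1_000_000_000, 768)
print(f"{total / 1e12:.2f} TB of raw vectors")
```

That works out to roughly 3 TB before any index overhead. Holding all of it in RAM is expensive, which is the case for a disk-based index at this scale.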

Document intelligence pipelines at enterprise scale often land on Milvus. The customization wins over the convenience factor.

Real-Time Recommendation Systems

Recommendation systems need extremely low latency. They retrieve relevant items for millions of users simultaneously. Both platforms can handle this workload.

Milvus with GPU-accelerated indexes delivers exceptional throughput for recommendation workloads. Pinecone’s pod-based p2 instances also serve recommendation use cases reliably.

Companies running recommendations at e-commerce scale usually go with Milvus. The performance tuning options and cost savings matter more at that volume.

Developer Experience and Ecosystem

Pinecone has excellent documentation. Its Python SDK is beginner-friendly. LangChain and LlamaIndex both offer native Pinecone integrations. Getting started takes less than 30 minutes.

Milvus has strong documentation too. Its Python SDK, PyMilvus, is well-maintained. LangChain and LlamaIndex also integrate with Milvus natively. The learning curve is steeper.

The Milvus community is large and active. GitHub issues get resolved quickly. Zilliz provides enterprise support contracts. Stack Overflow has hundreds of Milvus answers.

Pinecone offers a generous free tier. Developers experiment without a credit card. This speeds up adoption in startup and mid-market segments.

On developer experience, Pinecone leads for beginners. Milvus wins for teams that need advanced integrations and custom tooling.

When Should You Choose Pinecone?

Choose Pinecone when your team lacks dedicated infrastructure engineers. It eliminates all deployment and operational complexity.

Pick Pinecone when you need to ship fast. A RAG prototype becomes production-ready in days. No Kubernetes expertise needed.

Pinecone suits startups and mid-sized teams well. The Serverless tier keeps costs low during early growth. Scaling up is automatic.

The Pinecone vs Milvus vector database decision favors Pinecone for teams that value simplicity, speed, and fully managed reliability above all else.

When Should You Choose Milvus?

Choose Milvus when data sovereignty matters. Self-hosted deployment keeps vectors inside your infrastructure. Regulations in some industries require this.

Pick Milvus when you need hybrid search. Combining sparse and dense vectors in one query improves RAG quality for complex document retrieval tasks.

Milvus works best for teams with strong engineering capacity. The performance optimization potential rewards teams that invest in tuning.

The Pinecone vs Milvus vector database choice points to Milvus when you operate at massive scale, need custom indexes, or must keep data fully under your control.

FAQs: Pinecone vs Milvus Vector Database

Is Milvus faster than Pinecone?

It depends on the configuration. Milvus with GPU-accelerated indexes such as GPU_CAGRA can outperform Pinecone on raw throughput. Pinecone Pod-based deployments deliver very competitive single-query latency. The answer changes based on workload type, data size, and index configuration.

Is Pinecone more expensive than Milvus?

Milvus open-source has no licensing cost. You pay only for cloud infrastructure. Pinecone charges per query or per pod. At very high query volumes, Milvus on your own cloud is cheaper. At low-to-medium volumes, Pinecone Serverless is often more cost-efficient.

Can Milvus replace Pinecone?

Yes, technically. Milvus can handle all core use cases that Pinecone supports. The replacement requires infrastructure setup, migration scripts, and operational readiness. For teams without those resources, replacing Pinecone with Milvus adds more overhead than value.
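The core of a migration script is a record conversion. Here is a hedged sketch. It assumes Pinecone-style records with `id`, `values`, and `metadata` keys, and a Milvus collection whose primary key field is named `id` and whose vector field is named `vector`. Those target field names are assumptions. Match them to your actual schema.

```python
def pinecone_to_milvus_rows(records, vector_field="vector"):
    """Flatten Pinecone-style records into Milvus-style row dicts."""
    rows = []
    for record in records:
        row = dict(record.get("metadata") or {})  # metadata keys become scalar fields
        row["id"] = record["id"]                  # id and vector win on name clashes
        row[vector_field] = record["values"]
        rows.append(row)
    return rows

exported = [{"id": "a", "values": [0.1, 0.2], "metadata": {"lang": "en"}}]
print(pinecone_to_milvus_rows(exported))
```

The resulting list of dictionaries is the shape that a row-based insert in PyMilvus accepts. Batching, retries, and schema creation still need to be added around it.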

Which vector database is best for LangChain RAG?

Both Pinecone and Milvus have official LangChain integrations. Pinecone is easier to set up inside LangChain. Milvus offers more retrieval customization. For quick prototyping, use Pinecone. For production RAG with hybrid search needs, use Milvus.

What is Zilliz Cloud and how does it relate to Milvus?

Zilliz Cloud is the fully managed version of Milvus. It removes the infrastructure complexity of open-source Milvus. It competes directly with Pinecone as a managed vector database service. Zilliz Cloud uses the same Milvus core but with a polished enterprise interface.

Does Pinecone support hybrid search?

Yes. Pinecone supports sparse-dense hybrid search through its sparse vector feature, which became available in 2023. Milvus also supports hybrid search natively and offers more configuration options for combining search modes.

Conclusion

The Pinecone vs Milvus vector database decision is not about which tool is better in absolute terms. It is about which tool fits your team, your scale, and your infrastructure strategy.

Pinecone wins when you need speed to market, zero infrastructure overhead, and reliable managed performance. It is the right tool for fast-moving teams and early-stage production pipelines.

Milvus wins when you need data control, cost efficiency at scale, advanced index tuning, and hybrid search maturity. It is the right tool for engineering-heavy teams with complex retrieval needs.

Both tools are production-proven. Both have strong communities and active development. Both platforms continue to evolve rapidly.

Evaluate your team’s strengths. Measure your query volumes. Assess your data residency requirements. Then pick the tool that removes friction from your AI pipeline rather than adding it.

Your RAG pipeline is only as strong as the retrieval layer beneath it. Choose wisely.