Introduction

TL;DR: AI hallucinations destroy trust fast. A model confidently states a wrong fact. A workflow produces a fabricated legal citation. A customer receives incorrect product information. These failures are not random glitches. They are predictable outcomes of poorly designed AI systems. Prompt orchestration AI workflows exist to solve exactly this problem.

Most teams treat prompting as a one-shot interaction. They write a question, get an answer, and hope for accuracy. That approach works for simple queries. It collapses under the weight of complex, multi-step business tasks. Complex tasks need structured orchestration, not improvised prompting.

Prompt orchestration gives AI systems a spine. It defines how instructions flow, how context builds, how outputs get verified, and how errors get caught before they propagate. Teams that master prompt orchestration AI workflows consistently outperform those relying on raw model capability alone.

This post is a practical guide to understanding, designing, and deploying prompt orchestration AI workflows that minimize hallucinations and maximize reliable output quality. Whether you manage AI products, build enterprise automation, or lead a development team, this roadmap applies directly to your work.

What Is Prompt Orchestration and Why Does It Matter?

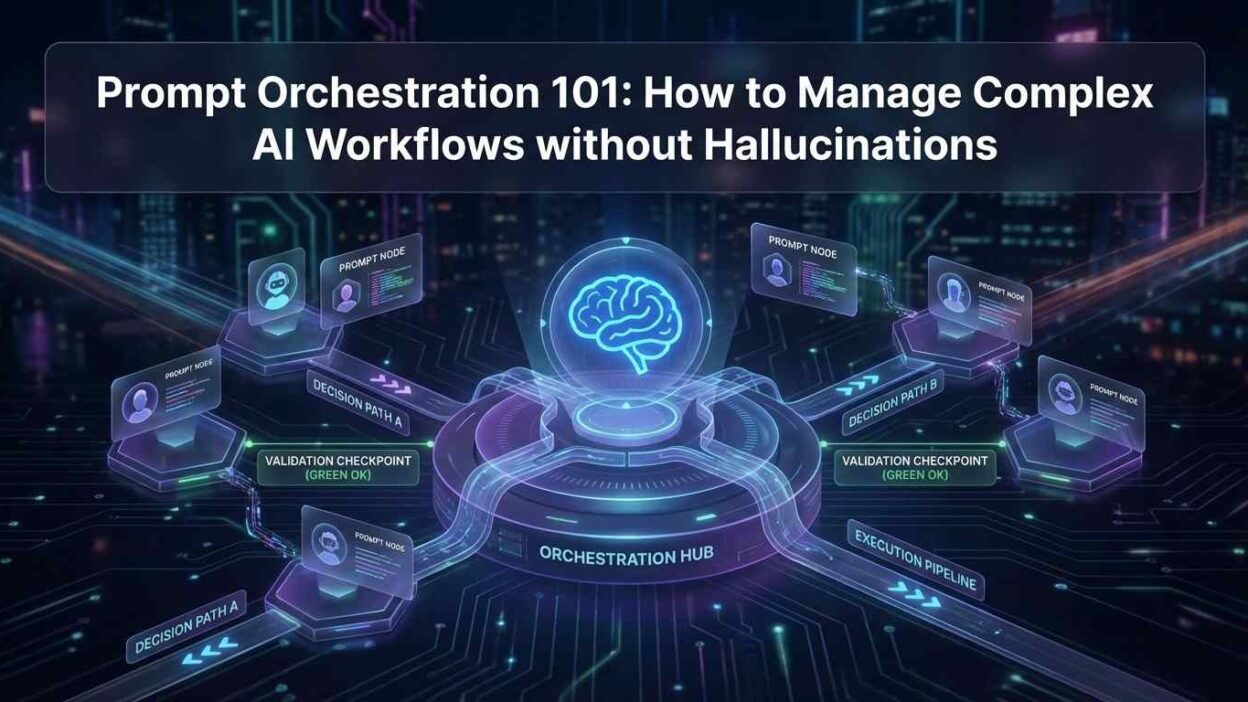

Prompt orchestration is the discipline of managing how prompts, models, tools, and outputs work together across a complex AI task. It moves beyond single-prompt interactions into structured sequences where each step depends on previous results and feeds into the next.

Think of it this way. A single prompt is a question. Prompt orchestration AI workflows are the entire conversation architecture. They define who asks what, in what order, with what context, and how results get validated before moving forward.

Without orchestration, AI systems face compounding error risk. A hallucination in step two of a ten-step workflow corrupts every step that follows. Orchestration catches errors early. It validates outputs at critical checkpoints. It prevents flawed information from cascading through the rest of the pipeline.

The business stakes are real. Organizations using unstructured AI prompting report higher error rates, lower user trust, and more expensive human review requirements. Teams using well-designed prompt orchestration AI workflows report 40% to 60% reductions in output errors. That gap translates directly to productivity and reliability differences.

Understanding Hallucinations in AI Workflows

Hallucinations are outputs where the AI model generates confident-sounding information that is factually wrong, invented, or inconsistent with the actual context provided. They are not bugs in the traditional sense. They are a natural consequence of how language models generate text.

Why Models Hallucinate

Language models predict the next most probable token based on training patterns. They do not retrieve facts from a verified database. They generate plausible-sounding continuations of text. When the model lacks specific knowledge, it fills the gap with statistically likely words rather than admitting uncertainty.

Hallucinations increase when prompts are vague. Ambiguous instructions give the model too much creative latitude. The model fills gaps with invented details. Specificity in prompt design is the first line of defense against hallucination.

Hallucinations also increase as the context grows longer. Models lose track of earlier information in very long conversations. Important constraints stated early in a prompt can be effectively forgotten by the time the model generates a response. Prompt orchestration AI workflows manage context length deliberately to avoid this failure mode.

The Three Types of Hallucinations to Watch

Factual hallucinations occur when the model states wrong information as fact. A model might cite a study that does not exist or state an incorrect statistic with full confidence. These are the most damaging in professional and enterprise contexts.

Contextual hallucinations occur when the model ignores or contradicts information explicitly provided in the prompt. A user provides a contract and asks for a summary. The model summarizes clauses not present in the document. This happens when context management fails in complex workflows.

Instruction hallucinations occur when the model does not follow formatting or behavioral instructions reliably. The model generates a numbered list when a paragraph was requested. Or it produces five items when three were specified. Instruction clarity and enforcement mechanisms address this type.

Core Principles of Effective Prompt Orchestration AI Workflows

Mastering prompt orchestration AI workflows requires internalizing a small set of design principles. These principles apply regardless of which model, framework, or use case you work with.

Decompose Complex Tasks into Atomic Steps

Never ask a language model to do ten things in one prompt. Complex tasks overwhelm context management and increase hallucination risk. Decompose every complex goal into atomic subtasks. Each subtask gets its own focused prompt. Each prompt has one clear objective.

A document analysis workflow illustrates this well. Step one extracts the key claims from the document. Step two verifies each claim against a reference source. Step three flags unverified claims for human review. Step four generates the final summary using only verified claims. Each step is simple. The sequence produces a reliable, hallucination-resistant output.
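The four steps above can be sketched in a few lines of Python. This is a minimal illustration, not a production design. The function names `extract_claims`, `verify_claim`, and `run_document_analysis` are hypothetical, and the model and retrieval calls are replaced by trivial stand-ins (sentence splitting and exact-match lookup) so the control flow is easy to see.

```python
from typing import Dict, List, Set


def extract_claims(document: str) -> List[str]:
    """Step 1 (stand-in for a model call): treat each sentence as one claim."""
    return [s.strip() for s in document.split(".") if s.strip()]


def verify_claim(claim: str, reference: Set[str]) -> bool:
    """Step 2 (stand-in for retrieval or a verifier prompt): exact-match lookup."""
    return claim in reference


def run_document_analysis(document: str, reference: Set[str]) -> Dict[str, object]:
    claims = extract_claims(document)
    verified = [c for c in claims if verify_claim(c, reference)]
    # Step 3: anything unverified goes to human review, not into the summary.
    needs_review = [c for c in claims if c not in verified]
    # Step 4: the summary is built from verified claims only.
    summary = ". ".join(verified) + "." if verified else ""
    return {"verified": verified, "needs_review": needs_review, "summary": summary}
```

Because each step is its own function, a bad result can be traced to one place: the extraction, the verification, or the summary assembly.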

Atomic decomposition also makes debugging practical. When an orchestrated workflow produces an error, you isolate the failing step quickly. A monolithic prompt that fails gives you nothing to debug. A decomposed workflow gives you a precise failure location.

Use Structured Output Formats

Unstructured text outputs are hard to validate and harder to pipe into subsequent workflow steps. Structured output formats solve both problems. Instruct models to respond in JSON, XML, or clearly delimited text blocks that downstream systems can parse reliably.

Structured outputs enable automated validation. A validator function checks that the JSON contains required fields before passing the result to the next step. Missing fields or type mismatches trigger error handling rather than silent propagation of bad data. This is a core hallucination containment technique in mature prompt orchestration AI workflows.
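A validator of this kind can be quite small. The sketch below uses only the Python standard library. The schema (`claim`, `confidence`, `sources`) and the names `REQUIRED_FIELDS`, `ValidationError`, and `validate_step_output` are assumptions made for illustration; a real workflow would define its own fields.

```python
import json
from typing import Any, Dict

# Hypothetical schema: field name -> accepted Python type(s).
REQUIRED_FIELDS = {"claim": str, "confidence": (int, float), "sources": list}


class ValidationError(Exception):
    """Raised when a step output cannot be passed downstream."""


def validate_step_output(raw: str) -> Dict[str, Any]:
    try:
        data = json.loads(raw)
    except json.JSONDecodeError as exc:
        raise ValidationError(f"not valid JSON: {exc}") from exc
    if not isinstance(data, dict):
        raise ValidationError("expected a JSON object")
    for field, expected in REQUIRED_FIELDS.items():
        if field not in data:
            raise ValidationError(f"missing field: {field}")
        if not isinstance(data[field], expected):
            raise ValidationError(f"wrong type for field: {field}")
    return data
```

The caller catches `ValidationError` and triggers its error path, so malformed output never reaches the next step silently.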

Modern language models follow structured output instructions reliably when the format specification is explicit and placed prominently in the prompt. Specify the output schema at the start of the prompt. Repeat key constraints at the end. Models follow instructions more consistently with this framing technique.

Implement Verification and Validation Layers

Every critical output in a prompt orchestration workflow needs a verification step. Verification can take several forms. A second model prompt reviews the first output and checks for consistency with the source material. A code-based validator confirms format compliance and required content. A retrieval system cross-references claims against authoritative data.

Verification layers add latency and cost. Design them proportionally to risk. High-stakes outputs like legal summaries, financial figures, or medical information demand rigorous verification. Low-stakes outputs like draft email subject lines need lighter-touch validation. Match verification intensity to output consequence.

Self-consistency checking is a powerful verification technique. Run the same prompt multiple times with temperature variation. Compare outputs. Divergent responses signal low model confidence on that specific question. Consistent responses across multiple runs indicate higher reliability. Use self-consistency as a confidence signal in your prompt orchestration AI workflows.
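One simple way to turn this into a number is majority voting over repeated runs. The sketch below assumes a hypothetical `ask` callable that wraps your model call (with whatever temperature you choose) and returns a short answer string. Exact-match comparison after normalization is a simplification; free-form answers would need a looser similarity measure.

```python
from collections import Counter
from typing import Callable, Tuple


def self_consistency(ask: Callable[[str], str], prompt: str, runs: int = 5) -> Tuple[str, float]:
    """Return the most common answer and the fraction of runs that agreed with it."""
    answers = [ask(prompt).strip().lower() for _ in range(runs)]
    top_answer, count = Counter(answers).most_common(1)[0]
    return top_answer, count / runs
```

A workflow might accept the answer when agreement is high and route the question to a verification step or a human reviewer when it is low. The threshold is a judgment call for each use case.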

Manage Context Windows Deliberately

Context window management is one of the most underappreciated skills in prompt orchestration AI workflows. Every token in the context window affects what the model attends to. Long, unfocused context windows dilute important instructions and increase hallucination risk.

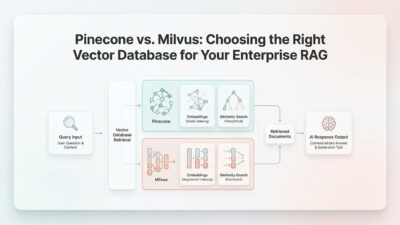

Trim context aggressively at each workflow step. Only pass information relevant to the current subtask. Summarize earlier steps rather than passing their full outputs. Use retrieval-augmented generation to pull specific facts on demand rather than loading entire documents into every prompt.
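As a rough sketch of that trimming, the helper below builds the context for one step from short summaries of earlier steps, includes only the steps named as relevant, and stops at a word budget. The name `build_context` and the word-count budget are assumptions for illustration. Real systems usually budget in tokens, using the tokenizer of the model in question.

```python
from typing import Dict, List


def build_context(summaries: Dict[str, str], needed: List[str], max_words: int = 50) -> str:
    """Assemble context from the summaries of relevant earlier steps, within a budget."""
    parts: List[str] = []
    used = 0
    for step in needed:
        remaining = max_words - used
        if remaining <= 0:
            break
        # Keep only as many words as the remaining budget allows.
        kept = summaries.get(step, "").split()[:remaining]
        if kept:
            parts.append(f"[{step}] " + " ".join(kept))
            used += len(kept)
    return "\n".join(parts)
```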

System prompt design matters enormously for context quality. System prompts establish the model’s role, behavioral constraints, and output requirements. A well-crafted system prompt reduces the cognitive burden on every user-turn prompt. The model already knows its job before the task begins.

Design Explicit Error Handling Paths

Every workflow step can fail. Models return unexpected formats. Tools throw errors. Retrieved context contains contradictory information. Well-designed prompt orchestration AI workflows anticipate these failures and define explicit handling paths for each scenario.

Retry logic with modified prompts handles transient model failures. Fallback models step in when a primary model returns low-confidence or malformed outputs. Human escalation paths route genuinely ambiguous situations to appropriate reviewers. Graceful degradation returns a partial result rather than a complete failure when upstream steps succeed but downstream steps fail.
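These paths can be combined in one small wrapper. The sketch below is one possible arrangement, not a standard API. `run_with_fallback`, the `models` list of callables, and the `validate` callable are all hypothetical names. It retries each model with a reworded prompt, moves to the next model when attempts run out, and returns an explicit escalation status instead of a guess when every model fails.

```python
from typing import Callable, Dict, List, Optional


def run_with_fallback(
    prompt: str,
    models: List[Callable[[str], str]],
    validate: Callable[[str], bool],
    max_attempts: int = 2,
) -> Dict[str, Optional[str]]:
    for model in models:  # primary first, then fallbacks in order
        attempt_prompt = prompt
        for _ in range(max_attempts):
            try:
                output: Optional[str] = model(attempt_prompt)
            except Exception:
                output = None  # a thrown error is handled like a bad output
            if output is not None and validate(output):
                return {"status": "ok", "output": output}
            # Retry with a modified prompt that restates the format constraint.
            attempt_prompt = prompt + "\nReturn only the requested format."
    # Nothing passed validation: escalate rather than return a wrong answer.
    return {"status": "needs_human_review", "output": None}
```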

Error handling is not just a technical design concern. It is a trust design concern. Systems that fail gracefully and transparently maintain user trust far better than systems that produce confident wrong answers or silent failures.

Prompt Orchestration Patterns for Complex AI Workflows

Several established orchestration patterns address different categories of complex AI tasks. Understanding these patterns accelerates workflow design and reduces architectural mistakes.

The Chain Pattern

The chain pattern is the most fundamental prompt orchestration structure. Output from step one feeds as input to step two. Step two feeds step three. The sequence continues until the final output. Each step handles one specific transformation of the data.

Chain patterns work best for linear processes with clear dependencies. Document translation, summarization, and reformatting workflows use chains effectively. The key design decision is what information passes between steps. Pass only what the next step needs. Avoid accumulating unnecessary context that clutters downstream prompts.

The Router Pattern

The router pattern uses a classifier prompt to direct inputs to different downstream workflows. A customer inquiry arrives. The router classifies it as a billing question, a technical support request, or a general inquiry. Each category routes to a specialized prompt workflow optimized for that task type.

Routers improve output quality by matching task types to specialized prompt designs. A general-purpose prompt handles everything adequately. A specialized prompt handles its domain excellently. Routing investments pay off in quality improvement at scale. This pattern is central to mature prompt orchestration AI workflows handling diverse input types.
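The customer inquiry example might look like this in outline. The keyword-based `classify` function is only a stand-in for a classifier prompt, and the `HANDLERS` table and its placeholder workflows are hypothetical. The point is the shape: classify once, then dispatch to a specialized handler with a safe default.

```python
from typing import Callable, Dict, Tuple


def classify(inquiry: str) -> str:
    """Stand-in classifier; a real router would typically use a small model prompt."""
    text = inquiry.lower()
    if any(word in text for word in ("invoice", "charge", "refund")):
        return "billing"
    if any(word in text for word in ("error", "crash", "login")):
        return "technical"
    return "general"


# Each handler represents a specialized downstream prompt workflow.
HANDLERS: Dict[str, Callable[[str], str]] = {
    "billing": lambda q: f"[billing workflow] {q}",
    "technical": lambda q: f"[technical workflow] {q}",
    "general": lambda q: f"[general workflow] {q}",
}


def route(inquiry: str) -> Tuple[str, str]:
    label = classify(inquiry)
    # Unknown labels fall back to the general workflow instead of failing.
    handler = HANDLERS.get(label, HANDLERS["general"])
    return label, handler(inquiry)
```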

The Map-Reduce Pattern

Map-reduce handles large documents and data sets that exceed single-context-window limits. The map phase splits the input into chunks and processes each chunk with the same prompt. Each chunk produces a partial result. The reduce phase synthesizes all partial results into a final output.

This pattern scales to arbitrarily large inputs. A 500-page contract review uses map-reduce to analyze each section independently. The reduce phase combines section-level findings into an overall risk summary. Quality stays high because no single prompt overloads its context window.
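A bare-bones version of the pattern is sketched below. The names `split_into_chunks` and `map_reduce` are illustrative, and `map_fn` and `reduce_fn` stand in for the per-chunk prompt and the synthesis prompt. Chunking by paragraph and word count is an assumption made to keep the example short. Token-based limits are more accurate in practice.

```python
from typing import Callable, List, TypeVar

T = TypeVar("T")
R = TypeVar("R")


def split_into_chunks(paragraphs: List[str], max_words: int) -> List[List[str]]:
    """Group whole paragraphs into chunks that stay within a word budget."""
    chunks: List[List[str]] = []
    current: List[str] = []
    count = 0
    for para in paragraphs:
        n = len(para.split())
        if current and count + n > max_words:
            chunks.append(current)
            current, count = [], 0
        current.append(para)
        count += n
    if current:
        chunks.append(current)
    return chunks


def map_reduce(
    paragraphs: List[str],
    map_fn: Callable[[List[str]], T],
    reduce_fn: Callable[[List[T]], R],
    max_words: int = 200,
) -> R:
    # Map: the same function (prompt) runs on every chunk independently.
    partials = [map_fn(chunk) for chunk in split_into_chunks(paragraphs, max_words)]
    # Reduce: partial results are synthesized into one final output.
    return reduce_fn(partials)
```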

The Critic Pattern

The critic pattern adds a dedicated review step to any workflow. A generator prompt produces an initial output. A separate critic prompt reviews the output against specific quality criteria. The critic identifies errors, gaps, or quality issues. A revision prompt incorporates the critic’s feedback and produces an improved final output.

The critic pattern dramatically reduces hallucination rates in complex content generation. The generator focuses on creation. The critic focuses on verification. Separating these concerns into distinct prompts with distinct instructions outperforms asking a single prompt to generate and verify simultaneously. This separation is a cornerstone technique in robust prompt orchestration AI workflows.
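The generate, critique, revise loop can be expressed as a short function. In this sketch, `generate`, `critique`, and `revise` are hypothetical callables that would each wrap a separate prompt. The round limit is an added assumption to keep the loop from running indefinitely when the critic keeps finding issues.

```python
from typing import Callable, List


def generate_with_critic(
    task: str,
    generate: Callable[[str], str],
    critique: Callable[[str, str], List[str]],
    revise: Callable[[str, str, List[str]], str],
    max_rounds: int = 2,
) -> str:
    draft = generate(task)
    for _ in range(max_rounds):
        issues = critique(task, draft)  # an empty list means the critic is satisfied
        if not issues:
            break
        draft = revise(task, draft, issues)
    return draft
```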

The Parallel Execution Pattern

Parallel execution runs multiple prompts simultaneously and merges their outputs. A competitive analysis workflow runs separate research prompts for each competitor in parallel. Results arrive faster than sequential processing. A merge prompt synthesizes the parallel outputs into a unified analysis.

Parallel patterns require careful merge prompt design. The merge step must reconcile potentially conflicting or overlapping information from parallel branches. Explicit merge instructions, structured output requirements, and conflict resolution rules in the merge prompt prevent the merge step from becoming the new hallucination risk point.
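For I/O-bound model calls, a thread pool from the Python standard library is one straightforward way to run branches in parallel. In the sketch below, `research` and `merge` are hypothetical callables standing in for the per-competitor prompt and the merge prompt. Results are keyed by input so the merge step knows which branch produced what.

```python
from concurrent.futures import ThreadPoolExecutor
from typing import Callable, Dict, List, TypeVar

T = TypeVar("T")
R = TypeVar("R")


def run_parallel(
    items: List[str],
    research: Callable[[str], T],
    merge: Callable[[Dict[str, T]], R],
    max_workers: int = 4,
) -> R:
    with ThreadPoolExecutor(max_workers=max_workers) as pool:
        # Executor.map returns results in the same order as the inputs.
        results = list(pool.map(research, items))
    return merge(dict(zip(items, results)))
```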

Tools and Frameworks for Building Prompt Orchestration AI Workflows

Several mature tools accelerate the development of prompt orchestration AI workflows. Choosing the right tool depends on your technical environment, scale requirements, and team capabilities.

LangChain and LangGraph

LangChain is one of the most widely adopted frameworks for building prompt orchestration AI workflows. It provides chains, agents, memory modules, tool integrations, and retrieval connectors. LangGraph extends LangChain to support stateful, graph-based workflow structures with explicit error handling and conditional branching.

LangChain works well for Python-first teams building complex multi-step workflows. Its extensive ecosystem of pre-built integrations reduces development time for common use cases. The framework does carry complexity overhead. Simple workflows often need simpler tools.

Microsoft Semantic Kernel

Semantic Kernel is Microsoft’s open-source orchestration framework. It supports C#, Python, and Java. It integrates natively with Azure OpenAI and other model providers. Semantic Kernel organizes AI capabilities into plugins and planners that dynamically construct execution plans for complex tasks.

Enterprise teams already in the Microsoft ecosystem benefit most from Semantic Kernel. Its integration with Azure services, Active Directory authentication, and enterprise logging infrastructure aligns with corporate IT requirements that open-source alternatives handle less elegantly.

DSPy for Systematic Prompt Optimization

DSPy from Stanford treats prompt engineering as a programming problem. Instead of manually crafting prompts, DSPy compiles high-level task specifications into optimized prompt programs. It automatically adjusts prompt instructions and few-shot examples based on performance evaluation against labeled examples.

DSPy is ideal for teams building production-quality prompt orchestration AI workflows where prompt quality needs systematic optimization rather than manual iteration. The learning curve is steeper. The output quality ceiling is significantly higher than manual prompting approaches.

Lightweight Alternatives for Simpler Workflows

Not every prompt orchestration challenge needs a full framework. Simple chain workflows build cleanly in raw Python or JavaScript using direct API calls and basic control flow. Guardrails AI adds validation and output structuring without requiring a full orchestration framework. Instructor by Jason Liu handles structured output enforcement elegantly for teams focused on reliable JSON outputs from language models.

Match your tooling to your actual complexity. Over-engineering simple workflows with heavy frameworks creates unnecessary maintenance burden. Under-engineering complex workflows with basic scripts creates fragile systems that break unpredictably at scale.

Measuring and Improving Prompt Orchestration Workflow Quality

Building prompt orchestration AI workflows is not a one-time event. Quality requires continuous measurement and systematic improvement. Teams that instrument their workflows well improve faster than teams that rely on intuition.

Key Metrics to Track

Hallucination rate measures how often workflow outputs contain factually incorrect or invented information. Measure this through human evaluation on a sampled output set and through automated fact-checking where reference sources exist. A baseline hallucination rate measurement drives improvement prioritization.

Task completion rate measures how often a workflow successfully produces a usable output from start to finish. Failures, format errors, and error-path escalations all reduce task completion rate. Improving this metric requires diagnosing which steps fail most frequently and under what input conditions.

Output consistency measures whether the same input produces consistently similar outputs across multiple runs. High consistency signals reliable prompt design. High variance signals prompts that give the model too much interpretive latitude. Tighten constraints in high-variance workflow steps to improve consistency scores.

Latency and cost metrics matter at scale. Complex prompt orchestration AI workflows that run ten model calls to complete one task carry real compute cost implications. Optimize model selection, context length, and parallel execution to reduce cost per task without sacrificing output quality.

Systematic Prompt Testing and Evaluation

Prompt testing requires a labeled evaluation dataset. Collect representative input examples and define what correct outputs look like. Run workflow versions against the evaluation set. Compare outputs against correct answers using automated scoring and human evaluation.
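A small evaluation harness makes this loop concrete. The sketch below assumes a dataset of dictionaries with hypothetical `input` and `expected` keys and scores by case-insensitive exact match. That scoring rule suits short factual answers only. Longer outputs need rubric-based or human scoring.

```python
from typing import Callable, Dict, List


def evaluate(workflow: Callable[[str], str], dataset: List[Dict[str, str]]) -> Dict[str, object]:
    """Run a workflow over labeled examples and collect accuracy plus failures."""
    failures = []
    for example in dataset:
        got = workflow(example["input"])
        if got.strip().lower() != example["expected"].strip().lower():
            failures.append(
                {"input": example["input"], "got": got, "expected": example["expected"]}
            )
    accuracy = 1 - len(failures) / len(dataset) if dataset else 0.0
    return {"accuracy": accuracy, "failures": failures}
```

Keeping the failing examples, not just the score, shows which inputs a new prompt version breaks.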

A/B testing prompt variations against live traffic identifies improvements in production settings. Gradually roll out improved prompt versions to a subset of requests. Measure quality metrics on the test group versus the control group. Promote improvements when metrics confirm genuine gains.
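Gradual rollout needs a stable way to decide which requests see the new prompt. One common approach, sketched here under the assumption that each request carries a string ID, is to hash the ID into one of 100 buckets. The function name `assign_variant` and the labels `candidate` and `control` are illustrative.

```python
import hashlib


def assign_variant(request_id: str, rollout_percent: int) -> str:
    """Deterministically map a request to the candidate or control prompt version."""
    digest = hashlib.sha256(request_id.encode("utf-8")).hexdigest()
    bucket = int(digest, 16) % 100  # stable bucket in the range 0..99
    return "candidate" if bucket < rollout_percent else "control"
```

Because the assignment depends only on the ID, the same request always lands in the same group, which keeps the comparison between test and control clean.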

Red-teaming exercises deliberately probe prompt orchestration AI workflows for failure modes. Team members craft adversarial inputs designed to trigger hallucinations, format failures, or instruction violations. Findings feed directly into prompt hardening and validation layer improvements.

FAQs

What is the difference between prompt engineering and prompt orchestration?

Prompt engineering focuses on designing individual prompts for optimal model responses. Prompt orchestration manages how multiple prompts, models, tools, and outputs work together across a complex task. Prompt engineering is a skill. Prompt orchestration is a system design discipline. Both matter. Prompt orchestration AI workflows require strong prompt engineering at each individual step and thoughtful architecture connecting all steps together.

Can prompt orchestration fully eliminate hallucinations?

No system eliminates hallucinations completely. Well-designed prompt orchestration AI workflows reduce hallucination rates dramatically through structured decomposition, verification layers, and context management. Hallucination rates under 2% are achievable in production systems with rigorous orchestration design. A rate of zero remains out of reach with current language model technology. The goal is containment and detection, not elimination.

How many steps should a prompt orchestration workflow have?

Use as many steps as the task genuinely requires and no more. Simple workflows need two to four steps. Complex enterprise workflows regularly use ten to twenty steps. Each additional step adds latency and cost. Design the minimum number of steps that achieves reliable output quality. Audit existing workflows periodically. Merge steps that do not require separation. Split steps that consistently produce errors.

Which model should I use for different steps in an orchestrated workflow?

Match model capability to task complexity at each step. Routing, classification, and format validation steps work well with smaller, faster, cheaper models. Complex reasoning, synthesis, and judgment steps benefit from larger frontier models. Hybrid architectures using small models for simple steps and large models for critical steps reduce cost significantly without sacrificing output quality on the steps that matter most.

How do I handle confidential enterprise data in prompt orchestration workflows?

Data handling in prompt orchestration AI workflows requires deliberate security design. Use on-premise or private cloud model deployments for highly sensitive data. Implement data minimization: pass only the specific information each workflow step needs. Mask or redact sensitive fields before passing data to external model APIs. Log all data access at the orchestration layer for audit compliance. Work with your security and legal teams to define acceptable data handling protocols before deploying workflows on sensitive data.
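Masking can start with simple pattern-based redaction applied before any text leaves your environment. The sketch below handles only two illustrative patterns, email addresses and US-style Social Security numbers, with deliberately simple regular expressions. The patterns and the `redact` function are assumptions for illustration and are not a complete PII solution.

```python
import re

# Simplified illustrative patterns; real deployments need broader coverage.
EMAIL = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
SSN = re.compile(r"\b\d{3}-\d{2}-\d{4}\b")


def redact(text: str) -> str:
    """Replace matched sensitive values with placeholder tags."""
    text = EMAIL.sub("[EMAIL]", text)
    return SSN.sub("[SSN]", text)
```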

What is retrieval-augmented generation and how does it reduce hallucinations?

Retrieval-augmented generation is a technique where the workflow retrieves relevant information from a verified knowledge base before passing it to the language model as context. Instead of relying on the model’s parametric memory, the model generates answers grounded in retrieved facts. This grounds outputs in verified information rather than statistical patterns. RAG is one of the most effective hallucination reduction techniques available in modern prompt orchestration AI workflows and sees widespread adoption in enterprise knowledge management, legal research, and customer support applications.
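The retrieve-then-generate flow can be shown with a toy example. The sketch below ranks passages by word overlap with the question, which is a stand-in for the embedding or search-based retrieval most real systems use, and then builds a prompt that restricts the model to the retrieved context. The names `retrieve` and `build_grounded_prompt` are hypothetical.

```python
from typing import List


def retrieve(question: str, knowledge_base: List[str], top_k: int = 2) -> List[str]:
    """Rank passages by word overlap with the question (toy retrieval)."""
    q_words = set(question.lower().split())
    scored = [(len(q_words & set(doc.lower().split())), doc) for doc in knowledge_base]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    # Drop passages with no overlap so irrelevant text never enters the prompt.
    return [doc for score, doc in scored[:top_k] if score > 0]


def build_grounded_prompt(question: str, passages: List[str]) -> str:
    context = "\n".join(f"- {p}" for p in passages)
    return (
        "Answer using ONLY the context below. "
        "If the answer is not in the context, say you do not know.\n"
        f"Context:\n{context}\n"
        f"Question: {question}"
    )
```

The explicit instruction to admit when the answer is missing matters as much as the retrieval itself, since it gives the model an alternative to inventing one.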

Conclusion

Hallucinations are not a reason to distrust AI. They are a reason to design AI systems better. Prompt orchestration AI workflows give teams the structural tools to build AI systems that produce reliable, verifiable, consistent outputs at scale.

The principles are clear. Decompose complex tasks into atomic steps. Use structured outputs. Verify critical results. Manage context windows deliberately. Design explicit error handling paths. Measure quality continuously and improve systematically.

The patterns are proven. Chains, routers, map-reduce, critics, and parallel execution each solve specific orchestration challenges. The right combination of patterns depends on your specific workflow requirements. Start simple. Add complexity only where the task demands it.

The tools are mature. LangChain, Semantic Kernel, DSPy, and lightweight alternatives all provide solid foundations for building prompt orchestration AI workflows in production environments. Choose tools that match your team’s existing skills and your workflow’s actual complexity.

The ROI is measurable. Teams investing in serious prompt orchestration design report dramatic reductions in output error rates, lower human review costs, and higher user trust in AI-generated results. These gains compound as workflows mature and measurement drives continuous improvement.

Every organization deploying AI at scale will face the hallucination problem. The teams that solve it through disciplined prompt orchestration AI workflows will build AI systems that users trust, businesses rely on, and competitors struggle to match. That is the real competitive advantage this discipline delivers.