Introduction

TL;DR: AI is changing how people work every day. New tools appear constantly, and new techniques follow right behind them. One technique stands out right now: prompt chaining.

Prompt chaining is not a buzzword. It is a real, practical method. Developers use it. Marketers use it. Researchers use it too. Anyone working with large language models can benefit from it.

This guide explains what prompt chaining is at its core. It covers why it matters. It walks through how it works. It shows where you can apply it right now.

What is Prompt Chaining? The Core Definition

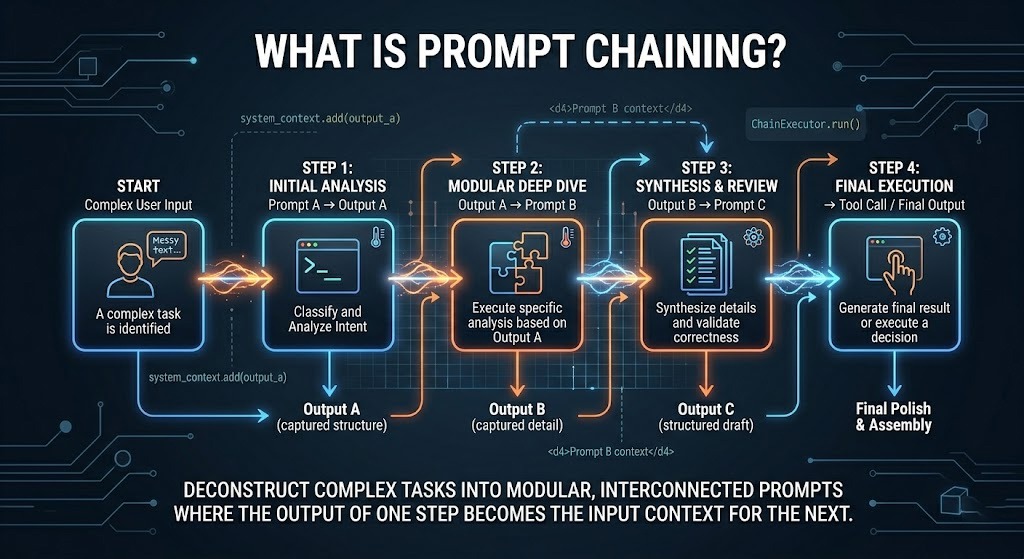

Prompt chaining is the process of breaking one large task into smaller steps. Each step gets its own prompt. The output of one prompt becomes the input of the next. You chain these prompts together. That is where the name comes from.

Think about writing a full research report. You can ask an AI to write it in one shot, but the result is usually shallow and misses key details. Prompt chaining addresses this problem directly.

With prompt chaining, you start by asking the AI to gather key facts. Then you ask it to organize those facts. Then you ask it to write each section one by one. Each prompt feeds into the next. The final result is much stronger.

Prompt chaining works because AI models handle focused tasks better than broad ones. A single prompt asking for too much leads to errors. It leads to hallucinations. It leads to missed logic. Smaller, focused prompts reduce all of that.

You stay in control at every step. You can review each output. You can correct mistakes early. You can adjust the direction before moving forward. This makes prompt chaining one of the most reliable AI workflows available today.

Why Prompt Chaining Matters for AI Workflows

AI models have context limits. They can only hold so much information at one time. A massive single prompt can fill or exceed that context window. Even within the limit, the model may lose track of earlier details. The output becomes inconsistent.

Prompt chaining keeps each step lean and focused. The model processes one clear task. It gives you a clean output. You move to the next step. This approach works within the model’s context limits instead of pushing against them.

There is another big reason prompt chaining matters. It makes complex reasoning more reliable. A single prompt that asks a model to analyze data, form hypotheses, test logic, and write conclusions all at once rarely does all four well. Breaking these into a chain makes each step achievable.

Teams working on automation love prompt chaining. Each chain node becomes a testable unit. If one step fails, you know exactly where. You fix only that step. You do not restart everything from scratch.
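To make the "testable unit" idea concrete, here is a minimal sketch. The step functions (`extract_facts`, `organize`, `draft`) are hypothetical stand-ins for model calls, not part of any library. The runner wraps each step so a failure names the step that broke.

```python
from typing import Callable

# Hypothetical step functions; in a real chain each would wrap one model call.
def extract_facts(text: str) -> str:
    return f"FACTS({text})"

def organize(facts: str) -> str:
    if "FACTS" not in facts:
        raise ValueError("expected a facts block")
    return f"OUTLINE({facts})"

def draft(outline: str) -> str:
    return f"DRAFT({outline})"

def run_chain(steps: list[tuple[str, Callable[[str], str]]], data: str) -> str:
    """Run the steps in order; on failure, report which step broke."""
    for name, step in steps:
        try:
            data = step(data)
        except Exception as exc:
            raise RuntimeError(f"step '{name}' failed: {exc}") from exc
    return data

steps = [("extract", extract_facts), ("organize", organize), ("draft", draft)]
result = run_chain(steps, "raw notes")
print(result)
```

Because each step is a plain function, you can also test it on its own with a fixed input, without running the rest of the chain.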

Quality often improves with this technique. Multi-step prompting tends to outperform single-step prompting on complex tasks, although the size of the gain depends on the task and the model. Measure it on your own workload rather than assuming it.

Businesses that build prompt chaining into their pipelines can expect fewer errors and more consistent output quality. Turnaround can also improve, because a failed step is rerun on its own instead of restarting the whole job. Track these metrics so the benefits are measurable.

How Prompt Chaining Works: Step-by-Step Process

Define the Final Goal

Every chain starts with a destination. You need to know the final output before writing any prompt. Ask yourself what you want at the end. A report? A code module? A marketing strategy? A customer support script?

Write that goal clearly. Make it specific. Vague goals produce vague chains. A clear goal helps you break the work into logical steps.

Break the Goal into Subtasks

Look at your goal. Identify the logical steps needed to reach it. Each step should do one thing. It should not do two things. It should not be vague.

For a blog post, your subtasks might include keyword research, outline creation, introduction writing, section writing, and final editing. Each one becomes a separate prompt.
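One way to write the subtasks down before prompting is as an ordered list of small records. This is only a sketch. The `Subtask` class and the instruction wording are illustrative assumptions, not a standard format.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class Subtask:
    name: str
    instruction: str  # one task per prompt, nothing more

# The blog post example from above, one entry per future prompt.
BLOG_POST_CHAIN = [
    Subtask("keywords", "List 10 search keywords for the topic."),
    Subtask("outline", "Create an outline that covers the keywords."),
    Subtask("introduction", "Write the introduction for the outline."),
    Subtask("sections", "Write each section of the outline."),
    Subtask("editing", "Edit the full draft for clarity and consistency."),
]

for position, task in enumerate(BLOG_POST_CHAIN, start=1):
    print(f"{position}. {task.name}: {task.instruction}")
```

If an instruction needs the word "and" to describe two different jobs, that is usually a sign the subtask should be split.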

Write Individual Prompts for Each Subtask

Each prompt should be self-contained. Include the context from the previous step inside the new prompt. A model called through an API does not remember earlier requests. You must carry the context forward manually or through code.

Keep each prompt short and focused. Use clear language. Specify the format you want. Tell the AI exactly what to produce. Avoid open-ended instructions.

Pass Outputs Forward in the Chain

Take the output from step one. Include it in the prompt for step two. This is the heart of prompt chaining. The chain grows stronger with each step. The model builds on previous work.

You can automate this with code. Python is a popular choice for building prompt chaining pipelines. Frameworks like LangChain make it even easier.
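Passing outputs forward can be sketched in a few lines of plain Python. Note the assumption: `call_model` is a hypothetical placeholder for whatever API client you use. Here it only wraps the prompt in angle brackets so the flow is visible.

```python
def call_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM API call (not a real library function)."""
    return f"<{prompt}>"

def run_chain(prompt_templates: list[str], initial_input: str) -> list[str]:
    """Feed each output into the next prompt and keep every intermediate output."""
    outputs = []
    previous = initial_input
    for template in prompt_templates:
        prompt = template.format(previous=previous)
        previous = call_model(prompt)
        outputs.append(previous)
    return outputs

templates = [
    "Gather key facts about: {previous}",
    "Organize these facts: {previous}",
    "Write a report from this outline: {previous}",
]
outputs = run_chain(templates, "solar power")
for step_number, output in enumerate(outputs, start=1):
    print(step_number, output)
```

Keeping every intermediate output, not just the last one, makes it possible to inspect the chain step by step later.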

Review and Refine at Each Stage

Do not blindly pass outputs forward. Check each output. Does it match what you expected? Is the quality acceptable? Fix problems at the source. A small error early becomes a big error later.
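Some checks do not need a model at all. The sketch below assumes the previous step was asked to return an outline as JSON with a `title` and a `sections` list. That format is an assumption made for this example. The function returns a list of problems, so an empty list means the output can move on.

```python
import json

def check_outline(raw_output: str, min_sections: int = 3) -> list[str]:
    """Return a list of problems; an empty list means the output can move on."""
    try:
        data = json.loads(raw_output)
    except json.JSONDecodeError:
        return ["output is not valid JSON"]
    if not isinstance(data, dict):
        return ["output is not a JSON object"]

    problems = []
    if not data.get("title"):
        problems.append("missing 'title'")
    sections = data.get("sections")
    if not isinstance(sections, list):
        problems.append("missing 'sections' list")
    elif len(sections) < min_sections:
        problems.append(f"expected at least {min_sections} sections, got {len(sections)}")
    return problems

good = '{"title": "Solar power", "sections": ["Cost", "Storage", "Policy"]}'
bad = "Sure! Here is your outline:"
print(check_outline(good))
print(check_outline(bad))
```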

Types of Prompt Chaining You Should Know

Sequential Prompt Chaining

This is the most common type. Prompts follow one another in a straight line. Output A feeds into Prompt B. Output B feeds into Prompt C. It continues until you reach the final result.

Sequential prompt chaining works well for writing, coding, and data processing tasks. It is easy to build. It is easy to debug. Most beginners start here.

Branching Prompt Chaining

Some tasks need different paths depending on the output. Branching prompt chaining handles this. If the AI returns one type of answer, you follow path A. If it returns another, you follow path B.

Customer service bots use this approach often. A user asks a question. The AI classifies it. The chain branches based on that classification. Each branch has its own follow-up prompts.
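A branching chain can be sketched as a classifier plus a lookup table of follow-up prompts. Everything here is illustrative. The `classify` function uses keyword rules as a stand-in for a model-based classification step, and the labels and prompt wording are assumptions.

```python
def classify(message: str) -> str:
    """Hypothetical classifier step; a real chain would ask the model for a label."""
    text = message.lower()
    if "refund" in text or "charge" in text:
        return "billing"
    if "error" in text or "crash" in text:
        return "technical"
    return "general"

# One follow-up prompt per branch.
BRANCH_PROMPTS = {
    "billing": "You are a billing specialist. Resolve this issue: {message}",
    "technical": "You are a support engineer. Diagnose this problem: {message}",
    "general": "You are a helpful assistant. Answer this question: {message}",
}

def next_prompt(message: str) -> tuple[str, str]:
    """Pick the branch from the classification and build its follow-up prompt."""
    label = classify(message)
    return label, BRANCH_PROMPTS[label].format(message=message)

label, prompt = next_prompt("I was charged twice this month")
print(label)
print(prompt)
```

Keeping a fallback branch such as `general` means an unexpected classification never leaves the chain without a next step.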

Iterative Prompt Chaining

Iterative prompt chaining loops back on itself. The AI produces an output. Another prompt evaluates that output. If it is not good enough, the chain runs again with feedback included. This loop continues until the quality meets your standard.

Code review automation uses this method. The AI writes code. Another prompt checks it for errors. If errors exist, a third prompt fixes them. The loop runs until the code is clean.
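The loop can be sketched like this. Both `generate` and `evaluate` are hypothetical stand-ins for model calls, with canned behavior so the example runs on its own. The `max_rounds` cap is an added safeguard, so the loop cannot run forever if the output never passes.

```python
from typing import Optional

def generate(task: str, feedback: Optional[str]) -> str:
    """Hypothetical generator step; it only corrects the bug once feedback arrives."""
    if feedback is None:
        return "def add(a, b): return a - b"
    return "def add(a, b): return a + b"

def evaluate(code: str) -> Optional[str]:
    """Hypothetical evaluator step; returns feedback, or None if the code is acceptable."""
    if "a - b" in code:
        return "add() subtracts instead of adding"
    return None

def refine(task: str, max_rounds: int = 3) -> tuple[str, int]:
    """Generate, evaluate, and retry with feedback until the output passes."""
    feedback = None
    for round_number in range(1, max_rounds + 1):
        candidate = generate(task, feedback)
        feedback = evaluate(candidate)
        if feedback is None:
            return candidate, round_number
    raise RuntimeError(f"no acceptable output after {max_rounds} rounds")

code, rounds = refine("Write an add function")
print(rounds, code)
```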

Parallel Prompt Chaining

Parallel prompt chaining runs multiple chains at the same time. Each chain handles a different part of the task. The outputs merge at the end. This approach saves time for large projects.

Imagine analyzing customer feedback from five different regions at once. Each region gets its own prompt chain. All five chains run simultaneously. The results combine into one final report.
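The five-region example might look like the sketch below, using the standard library's `ThreadPoolExecutor`. The `analyze_region` function and the sample data are hypothetical. A real per-region chain would make several model calls, which is where running in parallel saves time.

```python
from concurrent.futures import ThreadPoolExecutor

def analyze_region(region: str, feedback: list[str]) -> str:
    """Hypothetical per-region chain; a real one would make several model calls."""
    return f"{region}: {len(feedback)} comments reviewed"

# Made-up sample data for illustration.
FEEDBACK_BY_REGION = {
    "North America": ["Fast shipping", "Confusing checkout"],
    "Europe": ["Great support"],
    "Asia": ["App is slow", "Love the design", "Needs more languages"],
    "South America": ["Good prices"],
    "Africa": ["Delivery delays", "Friendly staff"],
}

def run_parallel(feedback_by_region: dict[str, list[str]]) -> str:
    """Run one chain per region at the same time, then merge in a stable order."""
    with ThreadPoolExecutor(max_workers=5) as pool:
        futures = {
            region: pool.submit(analyze_region, region, items)
            for region, items in feedback_by_region.items()
        }
        summaries = [futures[region].result() for region in feedback_by_region]
    return "\n".join(summaries)

report = run_parallel(FEEDBACK_BY_REGION)
print(report)
```

Collecting results in the original key order, rather than in completion order, keeps the merged report the same from run to run.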

Real-World Applications of Prompt Chaining

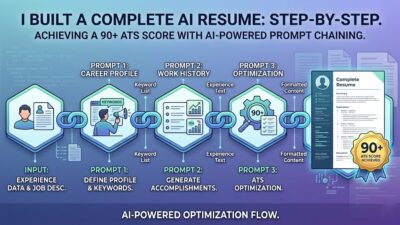

Content Creation and SEO Writing

Content teams use prompt chaining to produce long-form articles. The first prompt generates a keyword list. The second creates an outline. The third writes each section. A final prompt optimizes for SEO. The entire pipeline is repeatable.

This method ensures every article follows a consistent structure. It saves writers hours of work. It keeps quality high across all pieces.

Software Development and Code Generation

Developers build entire features using prompt chaining. One prompt defines the requirements. A second writes the function structure. A third fills in the logic. A fourth writes unit tests. A fifth handles documentation.

This process keeps code organized. It reduces errors. It also teaches junior developers how to think through problems systematically.

Customer Support Automation

Support teams build smart bots with prompt chaining. A chain first classifies the user issue. A second chain finds the relevant solution. A third formats a helpful response. A fourth checks tone before sending.

This produces responses that feel human. They are accurate. They stay on-brand. Customers get help faster without sacrificing quality.

Data Analysis and Research

Analysts feed raw data into a chain. The first prompt cleans and structures the data. The second identifies patterns. The third interprets those patterns. The fourth writes the executive summary. The whole pipeline runs in minutes.

Academic researchers use similar workflows. Literature reviews that once took weeks can now take days. Prompt chaining handles the heavy lifting while researchers focus on insights.

Best Practices for Effective Prompt Chaining

Keep Each Prompt Specific and Narrow

The biggest mistake in prompt chaining is writing prompts that are too broad. Broad prompts confuse the model. They produce scattered results. Write one clear task per prompt. Nothing more.

Always Carry Context Forward

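A model called through an API does not remember previous requests. Every new request starts fresh. Chat interfaces keep history within one conversation, but restating the key output still helps. Include the relevant output from the previous step inside the next prompt. This keeps the chain coherent.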

You can do this manually or through a script. Automated systems handle this with variables. They inject the previous output into a template prompt automatically.
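Template injection can be as simple as the standard library's `string.Template`. The prompt wording and the variable names (`outline`, `section_title`, `max_words`) below are illustrative assumptions.

```python
from string import Template

# A reusable prompt with slots for the previous step's output.
SECTION_PROMPT = Template(
    "You are writing one section of an article.\n"
    "Outline from the previous step:\n$outline\n\n"
    "Write the section titled: $section_title\n"
    "Format: plain paragraphs, at most $max_words words."
)

def build_prompt(outline: str, section_title: str, max_words: int = 200) -> str:
    """Inject the previous output into the template; raises KeyError if a slot is missing."""
    return SECTION_PROMPT.substitute(
        outline=outline, section_title=section_title, max_words=max_words
    )

previous_output = "1. Intro\n2. Why chaining\n3. Examples"
prompt = build_prompt(previous_output, "Why chaining")
print(prompt)
```

`substitute` fails loudly when a variable is missing, which is usually what you want in a chain. A silently empty slot would break the next step in a way that is harder to spot.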

Add Validation Steps Between Nodes

Build quality checks into your chain. After each major step, add a prompt that evaluates the output. Ask the AI to check for accuracy. Ask it to flag logical gaps. Fix problems before they compound.
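A model-based check between nodes could look like this sketch. The `call_model` function is a hypothetical stand-in that fails any text containing "TODO". The PASS/FAIL reply format is an assumption chosen so the verdict is easy to parse.

```python
def call_model(prompt: str) -> str:
    """Hypothetical stand-in for an LLM call; it flags text containing 'TODO'."""
    if "TODO" in prompt:
        return "FAIL: contains unfinished TODO markers"
    return "PASS"

VALIDATION_PROMPT = (
    "Check the text below for accuracy and logical gaps.\n"
    "Reply with PASS, or with FAIL: followed by the reason.\n\n"
    "Text:\n{output}"
)

def validate(output: str) -> tuple[bool, str]:
    """Ask the evaluator for a verdict and return (passed, reason)."""
    verdict = call_model(VALIDATION_PROMPT.format(output=output)).strip()
    if verdict.upper().startswith("PASS"):
        return True, ""
    reason = verdict.partition(":")[2].strip()
    return False, reason or verdict

print(validate("Revenue grew 12 percent year over year."))
print(validate("Revenue grew TODO percent year over year."))
```

Asking for a fixed verdict word at the start of the reply keeps the parsing trivial. Free-form evaluations are much harder to act on in code.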

Document Your Chains

Save every prompt you write. Label them clearly. Note what worked and what did not. Over time, you build a library of effective prompt chains. This library becomes a major competitive advantage.

Test With Different Models

Not every model handles every chain equally. GPT-4, Claude, and Gemini each have strengths. Test your prompt chains across different models. Find the best fit for your specific use case.

Tools and Frameworks That Support Prompt Chaining

Several powerful tools make prompt chaining easier to build and manage. Knowing them saves you time.

LangChain is one of the most popular frameworks for building prompt chaining applications. It provides ready-made chain structures. It passes outputs between steps for you. It integrates with most major AI models.

LlamaIndex focuses on document-based chains. If your chain involves analyzing large documents or knowledge bases, LlamaIndex is ideal. It structures how data flows through the chain intelligently.

Flowise offers a visual interface for building chains. You drag and drop nodes. You connect them visually. No code is required for basic chains. This makes prompt chaining accessible to non-developers.

Langflow is similar to Flowise. Both are open-source visual builders. Langflow is built on Python, so teams with Python skills often prefer it. It gives full control over the chain architecture.

For developers building custom pipelines, raw Python with the OpenAI or Anthropic API works well. You manage context manually. You have total flexibility. This approach suits complex, highly customized chains.

Common Mistakes in Prompt Chaining and How to Fix Them

Many people make the same errors when learning prompt chaining. Knowing them early saves you a lot of wasted time.

Overloading the first prompt is mistake number one. Beginners try to pack too much into step one. The output becomes massive. It is hard to parse. It breaks the next step. Keep step one small and focused.

Not passing context is mistake number two. A prompt that does not reference the previous output has no connection to the chain. The model starts fresh. The chain logic breaks entirely. Always include the previous output in the next prompt.

Skipping validation is mistake number three. People rush through the chain. They do not check intermediate outputs. A bad output in step two corrupts step three, four, and five. Add validation checkpoints.

Making chains too long is mistake number four. A 20-step chain looks impressive. In practice, errors multiply at each step. Start with 3 to 5 steps. Expand only when each short chain works perfectly.
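A quick calculation shows why long chains are fragile. It rests on a simplifying assumption that is not from any measurement: every step succeeds independently with the same probability, here 95%.

```python
def chain_success_rate(per_step_success: float, steps: int) -> float:
    """Chance that every step succeeds, assuming independent steps."""
    return per_step_success ** steps

for steps in (3, 5, 20):
    rate = chain_success_rate(0.95, steps)
    print(f"{steps:>2} steps at 95% each -> {rate:.0%} end to end")
```

Under that assumption, a 3-step chain finishes cleanly about 86% of the time, a 5-step chain about 77%, and a 20-step chain only about 36%. Validation checkpoints improve these odds by catching a bad output before it propagates.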

Using the same model for every task is mistake number five. Some models excel at reasoning. Others excel at writing. Match the model to the step. A small optimization here creates a big improvement in the final output.

Frequently Asked Questions About Prompt Chaining

Is prompt chaining only for developers?

No. Anyone can use prompt chaining manually in any chat interface. Open ChatGPT or Claude. Write a focused first prompt. Review the output. Write a second prompt that builds on it. That is prompt chaining. No code required.

Developers automate it with code. Marketers do it manually. Writers do it step by step. The concept works at every skill level.

How is prompt chaining different from a single long prompt?

A single long prompt asks the model to do everything at once. The model tries to handle all parts simultaneously. Quality drops. Errors appear. The output feels generic.

Prompt chaining splits that same work into focused steps. Each step gets the model’s full attention. Each step produces a higher-quality output. The chain builds toward a better final result.

What is the best use case for prompt chaining?

Prompt chaining excels at complex, multi-step tasks. Writing long documents, generating and reviewing code, analyzing research data, and building customer support workflows all benefit from prompt chaining.

Simple tasks do not need chaining. Asking for a short summary or a quick translation works fine as a single prompt. Use chaining when a single prompt clearly falls short.

Does prompt chaining increase API costs?

Yes, it can. Multiple API calls cost more than one. Each step in the chain consumes tokens. However, the quality improvement usually justifies the extra cost. You waste fewer tokens on failed single-prompt attempts.

You can also reduce costs by using smaller, cheaper models for simpler chain steps. Reserve the powerful models for the most complex steps. This balances quality and cost effectively.
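The effect of routing steps to different models can be estimated before you build anything. In this sketch the model names, prices, and token counts are all made up for illustration. Substitute your provider's actual pricing.

```python
from typing import Optional

# Hypothetical model names and prices (USD per 1,000 tokens); not real pricing.
PRICE_PER_1K_TOKENS = {"small-model": 0.001, "large-model": 0.03}

# Hypothetical chain: (step name, assigned model, estimated tokens).
STEPS = [
    ("classify", "small-model", 500),
    ("extract", "small-model", 1500),
    ("analyze", "large-model", 4000),
    ("summarize", "small-model", 1000),
]

def chain_cost(steps: list[tuple[str, str, int]], force_model: Optional[str] = None) -> float:
    """Estimated cost of one run; force_model prices every step on a single model."""
    total = 0.0
    for _name, model, tokens in steps:
        total += PRICE_PER_1K_TOKENS[force_model or model] * tokens / 1000
    return total

routed = chain_cost(STEPS)
all_large = chain_cost(STEPS, force_model="large-model")
print(f"routed: ${routed:.3f}  all large: ${all_large:.3f}")
```

With these made-up numbers, the routed chain costs about $0.123 per run against $0.210 when every step uses the large model.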

What tools work best with prompt chaining?

LangChain remains the top choice for developers. Flowise suits non-technical users. Raw Python scripts work great for custom pipelines. The best tool depends on your technical skill and the complexity of your use case.

Prompt Chaining vs. Related Techniques: Key Differences

People often confuse prompt chaining with similar AI techniques. The differences matter.

Chain-of-thought prompting asks the model to reason step by step within one prompt. Prompt chaining uses multiple separate prompts. Chain-of-thought stays inside one call. Prompt chaining spans multiple calls.
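The one-call versus many-calls difference is easy to see in code. `CountingModel` is a hypothetical stand-in for an LLM client that only counts how often it is called. The question and the prompt wording are illustrative.

```python
class CountingModel:
    """Hypothetical stand-in for an LLM client; it only counts calls."""

    def __init__(self) -> None:
        self.calls = 0

    def complete(self, prompt: str) -> str:
        self.calls += 1
        return f"reply #{self.calls}"

QUESTION = "Which of three suppliers is cheapest over a year?"

def chain_of_thought(model: CountingModel) -> str:
    # One prompt, one call: the reasoning happens inside a single response.
    return model.complete(f"{QUESTION}\nThink step by step, then give the answer.")

def prompt_chain(model: CountingModel) -> str:
    # Several prompts, several calls: each output feeds the next prompt.
    costs = model.complete(f"List the yearly cost of each supplier. {QUESTION}")
    comparison = model.complete(f"Compare these costs:\n{costs}")
    return model.complete(f"State the cheapest supplier based on:\n{comparison}")

cot_model, chain_model = CountingModel(), CountingModel()
chain_of_thought(cot_model)
prompt_chain(chain_model)
print(cot_model.calls, chain_model.calls)
```

The chained version costs more calls, but each intermediate result is available for inspection or validation. The chain-of-thought version hides its intermediate reasoning inside one response.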

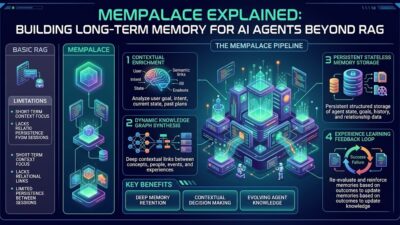

Retrieval-augmented generation (RAG) pulls external data into a prompt. Prompt chaining focuses on breaking a task into steps. The two techniques are not opposites. RAG can be one node inside a larger prompt chain.
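Here is a rough sketch of retrieval as one node in a chain. The documents are made up, and the word-overlap ranking is a toy stand-in. Real RAG systems typically rank by embedding similarity instead.

```python
import re

# Hypothetical knowledge base for illustration.
DOCUMENTS = [
    "Refunds are processed within 5 business days.",
    "Support is available Monday through Friday.",
    "Passwords can be reset from the account settings page.",
]

def _words(text: str) -> set[str]:
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def retrieve(question: str, k: int = 1) -> list[str]:
    """Toy retrieval node: rank documents by how many words they share with the question."""
    question_words = _words(question)
    ranked = sorted(DOCUMENTS, key=lambda doc: len(question_words & _words(doc)), reverse=True)
    return ranked[:k]

def build_answer_prompt(question: str) -> str:
    """Next node in the chain: place the retrieved text into the answering prompt."""
    context = "\n".join(retrieve(question))
    return f"Answer using only this context:\n{context}\n\nQuestion: {question}"

prompt = build_answer_prompt("How long do refunds take?")
print(prompt)
```

The retrieval node produces text, and the next node consumes it, exactly like any other pair of steps in a chain.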

Few-shot prompting gives the model examples inside the prompt. Prompt chaining gives the model a sequence of tasks. Few-shot prompting improves output quality for one prompt. Prompt chaining manages complex multi-step workflows.

Agentic AI allows models to make decisions and use tools autonomously. Prompt chaining is a simpler, more predictable relative of agentic behavior, because you fix the steps in advance. A well-designed prompt chain can act as a simplified agent. True agents go further by selecting their own steps dynamically.

Understanding these distinctions helps you choose the right tool for each problem. Prompt chaining is your best option when you have a defined multi-step process to automate.

Conclusion: Why Prompt Chaining is the Future of AI Workflows

Prompt chaining is not a passing trend. It is a foundational technique for anyone serious about AI. It makes complex tasks manageable. It improves output quality. It gives you control over every step.

The businesses winning with AI today are using structured workflows. Prompt chaining is at the center of those workflows. They break big tasks into small steps. They validate at each stage. They build repeatable systems.

You do not need to be a developer to start. Pick one complex task you do every week. Write a three-step prompt chain for it. Run it. Measure the output quality against your current method. The improvement will speak for itself.

As AI models grow more powerful, prompt chaining grows more valuable. The ability to orchestrate these models intelligently separates average AI users from expert ones. Master prompt chaining now. The advantage compounds over time.

Start small. Stay consistent. Build your chain library. The results will follow.