Introduction

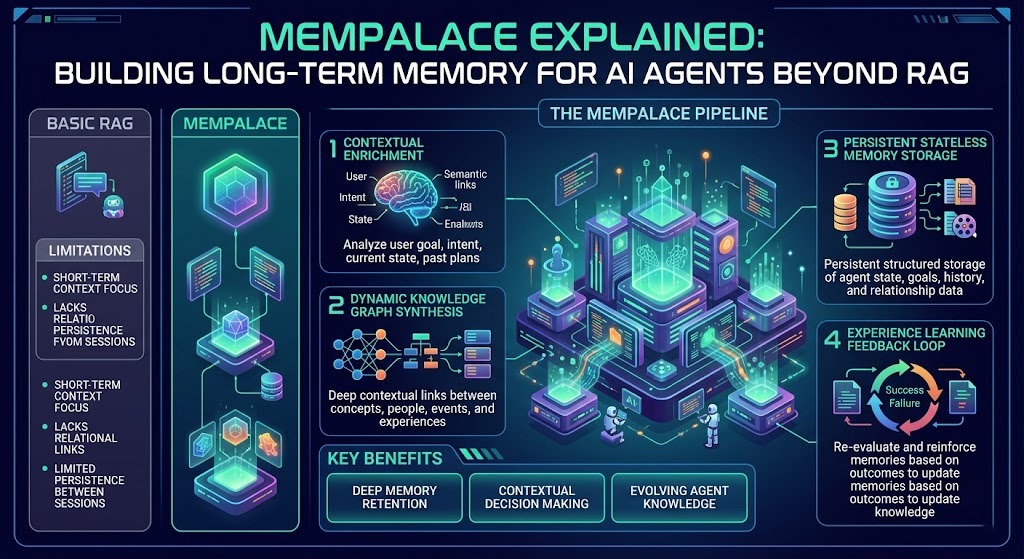

TL;DR AI agents are getting smarter every month, yet most of them still forget everything the moment a conversation ends. That is a fundamental design flaw. RAG systems help with retrieval, but they were never built for true long-term memory. MemPalace is a memory design pattern aimed at that gap. It gives agents a structured, persistent memory architecture that goes beyond simple document retrieval. This post covers why RAG falls short for agent memory, what MemPalace does differently, how it stores and retrieves knowledge across sessions, and how to implement it in your own AI systems. Whether you are a developer building production agents or a researcher exploring cognitive AI architectures, this guide gives you a clear picture of what MemPalace means in practice.

Why RAG Is Not Enough for Long-Term AI Agent Memory

RAG stands for Retrieval Augmented Generation. It retrieves relevant text chunks from a vector database and injects them into the model context before generation. This works well for question-answering over static documents. It struggles badly when AI agents need persistent, evolving, personal memory across long time horizons.

RAG treats memory as a search problem. You ask a question, it finds matching chunks, and it hands them to the model. The model itself does not remember anything. Every session starts fresh. The agent has no sense of history, identity, or continuity. It cannot learn from past mistakes. It cannot build on previous conversations. It cannot develop a relationship with the user over time.

RAG also suffers from retrieval noise. When you store thousands of documents, similarity search returns chunks that are semantically close but contextually wrong. The agent receives irrelevant information alongside useful information and cannot always distinguish between them. For short-term tasks, this noise is acceptable. For long-term agent memory, it erodes coherence as irrelevant chunks pile up across sessions. MemPalace addresses this by redesigning the memory layer from the ground up. It treats agent memory the way human cognition treats memory: structured, layered, and purpose-driven.

The Core Limitations of Vector-Only Memory Systems

Vector databases store information as numerical embeddings. They retrieve by comparing the query vector with stored vectors, typically using cosine similarity. This is powerful for document search. It creates serious problems for agent memory management.
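As a minimal sketch of similarity-only retrieval, the snippet below ranks stored memories purely by cosine similarity. The function names (`cosine_similarity`, `top_k`) and the three-dimensional toy embeddings are invented for illustration; a real system would get its vectors from an embedding model.

```python
import math

def cosine_similarity(a, b):
    """Cosine of the angle between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    if norm_a == 0 or norm_b == 0:
        return 0.0  # treat a zero vector as similar to nothing
    return dot / (norm_a * norm_b)

def top_k(query, store, k=2):
    """Return the k stored texts whose vectors are most similar to the query."""
    ranked = sorted(
        store,
        key=lambda item: cosine_similarity(query, item[1]),
        reverse=True,
    )
    return [text for text, _ in ranked[:k]]

# Toy embeddings, invented for illustration only.
store = [
    ("user prefers formal language", [0.9, 0.1, 0.0]),
    ("API integration failed", [0.0, 0.2, 0.9]),
    ("user asked about tone", [0.8, 0.3, 0.1]),
]
```

Note that nothing in this ranking knows how old a memory is or how much it matters. That is the gap the rest of this post is concerned with.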

The first limitation is flat structure. All memories sit at the same level with no hierarchy. An agent cannot distinguish between a fact it learned yesterday and a foundational belief it has held for months. Importance, recency, and context all collapse into a single similarity score.

The second limitation is the lack of a forgetting mechanism. Vector stores keep accumulating data unless you prune them yourself. Old, irrelevant memories compete with recent, important ones during retrieval.

The third limitation is the lack of memory consolidation. Humans consolidate related memories into abstract schemas over time. Vector stores cannot do this natively.

MemPalace targets all three limitations through a layered memory model inspired by how biological cognition organizes experience.

What Is MemPalace and Where Did the Concept Come From?

MemPalace draws its name from the memory palace, also known as the method of loci, an ancient mnemonic technique in which people visualize placing memories inside imagined physical spaces. Each location in the palace holds a distinct memory. Recall means mentally walking through the palace and retrieving what sits at each location. The technique works because human spatial memory is exceptionally strong.

The MemPalace framework for AI agents translates this cognitive architecture into a software design pattern. Instead of a single flat vector store, MemPalace organizes agent memory into distinct rooms or layers. Each layer serves a specific memory function. Working memory holds the current conversation context. Episodic memory stores specific past interactions. Semantic memory holds generalized knowledge and facts. Procedural memory stores learned behaviors and workflows.

Researchers at several AI labs began publishing papers on structured agent memory in 2023 and 2024. The MemPalace concept emerged from the intersection of cognitive science, AI agent research, and practical engineering needs. Development teams building long-running autonomous agents kept running into the same problem. Their agents forgot too much and retrieved too poorly. MemPalace is a response to that shared frustration.

How MemPalace Differs From Traditional RAG Architectures

Traditional RAG has one job: retrieve relevant chunks and inject them into context. MemPalace has four jobs: store, organize, prioritize, and retrieve. That distinction drives every architectural difference between the two systems.

RAG stores all information in one flat index. MemPalace stores information across multiple specialized memory layers. RAG retrieves based only on semantic similarity. MemPalace retrieves based on similarity, recency, importance, and emotional salience. RAG never forgets anything explicitly. MemPalace implements active forgetting by decaying low-importance memories over time. RAG has no consolidation step. MemPalace runs periodic consolidation processes that merge related episodic memories into semantic knowledge. MemPalace is not an incremental upgrade to RAG. It replaces RAG as the agent’s memory layer. RAG can still serve as one tool within a MemPalace system, handling specific document retrieval tasks while MemPalace manages the agent’s personal memory.
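A multi-factor retrieval score of the kind described above can be sketched as follows. This is an illustration under stated assumptions, not a formula from any MemPalace specification: the `Candidate` class, the weights, the 30-day half-life, and the weighted-sum form are all hypothetical choices.

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    text: str
    similarity: float  # 0..1, e.g. from a vector search
    age_days: float    # time since the memory was stored
    importance: float  # 0..1
    salience: float    # 0..1, emotional salience

def retrieval_score(m, half_life_days=30.0, weights=(0.4, 0.2, 0.3, 0.1)):
    """Weighted sum of similarity, recency, importance, and salience.

    Recency halves every `half_life_days`. The weights are assumed values
    and would need tuning for a real agent.
    """
    recency = 0.5 ** (m.age_days / half_life_days)
    w_sim, w_rec, w_imp, w_sal = weights
    return (
        w_sim * m.similarity
        + w_rec * recency
        + w_imp * m.importance
        + w_sal * m.salience
    )

# A year-old, trivial memory that happens to match the query closely...
stale = Candidate("old small talk", similarity=0.9, age_days=365,
                  importance=0.1, salience=0.0)
# ...versus a recent, important memory with a weaker match.
fresh = Candidate("user's deadline moved", similarity=0.7, age_days=1,
                  importance=0.9, salience=0.5)
```

With these assumed weights the recent, important memory outranks the stale one even though its raw similarity is lower, which is exactly the behavior a similarity-only index cannot express.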

The Four Memory Layers Inside a MemPalace Architecture

Understanding MemPalace requires understanding its four-layer memory model. Each layer handles a distinct type of memory with different storage duration, retrieval priority, and update frequency. Together they give the agent a rich and coherent sense of its own history and knowledge.

Working Memory: The Agent’s Active Context

Working memory holds everything the agent is currently processing. It includes the current conversation turns, the active task description, any tool results from the current session, and the most recently retrieved episodic and semantic memories. Working memory is temporary. It resets at the end of each session. Its capacity is bounded by the model’s context window.

Effective working memory management is critical for agent performance. You cannot dump everything into context and hope the model sorts it out. MemPalace defines a working memory budget. Only the most relevant memories from deeper layers get promoted into working memory for any given task. A memory scheduler handles these promotions based on task type and user query. This keeps the context clean and focused. An agent with a well-managed working memory layer tends to respond more reliably than one with an overloaded context window.
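The budget idea can be sketched as a greedy selection: sort candidate memories by relevance and promote each one only if it still fits. The function name `promote_to_working_memory` is hypothetical, and counting whitespace-separated words is a crude stand-in for a real tokenizer.

```python
def promote_to_working_memory(candidates, budget_tokens):
    """Pick memories for the context window without exceeding the budget.

    candidates: list of (text, relevance) pairs from deeper memory layers.
    Returns the selected texts, most relevant first.
    """
    selected = []
    used = 0
    for text, _relevance in sorted(candidates, key=lambda c: c[1], reverse=True):
        cost = len(text.split())  # rough token estimate (assumption)
        if used + cost <= budget_tokens:
            selected.append(text)
            used += cost
    return selected
```

A greedy pass is not optimal in the knapsack sense, but it is simple and predictable, which matters when the scheduler runs on every turn.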

Episodic Memory: What the Agent Experienced

Episodic memory stores specific past interactions. Every conversation session generates episodic memories. The agent remembers that it helped User A debug a Python script last Tuesday. It remembers that User B prefers formal language. It remembers that a particular API integration failed during a previous task. These are episodic facts tied to specific moments in time.

MemPalace stores episodic memories with rich metadata. Each memory includes a timestamp, a session identifier, a list of participants, an importance score, and a decay rate. The decay rate controls how quickly the memory fades from active retrieval. High-importance episodic memories decay slowly. Trivial interactions decay quickly. A consolidation process runs periodically. It examines episodic memories that share common themes and merges them into semantic knowledge. This mirrors the way humans are thought to process daily experiences into long-term understanding during sleep.
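One way to model such a record is shown below. The field names follow the metadata listed above, but the class name `EpisodicMemory` and the exponential decay formula are assumptions made for this sketch; the pattern itself does not prescribe a particular decay function.

```python
import math
from dataclasses import dataclass
from datetime import datetime, timezone

@dataclass
class EpisodicMemory:
    content: str
    timestamp: datetime
    session_id: str
    participants: list
    importance: float  # 0..1
    decay_rate: float  # per day; smaller means the memory fades more slowly

    def strength(self, now):
        """Current retrieval strength: importance with exponential decay."""
        age_days = (now - self.timestamp).total_seconds() / 86400
        return self.importance * math.exp(-self.decay_rate * age_days)

created = datetime(2024, 1, 1, tzinfo=timezone.utc)
now = datetime(2024, 1, 31, tzinfo=timezone.utc)

important = EpisodicMemory(
    "API integration failed during deployment task",
    created, "session-1", ["user_a", "agent"],
    importance=0.9, decay_rate=0.01,
)
trivial = EpisodicMemory(
    "User said hello",
    created, "session-1", ["user_a", "agent"],
    importance=0.2, decay_rate=0.2,
)
```

After thirty days the important memory keeps most of its strength while the trivial one has effectively faded, so a retrieval step can filter on `strength(now)` instead of deleting records outright.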

Semantic Memory: What the Agent Knows

Semantic memory holds generalized knowledge that the agent has accumulated over time. It does not tie to specific events. It represents distilled understanding. The agent knows that User B is a software engineer working in fintech. It knows that its primary task domain is customer support. It knows that a specific company’s return policy changed three months ago.

Semantic memory in MemPalace is structured as a knowledge graph. Nodes represent concepts, entities, and facts. Edges represent relationships between them. This graph structure enables reasoning that flat vector stores cannot support. The agent can traverse relationships, infer missing information, and identify contradictions between stored facts. Updating semantic memory happens through the consolidation pipeline, not through direct writes. This prevents noisy, unvalidated information from polluting the agent’s core knowledge base.
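To make the graph idea concrete, here is a small in-memory sketch. The `SemanticGraph` class, its method names, and the notion of "single-valued" relations used for contradiction checks are all hypothetical; the entity names are invented for the example.

```python
from collections import defaultdict

class SemanticGraph:
    """Tiny triple store: (subject, relation) -> set of objects."""

    def __init__(self, single_valued=()):
        self.edges = defaultdict(set)
        # Relations that may hold only one object per subject (assumption).
        self.single_valued = set(single_valued)

    def add_fact(self, subject, relation, obj):
        """Store a fact unless it contradicts an existing single-valued one.

        Returns the set of conflicting objects (empty when the write succeeds).
        """
        existing = self.edges[(subject, relation)]
        if relation in self.single_valued:
            conflicts = existing - {obj}
            if conflicts:
                return conflicts  # caller decides how to resolve
        existing.add(obj)
        return set()

    def infer(self, subject, first_relation, second_relation):
        """Two-hop traversal: follow first_relation, then second_relation."""
        results = set()
        for middle in self.edges.get((subject, first_relation), set()):
            results |= self.edges.get((middle, second_relation), set())
        return results

graph = SemanticGraph(single_valued={"works_in"})
graph.add_fact("user_b", "works_at", "acme")      # invented entity
graph.add_fact("acme", "industry", "fintech")
```

The two-hop `infer` call lets the agent answer "which industry does User B work in?" even though that fact was never stored directly, and `add_fact` surfaces a contradiction instead of silently overwriting.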

Procedural Memory: How the Agent Behaves

Procedural memory stores learned behaviors, workflows, and response strategies. It captures how the agent solves problems, not just what it knows about them. An agent that repeatedly succeeds with a specific debugging workflow stores that workflow in procedural memory. Future similar tasks trigger retrieval of that workflow automatically.

MemPalace implements procedural memory as a library of reusable action templates. Each template has a trigger condition, a sequence of steps, and a success metric. The agent evaluates stored templates when it encounters a new task. If a template matches, it executes the stored workflow as a starting point. If no template matches, the agent builds a new strategy and, upon success, stores it as a new procedural memory. This creates genuine learning across sessions rather than just information accumulation.
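The template library can be sketched as below. Everything here is illustrative: the class names, the keyword-subset trigger, and the use of a success rate as the metric are assumptions, and a production system would likely match tasks with something richer than keywords.

```python
from dataclasses import dataclass

@dataclass
class ActionTemplate:
    name: str
    trigger_keywords: frozenset  # trigger condition: all must appear in the task
    steps: tuple                 # ordered workflow
    successes: int = 0
    attempts: int = 0

    @property
    def success_rate(self):
        return self.successes / self.attempts if self.attempts else 0.0

class ProceduralMemory:
    def __init__(self):
        self.templates = []

    def match(self, task_description):
        """Return the best matching template, or None if nothing triggers."""
        words = set(task_description.lower().split())
        candidates = [t for t in self.templates if t.trigger_keywords <= words]
        return max(candidates, key=lambda t: t.success_rate, default=None)

    def learn(self, name, trigger_keywords, steps):
        """Store a strategy that just succeeded as a new template."""
        template = ActionTemplate(
            name, frozenset(trigger_keywords), tuple(steps),
            successes=1, attempts=1,
        )
        self.templates.append(template)
        return template

    def record_outcome(self, template, succeeded):
        """Update the success metric after reusing a template."""
        template.attempts += 1
        if succeeded:
            template.successes += 1
```

Tracking outcomes per template means a workflow that stops working gradually loses priority instead of being replayed forever.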

The Memory Consolidation Pipeline in MemPalace

Consolidation is the process that transforms raw experience into lasting knowledge. MemPalace borrows this concept from neuroscience. The biological brain is thought to consolidate memories during sleep, gradually turning hippocampal episodic traces into cortical semantic knowledge. MemPalace runs a software equivalent of this process on a scheduled basis.

The consolidation pipeline runs after each session or on a nightly schedule for always-on agents. It begins by scanning recent episodic memories for thematic clusters. Memories that share entities, topics, or outcomes get grouped together. The system then generates a semantic summary of each cluster. This summary gets written into the semantic memory graph as a new node or as an update to an existing node.

Contradictions surface during consolidation. If a new episodic memory conflicts with an existing semantic fact, the system flags the conflict for resolution. In some implementations, the agent resolves conflicts autonomously using confidence scores. In others, the conflict gets flagged for human review. MemPalace treats memory integrity as a first-class concern. A memory system that silently stores contradictions produces unreliable agents. Active conflict resolution is what separates a production-grade memory architecture from a research prototype.
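The pipeline steps above (cluster, summarize, write, flag conflicts) can be sketched in a few lines. This is a deliberately naive illustration: the function names are hypothetical, clustering is by exact shared entity, the `summarize` callable stands in for what would normally be an LLM call, and a "conflict" is simply any difference from the stored summary.

```python
from collections import defaultdict

def cluster_by_entity(episodes):
    """Group episode texts by each entity they mention.

    episodes: list of dicts with 'text' and 'entities' keys.
    """
    clusters = defaultdict(list)
    for episode in episodes:
        for entity in episode["entities"]:
            clusters[entity].append(episode["text"])
    return dict(clusters)

def consolidate(episodes, semantic_store, summarize, min_cluster_size=2):
    """Write one summary per large-enough cluster; return flagged conflicts.

    semantic_store: dict mapping entity -> stored summary.
    Conflicting summaries are flagged and NOT written, so nothing is
    silently overwritten.
    """
    conflicts = []
    for entity, texts in cluster_by_entity(episodes).items():
        if len(texts) < min_cluster_size:
            continue  # too little evidence to generalize
        summary = summarize(texts)
        previous = semantic_store.get(entity)
        if previous is not None and previous != summary:
            conflicts.append((entity, previous, summary))
        else:
            semantic_store[entity] = summary
    return conflicts

episodes = [
    {"text": "prefers formal tone", "entities": ["user_b"]},
    {"text": "asked for a formal email", "entities": ["user_b"]},
    {"text": "one-off question", "entities": ["user_c"]},
]

def join_summary(texts):
    # Placeholder summarizer; a real pipeline would call a model here.
    return " | ".join(sorted(texts))
```

Returning the conflicts rather than resolving them inside `consolidate` keeps the policy decision (confidence scores or human review) separate from the mechanics.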

Implementing MemPalace in Your AI Agent Stack

You do not need to build MemPalace from scratch. Several open-source libraries provide foundational components. Mem0 is one of the more established open-source memory layers for agents and ships a Python API. Zep is another strong option, particularly for conversational AI applications. LangChain’s memory modules provide conversation-level memory management that can serve as the working memory layer in a MemPalace-style architecture.

Start your implementation with the episodic memory layer. Log every agent session with full metadata. Add a simple importance scoring function based on user feedback and task completion signals. Run a basic consolidation script weekly that summarizes episodic clusters into semantic facts. Even this minimal implementation gives a long-running agent continuity that pure RAG does not provide.

Add the semantic memory graph next. Use Neo4j or a similar graph database for production deployments. NetworkX works well for local development and experimentation. Connect your consolidation pipeline to write new facts into the graph. Build a retrieval function that combines graph traversal with vector similarity search. MemPalace works best when the graph and vector layers complement each other rather than compete.
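One simple way to combine the two layers is to pick seed nodes by vector similarity and then expand each seed by one hop in the graph. The sketch below uses plain dictionaries rather than a real graph or vector database; `hybrid_retrieve`, the toy embeddings, and the seed-then-expand strategy are assumptions for illustration, not a prescribed method.

```python
import math

def cosine(a, b):
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

def hybrid_retrieve(query_vec, embeddings, graph, k=1):
    """Top-k nodes by similarity, followed by their direct graph neighbors.

    embeddings: dict node -> vector
    graph: dict node -> set of neighboring nodes
    """
    seeds = sorted(
        embeddings,
        key=lambda node: cosine(query_vec, embeddings[node]),
        reverse=True,
    )[:k]
    results = list(seeds)
    for seed in seeds:
        for neighbor in sorted(graph.get(seed, ())):  # sorted for stable order
            if neighbor not in results:
                results.append(neighbor)
    return results

# Toy data, invented for illustration.
embeddings = {"return_policy": [1.0, 0.0], "shipping": [0.0, 1.0]}
graph = {"return_policy": {"refund_window", "store_credit"}}
```

The vector layer finds where to start and the graph layer supplies related facts that would never have matched the query text on their own.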

Choosing the Right Database Stack for MemPalace

Your database choices determine the performance and scalability of your MemPalace implementation. Working memory lives in your application’s runtime state. No external database is needed for this layer. Episodic memory needs a database that supports fast writes, rich metadata filtering, and time-series queries. PostgreSQL with a JSON column works well. MongoDB is another solid choice for its native document structure.

Semantic memory is best served by a graph database. Neo4j is a widely used choice for production knowledge graphs. It offers a query language called Cypher that expresses relationship traversals naturally. For smaller deployments, SQLite with a self-join structure can handle basic graph queries. Vector similarity search for retrieval can run on Pinecone, Weaviate, or ChromaDB, depending on your scale requirements. MemPalace is database-agnostic at the architecture level. Choose the stack that fits your team’s expertise and your deployment environment.
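For the SQLite option, a single `edges` table joined to itself is enough for short traversals. The sketch below uses Python's built-in `sqlite3` module; the table layout, the `two_hop` helper, and the entity names are assumptions made for the example.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
conn.execute("CREATE TABLE edges (subject TEXT, relation TEXT, object TEXT)")
conn.executemany(
    "INSERT INTO edges VALUES (?, ?, ?)",
    [
        ("user_b", "works_at", "acme"),    # invented entities
        ("acme", "industry", "fintech"),
        ("user_a", "works_at", "globex"),
    ],
)

def two_hop(conn, start, first_relation, second_relation):
    """Follow two relations in sequence using a self-join on the edges table."""
    rows = conn.execute(
        """
        SELECT e2.object
        FROM edges AS e1
        JOIN edges AS e2 ON e2.subject = e1.object
        WHERE e1.subject = ? AND e1.relation = ? AND e2.relation = ?
        """,
        (start, first_relation, second_relation),
    ).fetchall()
    return [row[0] for row in rows]
```

Each extra hop needs another join, so this approach gets unwieldy for deep traversals. That is the point at which a dedicated graph database starts to pay off.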

Frequently Asked Questions About MemPalace

Is MemPalace a specific open-source library or a design pattern?

MemPalace is primarily a design pattern and architectural philosophy for AI agent memory. It describes how to structure and manage memory across multiple layers with consolidation, decay, and prioritization. Several libraries implement parts of this pattern. Mem0 and Zep are the closest to full implementations. You can also build your own MemPalace-style system using standard databases and scheduled scripts. The pattern is the valuable insight. The specific library you use to implement it is a secondary decision.

Can MemPalace work with any language model?

Yes. MemPalace is model-agnostic. It sits in the infrastructure layer between your database and your model. The model receives retrieved memories as formatted context, just as it would in a RAG system. GPT-4, Claude, Gemini, Llama, and Mistral all work with a MemPalace memory layer. The model does not need to understand the memory architecture. It just needs to process the context it receives intelligently. Model choice affects response quality, but it does not affect whether MemPalace functions correctly.

How does MemPalace handle privacy and sensitive user data?

MemPalace stores personal interaction data by design. This creates real privacy obligations. Encrypt all stored memories at rest and in transit. Implement per-user memory isolation so one user’s memories are never accessible to another. Build a memory deletion API that removes all stored memories for a given user on request. Follow GDPR, CCPA, and any other relevant data protection regulations for your deployment region. MemPalace as an architecture does not mandate specific privacy implementations. Your engineering team must design those safeguards deliberately for every deployment.
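Per-user isolation and deletion can be sketched with a store that keys every read and write by user ID. The `UserScopedMemoryStore` class is hypothetical, and encryption is left out to keep the example short; a real deployment would add it at the storage layer and would also have to purge backups and derived data.

```python
from collections import defaultdict

class UserScopedMemoryStore:
    """In-memory store where every operation is scoped to a single user."""

    def __init__(self):
        self._by_user = defaultdict(list)

    def add(self, user_id, memory):
        self._by_user[user_id].append(memory)

    def get(self, user_id):
        """Return a copy of this user's memories only."""
        return list(self._by_user.get(user_id, []))

    def delete_user(self, user_id):
        """Remove everything stored for a user; return how many items were deleted."""
        return len(self._by_user.pop(user_id, []))
```

Because there is no method that reads across users, accidental leakage between users would require bypassing the class entirely. Returning the deleted count gives the deletion endpoint something to log as evidence that a request was honored.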

What is the performance overhead of running a MemPalace system?

The retrieval overhead is small for most deployments. Graph queries on a properly indexed Neo4j database typically return in milliseconds. Vector similarity searches on modern infrastructure typically take under 100 milliseconds. The consolidation pipeline is the most compute-intensive component. Running it asynchronously during off-peak hours keeps it off the user-facing request path. For high-volume deployments serving thousands of concurrent users, plan for dedicated consolidation infrastructure. The performance investment pays back in better agent coherence and user experience.

Does MemPalace replace the system prompt for agent configuration?

No. The system prompt and MemPalace serve different purposes. The system prompt defines the agent’s role, persona, and behavioral constraints. It is static and set by the developer. MemPalace stores dynamic, user-specific memories that evolve over time. At the start of each session, relevant MemPalace memories get injected into the context alongside the system prompt. The system prompt tells the agent who it is. MemPalace tells the agent what it knows and what it has experienced. Both are essential for a fully capable long-running agent.
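The division of labor can be sketched as a function that assembles the context at session start. The role/content message dictionaries follow a convention used by many chat APIs, but the exact format your model expects is an assumption here, as are the function name `build_context` and the "Relevant memories" heading.

```python
def build_context(system_prompt, memories, user_message):
    """Combine the static system prompt with dynamic, user-specific memories."""
    messages = [{"role": "system", "content": system_prompt}]
    if memories:
        memory_block = "Relevant memories:\n" + "\n".join(
            f"- {memory}" for memory in memories
        )
        messages.append({"role": "system", "content": memory_block})
    messages.append({"role": "user", "content": user_message})
    return messages
```

Keeping the memories in a separate message from the system prompt makes it easy to swap them per session while the developer-authored prompt stays untouched.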

Related Concepts That Extend the MemPalace Framework

MemPalace sits within a broader field of research on cognitive architectures for AI. SOAR and ACT-R are classical cognitive architectures from cognitive science that share structural similarities with MemPalace. Both separate declarative knowledge from procedural knowledge. Both use production rules to govern behavior. Studying them deepens your understanding of why MemPalace is designed the way it is.

Continual learning is a closely related research area. It focuses on training models that accumulate knowledge over time without catastrophic forgetting. MemPalace operates at the inference layer rather than the training layer, but the goals overlap. Combining MemPalace-style inference-time memory with continual fine-tuning is a promising direction for highly capable long-running agents.

Agent identity is an emerging concept in AI research. As agents accumulate memories over months and years, they develop something resembling a persistent self. MemPalace is foundational infrastructure for agent identity. The memories an agent carries define its perspective, preferences, and behavioral tendencies. Designing memory systems thoughtfully is not just an engineering problem. It is a design problem with real implications for how AI agents relate to the humans they serve.

Conclusion

RAG was a major leap forward for knowledge-augmented AI. Long-term agent memory requires something more sophisticated. MemPalace gives the AI development community a concrete framework for building agents that remember, learn, and improve over time. The four-layer memory model, the consolidation pipeline, the decay mechanisms, and the knowledge graph combine into a system modeled on how intelligent beings manage experience over time. Start with episodic memory logging in your next agent project. Add consolidation scripts once your episodic store is large enough to contain meaningful patterns. Build toward a full semantic graph as your agent matures. Each step gives the agent more continuity than it had before. The developers who invest in memory architecture today are building the intelligent agents that will shape the next decade of AI applications. MemPalace is your starting point. The rest is engineering, iteration, and a genuine commitment to making agents that remember what matters most.