Introduction

TL;DR: Large GitHub repositories grow fast, and issues pile up every week. Maintainers feel constant pressure. They scan new bugs. They label them. They route them to the right people. They try to keep the backlog clean. This work takes time and focus.

AI agents now step into this workflow. They read new issues. They match patterns. They predict labels. They suggest owners. They group duplicates. They learn from your history. Teams gain a new helper. They move faster with less manual sorting.

You can build AI agents for automated bug triaging that plug into GitHub. They listen to webhooks. They fetch issue data. They run models. They update labels and assignees. They post comments with context. They free maintainers for deeper work.

This article explains how that setup works. It focuses on large codebases. It covers architecture. It covers data sources. It covers training. It covers evaluation. It also explains how to keep humans in control. You will see where automation helps. You will see where judgment still matters. You will also see how AI agents for automated bug triaging can evolve with your repo over time.

Why bug triaging breaks in large GitHub repos

Bug triaging looks simple on paper. One person reads each issue. They decide if it is valid. They add labels. They assign an owner. They close duplicates. They ask for more info. In a small repo, that system works.

Large repos face different stress. Hundreds of contributors open issues. Many new users skip templates. Descriptions are vague. Logs are missing. Labels drift. Old owners move teams. The signal drops. The noise grows.

Maintainers feel the pain first. They skim long threads. They guess the right label. They ping people who no longer work on that area. Some bugs sit open for months. Some duplicates stay hidden. Teams lose track of real priorities.

Scattered context adds more friction. Clues sit in code history. More clues sit in previous issues. Some clues sit in pull requests. Many clues sit in comments from experts. No single human can scan all of that for every bug. AI agents for automated bug triaging can help with that search.

They read text at scale. They scan linked issues. They measure similarity. They spot likely duplicates. They map stack traces to files. They map files to teams. They suggest better labels. They give maintainers a head start. Maintainers still decide. They work from richer context.

What AI agents can do in GitHub bug workflows

AI agents for automated bug triaging act like smart assistants inside your repo. They watch the same stream that maintainers watch. They stay active at all hours. They never tire.

One agent can focus on classification. It reads new issues. It looks at titles and descriptions. It checks file paths and tags. It predicts label sets. It posts a suggested label list. Maintainers review and accept or adjust. Over time, the model learns from those edits.

Another agent can rank severity. It looks at keywords. It reads stack traces. It checks how often similar bugs appeared. It inspects linked logs if you provide them. It proposes severity levels like critical, high, medium, or low. Humans make the final call. They use the suggestion as a starting point.

A third agent can detect duplicates. It compares new issues with the backlog. It searches open and closed tickets. It looks at shared words and stack frames. It links likely matches. It posts a comment with references. This task suits AI agents for automated bug triaging very well.
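
The comparison step can be sketched with plain token overlap. This is only an illustration: it uses Jaccard similarity over lowercased tokens rather than a trained similarity model, and the function names, the threshold of 0.5, and the sample issue numbers are all assumptions.

```python
import re


def tokens(text: str) -> set[str]:
    """Lowercase the text and keep word-like tokens, including dotted names."""
    return set(re.findall(r"[a-z0-9_.]+", text.lower()))


def jaccard(a: set[str], b: set[str]) -> float:
    """Share of tokens the two sets have in common (0.0 to 1.0)."""
    if not a or not b:
        return 0.0
    return len(a & b) / len(a | b)


def likely_duplicates(
    new_issue: str,
    backlog: dict[int, str],
    threshold: float = 0.5,  # assumed cutoff; tune on your own data
    top_k: int = 3,
) -> list[tuple[int, float]]:
    """Return up to top_k (issue number, score) pairs at or above the threshold."""
    new_tokens = tokens(new_issue)
    scored = [(num, jaccard(new_tokens, tokens(text))) for num, text in backlog.items()]
    matches = [(num, score) for num, score in scored if score >= threshold]
    return sorted(matches, key=lambda pair: pair[1], reverse=True)[:top_k]


backlog = {
    101: "NullPointerException in parser.parse_config on startup",
    102: "Docs typo in README install section",
}
candidates = likely_duplicates(
    "Startup crash: NullPointerException in parser.parse_config", backlog
)
```

Here `candidates` contains only issue 101, since the README typo shares a single common word with the new report. A production agent would likely swap the token overlap for embeddings, but the ranking and threshold logic stays the same shape.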

Agents can also surface ownership hints. They map files to teams. They map modules to code owners. They suggest the right group to involve. They may tag people directly. They might update assignees once you trust them.
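
A minimal sketch of the file-to-team mapping follows. The rule table and team handles are hypothetical, and the matching uses Python's `fnmatch` globbing as a simplified stand-in for real CODEOWNERS pattern syntax. It does mirror one CODEOWNERS convention: the last matching rule wins.

```python
from fnmatch import fnmatchcase
from typing import Optional

# Hypothetical rules, ordered from general to specific.
OWNER_RULES = [
    ("*", "@org/maintainers"),
    ("docs/*", "@org/docs"),
    ("src/parser/*", "@org/parser-team"),
]


def owner_for(path: str) -> Optional[str]:
    """Return the team of the last rule whose pattern matches the path."""
    owner = None
    for pattern, team in OWNER_RULES:
        if fnmatchcase(path, pattern):
            owner = team
    return owner


def suggest_owners(paths: list[str]) -> list[str]:
    """Collect distinct owners for the given paths, keeping first-seen order."""
    owners: list[str] = []
    for path in paths:
        team = owner_for(path)
        if team and team not in owners:
            owners.append(team)
    return owners


owners = suggest_owners(["src/parser/a.py", "src/parser/b.py", "docs/x.md"])
```

The agent can post `owners` as a suggestion first, and only set assignees directly once the team trusts the mapping.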

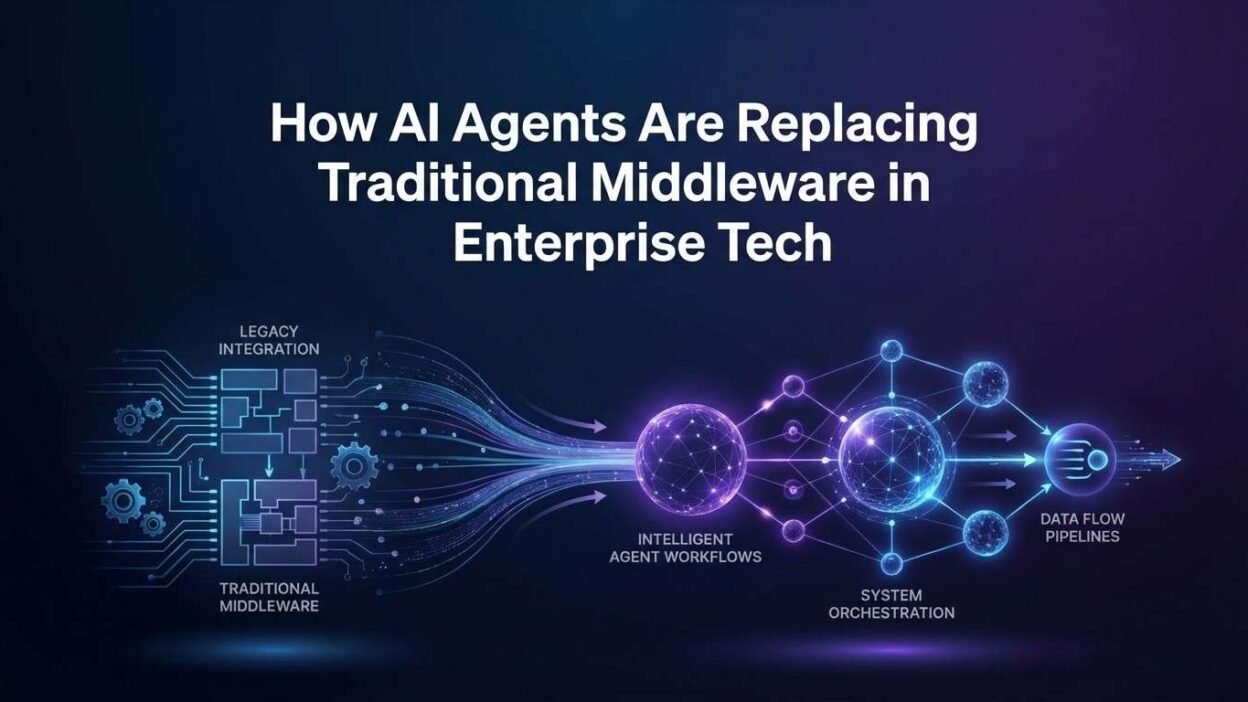

Architecture for AI-driven bug triage in GitHub

A clear architecture keeps your triage system stable. You can think in three layers. One layer listens to GitHub. One layer hosts AI agents. One layer connects to your code and data sources.

The GitHub layer receives events. New issues and comments trigger webhooks. A small service receives those payloads. It validates them. It filters by repo. It routes them to the agent layer. This piece stays simple. It holds no complex logic.
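
The validation and filtering steps might look like the sketch below. GitHub signs webhook payloads with HMAC-SHA256 and sends the result in the `X-Hub-Signature-256` header, prefixed with `sha256=`. The function names and the allow-list of repos are assumptions made for illustration.

```python
import hashlib
import hmac


def is_valid_signature(secret: bytes, payload: bytes, signature_header: str) -> bool:
    """Check the X-Hub-Signature-256 header against the raw request body."""
    if not signature_header.startswith("sha256="):
        return False
    expected = "sha256=" + hmac.new(secret, payload, hashlib.sha256).hexdigest()
    # compare_digest runs in constant time to avoid timing leaks.
    return hmac.compare_digest(expected.encode(), signature_header.encode())


def should_route(event_name: str, payload: dict, allowed_repos: set[str]) -> bool:
    """Forward only issue and issue-comment events from repos we triage."""
    repo = payload.get("repository", {}).get("full_name")
    return event_name in {"issues", "issue_comment"} and repo in allowed_repos
```

The event name comes from the `X-GitHub-Event` header. Anything that fails either check gets dropped before it reaches the agent layer, which keeps this service small.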

The agent layer holds your AI agents for automated bug triaging. It runs models. It orchestrates tasks. It calls tools. It keeps state when needed. Each agent follows a clear role. One agent labels. One agent ranks severity. One agent hunts duplicates. A coordinator service manages which agent reacts to which event.
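
One way to picture the coordinator is a routing table keyed by event and action. The agents below are placeholder functions that return a string. In a real system each one would call a model and tools. All names here are hypothetical.

```python
from typing import Callable

Agent = Callable[[dict], str]


def label_agent(payload: dict) -> str:
    return f"label:{payload['issue']['number']}"


def severity_agent(payload: dict) -> str:
    return f"severity:{payload['issue']['number']}"


def duplicate_agent(payload: dict) -> str:
    return f"duplicates:{payload['issue']['number']}"


# Which agents react to which (event, action) pair.
ROUTES: dict[tuple[str, str], list[Agent]] = {
    ("issues", "opened"): [label_agent, severity_agent, duplicate_agent],
    ("issues", "edited"): [label_agent],
    ("issue_comment", "created"): [severity_agent],
}


def dispatch(event_name: str, payload: dict) -> list[str]:
    """Run every agent registered for this event and collect the results."""
    agents = ROUTES.get((event_name, payload.get("action", "")), [])
    return [agent(payload) for agent in agents]


results = dispatch("issues", {"action": "opened", "issue": {"number": 42}})
```

Keeping the routes in one table makes it easy to see, and to audit, which agent acts on which event.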

The data layer feeds context. It connects to GitHub APIs for issues and pull requests. It connects to your code index. It connects to logs and metrics if you allow that. It may connect to internal documentation. Agents call this layer when they need more details. They do not store raw secrets. They request scoped views on demand.

You can deploy this system in your cloud. You can run it close to your CI and observability stack. You keep logs for all actions. You keep a trail of suggestions and overrides. These logs help you improve your AI agents for automated bug triaging over time.

Designing prompts and tools for bug triage agents

Strong prompt design steers each agent. It explains the job clearly. It defines inputs. It defines outputs. It defines limits.

You describe the triage context in simple language. You mention the tech stack. You mention the main subsystems. You list label categories. You explain severity rules. You share examples of good labels. You share examples of bad labels. The agent learns the tone and terms.

You also give agents tools. These tools fetch extra context. One tool gets file contents. One tool gets past issues. One tool gets code owners. One tool gets logs. Each call has strict input types. Each call returns structured data. AI agents for automated bug triaging use these tools instead of guessing.
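
As a rough illustration of strict inputs and structured outputs, the sketch below defines one tool with a validated query type and a typed result. The class names, the limit range, and the in-memory `store` are assumptions. A real tool would query the GitHub API or a search index instead.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class PastIssuesQuery:
    """Input for the past-issues tool; rejects malformed requests early."""
    query: str
    limit: int = 5

    def __post_init__(self) -> None:
        if not self.query.strip():
            raise ValueError("query must not be empty")
        if not 1 <= self.limit <= 20:
            raise ValueError("limit must be between 1 and 20")


@dataclass(frozen=True)
class IssueSummary:
    """Structured result the agent receives instead of free text."""
    number: int
    title: str
    labels: tuple[str, ...]


def get_past_issues(query: PastIssuesQuery, store: list[IssueSummary]) -> list[IssueSummary]:
    """Return issues whose title contains the query text, up to the limit."""
    needle = query.query.lower()
    hits = [issue for issue in store if needle in issue.title.lower()]
    return hits[: query.limit]


store = [
    IssueSummary(1, "Parser crash on empty config", ("bug",)),
    IssueSummary(2, "Update install docs", ("docs",)),
]
hits = get_past_issues(PastIssuesQuery("parser"), store)
```

Because invalid input raises before any lookup happens, a confused agent gets a clear error instead of a silent empty result.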

The prompt should stress safety. You instruct the agent to ask for more data when things feel unclear. You instruct it to avoid firm claims on security topics without proof. You ask it to mark uncertainty. You remind it of human oversight.

You can refine prompts through trials. You test with real issues. You see where the agent chose wrong labels. You adjust descriptions. You adjust examples. Over a few cycles, outputs get closer to your team style.

Training models on issue history and labels

Models learn best from your own history. Your repo holds thousands of examples. Each issue and pull request tells a story. Each label reflects a decision. Each assignee reflects ownership. This is rich training data.

You can export issue data from GitHub. You include titles. You include bodies. You include labels. You include state. You include linked pull requests. You feed this data into fine‑tuning jobs. You use it for embeddings and similarity search as well.
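
A small sketch of the export step: it flattens one item from the GitHub REST issues listing into a training record. The output field names are a choice made here, not a fixed schema. One detail worth knowing is that the issues listing also returns pull requests, which carry a `pull_request` key, so the record flags them.

```python
def to_record(item: dict) -> dict:
    """Flatten one GitHub issue object into the fields used for training."""
    return {
        "number": item["number"],
        "title": item["title"],
        "body": item.get("body") or "",  # body can be null in the API response
        "labels": [label["name"] for label in item.get("labels", [])],
        "state": item["state"],
        "is_pull_request": "pull_request" in item,
    }


record = to_record(
    {
        "number": 7,
        "title": "Crash on startup",
        "body": None,
        "labels": [{"name": "bug"}],
        "state": "open",
    }
)
```

The flag lets later steps decide whether to train on pull requests, keep them only as linked context, or skip them.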

For label prediction, you build a supervised dataset. Each record links text to a label set. You remove noisy or random labels. You focus on consistent ones. You may group rare labels under broader buckets. This keeps outputs stable.
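
The dataset step could be sketched like this. It folds labels seen fewer than `min_count` times into a single bucket, which is the simplest form of the grouping described above. The threshold and the `other` bucket name are assumptions. A real project might map rare labels to several broader categories instead.

```python
from collections import Counter


def build_label_dataset(
    issues: list[dict], min_count: int = 2, bucket: str = "other"
) -> list[dict]:
    """Pair issue text with its label set, folding rare labels into a bucket."""
    counts = Counter(label for issue in issues for label in issue["labels"])
    records = []
    for issue in issues:
        labels = sorted(
            {label if counts[label] >= min_count else bucket for label in issue["labels"]}
        )
        if labels:  # skip unlabeled issues; they carry no training signal
            records.append(
                {"text": f"{issue['title']}\n\n{issue['body']}", "labels": labels}
            )
    return records


dataset = build_label_dataset(
    [
        {"title": "Crash", "body": "Trace attached", "labels": ["bug"]},
        {"title": "Hang", "body": "No output", "labels": ["bug", "windows-arm"]},
        {"title": "Idea", "body": "", "labels": []},
    ]
)
```

In this sample, `windows-arm` appears once, so the second record ends up with the labels `bug` and `other`, and the unlabeled issue is dropped.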

For duplicate detection, you build pairs. You mark which pairs count as duplicates. You mark which pairs look similar but differ in root cause. You train similarity models with these examples. AI agents for automated bug triaging then use those models to rank links.
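
Pair building can be sketched as follows, assuming you already have groups of issues that maintainers closed as duplicates of each other, plus a hand-picked list of similar-looking issues with different root causes. Both inputs and the function name are hypothetical.

```python
from itertools import combinations


def build_pairs(
    duplicate_groups: list[list[int]],
    hard_negatives: list[tuple[int, int]],
) -> list[tuple[int, int, int]]:
    """Return (issue_a, issue_b, label) rows: 1 for duplicates, 0 for look-alikes."""
    pairs = []
    for group in duplicate_groups:
        # Every pair inside a duplicate group is a positive example.
        for a, b in combinations(sorted(group), 2):
            pairs.append((a, b, 1))
    positives = {(a, b) for a, b, _ in pairs}
    for a, b in hard_negatives:
        key = (min(a, b), max(a, b))
        if key not in positives:  # never label a known duplicate as negative
            pairs.append((key[0], key[1], 0))
    return pairs


pairs = build_pairs([[3, 1, 2]], [(5, 1), (2, 1)])
```

The look-alike pairs matter most, because they teach the model that shared wording alone does not make two bugs the same.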

You should also refresh data often. Teams create new labels. Ownership changes. Code evolves. Fresh data keeps models aligned with current practice. Monthly updates work for many repos. Some may need faster cycles.

Keeping humans in the loop

Automation should help maintainers, not replace them. Humans still own the repo. They still own quality. They still own releases. AI supports this work. It does not take control.

You can design a tiered trust system. At first, AI agents for automated bug triaging only suggest labels and assignees. They post comments with their reasoning. Maintainers accept or reject with simple reactions. You log those decisions as feedback.

Over time, you may allow automatic labels for low‑risk categories. For example, docs issues or typo reports. You still keep human review for security, data, and core logic bugs. You move slowly and measure results.
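
The tiers can be written down as a small policy function. This is a sketch under stated assumptions: the label names, the confidence score, and the 0.9 cutoff are placeholders that each team would set for itself.

```python
AUTO_APPLY_LABELS = {"docs", "typo"}             # low-risk categories
ALWAYS_REVIEW_LABELS = {"security", "data", "core"}  # humans always decide


def decide_action(labels: list[str], confidence: float, min_confidence: float = 0.9) -> str:
    """Return 'auto_apply' only for confident, low-risk suggestions."""
    label_set = set(labels)
    if label_set & ALWAYS_REVIEW_LABELS:
        return "suggest"
    if label_set and label_set <= AUTO_APPLY_LABELS and confidence >= min_confidence:
        return "auto_apply"
    return "suggest"
```

The default answer is always `suggest`. Automation has to earn its way into the first branch, one label category at a time.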

You can also create review dashboards. They group issues by agent suggestions. Maintainers scan batches. They correct patterns. They flag bad calls. Product owners and triage captains see trends here. They guide improvements.

Metrics that matter for AI-powered triage

You should track a few clear metrics. They show if AI agents for automated bug triaging help or harm. They guide tuning. They support roadmap talks.

Label accuracy stands first. You measure how often the agent suggests labels that humans keep. You count both exact matches and partial matches. You set baselines from old manual triage.
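
A minimal way to compute both numbers, assuming you log the suggested label set and the final human-approved set for each issue. Here a partial match means the two sets overlap without being identical. That definition is a choice made for this sketch.

```python
def label_accuracy(suggested: list[set[str]], final: list[set[str]]) -> dict[str, float]:
    """Share of issues with an exact label match and with a partial match."""
    if len(suggested) != len(final):
        raise ValueError("suggested and final must have the same length")
    total = len(suggested)
    if total == 0:
        return {"exact": 0.0, "partial": 0.0}
    exact = sum(1 for s, f in zip(suggested, final) if s == f)
    partial = sum(1 for s, f in zip(suggested, final) if s != f and s & f)
    return {"exact": exact / total, "partial": partial / total}


scores = label_accuracy(
    suggested=[{"bug"}, {"bug", "ui"}, {"docs"}, {"perf"}],
    final=[{"bug"}, {"bug"}, {"question"}, {"perf", "db"}],
)
```

In this sample one of four suggestions matches exactly and two overlap partially, so `scores` is `{"exact": 0.25, "partial": 0.5}`.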

Time to first triage comes next. You measure how long an issue waits for initial labels and an owner. An agent can cut this wait because it reacts on arrival. Maintainers then step in with context.
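
The measurement itself is simple arithmetic on timestamps. The sketch below assumes each record carries a `created_at` value plus a `first_triage_at` value that you derive yourself, for example from the first label or assignment event. That second field name is hypothetical.

```python
from datetime import datetime
from statistics import median
from typing import Optional


def parse_ts(value: str) -> datetime:
    """Parse an ISO 8601 timestamp such as 2024-01-01T10:00:00Z."""
    return datetime.fromisoformat(value.replace("Z", "+00:00"))


def hours_to_first_triage(created_at: str, first_triage_at: str) -> float:
    """Hours between issue creation and its first triage action."""
    delta = parse_ts(first_triage_at) - parse_ts(created_at)
    return delta.total_seconds() / 3600


def median_triage_hours(issues: list[dict]) -> Optional[float]:
    """Median wait across triaged issues; None if nothing was triaged yet."""
    waits = [
        hours_to_first_triage(issue["created_at"], issue["first_triage_at"])
        for issue in issues
        if issue.get("first_triage_at")
    ]
    return median(waits) if waits else None


median_hours = median_triage_hours(
    [
        {"created_at": "2024-01-01T10:00:00Z", "first_triage_at": "2024-01-01T12:00:00Z"},
        {"created_at": "2024-01-01T10:00:00Z", "first_triage_at": "2024-01-02T10:00:00Z"},
        {"created_at": "2024-01-01T10:00:00Z", "first_triage_at": "2024-01-01T10:30:00Z"},
        {"created_at": "2024-01-03T09:00:00Z", "first_triage_at": None},
    ]
)
```

The median is used here instead of the mean so that a few issues stuck for months do not hide the typical wait. Untriaged issues deserve their own count alongside this number.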

You also track reopen rates and misroutes. Reopens show deeper quality issues. Misroutes show gaps in ownership logic. Both numbers should drop after you tune agents.

Finally, you should watch satisfaction. Ask maintainers how they feel about the system. Check if they ignore suggestions. Check if they rely on them. Real adoption matters more than raw automation stats.

Security, privacy, and open source concerns

Large GitHub repos often hold sensitive knowledge. Even open source projects face security and privacy constraints. You must design AI flows with care.

You limit what data agents see. You redact secrets from logs. You mask tokens. You avoid sending raw credentials. You restrict access to private repos. You follow company rules and legal needs.
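
Redaction can start with a few regular expressions applied before any text reaches a model. The patterns below are illustrative assumptions, not a complete secret scanner: they cover GitHub-style token prefixes, AWS-style access key IDs, and simple `key=value` pairs.

```python
import re

# Illustrative patterns only; extend them for the secrets your logs contain.
TOKEN_PATTERNS = [
    re.compile(r"\bgh[pousr]_[A-Za-z0-9]{36,}\b"),  # GitHub-style tokens
    re.compile(r"\bAKIA[0-9A-Z]{16}\b"),            # AWS-style access key IDs
]
KEY_VALUE = re.compile(r"(?i)\b(password|secret|token|api[_-]?key)(\s*[=:]\s*)\S+")


def redact(text: str) -> str:
    """Replace likely secrets with a placeholder, keeping the key names."""
    for pattern in TOKEN_PATTERNS:
        text = pattern.sub("[REDACTED]", text)
    return KEY_VALUE.sub(r"\1\2[REDACTED]", text)


sample = "login failed password=hunter2 using ghp_" + "a" * 36
clean = redact(sample)
```

After this runs, `clean` reads `login failed password=[REDACTED] using [REDACTED]`. Regex redaction misses anything it has no pattern for, so it works best as one layer next to scoped access and careful log handling.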

If you use external models, you review their policies. You check data retention. You check training usage. You prefer options that respect your code and user data. You may host models yourself for strict projects.

Open source communities add one more angle. Contributors may worry about tracking. They may worry about heavy automation. You should share your plans. You should document AI agents for automated bug triaging in CONTRIBUTING guides. You should invite feedback and build trust.

FAQs on AI agents for GitHub bug triaging

Do AI agents replace human triagers?

No. They act as helpers. They handle repetitive parts like initial labels and basic routing. Humans still review and adjust. Humans keep final responsibility for decisions and releases. AI agents for automated bug triaging support their workflow.

How hard is it to add these agents to an existing repo?

Complexity depends on your stack. Basic setups use simple webhooks, a small service, and hosted models. Deeper setups add code indexing and custom models. Most teams can start small within a few sprints. They extend as they see value.

Can AI agents handle multi-language repos?

Generally, yes. Modern models handle many programming languages and frameworks. You should still provide examples from your stack. You should tune prompts with your own code and issues. This context helps AI agents for automated bug triaging perform better.

What about false positives in duplicate detection?

False links will appear. You handle them with review and feedback. Agents suggest duplicates. Humans confirm or reject. You use those decisions to improve similarity models. With enough feedback, false positives should drop to a level your team can accept.

Do we need to train our own model?

Not always. Many teams start with general models and good prompts. They only fine‑tune when scale or quality needs demand it. You can also train smaller models for label prediction, and keep generative models for explanations.

How do we measure success over time?

You track accuracy, triage speed, reopen rates, and maintainer feedback. You compare before and after automation. You review these numbers with engineering leaders. Clear improvement shows that AI agents for automated bug triaging add real value.

Conclusion

Large GitHub repositories need strong triage habits. Human focus alone does not scale. Backlogs grow. Important bugs hide. Engineers feel tired and overloaded. AI provides support. It reads issues. It spots patterns. It offers structure.

AI agents for automated bug triaging turn chaotic issue streams into organized queues. They propose labels. They find duplicates. They hint at owners. They do this work at any hour. They feed on real history. They improve with feedback.

You still keep humans in charge. Teams design prompts and tools. Teams guide training. Teams set rules. They review automation results. They approve real changes. AI stays in the assistant role.

If you start small and measure impact, you can grow this system with confidence. Begin with suggestions only. Watch behavior. Tune prompts and models. Expand to more repos and more labels. Over time, your AI agents for automated bug triaging will feel like part of the team. They will sit beside maintainers and help them protect quality at scale.