Introduction

TL;DR Most AI projects die between the demo and the deployment. A proof of concept impresses stakeholders in a boardroom. It runs smoothly on a curated dataset. It produces results that generate genuine excitement. Then the team tries to move it into production and everything changes.

Infrastructure breaks under real load. Data quality problems surface that never appeared in testing. Edge cases the PoC never encountered start causing failures daily. The model that worked perfectly on clean sample data behaves unpredictably on messy real-world inputs. Timelines stretch. Budgets expand. Confidence erodes.

Scaling AI from PoC to production automation is where most enterprise AI initiatives stall. Research consistently shows that more than 80% of AI projects never make it to full production deployment. The gap between a working prototype and a reliable production system is wider than most teams anticipate.

This blog covers that gap completely. You will learn why PoC environments create false confidence, what production-ready AI actually requires, and the exact steps to move your AI system from prototype to reliable automation at scale.

Every section delivers specific, practical guidance. No vague advice about “aligning stakeholders” or “embracing change.” Real engineering and operational decisions that determine whether your AI investment produces lasting value or ends up as another shelved project.

Scaling AI from PoC to production automation is achievable with the right approach. This guide shows you how.

Table of Contents

Why Most AI PoCs Fail to Reach Production

Understanding failure patterns protects your project from repeating them. The reasons AI PoCs stall at the production gate follow predictable patterns across industries and team sizes.

The Clean Data Illusion

A PoC uses carefully prepared data. Data scientists clean it, normalize it, and remove edge cases before training or testing. The model performs well because the data was selected to make it perform well. Production data is never that clean.

Real-world data arrives in inconsistent formats. Fields that were always populated in the sample dataset sometimes arrive empty in production. Values that fell within a clean range during testing sometimes arrive as outliers that break preprocessing pipelines. The model encounters inputs it was never trained to handle gracefully.

Scaling AI from PoC to production automation requires facing this data reality head-on. Production data pipelines need validation, cleaning logic, and graceful error handling built in from the start — not bolted on after the first production failure.

The Performance Cliff

A model that achieves 94% accuracy in testing sometimes drops to 78% in production. This performance cliff has multiple causes. Training data does not perfectly represent production data distribution. The evaluation metrics used during development do not fully capture the business impact of errors. Model behavior on rare but important edge cases gets averaged away in aggregate accuracy numbers.

Infrastructure Gaps

PoC environments run on developer laptops or simple cloud instances with generous manual oversight. Production environments need automated orchestration, load balancing, monitoring, and recovery. The infrastructure investment required to bridge that gap surprises teams that underestimated it during planning.

Organizational Resistance

Technical teams underestimate the organizational dimension of production deployment. End users resist AI systems that replace or change their workflows. IT teams raise security and compliance concerns. Leadership questions ROI before results materialize. These human factors delay production as much as technical ones.

What Production-Ready AI Actually Means

“Production-ready” gets used loosely. It means different things to different teams. A precise definition helps you build toward the right target rather than discovering gaps after launch.

Reliability at Scale

A production AI system runs reliably under real-world load. It handles volume spikes without degrading. It recovers automatically from transient failures. It maintains consistent performance over weeks and months, not just hours. A PoC that works for one user or one batch does not satisfy this requirement.

Scaling AI from PoC to production automation means designing for the load profile your system will actually experience — not the load profile convenient for testing.

Explainability and Auditability

Production AI systems in most business contexts need to explain their outputs. A model that classifies a lead as unqualified must be able to tell the sales rep why. A model that flags a transaction as fraudulent must produce a reason that a compliance officer can review. Black-box outputs acceptable in a PoC become compliance and trust problems in production.

Build explainability requirements into your production design from the beginning. Retrofitting explainability into an already-deployed system is significantly harder than designing for it upfront.

Monitoring and Drift Detection

Production models degrade over time. The world changes. Customer behavior shifts. Market conditions evolve. The data distribution the model trained on diverges from the data distribution it now receives. This phenomenon — model drift — silently erodes performance without triggering obvious errors.

Production-ready AI includes continuous monitoring of model performance metrics. Drift detection alerts fire before performance drops become noticeable to end users. Retraining pipelines exist to refresh model knowledge when drift exceeds acceptable thresholds.

Security and Access Control

A PoC often runs with broad data access and minimal security controls because convenience matters more than security during experimentation. Production systems handle sensitive data under real security requirements. Role-based access, data encryption, audit logging, and vulnerability management all apply. Production readiness requires meeting your organization’s security standards fully.

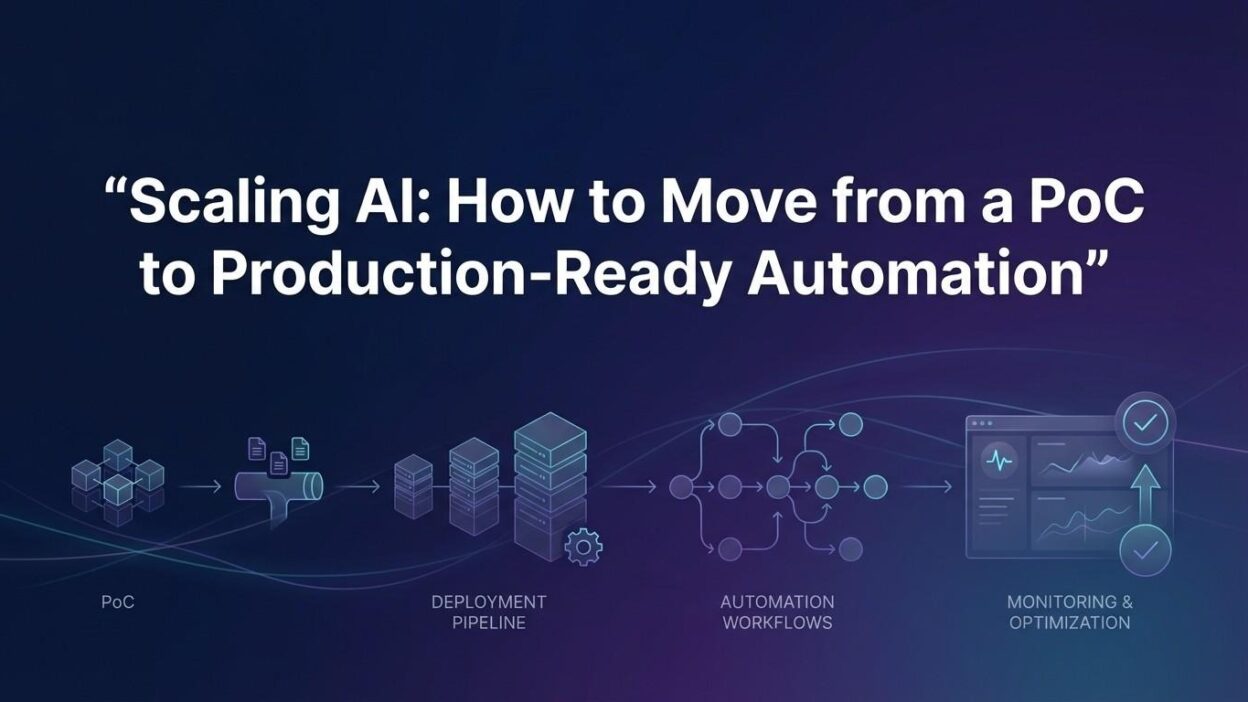

The Six Stages of Scaling AI from PoC to Production

Scaling AI from PoC to production automation follows a structured progression. Each stage builds the foundation the next stage requires. Rushing stages produces fragile systems that fail under real conditions.

Production Scoping and Requirements Definition

Start by redefining success for production. A PoC success metric — accuracy on a test set — does not translate directly to a production success metric. Define what the system must achieve in business terms. Cost per decision, time saved per week, error rate impact on downstream processes, and user adoption rate are production metrics. Build your production requirements document around these business outcomes.

Define non-functional requirements explicitly. Maximum acceptable latency. Minimum uptime target. Data freshness requirements. Supported concurrent users. These specifications drive every infrastructure and architecture decision that follows.

Data Pipeline Productionization

The data pipeline is the most common source of production failures. Build a production-grade data pipeline that validates incoming data, handles missing values, detects schema changes, and logs anomalies for review.

Implement schema validation at every ingestion point. Data that arrives in an unexpected format should trigger an alert and enter a quarantine queue rather than silently corrupting model inputs. Build idempotent processing logic so that retried jobs do not produce duplicate records or double-counted results.

Version your datasets. Know exactly which data trained which model version. This traceability is essential for debugging production issues and satisfying compliance audits.

Model Hardening and Edge Case Coverage

Take the model from your PoC and expose it to adversarial inputs. Run it against the messiest, most unusual real-world data you can collect. Document every failure mode. Fix the ones that represent realistic production scenarios. Build handling logic for the ones you cannot fix at the model level.

Scaling AI from PoC to production automation at this stage means stress-testing the model the way a QA team stress-tests software. Find the failures in a controlled environment before users find them in production.

Infrastructure Architecture for Production

Design your production infrastructure around your non-functional requirements. High-availability requirements need multi-region deployment and automatic failover. Low-latency requirements need model serving infrastructure optimized for response time. High-throughput requirements need batch processing infrastructure with horizontal scaling.

Containerize your model serving code using Docker. Deploy using Kubernetes or a managed container orchestration service. Use a model serving framework — TorchServe, BentoML, or Triton Inference Server — rather than building custom serving code. These frameworks handle the engineering complexity of production model serving so your team focuses on business logic.

MLOps Pipeline Implementation

MLOps is the operational backbone of production AI. An MLOps pipeline automates the repetitive work of maintaining a production model — retraining, evaluation, deployment, and monitoring.

Implement CI/CD for your models the same way software engineering teams implement CI/CD for their applications. A model update follows a defined pipeline: data validation, training run, evaluation against baseline, staged deployment, monitoring confirmation. No manual steps. No ad-hoc deployments that bypass testing.

Tools like MLflow, Weights & Biases, and Kubeflow provide the infrastructure for experiment tracking, model registry, and pipeline orchestration. Pick the tool that matches your team’s existing cloud platform and engineering culture.

Monitoring, Alerting, and Continuous Improvement

Production AI needs eyes on it at all times. Set up dashboards that display model performance metrics, prediction distributions, data quality indicators, and infrastructure health in one place.

Define alert thresholds for each metric. When accuracy drops below your acceptable floor, an alert fires and triggers investigation. When data quality scores decline, an alert fires before model performance degrades. When latency spikes above your SLA, an alert fires before users start complaining.

Build a feedback loop from production outputs back into your training process. User corrections, labeled edge cases, and newly encountered failure modes all become training data for the next model version. Scaling AI from PoC to production automation is not a one-time deployment. It is a continuous improvement cycle.

Building the Right Team Structure

Most PoC teams are small. A data scientist, a data engineer, and a product manager can build a compelling prototype. Production AI requires a broader set of skills working in coordination.

Roles Required for Production AI

A machine learning engineer translates the data scientist’s model into production-grade serving code. These are different skill sets. Data scientists optimize for model performance. ML engineers optimize for reliability, latency, and maintainability. Both roles are essential and often confused.

A data engineer owns the production data pipeline. This person builds the ingestion, validation, transformation, and storage systems that feed the model. Data engineering is distinct from data science and often underrepresented on PoC teams.

A platform or DevOps engineer owns the infrastructure. Container orchestration, CI/CD pipelines, monitoring systems, and security configurations all fall in this domain. Production AI infrastructure is meaningfully different from standard web application infrastructure. Platform engineers with ML system experience are valuable and uncommon.

Cross-Functional Integration

Scaling AI from PoC to production automation requires integrating AI capabilities into existing business systems. That integration touches every team whose workflows change when the AI system goes live. Product managers coordinate requirement gathering across these stakeholders. They translate business needs into engineering specifications.

Legal, compliance, and security teams review production AI deployments in regulated industries. Engage these teams early. Discovering a compliance blocker after building the production system wastes months of work. Discovering it during design adds a few days of requirements gathering.

End users are often overlooked during technical implementation. The people who will interact with the AI system’s outputs daily shape whether adoption succeeds or fails. Include them in UAT testing. Act on their feedback before launch.

Common Technical Pitfalls in Production AI Deployments

Scaling AI from PoC to production automation surfaces technical issues that PoC environments never reveal. Knowing these pitfalls in advance prevents costly delays.

Training-Serving Skew

Training-serving skew happens when the features the model uses during training differ subtly from the features it receives during serving. A feature calculated one way during training gets calculated slightly differently in the production pipeline. Model accuracy degrades in ways that are difficult to diagnose.

Prevent this by using the same feature computation code in both training and serving. Do not allow separate implementations of the same feature calculation to exist in different parts of the codebase. A shared feature store like Feast or Tecton enforces this consistency automatically.

Feedback Loop Contamination

When a production AI model influences the data it is later trained on, contamination occurs. A recommendation model promotes certain items. Users click those items more. The next training run learns that those items are more popular — because the model made them appear so. Performance metrics look good but actual recommendation quality may degrade.

Design your data collection and training pipeline to detect and correct for these feedback loops before they distort model quality silently.

Dependency Management Failures

A production model depends on specific versions of libraries, frameworks, and data sources. When those dependencies update without explicit management, model behavior changes unexpectedly. Pin your dependencies. Test dependency updates explicitly before promoting them to production. Version your model artifacts alongside the dependencies used to produce them.

Underestimating Inference Cost

PoC teams rarely calculate the cost of running inference at production scale. A model that costs $0.10 per inference looks cheap in a demo with 100 examples. At 100,000 daily inferences, that becomes $10,000 per day. Model optimization — quantization, distillation, caching — becomes a financial necessity at scale.

Governance, Ethics, and Compliance in Production AI

Production AI systems carry responsibilities that PoCs do not. Governance and compliance requirements become real the moment a model starts making decisions that affect real people.

Bias Auditing Before Launch

Run systematic bias audits before deploying any model that makes decisions affecting people. Test model performance across demographic subgroups. Identify disparate impact — situations where the model performs significantly worse for one group than another. Address identified biases before launch, not after a public incident forces the issue.

Scaling AI from PoC to production automation in regulated industries requires documented evidence that bias auditing occurred. Build that documentation into your pre-launch checklist.

Data Lineage and Compliance Documentation

Production AI in finance, healthcare, and legal sectors requires detailed documentation of data provenance. Know exactly where every piece of training data came from. Know what permissions cover its use. Know how long you can retain it under applicable regulations.

Data lineage tools like Apache Atlas or commercial alternatives track this documentation automatically as data flows through your pipeline. Manual documentation falls out of date and creates compliance gaps.

Model Cards and Decision Documentation

Write a model card for every production model. A model card documents the model’s intended use, performance characteristics, known limitations, and evaluation methodology. This documentation helps downstream teams use the model appropriately. It also creates an audit trail that regulators and internal governance teams can review.

Measuring Success After Production Deployment

Production deployment is not the finish line. It is the starting line for measuring real business impact. Scaling AI from PoC to production automation succeeds when measurable business outcomes improve.

Technical Performance Metrics

Track model accuracy, precision, recall, and F1 score continuously in production. Set baseline values from your pre-production evaluation. Treat deviations from baseline as incidents requiring investigation. Track latency at the 50th, 95th, and 99th percentiles. The average latency hides the tail latency that your slowest users experience.

Monitor data quality scores for your input pipeline. Drops in data quality often precede drops in model accuracy by days or weeks. Catching data quality issues early prevents performance degradation before users notice.

Business Impact Metrics

Connect model performance to business outcomes explicitly. If your AI system qualifies leads, track whether qualified leads convert at higher rates than before. If your AI system detects fraud, track whether financial losses from fraud decline. If your AI system automates document processing, track whether processing time and cost per document improve.

These business metrics justify continued investment in your AI system. They also surface situations where model performance looks technically acceptable but business outcomes are not improving — a signal that the model is solving the wrong problem or that human processes around the model need redesign.

Frequently Asked Questions

What is the biggest challenge in scaling AI from PoC to production automation?

Data quality and pipeline reliability cause the most production failures. A PoC works on clean, carefully prepared data. Production systems receive messy, inconsistent, real-world data that no test environment fully replicates. Building data validation, cleaning logic, and anomaly detection into the production pipeline is the single most impactful investment teams can make during the transition.

How long does it take to move from PoC to production?

Simple AI automation systems with narrow scope take three to six months from PoC to stable production deployment. Complex multi-model systems with significant integration requirements take six to eighteen months. Teams that underestimate the infrastructure, MLOps, and organizational work consistently overshoot their original timelines by two to three times.

What is MLOps and why does it matter for production AI?

MLOps applies software engineering best practices — version control, automated testing, CI/CD pipelines, monitoring — to machine learning systems. It matters because production AI models need to be updated, monitored, and maintained continuously. Without MLOps discipline, production models degrade silently, deployments become risky manual events, and teams spend more time firefighting than improving. Scaling AI from PoC to production automation without MLOps creates technical debt that slows every future improvement.

How do you prevent model drift in production?

Monitor the statistical distribution of your model’s inputs and outputs continuously. Compare current distributions to the training data distribution. When the gap exceeds a defined threshold, trigger a retraining run using more recent data. Schedule periodic retraining even when drift alerts have not fired — some drift accumulates slowly and metrics-based detection lags behind real-world degradation.

When should you consider rebuilding rather than scaling a PoC?

Rebuild when the PoC architecture makes production requirements structurally impossible to achieve. If the PoC uses a synchronous processing model and production requires real-time streaming, the architecture needs replacement. If the PoC used a model type that cannot meet production latency requirements, rebuild around a more efficient model class. Rebuilding feels costly but is almost always faster than trying to retrofit incompatible architectural constraints.

What infrastructure does production AI require that a PoC does not?

Production AI needs containerized model serving, automated CI/CD pipelines, distributed data processing infrastructure, real-time monitoring and alerting, a model registry for version management, a feature store for consistent feature computation, backup and recovery systems, and security controls including encryption and access logging. Most PoCs run on a laptop or a single cloud instance with none of these components in place.

Read More:-Groq vs NVIDIA: Comparing LPU vs GPU for Ultra-Fast AI Inference

Conclusion

Scaling AI from PoC to production automation is the hardest part of any enterprise AI initiative. The technical complexity multiplies. The organizational challenges surface. The gap between what a prototype promised and what production requires becomes brutally clear.

Teams that succeed share a common approach. They treat the production build as a separate engineering project from the PoC. They invest in data pipeline reliability before model sophistication. They implement MLOps discipline from the first production deployment rather than as an afterthought. They measure business outcomes, not just model accuracy.

The six-stage framework in this guide gives you a structured path through that complexity. Define production requirements precisely. Harden your data pipeline. Stress-test your model against real-world edge cases. Build infrastructure around your non-functional requirements. Implement MLOps automation. Monitor everything continuously.

Scaling AI from PoC to production automation also requires organizational patience. Production AI does not deliver its full value on day one. It delivers compounding value as the feedback loop between production outputs and model training matures over months.

Organizations that commit to that timeline — and invest in the engineering discipline to execute it — consistently produce AI systems that transform their operations. The teams that rush from demo to deployment without the foundational work consistently join the 80% whose AI projects never reach production scale.

Build deliberately. Measure rigorously. Improve continuously. That formula works for every AI system, in every industry, at every scale.