Introduction

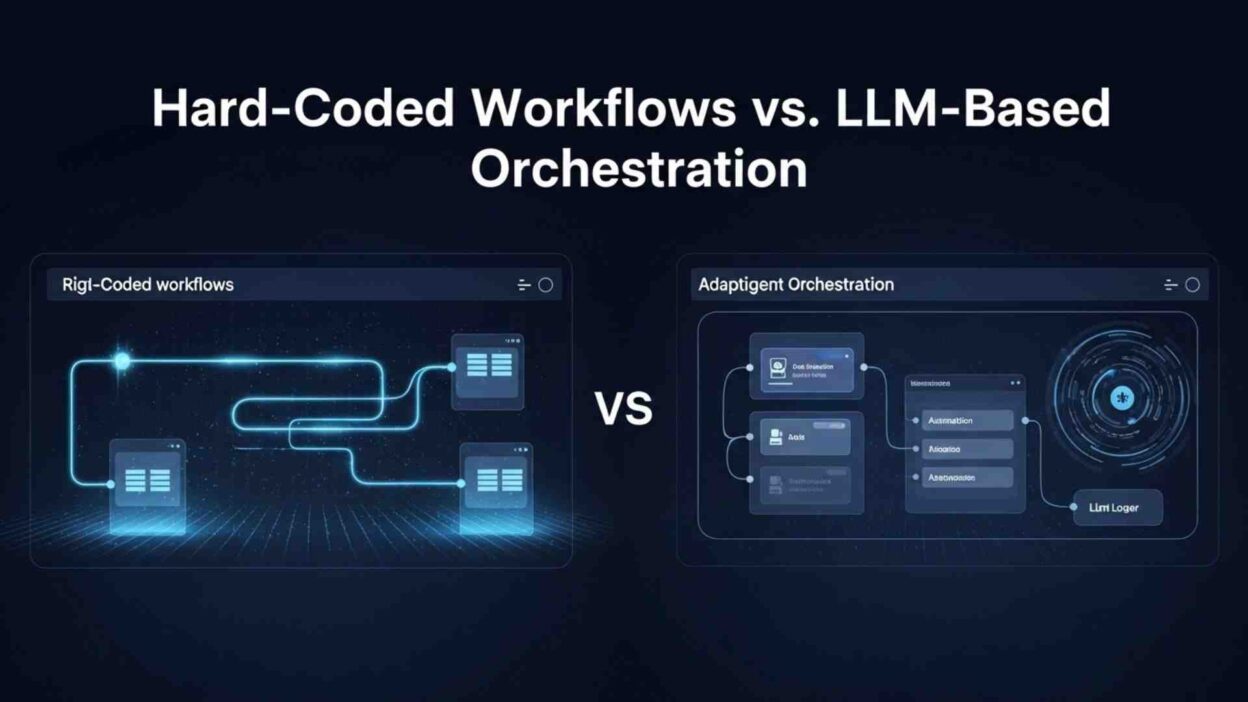

TL;DR Every software team building AI systems faces the same architectural fork. The first path: define every step explicitly. Write the logic. Hardcode the decision points. Control every branch. The second path: hand orchestration to a language model. Let it decide which steps to take, which tools to call, and how to sequence actions based on the situation at hand.

LLM-based orchestration vs hard-coded workflows is not a debate with a clean winner. Both approaches solve real problems. Both create real problems. The engineering teams that understand this distinction deeply make better architectural decisions and build systems that perform reliably under real-world conditions.

This blog examines both approaches from every angle. It covers what each does well, where each fails, how to choose between them, and how leading engineering teams combine both in production systems that deliver consistent results at scale.

Understanding Hard-Coded Workflows

What Hard-Coded Workflows Actually Are

A hard-coded workflow defines every step of a process explicitly in code. Each action has a fixed place in the sequence. Decision branches have explicit conditions. The system follows a predetermined path from input to output without any adaptive reasoning about what to do next. The logic lives in the code. The code runs the logic.

Traditional software automation exemplifies this pattern. An order processing system receives an order, validates the customer, checks inventory, calculates shipping, charges the payment method, and sends a confirmation email. Every step is defined. Every branch condition is written. The system never decides what step comes next. The engineer decided that before deployment.
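
The order flow described above can be sketched in a few lines of Python. This is only an illustration: the `Order` fields, the in-memory `KNOWN_CUSTOMERS` and `INVENTORY` stand-ins, and the shipping rule are all assumptions made for the example, not part of any real system.

```python
from dataclasses import dataclass


@dataclass
class Order:
    customer_id: str
    sku: str
    quantity: int
    unit_price: float


# Hypothetical in-memory stand-ins for a customer service and a stock service.
KNOWN_CUSTOMERS = {"c-1"}
INVENTORY = {"widget": 5}


class WorkflowError(Exception):
    """Raised when an order fails one of the fixed checks."""


def process_order(order: Order) -> dict:
    # Step 1: validate the customer.
    if order.customer_id not in KNOWN_CUSTOMERS:
        raise WorkflowError("unknown customer")
    # Step 2: check inventory.
    if INVENTORY.get(order.sku, 0) < order.quantity:
        raise WorkflowError("insufficient inventory")
    # Step 3: calculate shipping (assumed rule: free at 50 or more).
    subtotal = order.quantity * order.unit_price
    shipping = 0.0 if subtotal >= 50 else 5.0
    # Step 4: charge the payment method (stubbed: we only compute the total).
    total = subtotal + shipping
    # Step 5: return the data a confirmation email would be built from.
    return {"status": "confirmed", "total": total, "shipping": shipping}
```

Every step and every branch is visible in the function body, which is exactly what makes this style easy to test and audit.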

Comparisons between LLM-based orchestration and hard-coded workflows often undervalue the hard-coded approach. Hard-coded workflows are deterministic. Given the same input and the same external state, they always produce the same output through the same path. This predictability makes them easy to test. Unit tests cover each branch. Integration tests verify the full path. A passing test suite gives the engineering team high confidence the system will behave as expected in production.

Hard-coded workflows are also auditable. A compliance team can review the code and understand exactly what the system does and does not do. Every decision point is visible and documented in the logic itself. Regulatory environments that require auditability often mandate this level of transparency. Paired with ordinary logging, a hard-coded workflow produces a complete, inspectable record of what happened and why.

Performance characteristics are predictable with hard-coded workflows. The latency profile is consistent because the same code executes the same steps every time. There are no inference calls to introduce variable latency and no model reasoning to add unpredictable processing time. Systems with strict latency requirements benefit from this consistency. Hard-coded workflows can execute in milliseconds when the logic is simple. In latency-critical applications, the hard-coded approach is frequently the better fit.

Where Hard-Coded Workflows Break Down

Hard-coded workflows fail when the real world deviates from the assumptions the engineer made when writing the code. Every conditional branch in a workflow represents a predicted scenario. The world contains scenarios that no engineer predicted. When an unpredicted scenario arrives, the hard-coded workflow has no graceful response. It either follows the closest matching branch incorrectly or fails with an error.

Maintenance burden accumulates as workflows grow complex. A simple workflow with ten steps and five branch conditions is easy to maintain. A workflow with one hundred steps and fifty branch conditions becomes a maze. Adding a new requirement means finding where the new branch belongs, ensuring it does not break existing paths, and updating tests across all affected paths. Teams that maintain large hard-coded workflow codebases describe the work as archaeology rather than engineering.

Edge cases multiply with the variety of inputs a workflow handles. Customer service workflows must handle every possible customer message type. Document processing workflows must handle every possible document format variation. Medical coding workflows must handle every possible clinical note structure. The combinatorial explosion of edge cases quickly exceeds what hard-coded logic can anticipate. This limitation shows most starkly in domains with high input variety.

Natural language inputs break hard-coded workflows entirely. A workflow designed to process structured form submissions fails when a user submits free-text instead. A workflow designed to handle specific command patterns fails when a user phrases a request differently than the engineer anticipated. Hard-coded workflows assume structured, predictable inputs. Natural language is neither.

Understanding LLM-Based Orchestration

How LLM Orchestration Actually Works

LLM-based orchestration puts a language model in the role of the orchestrator. Instead of following predetermined steps, the model reasons about what to do next at each decision point. It receives a description of the available tools, the current state, and the goal. It decides which tool to call, what arguments to pass, and what to do with the result. This reasoning loop continues until the model determines the goal is achieved.

The ReAct pattern, reasoning and acting, describes the most common LLM orchestration approach. The model generates a thought about the current state and what needs to happen next. It generates an action to take based on that thought. It observes the result of the action. It generates another thought incorporating the observation. It repeats this cycle until it reaches the goal or determines it cannot proceed.
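
The thought, action, observation cycle can be sketched as a short loop. To keep the example runnable without an API key, the model is replaced by `scripted_model`, a hypothetical stand-in that returns fixed decisions. The `lookup_order` tool, the dictionary shape of each step, and the `max_steps` limit are also assumptions made for illustration.

```python
from typing import Callable


def lookup_order(order_id: str) -> str:
    """Hypothetical tool: returns the status of an order."""
    return {"A1": "shipped"}.get(order_id, "not found")


TOOLS: dict[str, Callable[[str], str]] = {"lookup_order": lookup_order}


def scripted_model(history: list[str]) -> dict:
    """Stand-in for an LLM call: returns a thought plus an action or a final answer."""
    observations = [h for h in history if h.startswith("Observation:")]
    if not observations:
        return {"thought": "I need the order status.",
                "action": "lookup_order", "input": "A1"}
    status = observations[-1].split(": ", 1)[1]
    return {"thought": "I have the status.", "final": f"Order A1 is {status}."}


def react_loop(goal: str, model: Callable[[list[str]], dict], max_steps: int = 5) -> str:
    history = [f"Goal: {goal}"]
    for _ in range(max_steps):
        step = model(history)                      # thought (and a decision)
        history.append(f"Thought: {step['thought']}")
        if "final" in step:                        # the model says the goal is reached
            return step["final"]
        tool = TOOLS.get(step["action"])           # action
        if tool is None:
            observation = f"unknown tool {step['action']}"
        else:
            observation = tool(step["input"])
        history.append(f"Action: {step['action']}({step['input']})")
        history.append(f"Observation: {observation}")  # observation feeds the next thought
    return "Stopped: step limit reached."
```

The step limit is a design choice worth keeping in any real loop: without it, a model that never declares the goal reached would run, and bill, indefinitely.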

At the fundamental level, the difference between LLM-based orchestration and hard-coded workflows comes down to where the decision logic lives. In hard-coded workflows, decision logic lives in the code. In LLM orchestration, decision logic lives in the model’s reasoning at inference time. This shift has profound implications for how systems handle novel situations.

When an LLM orchestrator encounters a situation no engineer anticipated, it reasons about the situation using its training knowledge and context. It might call an unexpected combination of tools. It might request clarification from the user. It might recognize that the goal cannot be achieved with available tools and explain why. This adaptive behavior handles the long tail of edge cases that hard-coded workflows cannot reach.

Tool use is central to LLM orchestration. The model has access to a defined set of tools, each with a name, description, and parameter schema. The model selects which tool to call based on its reasoning about what the situation requires. Tool calls execute against real systems: databases, APIs, file systems, and external services. The results return to the model as context for the next reasoning step. In tool-rich environments, an LLM orchestrator can combine tools in ways the original designer never anticipated.
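
A tool definition with a name, description, and parameter schema might look like the sketch below. The `Tool` dataclass, the `get_weather` tool, and the shape of the `call` dictionary are hypothetical. Real model APIs usually describe parameters with JSON Schema rather than Python types, so treat this as a simplified illustration of the idea.

```python
from dataclasses import dataclass
from typing import Any, Callable


@dataclass
class Tool:
    name: str
    description: str              # shown to the model so it can choose a tool
    parameters: dict[str, type]   # parameter name -> expected Python type
    func: Callable[..., Any]


def get_weather(city: str) -> str:
    """Hypothetical tool body; a real one would call an external service."""
    return f"Sunny in {city}"


REGISTRY = {
    tool.name: tool
    for tool in [
        Tool("get_weather", "Return the weather for a city.", {"city": str}, get_weather),
    ]
}


def dispatch(call: dict) -> Any:
    """Validate a model-proposed tool call against the schema, then run it."""
    tool = REGISTRY.get(call.get("name"))
    if tool is None:
        raise ValueError(f"unknown tool: {call.get('name')!r}")
    args = call.get("arguments", {})
    if set(args) != set(tool.parameters):
        raise ValueError(f"expected arguments {sorted(tool.parameters)}")
    for key, expected in tool.parameters.items():
        if not isinstance(args[key], expected):
            raise TypeError(f"argument {key!r} must be {expected.__name__}")
    return tool.func(**args)
```

Validating arguments before execution matters because the model's output is only a proposal; the dispatcher is the last point where a malformed call can be rejected cheaply.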

Multi-agent orchestration extends this pattern. Multiple LLM agents each handle a domain of responsibility. An orchestrator agent decomposes a complex goal into subtasks. Specialist agents handle each subtask. Results flow back to the orchestrator for integration. This architecture handles problems that no single agent could solve effectively, and it enables qualitatively different problem-solving capabilities than a fixed workflow offers.

The Failure Modes Unique to LLM Orchestration

LLM orchestration introduces failure modes that hard-coded workflows never exhibit. Understanding these failure modes is essential for any team considering this architectural approach.

Non-determinism is the most fundamental challenge. The same input can produce different outputs across different runs. The model might call different tools in different orders and reach different conclusions. This variability makes testing fundamentally harder. A test suite that passes one hundred times does not guarantee the system behaves correctly on run one hundred and one. On testability, hard-coded approaches almost always come out ahead.

Reasoning errors compound through multi-step workflows. An early incorrect inference leads the model to call the wrong tool. The wrong tool returns unexpected output. The model interprets the unexpected output incorrectly. Each step builds on the previous error. By step five, the workflow is entirely off course. Hard-coded workflows fail at specific defined points. LLM orchestration can fail in cascading ways that are harder to diagnose.

Prompt injection is a security concern specific to LLM orchestration. Malicious content in tool outputs or user inputs can manipulate the model’s reasoning. An attacker who controls data that the model reads can embed instructions that redirect the model’s actions. A hard-coded workflow ignores irrelevant content and follows its predefined logic. An LLM orchestrator is susceptible to content that masquerades as instructions.

Cost and latency scale with reasoning complexity. Each reasoning step requires an inference call. A workflow that requires ten reasoning steps costs roughly ten times as much as a workflow that requires one, and often more because each call typically resends the accumulated context. Latency accumulates across sequential reasoning steps. For simple, repetitive tasks in cost-sensitive, high-volume applications, LLM orchestration is frequently economically impractical.

Choosing the Right Approach for Your Use Case

Decision Criteria That Actually Matter

Choosing between LLM-based orchestration vs hard-coded workflows requires honest analysis of the specific application requirements. Generic advice about which approach is better misses the point. The right choice depends on the nature of the task.

Input variety is the first criterion. Tasks with highly structured, predictable inputs suit hard-coded workflows well. An invoice processing system that always receives PDFs in one of three known formats has predictable input variety. A customer support system that receives free-text messages from millions of customers in hundreds of languages has extreme input variety. LLM-based orchestration handles high input variety far better than hard-coded workflows.

Reasoning complexity is the second criterion. Tasks that require simple, conditional logic suit hard-coded workflows. If the order total exceeds five hundred dollars, apply the premium shipping discount. Tasks that require nuanced judgment about ambiguous situations suit LLM orchestration. Should this customer complaint be escalated to a senior agent, handled with an automated response, or routed to a specialist team? This judgment depends on dozens of factors that hard-coded logic cannot enumerate.

Consistency requirements are the third criterion. Tasks where the same input must always produce the same output require hard-coded workflows. Financial calculations, regulatory determinations, and safety-critical decisions cannot tolerate non-determinism. Tasks where adaptive, situation-specific responses add value suit LLM orchestration. Creative writing assistance, research synthesis, and complex problem-solving benefit from the model’s flexible reasoning.

Frequency and volume also matter. A task that runs a billion times per day needs the cost efficiency and speed of hard-coded execution. A task that runs a thousand times per day with high per-task value can absorb LLM inference costs. Volume shapes the economics of the choice significantly.

The Hybrid Architecture That Most Production Systems Use

Most production AI systems that handle complex tasks use both approaches simultaneously. In practice, choosing between LLM-based orchestration and hard-coded workflows is not a binary choice. It is an architectural decision about which layer handles which responsibility.

The common hybrid pattern uses hard-coded workflows for the outer structure and LLM orchestration for the inner reasoning. A customer support system defines hard-coded workflow stages: intake, classification, routing, resolution, and closure. Within the resolution stage, an LLM agent handles the nuanced reasoning about how to address the specific customer’s issue. The outer workflow ensures every ticket goes through the right stages. The inner LLM ensures each resolution is appropriate for the specific situation.
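
A minimal sketch of this outer-workflow, inner-reasoning split is shown below. The stage names come from the paragraph above. The `classify` rule, the queue naming, and the injected `resolve` callable (which stands in for an LLM agent) are assumptions made for the example.

```python
from typing import Callable


def classify(ticket: dict) -> str:
    """Hard-coded classification rule (assumed for the example)."""
    return "billing" if "invoice" in ticket["message"].lower() else "general"


def run_ticket(ticket: dict, resolve: Callable[[dict], str]) -> dict:
    """Fixed outer workflow; only the resolution stage is delegated to `resolve`."""
    stages = []
    ticket = dict(ticket)                    # intake: copy the incoming ticket
    stages.append("intake")
    ticket["category"] = classify(ticket)    # classification: deterministic rule
    stages.append("classification")
    ticket["queue"] = f"{ticket['category']}-queue"   # routing: deterministic
    stages.append("routing")
    ticket["reply"] = resolve(ticket)        # resolution: the LLM agent's job
    stages.append("resolution")
    ticket["status"] = "closed"              # closure: deterministic
    stages.append("closure")
    ticket["stages"] = stages
    return ticket
```

Because `resolve` is passed in, the outer workflow can be tested with a trivial stub, and the stage order stays fixed no matter what the model returns.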

Another hybrid pattern uses LLM orchestration for planning and hard-coded execution for action. The LLM agent reasons about what steps to take and generates a plan. A hard-coded execution engine validates the plan against safety rules and executes the approved steps deterministically. This pattern captures LLM flexibility in planning while maintaining hard-coded control over execution. The split between planning and execution often resolves to this hybrid naturally.
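
The plan-then-execute pattern can be sketched as a validator plus a deterministic executor. The plan format (a list of dictionaries), the allowed actions, and the refund limit below are hypothetical, and the "execution" only records what it would do.

```python
ALLOWED_ACTIONS = {"refund", "send_email"}   # assumed safety rule: whitelist of actions
MAX_REFUND = 100.0                           # assumed safety rule: refund ceiling


def validate_plan(plan: list[dict]) -> list[str]:
    """Return a list of rule violations; an empty list means the plan is approved."""
    errors = []
    for i, step in enumerate(plan):
        action = step.get("action")
        if action not in ALLOWED_ACTIONS:
            errors.append(f"step {i}: action {action!r} is not allowed")
        elif action == "refund":
            amount = step.get("amount")
            if not isinstance(amount, (int, float)) or not 0 < amount <= MAX_REFUND:
                errors.append(f"step {i}: refund amount {amount!r} is out of bounds")
        elif action == "send_email" and "to" not in step:
            errors.append(f"step {i}: send_email needs a 'to' field")
    return errors


def execute_plan(plan: list[dict]) -> list[str]:
    """Run an approved plan step by step; reject the whole plan on any violation."""
    errors = validate_plan(plan)
    if errors:
        raise ValueError("; ".join(errors))
    log = []
    for step in plan:
        if step["action"] == "refund":
            log.append(f"refunded {step['amount']:.2f}")
        else:  # send_email, the only other allowed action
            log.append(f"emailed {step['to']}")
    return log
```

Rejecting the whole plan on the first violation, rather than skipping the bad step, is a deliberate choice here: a partially executed plan may leave the system in a state the model never reasoned about.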

Guardrails implemented as hard-coded rules wrap LLM orchestration in production systems. The LLM reasons freely within defined boundaries. The guardrails catch outputs that violate business rules, safety requirements, or quality standards before they reach users or trigger actions. A content moderation guardrail rejects LLM-generated responses that contain prohibited content regardless of the model’s reasoning. A spending guardrail blocks the LLM from authorizing transactions above a defined threshold. Hard-coded guardrails make LLM orchestration safe for production deployment.
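
The two guardrails mentioned above can be sketched as plain functions that run after the model has produced its output. The prohibited phrases, the fallback message, and the spending limit are placeholders chosen for the example. A production moderation check would be far more thorough than substring matching.

```python
# Hypothetical policy values, chosen only for illustration.
PROHIBITED_TERMS = ("guaranteed refund", "legal advice")
SPEND_LIMIT = 500.00
FALLBACK = "I need to hand this over to a human agent."


def guard_response(text: str) -> str:
    """Content guardrail: replace a model response that contains a prohibited phrase."""
    lowered = text.lower()
    if any(term in lowered for term in PROHIBITED_TERMS):
        return FALLBACK
    return text


def guard_transaction(amount: float) -> bool:
    """Spending guardrail: allow only positive amounts up to the limit."""
    return 0 < amount <= SPEND_LIMIT
```

Neither function looks at the model's reasoning. That is the point: the check holds regardless of how the model arrived at its output.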

Testing and Observability for Both Approaches

Testing Hard-Coded Workflows

Testing hard-coded workflows follows established software engineering practices. Unit tests cover individual functions and conditional branches. Integration tests verify that workflow stages connect correctly. End-to-end tests validate the complete flow from input to output. A comprehensive test suite gives high confidence that the workflow behaves as designed.

Branch coverage metrics measure test quality for hard-coded workflows. If a workflow has fifty conditional branches and tests cover forty-five of them, the team knows they have gaps in five specific areas. They can prioritize closing those gaps. Coverage tools make this analysis automated and continuous. On test coverage visibility, hard-coded approaches have a clear advantage.

Mutation testing strengthens hard-coded workflow test suites. The testing framework introduces small changes to the code and verifies that tests catch the changes. If a mutated condition passes the test suite, the test suite is not comprehensive enough to catch that class of error. Mutation testing identifies weak areas in the test suite and guides improvement. This disciplined testing approach makes hard-coded workflow behavior highly reliable.
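
The idea can be shown by hand with one function and one mutant. Real mutation testing tools generate mutants automatically; here the mutant is written out manually, and the function names and the five-hundred threshold are assumptions made for the example.

```python
def premium_discount(total: float) -> bool:
    """Original rule: the discount applies when the total exceeds 500."""
    return total > 500


def mutant(total: float) -> bool:
    """Mutated rule: '>' changed to '>='."""
    return total >= 500


def weak_suite(fn) -> bool:
    """Passes for any implementation that gets 600 and 100 right."""
    return fn(600) is True and fn(100) is False


def strong_suite(fn) -> bool:
    """Adds the boundary case, which distinguishes '>' from '>='."""
    return weak_suite(fn) and fn(500) is False
```

The weak suite passes for both the original and the mutant, so the mutant survives and reveals a gap. The strong suite passes for the original and fails for the mutant, so the mutant is killed.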

Testing and Monitoring LLM Orchestration Systems

Testing LLM orchestration requires different approaches because traditional unit tests, which assert one exact output per input, do not fit non-deterministic model behavior. The deterministic parts of the system, such as tool implementations and guardrails, can still be unit tested. The model’s decisions need testing methodologies designed specifically for language model behavior.

Evaluation datasets replace unit tests for LLM orchestration. A dataset of representative inputs with expected outputs measures how well the orchestrator handles each case. Automated evaluation metrics score responses against expected outputs. Human evaluators rate response quality on dimensions that automated metrics miss. Building a high-quality evaluation dataset requires significant investment but produces meaningful quality measurements.
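
A very small evaluation harness might look like the sketch below. The dataset, the expected tool names, and `keyword_router` (a stand-in for the orchestrator's tool choice) are all invented for the example. A real evaluation set would be much larger and would usually score more than the first tool selected.

```python
from typing import Callable

# Hypothetical evaluation cases: an input and the tool we expect to be chosen.
EVAL_SET = [
    {"input": "Where is my order A1?", "expected_tool": "lookup_order"},
    {"input": "I want my money back", "expected_tool": "refund"},
    {"input": "Cancel my subscription", "expected_tool": "cancel_subscription"},
]


def keyword_router(message: str) -> str:
    """Stand-in for the system under evaluation."""
    text = message.lower()
    if "order" in text:
        return "lookup_order"
    if "money back" in text or "refund" in text:
        return "refund"
    return "escalate"


def evaluate(system: Callable[[str], str], dataset: list[dict]) -> float:
    """Return the fraction of cases where the system picks the expected tool."""
    passed = sum(1 for case in dataset if system(case["input"]) == case["expected_tool"])
    return passed / len(dataset)
```

With this dataset the stand-in router gets two of three cases right. The score is a rate to track over time rather than a pass or fail verdict.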

Behavioral testing checks LLM orchestration outputs against defined properties rather than exact expected values. A behavioral test might verify that a customer response always contains an apology when the customer reports a serious problem, regardless of the exact wording. It might verify that a research synthesis always cites at least three sources. Behavioral tests capture the intent of the output specification without requiring exact string matching.
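
The two properties mentioned above can be written as simple predicates. The apology word list and the bracketed-number citation format (`[1]`, `[2]`) are assumptions. A real check might use a classifier or a second model instead of regular expressions.

```python
import re

# Assumed apology vocabulary; extend it to match your own tone guidelines.
APOLOGY = re.compile(r"\b(sorry|apologi[sz]e|apologies)\b", re.IGNORECASE)
# Assumed citation style: numbered references in square brackets.
CITATION = re.compile(r"\[(\d+)\]")


def contains_apology(reply: str) -> bool:
    """Property: the reply apologizes, whatever the exact wording."""
    return bool(APOLOGY.search(reply))


def cites_at_least(text: str, minimum: int) -> bool:
    """Property: the text cites at least `minimum` distinct numbered sources."""
    return len(set(CITATION.findall(text))) >= minimum
```

Both checks accept many different outputs, which is what lets them run against a non-deterministic system without flaking on wording changes.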

Observability is particularly critical for LLM orchestration in production. Logging every reasoning step, every tool call, every tool result, and every model response creates the audit trail needed to diagnose failures. LangSmith, Langfuse, and similar observability platforms capture this trace data for LLM orchestration systems. When a production LLM orchestrator produces an incorrect output, the trace shows exactly what reasoning path led to the error. LLM orchestration needs this trace-level observability to be as diagnosable in production as a hard-coded workflow.
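
To make the idea of a trace concrete, here is a minimal home-grown recorder. It is not the LangSmith or Langfuse API; the `Trace` and `TraceEvent` classes and the event kinds are hypothetical and only show the kind of data such platforms capture.

```python
import json
import time
from dataclasses import asdict, dataclass, field


@dataclass
class TraceEvent:
    kind: str          # e.g. "thought", "tool_call", "tool_result", "response"
    payload: dict
    timestamp: float = field(default_factory=time.time)


@dataclass
class Trace:
    run_id: str
    events: list = field(default_factory=list)

    def record(self, kind: str, **payload) -> None:
        """Append one event; call this at every reasoning step and tool call."""
        self.events.append(TraceEvent(kind, payload))

    def to_jsonl(self) -> str:
        """Serialize the run as one JSON object per line, ready for a log store."""
        return "\n".join(
            json.dumps({"run_id": self.run_id, **asdict(event)}) for event in self.events
        )
```

A run would call `trace.record("tool_call", name="lookup_order", arguments={"order_id": "A1"})` before executing the tool and record the result afterward, so the stored lines replay the run in order.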

Performance, Cost, and Scalability Considerations

Latency and Throughput Profiles

Hard-coded workflows execute with consistent, predictable latency. A simple workflow completes in milliseconds. A complex workflow with many steps might take seconds. The latency profile is stable because the same code always runs. Load testing produces reliable performance benchmarks that hold in production.

LLM orchestration latency varies with reasoning complexity. A simple task that the model resolves in one reasoning step returns quickly. A complex task requiring ten reasoning steps and six tool calls takes much longer. The latency distribution is wide. P50 latency might be three seconds. P99 latency might be thirty seconds for the same workflow with different inputs. User experience design must account for this variability.
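
Percentiles like P50 and P99 can be computed from recorded latencies with the nearest-rank method, sketched below. The sample values are invented to mirror the wide distribution described above, and the integer `pct` argument is a simplification.

```python
def percentile(samples: list[float], pct: int) -> float:
    """Nearest-rank percentile for an integer pct between 1 and 100."""
    if not samples:
        raise ValueError("samples must not be empty")
    ordered = sorted(samples)
    # ceil(pct * n / 100) using integer arithmetic to avoid float rounding.
    rank = max(1, (pct * len(ordered) + 99) // 100)
    return ordered[rank - 1]


# Hypothetical end-to-end latencies in seconds for ten runs of one workflow.
latencies = [1.2, 2.8, 3.0, 3.1, 3.3, 2.9, 3.4, 4.0, 9.5, 30.0]
p50 = percentile(latencies, 50)   # 3.1
p99 = percentile(latencies, 99)   # 30.0
```

With so few samples the P99 is simply the slowest run. In practice percentiles are computed over thousands of requests, but the gap between the median and the tail is the number that interface timeouts and progress indicators need to be designed around.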

Throughput scaling works differently for each approach. Hard-coded workflows scale horizontally with standard compute. Adding more instances handles more requests. The scaling relationship is close to linear and predictable. LLM orchestration throughput depends on LLM API rate limits and inference infrastructure capacity. Scaling LLM orchestration requires managing API rate limits, implementing queuing, and potentially managing inference infrastructure directly. At high volume, hard-coded approaches scale more predictably and economically.

Cost modeling favors hard-coded workflows for high-volume, simple tasks. Every LLM inference call has a token cost. A task that requires five hundred input tokens and three hundred output tokens across three inference calls costs significantly more than executing a deterministic function. For tasks running millions of times per day, this cost difference is substantial. The breakeven point depends heavily on task complexity and volume.
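
The arithmetic behind this comparison is simple enough to sketch. The per-token prices below are placeholders, not any provider's actual rates, and the token counts are read as totals across the three calls. Substitute your own figures.

```python
def llm_cost_per_task(
    input_tokens: int,
    output_tokens: int,
    price_in_per_million: float,
    price_out_per_million: float,
) -> float:
    """Cost of one task in dollars, given total tokens and per-million-token prices."""
    return (
        input_tokens * price_in_per_million + output_tokens * price_out_per_million
    ) / 1_000_000


def daily_cost(cost_per_task: float, tasks_per_day: int) -> float:
    return cost_per_task * tasks_per_day


# Hypothetical prices: $3 per million input tokens, $15 per million output tokens.
per_task = llm_cost_per_task(500, 300, price_in_per_million=3.0, price_out_per_million=15.0)
per_day = daily_cost(per_task, tasks_per_day=1_000_000)
```

Under these assumed prices a single task costs a little over half a cent, which is negligible on its own but reaches about six thousand dollars per day at one million tasks. The same calculation with a lower volume or a higher per-task value shows where LLM orchestration becomes affordable.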

Frequently Asked Questions

What is LLM-based orchestration?

LLM-based orchestration uses a language model as the decision-maker in a workflow system. Instead of following predetermined steps defined by an engineer, the model reasons about what to do next at each stage. It selects which tools to call, what arguments to pass, and how to interpret results. This adaptive reasoning handles novel situations and complex multi-step tasks that hard-coded logic cannot anticipate. The fundamental difference from a hard-coded workflow is where decision logic lives: in the model’s reasoning versus in the engineer’s code.

When should I use hard-coded workflows instead of LLM orchestration?

Use hard-coded workflows when inputs are structured and predictable, when the same input must always produce the same output, when auditability and regulatory compliance require inspectable logic, when latency requirements are strict, or when task volume makes LLM inference costs prohibitive. Financial processing, safety-critical systems, and high-volume simple automation almost always favor hard-coded approaches for these reasons.

Can LLM orchestration and hard-coded workflows work together?

Yes. The most effective production AI systems combine both approaches. Hard-coded workflows handle the outer structure, stage sequencing, and safety guardrails. LLM orchestration handles the inner reasoning within stages where adaptive judgment adds value. Production architectures frequently resolve to a hybrid where each approach handles what it does best. Hard-coded control provides reliability. LLM reasoning provides flexibility.

How do I test an LLM orchestration system?

Testing LLM orchestration requires evaluation datasets, behavioral testing, and production monitoring rather than traditional unit testing alone. Build a dataset of representative inputs with expected output properties. Define behavioral tests that verify output characteristics without requiring exact string matches. Implement trace-level observability to capture every reasoning step in production. Accept that exhaustive test coverage is impossible for non-deterministic systems. Focus on evaluating average-case quality and monitoring for degradation over time.

What are the biggest risks of LLM-based orchestration in production?

The biggest risks are non-determinism leading to inconsistent outputs, reasoning errors compounding across multi-step workflows, prompt injection vulnerabilities in tool outputs, unpredictable latency under load, and cost scaling with task complexity. Compared with hard-coded workflows, LLM systems require more sophisticated guardrails, monitoring, and incident response capabilities. Teams that deploy LLM orchestration without addressing these risks discover them through production failures rather than testing.

Conclusion

LLM-based orchestration vs hard-coded workflows is one of the defining architectural questions of the current AI era. Both approaches are valuable. Both have limits. Neither is universally superior.

Hard-coded workflows excel at deterministic, auditable, high-volume, low-complexity tasks. They test cleanly, scale predictably, and perform reliably within the boundaries the engineer defined. They fail when the world presents situations outside those boundaries. They become unmaintainable when the problem domain grows complex enough to require hundreds of conditional branches.

LLM orchestration excels at adaptive, reasoning-intensive, input-diverse tasks. It handles edge cases and novel situations that no engineer anticipated. It combines tools in creative ways to solve problems. It can recognize when a goal is unachievable and explain why instead of failing outright. It fails non-deterministically, costs more per task, and introduces security risks that hard-coded systems never face.

The production systems that perform best use both approaches deliberately. They apply hard-coded workflows where determinism, auditability, and efficiency matter most. They apply LLM orchestration where adaptive reasoning and input variety demand flexibility. They wrap LLM reasoning with hard-coded guardrails that ensure safety and quality. In practice, the choice is a design question about layer responsibilities, not a binary one.

Teams that understand both approaches deeply make better decisions at every architectural fork. They do not default to LLM orchestration because it feels modern. They do not default to hard-coded workflows because it feels safe. They analyze what each part of the system actually requires and apply the right tool to the right problem. That discipline produces systems that work reliably, scale economically, and evolve without architectural debt.