Introduction

TL;DR: Developers building AI applications face a critical early decision: which framework to build on. The wrong choice costs weeks of rework. The right choice accelerates your entire project.

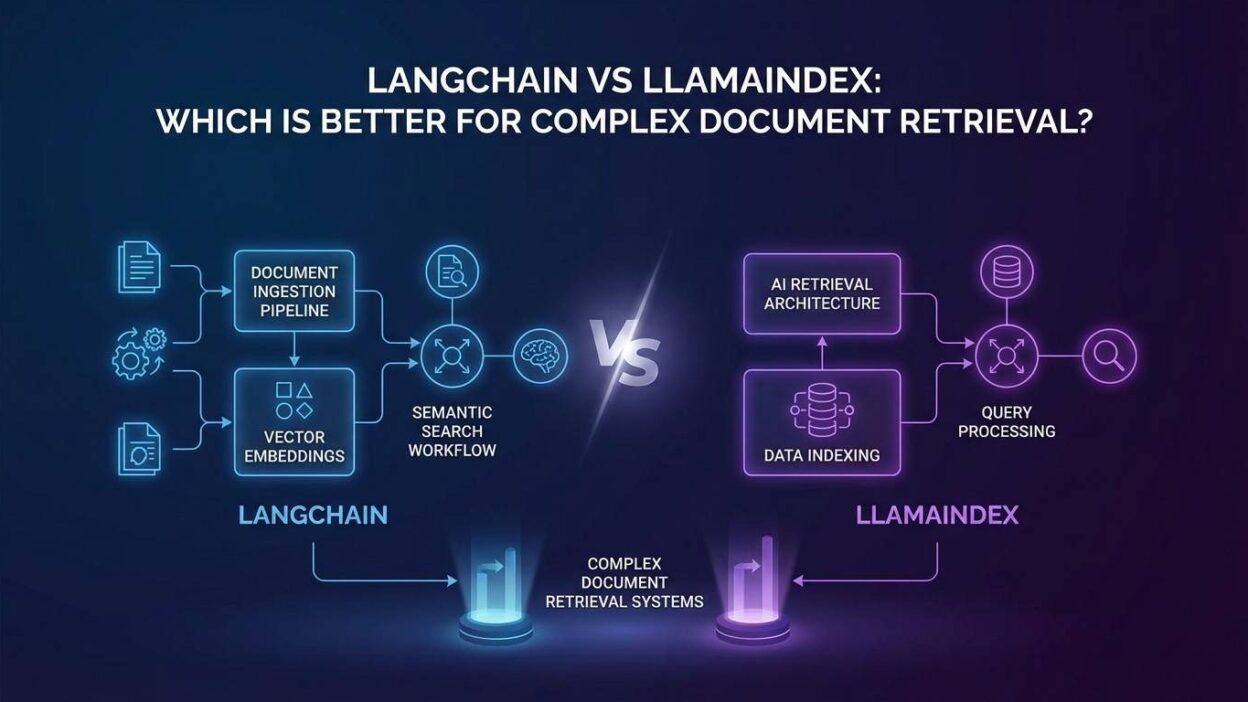

LangChain and LlamaIndex dominate this space. Both frameworks help developers connect large language models to external data. Both support retrieval-augmented generation. Both have strong open-source communities and active development cycles.

Yet they are built with different philosophies. They excel at different tasks. They impose different trade-offs on your architecture.

The debate around LangChain vs LlamaIndex for document retrieval comes up in every AI engineering team. It surfaces in GitHub discussions, Discord servers, and engineering blogs. The reason is simple: the choice genuinely matters. It shapes how you ingest data, how you structure queries, how you scale, and how much time you spend maintaining your pipeline.

This blog gives you a direct, honest comparison. You will understand what each framework does well, where each one struggles, and exactly which use cases favor one over the other. The focus stays on complex document retrieval throughout because that is the context where the differences matter most.

No framework wins every category. The right answer depends on your specific requirements. By the end of this blog, you will know exactly which framework fits your project.

Why the Framework Choice Matters for Document Retrieval

Document retrieval is deceptively complex. Retrieving a single chunk of text from a small collection of PDFs is easy. Retrieving the right context from tens of thousands of documents, across multiple file types, with varied structures, under latency constraints, while maintaining answer accuracy — that is hard.

The framework you choose determines how much of that complexity you handle yourself versus what the framework handles for you. A framework with strong retrieval primitives lets you iterate fast. A framework that requires you to build retrieval logic from scratch adds weeks to your timeline.

The debate around LangChain vs LlamaIndex for document retrieval is fundamentally a question about this division of labor. LangChain gives you composable building blocks. LlamaIndex gives you specialized retrieval infrastructure. Each approach has genuine advantages depending on what you are building.

Teams that choose the wrong framework for their retrieval requirements tend to fail in one of two ways. Some over-engineer a simple retrieval pipeline with unnecessary abstractions. Others under-engineer a complex retrieval need with a framework that lacks the specialized tooling to handle it.

Understanding the difference between these two frameworks prevents both outcomes. The comparison below covers architecture, retrieval capabilities, data ingestion, query handling, ecosystem strength, and production readiness. Each dimension matters when you are building something that needs to work reliably at scale.

What Is LangChain and How Does It Approach Retrieval

LangChain’s Core Design Philosophy

LangChain launched in October 2022 and grew rapidly into one of the most widely adopted LLM application frameworks. Its core design philosophy centers on composability. LangChain gives developers a set of modular components — chains, agents, tools, memory, retrievers — that combine into complex workflows.

The framework treats document retrieval as one capability among many. Retrieval sits alongside tool use, multi-step reasoning, memory management, and agent orchestration within a unified component model. This breadth is LangChain’s greatest strength and its primary trade-off.

For teams evaluating LangChain vs LlamaIndex for document retrieval, this design choice has direct consequences. LangChain handles retrieval well. It does not treat retrieval as the center of gravity for everything else. Teams building applications where retrieval is one component of a larger workflow benefit from this approach.

How LangChain Handles Document Retrieval

LangChain’s retrieval stack starts with document loaders. It ships with loaders for PDF, Word, HTML, CSV, JSON, Markdown, and dozens of other formats. These loaders parse raw files into LangChain Document objects.

Text splitters divide documents into chunks. LangChain offers recursive character splitting, token-based splitting, and semantic splitting. Each splitter serves different document types and retrieval strategies.
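To make recursive character splitting concrete, here is a minimal, framework-independent sketch. It is not LangChain's implementation. The function name `recursive_split`, the separator list, and the `chunk_size` value are assumptions chosen for illustration.

```python
def recursive_split(text, chunk_size=80, separators=("\n\n", "\n", ". ", " ")):
    """Split text into pieces of at most chunk_size characters,
    trying coarse separators first and falling back to finer ones."""
    if len(text) <= chunk_size:
        return [text] if text.strip() else []
    for i, sep in enumerate(separators):
        if sep not in text:
            continue
        chunks, current = [], ""
        for part in text.split(sep):
            candidate = current + sep + part if current else part
            if len(candidate) <= chunk_size:
                current = candidate
                continue
            if current:
                chunks.append(current)
            if len(part) > chunk_size:
                # Still too long: recurse with the finer separators only.
                chunks.extend(recursive_split(part, chunk_size, separators[i + 1:]))
                current = ""
            else:
                current = part
        if current:
            chunks.append(current)
        return chunks
    # No separator applies: fall back to a hard character cut.
    return [text[i:i + chunk_size] for i in range(0, len(text), chunk_size)]


sample = (
    "LangChain splits documents into chunks.\n\n"
    "Each chunk should be small enough to embed. "
    "Splitting on paragraphs first keeps related sentences together."
)
chunks = recursive_split(sample, chunk_size=60)
```

The paragraph break is tried first, so the opening paragraph survives as one chunk. Only the longer second paragraph gets broken down by sentence and then by word.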

Embeddings convert chunks into vector representations. LangChain integrates with OpenAI, Cohere, HuggingFace, and other embedding providers through a consistent interface. Switching embedding providers requires minimal code changes.

Vector stores handle storage and similarity search. LangChain integrates with Pinecone, Weaviate, Chroma, FAISS, and many others. The integration layer abstracts away vector database differences. Your retrieval code stays consistent regardless of which database you use underneath.

Retrievers sit above vector stores. They accept a query string and return relevant documents. LangChain offers several retriever variants beyond basic similarity search. Multi-query retrieval generates multiple query variations from a single user question. Contextual compression retrieval extracts only the relevant portions of retrieved chunks. Ensemble retrieval combines results from multiple retrieval strategies.
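The multi-query idea can be sketched without any framework. In this hedged example, `keyword_retriever` is a toy stand-in for a vector store retriever, and the query variants are hard-coded where a real pipeline would ask a language model to write them. All names are hypothetical.

```python
def keyword_retriever(query, corpus, k=2):
    """Toy retriever: rank documents by how many query words they contain."""
    words = set(query.lower().split())
    scored = [(len(words & set(doc.lower().split())), doc) for doc in corpus]
    scored = [pair for pair in scored if pair[0] > 0]
    scored.sort(key=lambda pair: pair[0], reverse=True)
    return [doc for _, doc in scored[:k]]


def multi_query_retrieve(question, variants, corpus, k=2):
    """Run the question and each variant, then merge unique hits in order."""
    seen, merged = set(), []
    for query in [question, *variants]:
        for doc in keyword_retriever(query, corpus, k):
            if doc not in seen:
                seen.add(doc)
                merged.append(doc)
    return merged


corpus = [
    "quarterly revenue fell sharply",
    "sales decline continued in europe",
    "new office opened in berlin",
]
# Assumption: an LLM would normally generate these rephrasings.
variants = ["sales decline", "falling sales"]
single = keyword_retriever("revenue decrease", corpus)
hits = multi_query_retrieve("revenue decrease", variants, corpus)
```

The single query finds only the first document. The rephrasings surface the second one, which uses different wording for the same idea.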

What Is LlamaIndex and How Does It Approach Retrieval

LlamaIndex’s Core Design Philosophy

LlamaIndex launched in 2022 under the name GPT Index before rebranding. Its core design philosophy centers on data infrastructure for LLM applications. Where LangChain treats retrieval as one component, LlamaIndex treats retrieval as the central problem it exists to solve.

This retrieval-first orientation shapes every decision in the framework. Data ingestion, indexing, query processing, and response synthesis all optimize for retrieval quality. The framework asks: how do we make retrieval as accurate, efficient, and flexible as possible?

When comparing LangChain vs LlamaIndex for document retrieval in complex scenarios, this focus tends to pay off. LlamaIndex ships with several retrieval primitives, such as sub-question decomposition and recursive retrieval, that take more custom assembly in LangChain. For retrieval-heavy applications, this head start matters.

How LlamaIndex Handles Document Retrieval

LlamaIndex’s retrieval stack starts with data connectors called Readers. LlamaIndex ships with over one hundred readers covering local files, databases, APIs, and SaaS platforms. These readers normalize diverse data sources into LlamaIndex’s Document format.

Node parsing divides documents into nodes — LlamaIndex’s equivalent of chunks. The framework offers multiple node parsers for different document types. Sentence-level parsing, semantic parsing, and hierarchical parsing all serve different retrieval strategies.

Indexes organize nodes for retrieval. LlamaIndex offers multiple index types beyond standard vector indexes. A summary index (formerly the list index) stores nodes in sequence and synthesizes an answer across them, which suits high-level summary queries. A tree index builds a hierarchy of summaries over the nodes. A knowledge graph index extracts entity relationships for structured reasoning. A document summary index stores both document summaries and detailed chunks for two-stage retrieval.
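Two-stage retrieval over document summaries is easier to picture with a small example. The sketch below is a plain-Python illustration of the idea, not LlamaIndex code. The `overlap` scorer stands in for embedding similarity, and the data layout is an assumption.

```python
def overlap(query, text):
    """Count shared lowercase words; a stand-in for embedding similarity."""
    return len(set(query.lower().split()) & set(text.lower().split()))


def two_stage_retrieve(query, docs, top_docs=1, top_chunks=1):
    """Stage 1: pick documents by summary. Stage 2: pick chunks inside them."""
    ranked_docs = sorted(docs, key=lambda d: overlap(query, d["summary"]), reverse=True)
    candidates = [c for d in ranked_docs[:top_docs] for c in d["chunks"]]
    ranked_chunks = sorted(candidates, key=lambda c: overlap(query, c), reverse=True)
    return ranked_chunks[:top_chunks]


docs = [
    {
        "summary": "annual financial report covering revenue and costs",
        "chunks": ["revenue grew eight percent", "costs rose due to hiring"],
    },
    {
        "summary": "employee handbook covering leave and hiring policy",
        "chunks": ["hiring requires two interviews", "leave must be approved"],
    },
]
result = two_stage_retrieve("what drove costs in the financial report", docs)
```

Filtering by summary first keeps chunks from unrelated documents out of the candidate pool, even when they share surface vocabulary with the query.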

Query engines handle the full retrieval-to-response pipeline. LlamaIndex’s query engines combine retrieval strategy, post-processing, and response synthesis in a single configurable object. This tight integration makes query pipeline configuration more straightforward than assembling equivalent chains in LangChain.

Retrievers in LlamaIndex support fusion retrieval, recursive retrieval, and auto-retrieval with metadata filtering. These capabilities address real complexity in production document retrieval scenarios that go beyond basic similarity search.
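One common way to fuse results from several retrievers is reciprocal rank fusion. The sketch below shows the scoring rule on its own, independent of either framework. The document ids are made up, and `k=60` is a conventional default rather than a requirement.

```python
from collections import defaultdict


def reciprocal_rank_fusion(rankings, k=60):
    """Merge ranked lists: each document scores sum(1 / (k + rank)) across lists."""
    scores = defaultdict(float)
    for ranking in rankings:
        for rank, doc_id in enumerate(ranking, start=1):
            scores[doc_id] += 1.0 / (k + rank)
    return sorted(scores, key=lambda doc_id: scores[doc_id], reverse=True)


vector_hits = ["doc_a", "doc_b", "doc_c"]
keyword_hits = ["doc_b", "doc_d", "doc_a"]
fused = reciprocal_rank_fusion([vector_hits, keyword_hits])
```

Documents that rank well in both lists rise to the top, which is why `doc_b` ends up ahead of `doc_a` here even though `doc_a` led the vector results.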

Head-to-Head: LangChain vs LlamaIndex for Document Retrieval

Data Ingestion and Document Processing

LangChain’s data loading ecosystem covers the most common document types well. Its loaders for PDF, HTML, and common office formats work reliably. Integration with Unstructured.io expands its ability to handle complex document layouts.

LlamaIndex has a larger reader ecosystem with over one hundred pre-built connectors. It handles SaaS integrations — Notion, Confluence, Google Drive, Slack — more comprehensively out of the box. For teams ingesting from multiple heterogeneous sources, LlamaIndex’s reader library reduces integration time.

Both frameworks handle standard document types with similar quality. LlamaIndex pulls ahead for complex ingestion scenarios involving many source types or SaaS platforms. LangChain performs equally well for simpler, file-based ingestion pipelines.

Indexing and Storage Flexibility

LangChain’s storage layer abstracts across vector databases cleanly. Its consistent retriever interface means your application code does not care whether you use Pinecone, Weaviate, or Chroma underneath. Database switching requires minimal refactoring.

LlamaIndex’s index variety goes beyond what LangChain offers natively. The summary index, knowledge graph index, and document summary index enable retrieval strategies that pure vector search cannot match. For applications that need hierarchical retrieval, relationship-aware retrieval, or two-stage retrieval, LlamaIndex’s index types provide direct support. Replicating these strategies in LangChain requires custom implementation.

When the question is LangChain vs LlamaIndex for document retrieval at scale, the index variety in LlamaIndex matters for complex document collections with diverse structural requirements.

Query Processing and Retrieval Strategies

LangChain’s query processing shines in multi-step reasoning scenarios. Its agent framework lets a language model decide which retrieval tool to use, execute that retrieval, interpret the result, and decide whether to retrieve again before generating a response. This agentic retrieval pattern handles complex questions that require multiple information lookups.
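The agentic retrieval loop described above can be sketched in a few lines. This is a hedged illustration of the control flow only, not LangChain's agent API. The `choose_tool` and `is_sufficient` functions stand in for decisions a language model would make, and the tools return canned text.

```python
def agentic_retrieve(question, tools, choose_tool, is_sufficient, max_steps=3):
    """Loop: pick a tool, retrieve, and stop once the gathered context suffices."""
    context = []
    for _ in range(max_steps):
        tool_name = choose_tool(question, context)
        context.extend(tools[tool_name](question))
        if is_sufficient(question, context):
            break
    return context


# Hypothetical tools; each returns a list of text snippets.
tools = {
    "policies": lambda q: ["Refunds are allowed within 30 days."],
    "orders": lambda q: ["Order 1001 was delivered 12 days ago."],
}


def choose_tool(question, context):
    """Stand-in for the model's tool choice: policies first, then orders."""
    return "policies" if not context else "orders"


def is_sufficient(question, context):
    """Stand-in for the model judging whether it can answer yet."""
    return len(context) >= 2


context = agentic_retrieve(
    "Can order 1001 still be refunded?", tools, choose_tool, is_sufficient
)
```

The `max_steps` cap matters in practice: without it, a model that never judges the context sufficient would keep retrieving indefinitely.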

LlamaIndex’s query processing shines in retrieval precision. Its recursive retrieval, sub-question decomposition, and fusion retrieval strategies address accuracy problems that simple similarity search fails on. Complex questions get decomposed into sub-questions. Each sub-question retrieves specific context. The answers combine into a coherent final response.

Both frameworks now support agent-based retrieval. LangChain’s agent ecosystem is more mature. LlamaIndex’s retrieval-specific query strategies are more specialized. For most complex document retrieval scenarios, LlamaIndex’s built-in strategies require less custom code to achieve high retrieval accuracy.

Response Synthesis and Output Formatting

LangChain gives developers precise control over how retrieved context feeds into prompt templates. This control suits teams that need custom output formats, structured data extraction, or multi-step reasoning chains over retrieved content.

LlamaIndex’s response synthesizers handle common synthesis patterns with less configuration. Tree summarization, compact synthesis, and refine synthesis all work out of the box. For standard question-answering over documents, LlamaIndex’s synthesis layer requires less setup.
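As a rough illustration of the refine pattern, the sketch below drafts an answer from the first chunk and revises it once per remaining chunk. It is not LlamaIndex's synthesizer. The prompt wording is an assumption, and `fake_llm` is a stand-in that only counts calls.

```python
def refine_synthesize(question, chunks, llm):
    """Refine pattern: draft from the first chunk, then revise once per chunk."""
    answer = ""
    for chunk in chunks:
        if not answer:
            prompt = f"Context: {chunk}\nQuestion: {question}\nAnswer:"
        else:
            prompt = (
                f"Existing answer: {answer}\nNew context: {chunk}\n"
                f"Question: {question}\nRefined answer:"
            )
        answer = llm(prompt)
    return answer


calls = []


def fake_llm(prompt):
    """Stand-in model: records the prompt and returns a numbered draft."""
    calls.append(prompt)
    return f"draft {len(calls)}"


final = refine_synthesize(
    "What changed?", ["chunk one", "chunk two", "chunk three"], fake_llm
)
```

Refine makes one model call per chunk, so cost and latency grow with the number of retrieved chunks. That trade-off is the main reason to consider the compact or tree-based alternatives.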

For custom output formats and complex reasoning chains, LangChain offers more flexibility. For standard document question-answering with high retrieval accuracy, LlamaIndex offers faster time to production.

Ecosystem, Community, and Integrations

LangChain has a larger overall community. Its GitHub star count and documentation breadth reflect years of developer adoption. Its agent framework and tool ecosystem extend well beyond retrieval into general LLM application development.

LlamaIndex has a focused community built around data infrastructure and retrieval. Its documentation for retrieval-specific use cases is more detailed. Its integration with enterprise data sources has grown substantially.

Both frameworks are under active development. LangChain releases new agent capabilities and integrations. LlamaIndex releases new retrieval strategies and index types. The ecosystem gap continues to narrow.

Production Readiness and Observability

LangChain introduced LangSmith for tracing, evaluation, and monitoring of LLM applications. This tooling provides visibility into chain execution, retrieval performance, and response quality. Production teams debugging retrieval failures benefit significantly from this observability layer.

LlamaIndex integrates with Arize Phoenix for tracing and ships its own evaluation framework for measuring retrieval quality. Together they help teams identify where retrieval fails and why.

For production document retrieval at scale, both frameworks now offer adequate observability tooling. LangChain’s LangSmith provides a more polished end-to-end experience. LlamaIndex’s evaluation framework targets retrieval quality metrics more specifically.
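Whichever tooling you use, two retrieval quality metrics come up constantly: hit rate and mean reciprocal rank (MRR). The sketch below computes both from scratch so the definitions are clear. The query and document ids are invented, and the single-relevant-document setup is a simplifying assumption.

```python
def hit_rate_and_mrr(results, expected):
    """results: query -> ranked doc ids; expected: query -> the relevant doc id."""
    hits, reciprocal_ranks = 0, []
    for query, relevant in expected.items():
        ranked = results.get(query, [])
        if relevant in ranked:
            hits += 1
            reciprocal_ranks.append(1.0 / (ranked.index(relevant) + 1))
        else:
            reciprocal_ranks.append(0.0)
    n = len(expected)
    return hits / n, sum(reciprocal_ranks) / n


expected = {"q1": "doc_a", "q2": "doc_b", "q3": "doc_c", "q4": "doc_d"}
results = {
    "q1": ["doc_a", "doc_x"],  # relevant doc at rank 1
    "q2": ["doc_x", "doc_b"],  # relevant doc at rank 2
    "q3": ["doc_x", "doc_y"],  # relevant doc missing
    "q4": ["doc_d"],           # relevant doc at rank 1
}
hit_rate, mrr = hit_rate_and_mrr(results, expected)
```

Hit rate tells you whether the right document was retrieved at all. MRR also rewards placing it near the top, which matters when only the first few chunks reach the model.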

When to Choose LangChain for Document Retrieval

Complex Multi-Step Workflows Beyond Retrieval

Choose LangChain when document retrieval is one component of a larger workflow. If your application retrieves documents, uses those documents to make tool calls, processes results from those tools, and generates a final response — LangChain’s composable architecture handles this elegantly.

Multi-agent systems with retrieval capabilities suit LangChain well. One agent retrieves relevant documentation. Another agent uses that documentation alongside API calls to take action. LangChain’s agent framework coordinates these interactions in a way LlamaIndex does not natively support at the same depth.

Teams already deep in the LangChain ecosystem gain significant productivity advantages by staying within it. The learning curve investment already made compounds with every new application built on the same framework. Switching frameworks for retrieval alone rarely justifies that cost.

Flexible Prompt Engineering Requirements

LangChain gives developers precise control over every prompt in the pipeline. This matters for teams that need to customize how retrieved context gets formatted before reaching the language model.

Applications with strict output format requirements — structured JSON extraction, table generation, formatted reports — benefit from LangChain’s template system. The framework makes it straightforward to engineer exact prompt structures around retrieved content.
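Here is a minimal sketch of wrapping retrieved chunks in an exact prompt structure and validating a JSON reply. It uses only the Python standard library rather than LangChain's template classes. The prompt wording, the `build_prompt` and `parse_reply` helpers, and the JSON shape are all assumptions for illustration.

```python
import json
from string import Template

PROMPT = Template(
    "Use only the context below.\n"
    "Context:\n$context\n\n"
    "Question: $question\n"
    'Reply with JSON shaped like {"answer": "...", "sources": [1]}.'
)


def build_prompt(question, chunks):
    """Number each retrieved chunk so the model can cite it by index."""
    context = "\n".join(f"[{i}] {chunk}" for i, chunk in enumerate(chunks, start=1))
    return PROMPT.substitute(context=context, question=question)


def parse_reply(reply):
    """Parse the model's reply and check that the expected keys are present."""
    data = json.loads(reply)
    missing = {"answer", "sources"} - set(data)
    if missing:
        raise ValueError(f"reply is missing keys: {sorted(missing)}")
    return data


prompt = build_prompt(
    "When was the policy updated?",
    ["Policy updated in March.", "Office moved in May."],
)
# A hand-written reply standing in for real model output.
parsed = parse_reply('{"answer": "March", "sources": [1]}')
```

Validating the reply right after parsing keeps malformed model output from leaking into downstream code.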

When evaluating LangChain vs LlamaIndex for document retrieval in scenarios requiring heavy prompt customization, LangChain’s flexibility reduces friction significantly.

Teams Prioritizing General LLM Application Development

LangChain suits teams building a broad range of LLM applications, not just retrieval systems. Memory management, conversational agents, tool use, structured output extraction — all fall within LangChain’s core capabilities.

Engineering teams that expect to build multiple different LLM applications benefit from mastering LangChain’s component model once and applying it across projects. The investment in understanding the framework pays off across a wider range of use cases.

When to Choose LlamaIndex for Document Retrieval

High-Precision Retrieval Over Large Document Collections

Choose LlamaIndex when retrieval accuracy is your primary concern. Complex document collections with thousands of files, varied structure, and dense technical content challenge basic similarity search. LlamaIndex’s hierarchical indexes, fusion retrieval, and recursive retrieval strategies address this challenge directly.

Research applications, legal document analysis, technical documentation search, and enterprise knowledge management all require retrieval precision that LlamaIndex delivers more completely out of the box. The built-in strategies reduce the custom engineering required to reach production-quality accuracy.

Comparisons of LangChain vs LlamaIndex for document retrieval often favor LlamaIndex on retrieval quality with minimal custom configuration, though results depend heavily on the document collection and the queries. Teams that prioritize accuracy over flexibility often choose LlamaIndex for this reason.

Complex Multi-Document Reasoning

Some retrieval tasks require reasoning across multiple documents simultaneously. Answering a question that requires synthesizing information from five different reports is fundamentally different from retrieving a single relevant passage.

LlamaIndex’s sub-question query engine decomposes complex questions automatically. Each sub-question retrieves from the relevant document subset. The synthesis layer combines partial answers into a coherent final response. This workflow requires significant custom implementation in LangChain.
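The decompose, retrieve, and combine flow can be sketched without either framework. In this hedged example, `decompose` and `combine` stand in for language model calls, and the per-document engines return canned answers. Every name and figure here is hypothetical.

```python
def sub_question_answer(question, decompose, engines, combine):
    """Split a question into (source, sub-question) pairs, answer each, combine."""
    partials = []
    for source, sub_q in decompose(question):
        partials.append((sub_q, engines[source](sub_q)))
    return combine(question, partials)


# Hypothetical per-document answerers; real ones would retrieve and call a model.
engines = {
    "report_2022": lambda q: "Revenue was 10M in 2022.",
    "report_2023": lambda q: "Revenue was 12M in 2023.",
}


def decompose(question):
    """Stand-in for an LLM planning step that routes sub-questions to sources."""
    return [
        ("report_2022", "What was revenue in 2022?"),
        ("report_2023", "What was revenue in 2023?"),
    ]


def combine(question, partials):
    """Stand-in for a synthesis step: join the partial answers."""
    return " ".join(answer for _, answer in partials)


answer = sub_question_answer(
    "How did revenue change from 2022 to 2023?", decompose, engines, combine
)
```

Each sub-question only touches the document it was routed to, which keeps retrieval focused even when the original question spans several reports.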

For applications where users ask questions that span document collections — cross-report analysis, comparative document review, knowledge base synthesis — LlamaIndex’s native multi-document reasoning support reduces development time substantially.

Teams Focused Primarily on Data and Retrieval Infrastructure

LlamaIndex suits teams whose primary product is the retrieval system itself. If you are building a document intelligence platform, an enterprise search tool, or a RAG-powered knowledge base, LlamaIndex’s retrieval-first philosophy aligns with your product goals.

The framework’s depth in indexing, retrieval strategies, and evaluation tooling provides a strong foundation for teams that need to iterate quickly on retrieval quality. LlamaIndex treats retrieval as the core problem. Teams that share that focus find the framework’s defaults align with their needs more naturally.

Using LangChain and LlamaIndex Together

The LangChain vs LlamaIndex for document retrieval debate sometimes misses an important option. You do not always have to choose one exclusively.

LlamaIndex works as a retrieval backend inside LangChain applications. A LlamaIndex query engine or retriever can be wrapped as a LangChain tool or custom retriever, so LangChain agents and chains call it like any other component. This combination gives you LlamaIndex’s retrieval precision inside LangChain’s composable workflow architecture.

Teams building complex agent systems with high-precision retrieval requirements use this pattern successfully. The agent logic, tool orchestration, and workflow composition live in LangChain. The retrieval over document collections runs through LlamaIndex. Each framework contributes what it does best.

This hybrid approach adds architectural complexity. Teams that use it need engineers comfortable with both frameworks. Debugging requires understanding both codebases. For teams with the expertise to manage this complexity, the combination delivers results that neither framework achieves independently at the same effort level.

For most teams, choose the framework that fits your primary requirement. Add the other if a specific gap demands it. Do not start with the hybrid architecture unless you have a clear retrieval need that your primary framework cannot meet.

What Is Retrieval-Augmented Generation and Why Does It Matter

Retrieval-Augmented Generation is the architecture behind most modern document question-answering systems. A language model answers questions using both its training knowledge and context retrieved from an external document collection.

RAG solves the fundamental limitation of language models: they know only what their training data contains. RAG lets a model answer questions about your specific documents, your proprietary knowledge, and information created after the model’s training cutoff.

Both LangChain and LlamaIndex implement RAG architectures. The frameworks differ in how they implement retrieval, not in whether they support RAG. This distinction matters when comparing LangChain vs LlamaIndex for document retrieval because both solve the core RAG problem — they solve it with different priorities.

What Is Vector Search and Why Does It Power Document Retrieval

Vector search converts text into mathematical representations called embeddings. Documents and queries both convert to vectors in the same high-dimensional space. Similar meaning produces vectors that are close together in that space. Retrieval finds the documents whose vectors are closest to the query vector.

This semantic similarity search outperforms keyword search for document retrieval tasks. A keyword search for “revenue decrease” misses a document that discusses “sales decline.” A vector search finds it because “revenue decrease” and “sales decline” produce similar embeddings.
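A toy example shows the mechanics. The three-dimensional vectors below are hand-made stand-ins for real embeddings, which typically have hundreds or thousands of dimensions. The values are assumptions picked so that related phrases point in similar directions.

```python
import math


def cosine(a, b):
    """Cosine similarity: 1.0 means same direction, 0.0 means unrelated."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0


def nearest(query_vec, index, k=1):
    """Return the texts whose vectors are most similar to the query vector."""
    ranked = sorted(index, key=lambda item: cosine(query_vec, item[1]), reverse=True)
    return [text for text, _ in ranked[:k]]


# Assumption: hand-made vectors in place of a real embedding model's output.
index = [
    ("sales decline in Q3", [0.9, 0.1, 0.0]),
    ("new office opening", [0.0, 0.2, 0.9]),
]
query_vec = [0.8, 0.2, 0.1]  # pretend embedding of "revenue decrease"
top = nearest(query_vec, index)
```

The query shares no words with the top result. It matches because the two vectors point in nearly the same direction.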

Both LangChain and LlamaIndex build on vector search foundations. They differ in how they augment basic vector search with additional retrieval strategies. LlamaIndex’s additional index types extend beyond vector search more aggressively than LangChain’s default retrieval stack.

Frequently Asked Questions

Which is better: LangChain or LlamaIndex for document retrieval?

For complex document retrieval with precision requirements, LlamaIndex generally performs better out of the box. For applications where retrieval is one component of a larger agent-based workflow, LangChain offers superior composability. The best choice depends on your specific requirements.

Can you use LangChain and LlamaIndex together?

Yes. LlamaIndex query engines work as retrieval backends inside LangChain applications. Teams with complex retrieval and complex orchestration requirements use both frameworks together. This combination adds architectural complexity but delivers strong results for demanding use cases.

Is LlamaIndex easier to learn than LangChain?

Not necessarily. LlamaIndex has a steeper initial learning curve for its index and query engine concepts. LangChain’s chain and agent model is more familiar to developers with software engineering backgrounds. LlamaIndex’s concepts pay off quickly for retrieval-focused applications. LangChain’s concepts pay off across a broader range of application types.

Which framework performs better at scale?

Both frameworks scale with appropriate infrastructure. LlamaIndex’s retrieval quality tends to degrade less as document collections grow due to its hierarchical index types. LangChain scales well for workflow orchestration at volume.

What is the difference between LangChain and LlamaIndex for RAG?

Both support RAG. LlamaIndex offers more retrieval strategies natively. LangChain offers more flexibility in workflow composition. When comparing LangChain vs LlamaIndex for document retrieval in RAG contexts, LlamaIndex usually requires less custom retrieval code to reach high accuracy.

Conclusion

The LangChain vs LlamaIndex for document retrieval debate does not have a universal answer. Both frameworks are genuinely good. Both have active development teams. Both support the core RAG patterns that power modern document intelligence applications.

The difference is in their priorities. LangChain builds for composability. It treats document retrieval as one capability inside a broad application framework. This suits teams building complex multi-step workflows where retrieval feeds into larger chains of reasoning and action.

LlamaIndex builds for retrieval precision. It treats the indexing, retrieval, and synthesis pipeline as the central engineering challenge. This suits teams where document retrieval quality is the product — where getting the right answer from a large, complex document collection is the core value delivered.

Most straightforward RAG applications work well with either framework. The difference becomes clear when your document collection grows, your queries get more complex, and your accuracy requirements tighten. At that point, LlamaIndex’s specialized retrieval infrastructure reduces the custom engineering required.

For teams building beyond retrieval — agent systems, tool-using workflows, multi-modal applications — LangChain’s composable architecture provides advantages that LlamaIndex does not match.

Test both on your actual data with your actual queries before committing. A benchmark on generic data tells you little about performance on your specific document collection.

The right framework is the one your team ships with faster and maintains more confidently. Make that decision based on evidence from your own environment.

Build something. Learn from it. Iterate.