Introduction

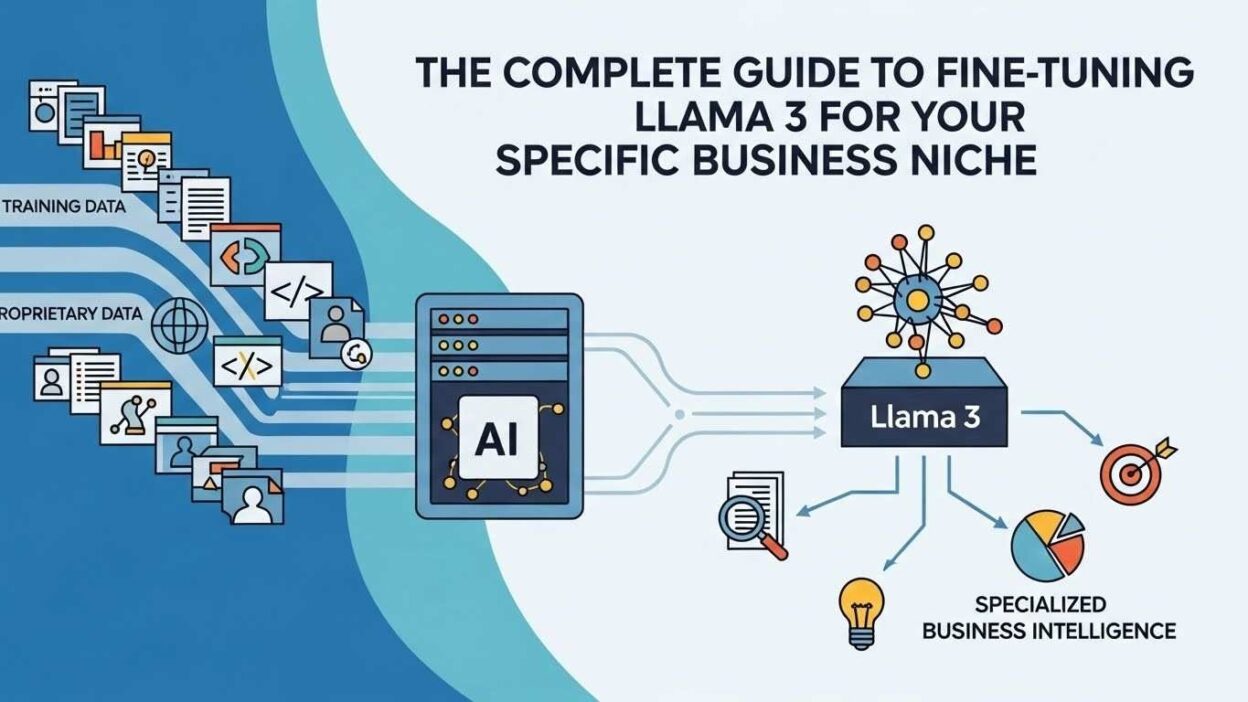

TL;DR Your business speaks a unique language. Generic AI models don’t understand your industry terminology. They miss the nuances that matter to your customers. This gap creates real problems for companies trying to leverage artificial intelligence.

Llama 3 from Meta represents a breakthrough in open-source AI technology. The model delivers impressive performance across diverse tasks. But out-of-the-box capabilities rarely match specific business requirements perfectly. Your company needs an AI that truly understands your domain.

Fine-tuning Llama 3 for business solves this fundamental challenge. You transform a general-purpose model into a specialized expert. The process teaches Llama 3 your industry knowledge, brand voice, and operational context. Your AI becomes genuinely useful rather than just impressive.

This comprehensive guide walks you through every aspect of fine-tuning Llama 3 for business applications. You’ll learn technical requirements, data preparation strategies, and implementation best practices. Real-world examples demonstrate how companies achieve remarkable results. By the end, you’ll know exactly how to customize Llama 3 for your niche.

Understanding Llama 3 and Its Business Potential

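Meta released Llama 3 as a powerful, openly available language model. The architecture builds on years of AI research and development. Companies can download the weights and use them commercially under the Meta Llama 3 Community License, which is permissive but includes an acceptable use policy and extra conditions for very large-scale services.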

What Makes Llama 3 Special

Llama 3 comes in multiple size variants. The 8B parameter version runs on modest hardware. The 70B parameter model is competitive with leading proprietary models on many public benchmarks, though results vary by task. You choose the size that balances capability with your computational budget.

The model demonstrates strong reasoning abilities. It handles complex instructions accurately. The original Llama 3 release supports a context window of 8,192 tokens, and Llama 3.1 extends this to 128K tokens. Your business conversations maintain coherence across lengthy exchanges.

Openly available weights provide considerable flexibility. You control where the model runs and, within the license terms, how you use it. When you self-host, data stays within your infrastructure. Regulatory compliance becomes easier to achieve.

Why Generic Models Fall Short

Pre-trained models lack industry-specific knowledge. Medical terminology confuses general-purpose AI. Legal concepts get misinterpreted. Financial calculations might contain errors. These gaps make standard models unreliable for specialized work.

Brand voice consistency proves impossible with generic AI. Your company has a distinct communication style. Generic models can’t maintain this consistency. Customer-facing content feels impersonal and disconnected.

Proprietary information never appears in pre-training data. Your internal processes, product details, and business logic remain unknown. The AI can’t reference information it never learned. Fine-tuning Llama 3 for business addresses these limitations directly.

Business Use Cases That Benefit Most

Customer service departments gain enormous value. The AI handles inquiries with domain expertise. It understands product specifications perfectly. Technical support becomes more accurate and helpful.

Content creation scales dramatically with fine-tuned models. Marketing teams generate on-brand copy consistently. The AI knows your products, competitors, and market positioning. Output quality matches your best human writers.

Internal knowledge management improves substantially. Employees ask questions and receive accurate answers. The AI searches through company documentation intelligently. Institutional knowledge becomes accessible to everyone.

Prerequisites for Fine-Tuning Llama 3 for Business

Successful implementation requires proper preparation. You need technical infrastructure, quality data, and clear objectives. Skipping preparation leads to disappointing results.

Hardware and Software Requirements

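GPU resources form the foundation of fine-tuning Llama 3 for business. With parameter-efficient methods such as LoRA or QLoRA (covered later in this guide), the 8B model trains on consumer-grade GPUs. An NVIDIA RTX 4090 with 24 GB of memory handles smaller fine-tuning jobs of this kind. Full fine-tuning of the 8B model needs more memory than a single consumer card provides. The 70B model demands data center hardware.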
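
A quick back-of-the-envelope calculation helps when matching a model to hardware. The sketch below estimates memory for the weights alone, as parameter count times bytes per parameter. It ignores activations, gradients, optimizer state, and the KV cache, so real requirements are higher. The function name and the rounded parameter counts are illustrative assumptions.

```python
def weight_memory_gb(num_params: float, bits_per_param: int) -> float:
    """Approximate memory for model weights only, in gigabytes (10^9 bytes)."""
    bytes_total = num_params * bits_per_param / 8
    return bytes_total / 1e9


# Rounded parameter counts for the two Llama 3 sizes discussed in this guide.
MODELS = {"8B": 8e9, "70B": 70e9}

for name, params in MODELS.items():
    for bits in (16, 8, 4):
        print(f"{name} @ {bits}-bit: ~{weight_memory_gb(params, bits):.0f} GB")
```

At 16-bit precision the 8B weights alone take about 16 GB and the 70B weights about 140 GB, which is why the larger model does not fit on a single consumer card without quantization.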

Cloud platforms offer practical alternatives. AWS, Google Cloud, and Azure provide GPU instances. You rent computational power as needed. This approach eliminates large upfront hardware investments.

Software dependencies include Python and PyTorch. The Hugging Face Transformers library simplifies implementation. You’ll also need data processing tools. Most requirements install easily through package managers.

Data Collection and Quality Standards

Fine-tuning Llama 3 for business depends entirely on data quality. Garbage data produces garbage results. Your training examples must represent real business scenarios accurately.

Quantity matters but quality matters more. You need hundreds to thousands of examples. Each example should demonstrate correct behavior. Inconsistent training data confuses the model hopelessly.

Domain coverage must be comprehensive. Include common scenarios, edge cases, and everything between. Missing topics create blind spots. The AI performs poorly on situations it never encountered during training.

Budget Considerations and Planning

Training costs vary based on model size and data volume. Smaller jobs cost hundreds of dollars. Enterprise implementations might require thousands. Calculate your specific situation carefully.

Ongoing inference costs require budgeting too. Each query consumes computational resources. Self-hosting reduces long-term expenses. Cloud providers charge per token generated.

Personnel time represents hidden costs. Data preparation takes significant effort. Monitoring and refinement require ongoing attention. Budget for the complete project lifecycle honestly.

Preparing Your Business Data for Training

Data preparation determines fine-tuning success more than any other factor. Fine-tuning Llama 3 for business requires thoughtful data curation. Invest time here to avoid disappointing results later.

Identifying Relevant Data Sources

Customer support transcripts contain valuable training material. These conversations show real problems and solutions. The language reflects how customers actually communicate. Mine your helpdesk archives thoroughly.

Internal documentation provides domain knowledge. Product manuals, process guides, and policy documents teach the AI. This information grounds responses in factual accuracy. Your company’s expertise becomes the AI’s expertise.

Historical content demonstrates your brand voice. Marketing materials, blog posts, and social media establish tone. The AI learns how your company communicates. Output maintains brand consistency automatically.

Creating High-Quality Training Examples

Each training example needs clear structure. Input represents what users will ask. Output shows the desired response. The format must remain consistent across all examples.

Fine-tuning Llama 3 for business works best with diverse examples. Vary the phrasing of similar questions. Include different response lengths. Show the AI multiple valid approaches to each situation.

Accuracy verification prevents teaching mistakes. Every example should be factually correct. Have domain experts review training data. Wrong answers in the training set teach the model to repeat them, and the damage grows when the dataset is small.

Formatting Data for Optimal Results

Standard formats make training easier. JSON or JSONL work well for structured data. Each entry contains an input-output pair. Metadata can provide additional context.

Prompt templates structure conversations effectively. System messages establish the AI’s role. User messages present the query. Assistant messages demonstrate correct responses. This format trains conversational behavior.
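
The system, user, and assistant structure can be sketched as one JSONL record per conversation. The `messages` list with `role` and `content` keys is a convention many chat fine-tuning tools accept, but it is an assumption here, so check the schema your training library expects. The helper names and the example content are hypothetical.

```python
import json


def make_record(system: str, user: str, assistant: str) -> dict:
    """Build one training example as a list of role-tagged messages."""
    return {
        "messages": [
            {"role": "system", "content": system},
            {"role": "user", "content": user},
            {"role": "assistant", "content": assistant},
        ]
    }


def to_jsonl(records: list[dict]) -> str:
    """Serialize records as JSONL: one JSON object per line."""
    return "\n".join(json.dumps(r, ensure_ascii=False) for r in records)


# Hypothetical example content for illustration only.
records = [
    make_record(
        "You are a support assistant for Acme Widgets.",
        "How do I reset my widget?",
        "Hold the power button for ten seconds until the light blinks.",
    )
]
jsonl_text = to_jsonl(records)
print(jsonl_text)
```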

Token limits affect how you structure data. Llama 3 has maximum context lengths. Split very long examples into smaller chunks. Ensure each chunk remains coherent independently.
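
One way to split long examples while keeping each chunk coherent is to break only at sentence boundaries. The sketch below counts whitespace-separated words as a rough stand-in for tokens, which is an assumption: real token counts come from the model’s tokenizer and are usually higher. The function name `chunk_text` is hypothetical.

```python
import re


def chunk_text(text: str, max_tokens: int) -> list[str]:
    """Split text into chunks of whole sentences, each at most max_tokens words.

    Words stand in for model tokens here. A single sentence longer than
    max_tokens is kept as its own oversized chunk rather than cut mid-sentence.
    """
    sentences = re.split(r"(?<=[.!?])\s+", text.strip())
    chunks: list[str] = []
    current: list[str] = []
    count = 0
    for sentence in sentences:
        if not sentence:
            continue
        n = len(sentence.split())
        # Start a new chunk when adding this sentence would exceed the budget.
        if current and count + n > max_tokens:
            chunks.append(" ".join(current))
            current, count = [], 0
        current.append(sentence)
        count += n
    if current:
        chunks.append(" ".join(current))
    return chunks


doc = "Reset the device. Wait ten seconds. Power it back on. Check the status light."
for chunk in chunk_text(doc, max_tokens=7):
    print(chunk)
```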

Data Cleaning and Preprocessing

Remove personally identifiable information rigorously. Customer names, addresses, and sensitive details must go. Privacy protection isn’t optional. Anonymization tools help automate this process.
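
As a minimal sketch of automated scrubbing, the example below replaces email addresses and one common phone number layout with placeholder tags. The patterns are deliberately simple assumptions and will miss many real cases. Names and addresses in particular need dedicated anonymization tooling and human review.

```python
import re

# Simple illustrative patterns; real PII detection needs much broader coverage.
PII_PATTERNS = {
    "[EMAIL]": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "[PHONE]": re.compile(r"\b\d{3}[-.\s]\d{3}[-.\s]\d{4}\b"),
}


def redact(text: str) -> str:
    """Replace each matched email address or phone number with a placeholder tag."""
    for placeholder, pattern in PII_PATTERNS.items():
        text = pattern.sub(placeholder, text)
    return text


print(redact("Contact jane.doe@example.com or 555-123-4567 for help."))
```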

Eliminate contradictory information carefully. Training data must present consistent facts. Conflicting examples confuse the model severely. Resolve contradictions before training begins.
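
A simple first pass at finding contradictions is to group examples by question and flag any question that appears with more than one distinct answer. The sketch below only catches conflicts between near-identical questions (after lowercasing and whitespace cleanup). Paraphrased questions with conflicting answers still need human review. All names and sample data are hypothetical.

```python
from collections import defaultdict


def normalize(text: str) -> str:
    """Lowercase and collapse whitespace so trivially different strings compare equal."""
    return " ".join(text.lower().split())


def find_conflicts(examples: list[tuple[str, str]]) -> dict[str, set[str]]:
    """Return questions that appear with more than one distinct answer."""
    answers = defaultdict(set)
    for question, answer in examples:
        answers[normalize(question)].add(normalize(answer))
    return {q: a for q, a in answers.items() if len(a) > 1}


# Hypothetical examples for illustration.
data = [
    ("What is the return window?", "30 days."),
    ("what is the  return window?", "14 days."),
    ("Do you ship abroad?", "Yes."),
]
print(find_conflicts(data))
```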

Normalize formatting for consistency. Standardize date formats, number representations, and terminology. Consistency helps the model learn patterns. Random variations introduce unnecessary complexity.

The Technical Process of Fine-Tuning Llama 3 for Business

Understanding the technical workflow demystifies fine-tuning Llama 3 for business. Each step builds on the previous one. Following the sequence prevents common mistakes.

Choosing the Right Fine-Tuning Approach

Full fine-tuning updates all model parameters. You get maximum customization but need substantial resources. This approach suits large enterprises with unique requirements.

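LoRA (Low-Rank Adaptation) offers efficiency. Only small adapter layers get trained. The original model weights remain frozen. In many cases you approach full fine-tuning quality at a small fraction of the compute and memory cost, although the exact trade-off depends on the task and adapter settings.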

QLoRA adds quantization for even greater efficiency. The technique runs on consumer hardware. Fine-tuning Llama 3 for business becomes accessible to smaller companies. Performance remains surprisingly good.
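
The efficiency of LoRA comes from simple arithmetic. Instead of updating a full weight matrix of size d_out x d_in, LoRA trains two thin matrices of rank r, which together hold r x (d_in + d_out) parameters. The sketch below works through one matrix. The 4096 x 4096 shape and rank 16 are illustrative assumptions, not recommended settings.

```python
def lora_trainable_params(d_in: int, d_out: int, rank: int) -> int:
    """Parameters in the two low-rank factors A (rank x d_in) and B (d_out x rank)."""
    return rank * (d_in + d_out)


def full_params(d_in: int, d_out: int) -> int:
    """Parameters in the original dense weight matrix."""
    return d_in * d_out


# Illustrative: one square 4096 x 4096 projection matrix with rank-16 adapters.
d, r = 4096, 16
lora = lora_trainable_params(d, d, r)
full = full_params(d, d)
print(f"full: {full:,}  LoRA: {lora:,}  ratio: {lora / full:.2%}")
```

For this matrix the adapter holds under 1% of the parameters of the dense weight, which is where the memory savings during training come from.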

Setting Up Your Training Environment

Install required libraries systematically. Start with PyTorch and CUDA drivers. Add Hugging Face Transformers and PEFT libraries. Verify each installation before proceeding.

Download the base Llama 3 model. Meta provides official weights through Hugging Face. You’ll need to accept their license terms. The download takes time due to file size.

Configure your training script carefully. Set learning rates, batch sizes, and training epochs. These hyperparameters dramatically affect results. Start with recommended defaults from the community.

Training and Validation Strategies

Split your data into training and validation sets. Keep 10-20% for validation purposes. Never test on training data. This separation reveals true model performance.
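
A minimal sketch of the split, using only the standard library. The fixed seed makes the split reproducible, so later runs are evaluated on the same held-out examples. The function name and default values are assumptions for illustration.

```python
import random


def train_val_split(examples: list, val_fraction: float = 0.1, seed: int = 42):
    """Shuffle a copy of the data and hold out val_fraction of it for validation."""
    shuffled = examples[:]
    random.Random(seed).shuffle(shuffled)
    n_val = max(1, round(len(shuffled) * val_fraction))
    return shuffled[n_val:], shuffled[:n_val]


examples = [f"example-{i}" for i in range(100)]
train, val = train_val_split(examples, val_fraction=0.2)
print(len(train), len(val))
```

If the same customer conversation was split into several examples, keep all of its pieces on one side of the split. Otherwise the validation set leaks information from training.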

Monitor training metrics closely. Loss should decrease steadily. Validation loss indicates generalization ability. Diverging validation loss signals overfitting problems.
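
One common way to act on a diverging validation loss is early stopping: halt training once validation loss has failed to improve for a set number of evaluations. The sketch below shows the check in isolation. The `patience` value and the loss numbers are hypothetical.

```python
def should_stop(val_losses: list[float], patience: int = 2) -> bool:
    """Return True if validation loss has not improved for `patience` evaluations."""
    if len(val_losses) <= patience:
        return False
    best_before = min(val_losses[:-patience])
    return all(loss >= best_before for loss in val_losses[-patience:])


# Hypothetical loss curve: validation loss bottoms out, then climbs.
history = [1.90, 1.42, 1.21, 1.25, 1.31]
print(should_stop(history))
```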

Fine-tuning Llama 3 for business typically takes hours to days. Smaller datasets finish faster. Larger models require more time. Plan training runs during off-hours.

Evaluating Model Performance

Quantitative metrics provide objective measurements. Perplexity indicates how well the model predicts text. Accuracy measures correct responses. F1 scores balance precision and recall.
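
Two of these metrics are easy to compute by hand. Perplexity is the exponential of the average per-token negative log-likelihood, and F1 is the harmonic mean of precision and recall. The sketch below assumes you already have per-token losses (in natural log units) and counts of true positives, false positives, and false negatives from your own evaluation.

```python
import math


def perplexity(token_nlls: list[float]) -> float:
    """Perplexity is exp of the mean per-token negative log-likelihood (natural log)."""
    return math.exp(sum(token_nlls) / len(token_nlls))


def f1_score(true_pos: int, false_pos: int, false_neg: int) -> float:
    """Harmonic mean of precision and recall; 0.0 when there are no true positives."""
    if true_pos == 0:
        return 0.0
    precision = true_pos / (true_pos + false_pos)
    recall = true_pos / (true_pos + false_neg)
    return 2 * precision * recall / (precision + recall)


print(perplexity([0.0, 0.0]))  # zero loss on every token gives the minimum, 1.0
print(f1_score(true_pos=8, false_pos=2, false_neg=2))
```

Lower perplexity is better. Compare it only between models that share a tokenizer, since the per-token average depends on how text is split.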

Qualitative evaluation matters equally. Run test queries and review outputs. Check factual accuracy against known information. Verify the model maintains your desired tone.

Compare results against the base model. Your fine-tuned version should outperform significantly. Document specific improvements for stakeholders. Real examples demonstrate value clearly.

Advanced Techniques for Superior Results

Basic fine-tuning of Llama 3 delivers good results. Advanced techniques push performance even higher. These methods require more expertise but provide substantial benefits.

Instruction Tuning Best Practices

Instruction formatting teaches the model how to follow directions. Prefix each training example with clear instructions. The AI learns to parse and execute commands accurately.

Vary instruction phrasing to build robustness. Ask the same question multiple ways. The model generalizes better across different user inputs. Rigidity decreases with exposure to variation.

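Handle negative examples with care. In standard supervised fine-tuning the model learns to imitate every response it sees, so incorrect responses must never appear as training targets. To show what the AI should not do, pair each rejected response with a preferred one and train with a preference-based method such as DPO, or include examples where the correct response is a polite refusal. Used this way, negative examples reduce unwanted behaviors effectively.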

Multi-Task Training Approaches

Combine multiple business tasks in one training run. Customer support, content generation, and data analysis can coexist. The model learns to context-switch appropriately.

Task-specific tokens help differentiate purposes. Prefix inputs with task identifiers. The AI recognizes which capability to engage. This organization prevents task confusion.

Balance training data across different tasks. Overrepresentation of one task creates bias. The model becomes better at common tasks. Rare tasks suffer in performance.
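
Task prefixes and balancing can be combined in one preprocessing step. The sketch below downsamples every task to the size of the smallest one and prepends a task identifier to each input. The `[task]` prefix format is a hypothetical convention, not a built-in Llama 3 token, and downsampling is only one option: it discards data, so with small datasets you may prefer to collect more examples for the rare tasks.

```python
import random
from collections import defaultdict


def balance_by_task(examples: list[dict], seed: int = 0) -> list[dict]:
    """Downsample every task to the size of the smallest one and add a task prefix."""
    if not examples:
        return []
    by_task = defaultdict(list)
    for ex in examples:
        by_task[ex["task"]].append(ex)
    target = min(len(group) for group in by_task.values())
    rng = random.Random(seed)
    balanced = []
    for task, group in by_task.items():
        for ex in rng.sample(group, target):
            # "[task]" is a hypothetical prefix format, not a Llama 3 special token.
            balanced.append({**ex, "input": f"[{task}] {ex['input']}"})
    return balanced


# Hypothetical, deliberately imbalanced dataset.
data = (
    [{"task": "support", "input": f"ticket {i}"} for i in range(6)]
    + [{"task": "marketing", "input": f"brief {i}"} for i in range(2)]
)
balanced = balance_by_task(data)
print([ex["input"] for ex in balanced])
```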

Incorporating Domain-Specific Knowledge

Create knowledge bases in structured formats. Product specifications, pricing tables, and technical documentation provide grounding. The AI references these facts during inference.

Retrieval-augmented generation enhances factual accuracy. The model searches knowledge bases before responding. Current information supplements training data. Fine-tuning Llama 3 for business combined with RAG produces outstanding results.
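
The retrieve-then-answer flow can be sketched in a few lines. To stay self-contained, this example swaps in naive word overlap for scoring. Production systems typically use embedding-based vector search instead. The knowledge base entries, function names, and prompt wording are all hypothetical.

```python
def score(query: str, document: str) -> int:
    """Count distinct query words that also occur in the document."""
    return len(set(query.lower().split()) & set(document.lower().split()))


def retrieve(query: str, documents: list[str], k: int = 1) -> list[str]:
    """Return the k documents with the highest word overlap with the query."""
    return sorted(documents, key=lambda d: score(query, d), reverse=True)[:k]


def build_prompt(query: str, documents: list[str]) -> str:
    """Place retrieved passages ahead of the question so the model can use them."""
    context = "\n".join(f"- {d}" for d in retrieve(query, documents))
    return f"Use the context to answer.\nContext:\n{context}\nQuestion: {query}"


# Hypothetical knowledge base entries.
kb = [
    "The Pro plan costs 49 dollars per month.",
    "Returns are accepted within 30 days of delivery.",
]
print(build_prompt("How much does the Pro plan cost?", kb))
```

The resulting prompt is what you would send to the fine-tuned model. Because the facts arrive at query time, a price change only requires updating the knowledge base, not retraining.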

Regular knowledge updates keep information current. Business facts change over time. Fine-tune periodically with new data. The model stays accurate as your business evolves.

Deployment Strategies for Business Applications

Training is only half the journey. Deploying a fine-tuned Llama 3 model requires careful planning. Your infrastructure must handle production workloads reliably.

Self-Hosting vs Cloud Deployment

Self-hosting provides maximum control. You manage infrastructure directly. Data never leaves your premises. Security-conscious industries prefer this approach.

Cloud deployment offers convenience and scalability. Providers handle infrastructure maintenance. You pay for actual usage. Scaling happens automatically with demand.

Hybrid approaches combine both advantages. Train models in the cloud. Deploy inference on-premises. This balance optimizes costs and security.

Optimizing Inference Performance

Quantization reduces model size dramatically. 4-bit and 8-bit versions run faster. Memory requirements drop substantially. Accuracy decreases slightly but remains acceptable.

Batch processing improves throughput. Group similar queries together. Process multiple requests simultaneously. Overall system efficiency increases significantly.

Caching frequent queries saves computation. Store common responses temporarily. Identical questions return instantly. User experience improves while costs decrease.

Integration with Existing Systems

API endpoints expose model functionality cleanly. RESTful interfaces work with any programming language. Your existing applications call the fine-tuned model easily.

Message queues handle asynchronous processing. Users submit requests without waiting. The system processes queries in the background. Notifications alert users when complete.

Monitoring tools track performance metrics. Response times, error rates, and usage patterns need visibility. Problems get detected and resolved quickly.

Real-World Success Stories

Seeing fine-tuning Llama 3 for business in action inspires confidence. These examples demonstrate tangible results across industries.

Healthcare Documentation Automation

A hospital system fine-tuned Llama 3 on medical records. The AI now generates discharge summaries automatically. Doctors save hours on documentation. Accuracy matches human performance in studies.

Medical terminology comprehension improved dramatically. The model understands diagnoses, medications, and procedures perfectly. It suggests appropriate ICD codes. Billing accuracy increased while reducing processing time.

Privacy compliance was maintained throughout. Patient data never left secure servers. The fine-tuned model runs entirely on-premises. HIPAA requirements caused no implementation obstacles.

Legal Contract Analysis

A law firm customized Llama 3 for contract review. The AI identifies problematic clauses automatically. Junior associates spend less time on routine analysis. Partners focus on strategic legal issues.

Legal language presented unique challenges. Contract terminology differs significantly from general text. Fine-tuning Llama 3 for business taught the model this specialized vocabulary. Understanding improved from 60% to 95% accuracy.

Cost savings reached six figures annually. The firm processes more contracts with existing staff. Client turnaround times decreased substantially. Competitive advantage increased in their market.

E-commerce Product Recommendations

An online retailer fine-tuned Llama 3 on purchase history. The AI generates personalized product descriptions. Conversion rates improved by 23%. Customer engagement metrics rose across the board.

Brand voice consistency was crucial. The retailer has a playful, friendly tone. Generic AI couldn’t match this style. Fine-tuning embedded the brand personality deeply.

Multilingual support expanded market reach. The same fine-tuned model serves multiple languages. Translation quality exceeds generic services. International sales grew significantly.

Common Challenges and Solutions

Every Llama 3 fine-tuning project faces obstacles. Anticipating problems helps you prepare effective solutions. Learn from others’ experiences.

Overfitting and Generalization Issues

Small datasets cause severe overfitting. The model memorizes training examples exactly. Performance on new queries suffers badly. Collect more diverse training data to combat this.

Data augmentation expands limited datasets. Paraphrase existing examples carefully. Introduce controlled variations systematically. The model learns patterns instead of memorizing.

Regularization techniques prevent excessive memorization. Dropout and weight decay help. These methods slightly reduce training accuracy. Generalization improves substantially in return.

Computational Resource Constraints

Limited GPU memory restricts model sizes. The 70B parameter model won’t fit on consumer cards. Choose smaller variants or use quantization. QLoRA makes large models accessible.

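Training time and memory limits affect project timelines. Large datasets require days of computation. Gradient accumulation lets you simulate a large batch by summing gradients over several smaller batches that fit in memory. It does not shorten training, but it lets training proceed on limited hardware.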
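
Gradient accumulation works because the average gradient over a large batch equals the average of the gradients over its equally sized pieces. The toy sketch below checks this for a one-parameter linear model with mean squared error. The model, data, and function names are illustrative assumptions, and real training frameworks handle the accumulation for you through a configuration setting.

```python
def mean_gradient(w: float, batch: list[tuple[float, float]]) -> float:
    """Gradient of mean squared error for the model y = w * x over one batch."""
    return sum(2 * (w * x - y) * x for x, y in batch) / len(batch)


def accumulated_gradient(w: float, data: list[tuple[float, float]], micro_size: int) -> float:
    """Sum micro-batch gradients, each scaled by 1 / number of micro-batches."""
    micro_batches = [data[i:i + micro_size] for i in range(0, len(data), micro_size)]
    total = 0.0
    for micro in micro_batches:
        total += mean_gradient(w, micro) / len(micro_batches)
    return total


# Toy data; micro_size must divide len(data) evenly for the two results to match.
data = [(1.0, 2.0), (2.0, 4.5), (3.0, 5.5), (4.0, 8.5)]
full = mean_gradient(0.5, data)
accumulated = accumulated_gradient(0.5, data, micro_size=2)
print(full, accumulated)
```

Both values agree, so two micro-batches of two examples produce the same update as one batch of four while holding only half as many examples in memory at once.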

Cloud resources solve most hardware limitations. Rent high-end GPUs hourly. Complete training jobs quickly. Cost-benefit analysis usually favors this approach.

Maintaining Model Quality Over Time

Business contexts change constantly. Product lines evolve and policies update. Your fine-tuned model gradually becomes outdated. Regular retraining maintains accuracy.

Monitor model performance continuously. Track error rates and user feedback. Declining metrics signal needed updates. Automated monitoring prevents quality drift.

Versioning strategies manage model updates. Keep previous versions available temporarily. Roll back if new versions underperform. Gradual transitions minimize disruption.

Cost-Benefit Analysis

Understanding the complete economics guides smart decisions. Fine-tuning Llama 3 for business requires investment. The returns often justify costs many times over.

Initial Development Costs

Data preparation consumes significant labor hours. Expect weeks of work for quality datasets. Personnel costs vary by team size. Budget $10,000 to $50,000 for enterprise projects.

Training infrastructure costs depend on approach. Cloud training runs cost $500 to $5,000. Hardware purchases require $5,000 to $50,000. Ongoing expenses follow initial investment.

External expertise might be necessary. Consultants charge $150 to $300 hourly. Full project services run $25,000 to $100,000. In-house development saves money long-term.

Operational Expenses

Inference costs accumulate based on usage. Self-hosted solutions cost electricity and maintenance. Cloud hosting charges per API call. Calculate based on expected query volume.

Maintenance requires ongoing technical attention. Monitoring, updates, and troubleshooting need resources. Budget 20% of development costs annually. Neglecting maintenance degrades performance.

Training updates keep models current. Retrain quarterly or when business changes. Each training cycle incurs additional costs. Plan for continuous improvement expenses.

Return on Investment Metrics

Labor savings often provide the largest returns. Automated tasks free employee time. Calculate hours saved monthly. Multiply by average hourly compensation.
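
The calculation above, plus a simple payback estimate, can be sketched as follows. Every figure here is hypothetical and exists only to show the arithmetic. Substitute your own hours, rates, and costs.

```python
def monthly_labor_savings(hours_saved: float, hourly_cost: float) -> float:
    """Hours saved per month multiplied by average hourly compensation."""
    return hours_saved * hourly_cost


def payback_months(upfront_cost: float, monthly_savings: float, monthly_running_cost: float) -> float:
    """Months until cumulative net savings cover the upfront investment."""
    net = monthly_savings - monthly_running_cost
    if net <= 0:
        return float("inf")  # the project never pays for itself
    return upfront_cost / net


# Hypothetical figures for illustration only.
savings = monthly_labor_savings(hours_saved=200, hourly_cost=40.0)
months = payback_months(upfront_cost=30_000, monthly_savings=savings, monthly_running_cost=2_000)
print(savings, months)
```

Including running costs matters: a project that saves labor but costs more to host and maintain than it saves never reaches payback.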

Quality improvements generate revenue increases. Better customer service boosts satisfaction. Personalization drives higher conversion rates. These gains compound over time.

Competitive advantages create intangible value. Being first in your industry matters. Fine-tuning Llama 3 for business differentiates your company. Market positioning improves substantially.

Security and Privacy Considerations

Responsible AI deployment protects your business and customers. Fine-tuning Llama 3 for business creates new security responsibilities. Address these concerns proactively.

Data Privacy During Training

Training data might contain sensitive information. Customer details, trade secrets, and proprietary methods need protection. Anonymization and encryption become critical.

Data governance policies must extend to AI projects. Define what information can be used. Document how data gets processed. Compliance teams should review procedures.

Third-party training services pose risks. Your data enters external systems. Confidentiality agreements provide limited protection. Self-hosting eliminates this exposure entirely.

Model Security and Access Control

Fine-tuned models represent valuable intellectual property. Your customized Llama 3 embodies business knowledge. Unauthorized access could benefit competitors. Implement strong access controls.

API authentication prevents unauthorized usage. Require secure tokens or credentials. Rate limiting stops abuse. Monitor access patterns for suspicious activity.
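
Rate limiting is often implemented with a token bucket: each client has a bucket that refills at a steady rate, and every request spends one token. The sketch below is a minimal single-process version with an injectable clock, so the behavior can be demonstrated without waiting. The class name and parameters are assumptions. A production deployment would keep one bucket per API key, typically in shared storage.

```python
import time


class TokenBucket:
    """Allow up to `capacity` requests in a burst, refilled at `rate` tokens per second."""

    def __init__(self, capacity: int, rate: float, clock=time.monotonic):
        self.capacity = capacity
        self.rate = rate
        self.clock = clock
        self.tokens = float(capacity)
        self.last = clock()

    def allow(self) -> bool:
        """Refill based on elapsed time, then spend one token if available."""
        now = self.clock()
        self.tokens = min(self.capacity, self.tokens + (now - self.last) * self.rate)
        self.last = now
        if self.tokens >= 1:
            self.tokens -= 1
            return True
        return False


# A fake clock makes the behavior easy to follow without real waiting.
fake_time = [0.0]
bucket = TokenBucket(capacity=2, rate=1.0, clock=lambda: fake_time[0])
results = [bucket.allow(), bucket.allow(), bucket.allow()]  # third call is rejected
fake_time[0] = 1.0  # one second later, one token has been refilled
results.append(bucket.allow())
print(results)
```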

Model extraction attacks steal your customizations. Attackers query models to recreate them. Input/output monitoring detects these attempts. Defensive measures protect your investment.

Compliance with Industry Regulations

GDPR affects European data processing. Right to deletion applies to training data. Document how you can remove specific information. Model retraining might be necessary.

HIPAA governs healthcare information strictly. Fine-tuned medical models need special handling. Business associate agreements cover third-party services. Compliance audit trails prove adherence.

Financial regulations control data usage. PCI DSS affects payment information. SOC 2 compliance demonstrates security controls. Fine-tuning Llama 3 for business in regulated industries requires expertise.

Future-Proofing Your Investment

Technology evolves rapidly. Smart planning protects your investment in fine-tuning Llama 3. Build flexibility into your implementation.

Staying Current with Model Updates

Meta continues improving Llama models. New versions offer better performance. Plan migration paths to future releases. Don’t get locked into outdated technology.

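Previous work carries over. Your curated dataset, evaluation suite, and training scripts can be reused on an updated base model, so later fine-tuning runs go faster. Adapters trained for one base model generally cannot be loaded onto a different one, so plan to retrain them. Your data remains valuable. Results tend to improve with each generation.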

Community resources accelerate adoption. Forums, tutorials, and tools emerge constantly. Engage with the ecosystem actively. Shared knowledge benefits everyone.

Building Scalable Infrastructure

Start with current needs but design for growth. Your business will expand over time. Infrastructure should scale without complete redesign. Cloud-native architectures provide this flexibility.

Containerization simplifies deployment. Docker packages models with dependencies. Kubernetes orchestrates scaling automatically. Modern DevOps practices apply to AI.

Microservices architecture isolates components. Different models serve different purposes. Updates affect only relevant services. System resilience increases substantially.

Developing Internal Expertise

Knowledge transfer prevents vendor lock-in. Train internal team members thoroughly. Documentation captures implementation details. Your organization becomes self-sufficient.

Experimentation culture drives innovation. Encourage team members to try new techniques. Small projects build practical experience. Skills develop through hands-on work.

Cross-functional collaboration spreads AI literacy. Technical and business teams learn together. Shared understanding improves project outcomes. Fine-tuning Llama 3 for business succeeds through teamwork.

Frequently Asked Questions

How long does fine-tuning Llama 3 for business typically take?

Small projects complete in days. Data preparation usually takes the most time, from hours for a small pilot to weeks for a production-quality dataset. Training runs take several hours. Testing and refinement add additional time. Large enterprise implementations span weeks to months. The scope determines exact duration.

Can I fine-tune Llama 3 without coding experience?

Basic technical skills are required. You don’t need to be an expert programmer. Following tutorials and documentation works. Many platforms simplify the process. Hiring technical help remains an option. No-code solutions are emerging gradually.

What size training dataset do I need?

Quality matters more than quantity. Start with 500-1,000 examples minimum. More data generally improves results. Diminishing returns appear after thousands. Your specific use case determines needs. Testing reveals optimal dataset size.

Is fine-tuning Llama 3 for business cost-effective for small companies?

Absolutely, especially with QLoRA techniques. Cloud resources make it affordable. Initial costs range from hundreds to thousands. ROI appears quickly with automation. Start small and scale gradually. The technology isn’t just for enterprises.

How often should I retrain my model?

Retrain when business changes occur. New products require model updates. Policy changes need reflection. Quarterly retraining works for most businesses. Stable industries retrain less frequently. Monitor performance metrics for guidance.

Can fine-tuned models handle multiple languages?

Yes, Llama 3 has multilingual capabilities, although its pre-training data is predominantly English. Include examples in each target language. Performance varies by language. More training data improves results. Cross-lingual transfer means skills learned in one language partly carry over to others, which helps efficiency.

What happens if my fine-tuning results are poor?

Diagnose the problem systematically. Check data quality first. Verify training hyperparameters next. Try different fine-tuning approaches. Collect more diverse examples. Poor results usually indicate data issues. Iteration improves outcomes.

How do I prevent my model from forgetting general knowledge?

Catastrophic forgetting affects aggressive fine-tuning. Mix general examples with specialized data. Use smaller learning rates. LoRA approaches preserve base knowledge. The balance requires experimentation. Most implementations retain general capabilities.

Conclusion

Fine-tuning Llama 3 for business transforms generic AI into a specialized asset. Your company gains an expert that understands your industry deeply. The technology democratizes advanced AI capabilities. Small businesses compete with large enterprises effectively.

Success requires thoughtful preparation and execution. Quality data forms the foundation of everything. Technical implementation follows established best practices. Ongoing maintenance keeps models performing well. The investment pays dividends across your organization.

Start with clear objectives and realistic expectations. Choose the right fine-tuning approach for your resources. Build incrementally rather than attempting everything immediately. Learn from each iteration. Your expertise compounds over time.

The future belongs to companies that customize AI thoughtfully. Generic solutions can’t compete with specialized intelligence. Fine-tuning Llama 3 for business provides sustainable competitive advantages. Your AI becomes as unique as your business itself.

Take action now rather than waiting for perfection. Begin with a small pilot project. Prove value with concrete results. Scale based on demonstrated success. The journey of fine-tuning Llama 3 for business starts with a single step.

Your business has unique knowledge worth preserving. Fine-tuning captures institutional expertise in AI form. New employees access this knowledge instantly. The investment protects against knowledge loss. Your company becomes more resilient and capable.

The technology continues evolving rapidly. Models improve every few months. Techniques become more efficient constantly. Starting now builds expertise for future advances. Fine-tuning Llama 3 for business positions your company at the forefront. The competitive advantages multiply over time.